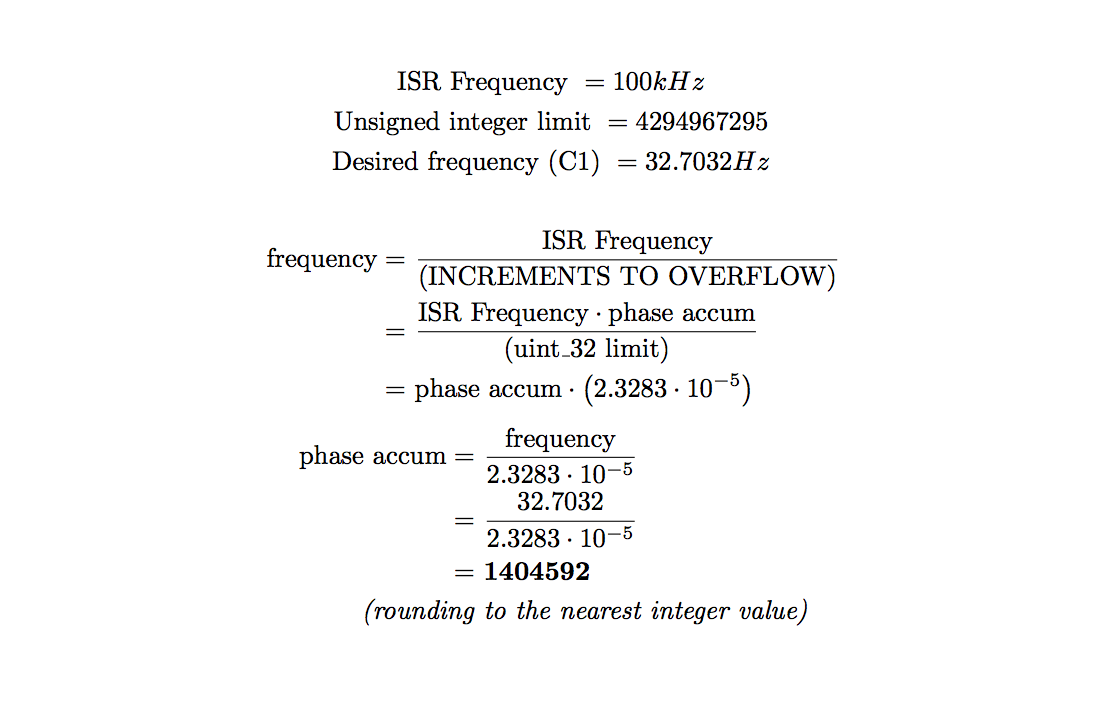

In our software, there are dozens of variables that refer to different synthesis

parameters. Two char arrays, step_notes and steps_on, represent the note and

activity (rest or note) of each step in the 16-step sequence. In step_notes, a

note is represented by an index, 0-48, into a table of phase accumulators,

calculated using freq_accum_calcs.rb, which can be found in the

Commented Program Files section.
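
As a rough illustration of how such a table of note phase accumulators could be computed (this is not the contents of freq_accum_calcs.rb), the sketch below assumes a 32-bit DDS accumulator, the 100 kHz sample rate stated later in this report, and equal-tempered tuning. The names `phase_increment` and `fill_note_table`, and the choice of base frequency, are hypothetical.

```c
#include <stdint.h>

#define SAMPLE_RATE_HZ 100000u  /* DDS ISR rate stated in this report */
#define NUM_NOTES      49       /* note indices 0-48 */

/* Phase increment for a 32-bit accumulator: f * 2^32 / Fs, rounded.
 * The 32-bit accumulator width is an assumption. */
static uint32_t phase_increment(double freq_hz)
{
    return (uint32_t)(freq_hz * 4294967296.0 / SAMPLE_RATE_HZ + 0.5);
}

/* Fill a table of increments, one per semitone above base_hz.
 * Equal temperament is assumed; each step multiplies by 2^(1/12). */
static void fill_note_table(uint32_t table[NUM_NOTES], double base_hz)
{
    const double semitone = 1.0594630943592953; /* 2^(1/12) */
    double f = base_hz;
    for (int i = 0; i < NUM_NOTES; i++) {
        table[i] = phase_increment(f);
        f *= semitone;
    }
}
```

With a table like this, a value in step_notes would simply select which increment is added to the DDS phase accumulator on every sample.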

The old_step_select, step_select, note_select, old_step, and curr_step variables

are used for sequence editing and playback, both to supply the TFT-update thread

with information it needs to draw the sequence state, and to facilitate reading

and writing data to/from the two sequence arrays mentioned above. The seq_active

variable is used in the DDS ISR to determine whether or not to add the sequence

advancing phase accumulator to the step_accum counter. The phase accumulators for

each tempo were calculated using tempo_accum_calcs.rb, which can be found in the

Commented Program Files section.
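
One way the sequence-advancing accumulator can work is sketched below: a 32-bit counter is incremented every sample, and each unsigned wrap-around marks one sequencer step. The struct layout, the `sequencer_tick` name, and the overflow-detection approach are assumptions for illustration; only `step_accum`, `curr_step`, and `seq_active` are names taken from the report.

```c
#include <stdint.h>

#define NUM_STEPS 16u

typedef struct {
    uint32_t step_accum;  /* wraps around once per sequencer step */
    uint32_t tempo_inc;   /* per-sample increment picked from a tempo table */
    uint8_t  curr_step;   /* 0..15 */
    uint8_t  seq_active;  /* nonzero while the sequence is playing */
} sequencer_t;

/* Call once per sample. Returns 1 when the step changed, else 0. */
static int sequencer_tick(sequencer_t *s)
{
    if (!s->seq_active) {
        return 0;                         /* paused: do not advance */
    }
    uint32_t before = s->step_accum;
    s->step_accum += s->tempo_inc;
    if (s->step_accum < before) {         /* unsigned wrap: one step elapsed */
        s->curr_step = (uint8_t)((s->curr_step + 1u) % NUM_STEPS);
        return 1;
    }
    return 0;
}
```

For example, an increment of 343598 (about 8 × 2^32 / 100000) would advance roughly eight steps per second at a 100 kHz tick rate.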

There are many flags, accumulators, and values that go into making the amplitude

and shape blend envelopes work. The amp_env, shape_env, and shape_amt variables

hold the values for the modal envelopes, and the attenuation amount for the shape

envelope respectively, all set directly from the ADC reading thread. The rising

variables are used as flags in the DDS ISR to determine whether to add or subtract

the accumulator. The rise_acc and fall_acc variables for both envelopes are set

using envelope rise/fall phase accumulators calculated in env_bound_accum_calcs.rb,

which can be found in the

Commented Program Files section. These accumulators are

blended across the turn of the modal envelope knobs, allowing fades through

different envelope lengths and shapes.
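
The blending of accumulators across the knob's travel can be pictured as linear interpolation between neighbouring keyframes. The sketch below assumes a 10-bit knob reading (0-1023), five equally spaced keyframes, and a 64-bit intermediate product; none of these details are confirmed by the report, and `blend_keyframes` is a hypothetical name.

```c
#include <stdint.h>

#define NUM_KEYFRAMES 5u  /* assumed: four equal segments across the knob */

/* Interpolate a rise or fall accumulator from a 10-bit knob value.
 * The top 2 bits pick the segment, the low 8 bits are the blend fraction,
 * and the division by 256 is done with a right shift. */
static uint32_t blend_keyframes(const uint32_t key[NUM_KEYFRAMES], uint32_t knob)
{
    uint32_t seg  = (knob >> 8) & 3u;   /* segment 0..3 */
    uint32_t frac = knob & 0xFFu;       /* position within the segment */
    uint64_t mixed = (uint64_t)key[seg] * (256u - frac)
                   + (uint64_t)key[seg + 1u] * frac;
    return (uint32_t)(mixed >> 8);
}
```

The same function could be called twice per envelope, once with a table of rise accumulators and once with a table of fall accumulators, so that a single knob moves both.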

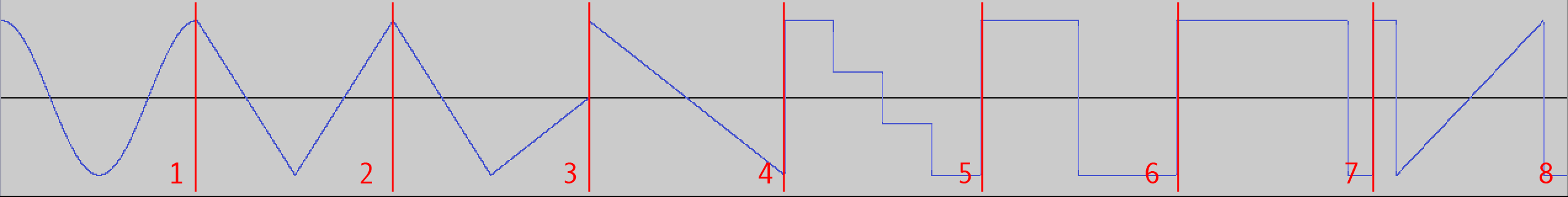

Both envelopes are AD envelopes. At the left side of the knob, the envelope has

a short attack and long decay. As the knob moves towards the center of its turn,

the decay becomes longer until a certain keyframe, at which point the attack

starts lengthening, making the envelope's shape more triangular. After this

keyframe, the attack continues to become longer as the decay shortens, eventually

resulting in shapes inverse to those on the left side of the knob, in which the

attack is long but the decay is very short. The specific time values of each

envelope keyframe are listed in comments in env_bound_accum_calcs.rb.

Our DDS ISR (Direct Digital Synthesis Interrupt Service Routine) accomplishes

all the tasks it needs to very expediently. This is necessary because of the speed

at which it is run: 100 kHz. In the 400 cycles available per sample, with cycles to spare for other

threads like the button, multiplexer, or TFT threads, the procedures executed

are as follows:

- Scaling the shape envelope value by its attenuation, then adding it to the wave blend offset and clipping the result if it is too high
- Getting the two waves within the current wavetable to use in the WAVE_BLEND macro, using bitwise operations on the overall blend value
- Using the WAVE_BLEND macro to blend between the two waves, using the subtable blend value, the above-calculated table offsets, and the DDS phase accumulator
- Scaling the calculated sample by the value of the amplitude envelope, then writing this sample to the DAC
- Updating the DDS phase accumulator and sequence position
- Updating the envelopes' values, taking into account each envelope's rising flags and rise/fall phase accumulators, clipping results if necessary
- Setting step update flag for TFT thread if the sequencer step changed, so that the UI can reflect this change
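
The first four steps above can be sketched with integer-only arithmetic as follows. This is an illustration under stated assumptions, not the actual ISR or the real WAVE_BLEND macro: the table is assumed to hold unsigned 12-bit samples, the blend value is assumed to carry the wave index in its upper bits and a 9-bit crossfade fraction in its lower bits, and the amplitude envelope is assumed to span 0 to 2^16. All function names are hypothetical.

```c
#include <stdint.h>

#define WAVE_LEN   512u   /* samples per wave */
#define WAVES      8u     /* waves per wavetable */
#define FRAC_BITS  9u     /* assumed width of the crossfade fraction */
#define ENV_BITS   16u    /* assumed: envelope full scale is 1 << 16 */
#define BLEND_MAX  (((WAVES - 1u) << FRAC_BITS) - 1u)

/* Add the attenuated shape envelope to the blend offset and clip. */
static uint32_t clip_blend(uint32_t blend_offset, uint32_t shape_env,
                           uint32_t shape_shift)
{
    uint32_t blend = blend_offset + (shape_env >> shape_shift);
    return blend > BLEND_MAX ? BLEND_MAX : blend;
}

/* Crossfade between two adjacent waves at the current phase. */
static uint32_t wave_blend(const uint16_t *table, uint32_t phase,
                           uint32_t blend)
{
    uint32_t idx  = phase >> 23;                      /* top 9 bits: 0..511 */
    uint32_t wave = blend >> FRAC_BITS;               /* lower wave of the pair */
    uint32_t frac = blend & ((1u << FRAC_BITS) - 1u); /* crossfade amount */
    uint32_t a = table[wave * WAVE_LEN + idx];
    uint32_t b = table[(wave + 1u) * WAVE_LEN + idx];
    return (a * ((1u << FRAC_BITS) - frac) + b * frac) >> FRAC_BITS;
}

/* Scale a 12-bit sample by the amplitude envelope using a right shift. */
static uint32_t scale_by_env(uint32_t sample, uint32_t amp_env)
{
    return (sample * amp_env) >> ENV_BITS;
}
```

Because every divisor is a power of two, each scaling step reduces to a multiply followed by a shift, which is what keeps the per-sample cost low.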

Using integer operations instead of fix16 operations, as well as choosing scaling

values to allow division using variable right shift, allowed the DDS ISR to function

properly in the small window of time it was allowed.

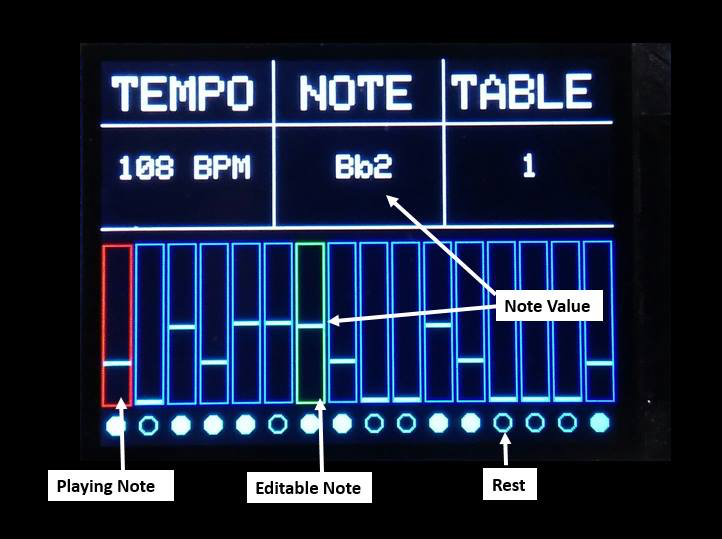

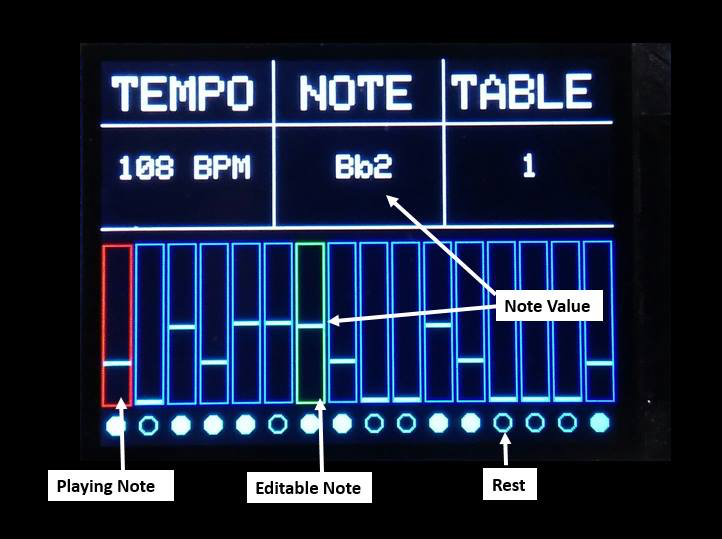

The initial sequence state, as well as the general UI format, is drawn to the TFT

using the helper function initTFT(), which is called from main. After this point,

once threads have been scheduled and the program is fully started, the TFT is

updated 20 times per second, using a dedicated TFT update thread.

In the TFT update thread, flags set in the ISR and the other threads allow for

updating the sequence state only when necessary. Only steps which have changed

will be redrawn, and the red/green selector boxes for selected and active

sequence steps will only be moved if their positions have been changed. Copies

of all flags which are set in the ISR, such as the old and current step, are

made in the TFT update thread to ensure that their values don't change in between

dependent drawing steps. The tempo, note-to-write, and table index are all

rewritten each time the TFT update thread's loop executes.
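
The idea of copying ISR-owned values before drawing can be sketched as below. The `step_changed` flag, the snapshot struct, and both function names are hypothetical; `old_step` and `curr_step` are the names used in this report. On real hardware the copy would likely need interrupts briefly disabled to be fully atomic, which this host-side sketch does not attempt.

```c
#include <stdint.h>

/* Shared between the ISR and the TFT thread, hence volatile. */
static volatile uint8_t old_step;
static volatile uint8_t curr_step;
static volatile uint8_t step_changed;   /* hypothetical flag name */

typedef struct {
    uint8_t old_step;
    uint8_t curr_step;
    uint8_t redraw;     /* nonzero if the step boxes need to move */
} step_snapshot_t;

/* Producer side: what the ISR might do when the sequencer advances. */
static void isr_mark_step(uint8_t next_step)
{
    old_step = curr_step;
    curr_step = next_step;
    step_changed = 1;
}

/* Consumer side: take one consistent copy, then draw only from the copy. */
static step_snapshot_t take_step_snapshot(void)
{
    step_snapshot_t s;
    s.redraw = step_changed;
    step_changed = 0;           /* acknowledge the flag */
    s.old_step = old_step;
    s.curr_step = curr_step;
    return s;
}
```

The drawing code would then erase the box at `s.old_step` and draw it at `s.curr_step` only when `s.redraw` is set, so a step change arriving mid-draw cannot leave the two operations out of sync.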

User Interface on the TFT Display

The multiplexing of input through the single on-board ADC was done in a dedicated

thread. In this thread, all 7 potentiometer values were read into their respective

variables, with any data manipulations needed done as well (such as converting

raw envelope positions into rise/fall accumulators). The 3 control bits for the

multiplexer had to be switched before each read, after which a couple of short waits

(implemented using an empty while loop) were required before reading the ADC value.

Overall, the procedure for each of the 7 reads was largely identical and was as

follows:

- Set BIT_7, BIT_8, and BIT_9 on IOPORT_B to represent the index of the multiplexer input in binary, from 0-7
- Wait for approximately 80 cycles
- Acquire the ADC (would not have been needed if auto-capture were on) using the AcquireADC10() function
- Wait for approximately 40 more cycles
- Set old value variables if needed, then read the new value using ReadADC10(0) and process the read value quickly
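
The read procedure above can be sketched in a hardware-independent form as follows. The hardware accesses are hidden behind function pointers so the logic can be compiled off-target; on the PIC32 they would wrap the port write, AcquireADC10(), and ReadADC10(0). The struct, the helper names, the assumption that BIT_7 is the least significant select bit, and the loop counts are all hypothetical. The channel map skips input 5, which is grounded in our wiring.

```c
#include <stdint.h>

#define NUM_POTS   7u
#define MUX_SHIFT  7u                  /* assumed: BIT_7 is the lowest select bit */
#define MUX_MASK   (7u << MUX_SHIFT)   /* BIT_7 | BIT_8 | BIT_9 */

/* Multiplexer channel used by each knob; channel 5 is unused (grounded). */
static const uint8_t pot_channel[NUM_POTS] = {0, 1, 2, 3, 4, 6, 7};

/* Port bits that select a given multiplexer channel (0-7). */
static uint32_t mux_select_bits(uint32_t channel)
{
    return (channel & 7u) << MUX_SHIFT;
}

/* Busy-wait with an empty loop; volatile keeps it from being optimized out. */
static void short_wait(volatile uint32_t loops)
{
    while (loops--) { }
}

/* Hardware hooks, to be supplied by the target-specific code. */
typedef struct {
    void     (*write_select)(uint32_t mask, uint32_t bits);
    void     (*acquire)(void);
    uint32_t (*read)(void);
} adc_hw_t;

/* Read all seven knobs in turn. Loop counts are uncalibrated placeholders. */
void read_pots(const adc_hw_t *hw, uint32_t out[NUM_POTS])
{
    for (uint32_t i = 0; i < NUM_POTS; i++) {
        hw->write_select(MUX_MASK, mux_select_bits(pot_channel[i]));
        short_wait(80u);        /* let the multiplexer output settle */
        hw->acquire();          /* start sampling */
        short_wait(40u);        /* give the sample time to complete */
        out[i] = hw->read();
    }
}
```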

The four buttons were processed using a thread which was run at

approximately 10Hz. Because of the slow rate of button value capture,

we deemed it unnecessary to debounce the buttons. The button thread was,

as a result, rather simple. For each button, the previous value of the

button was stored. If the newly-read value of the button from its digital

input pin was different from the previous value, and this new value signified

that the button was being pressed, the data the button controlled would

be modified. When writing a new note value, or changing a step's rest

state, the button thread would set some flags for the TFT thread to notify

it about which step had been changed, allowing the TFT thread to do less

work.
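
The press detection described above amounts to a rising-edge check on each poll. A minimal sketch, with a hypothetical `button_t` type and function name:

```c
#include <stdint.h>

typedef struct {
    uint8_t prev;   /* value seen on the previous poll (1 = pressed) */
} button_t;

/* Returns 1 only on the poll where the button goes from released to
 * pressed; holding the button down does not retrigger. */
static int button_pressed_edge(button_t *b, uint8_t now_pressed)
{
    int edge = (now_pressed != b->prev) && now_pressed;
    b->prev = now_pressed;
    return edge;
}
```

Polled at roughly 10 Hz, contact bounce lasting a few milliseconds falls between polls, which is why no separate debounce step appears here.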

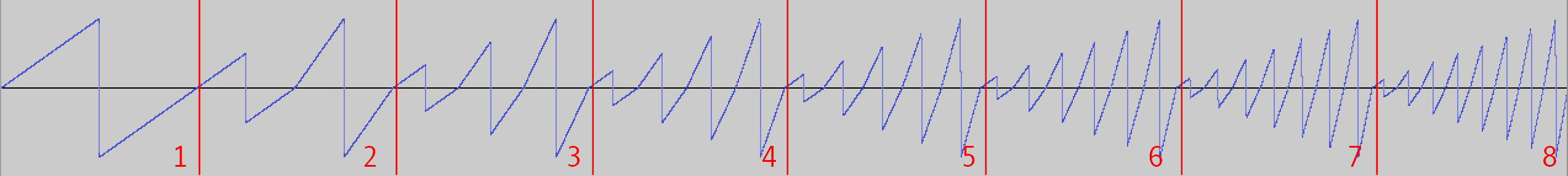

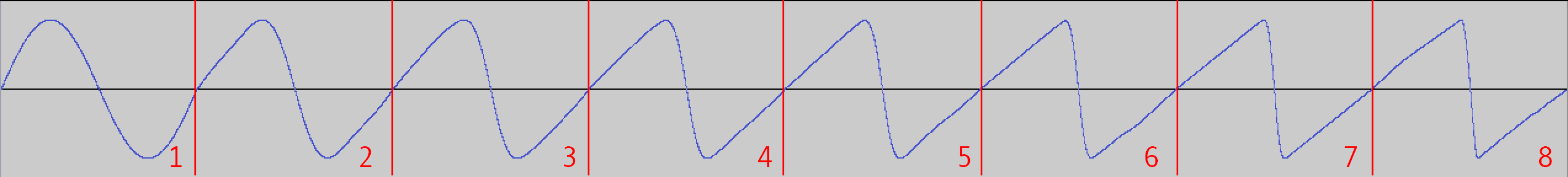

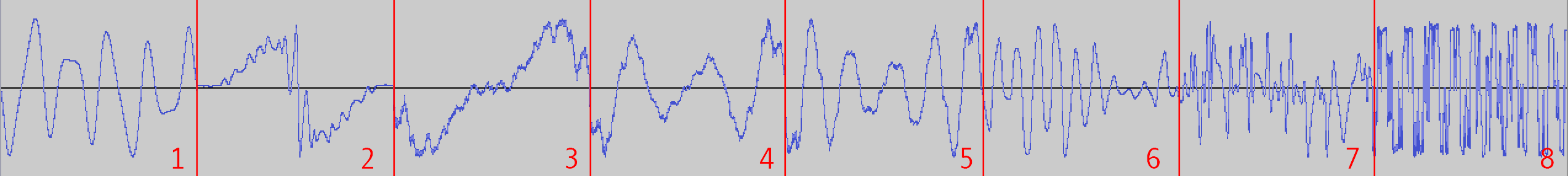

All of our wavetables were initially custom-designed in the VST plugin

Serum

by Xfer Records, an advanced wavetable synthesizer with a built-in

wavetable creation tool. After these wavetables were created, the .wav files

that represented them were converted to 32-bit signed .raw files using

the free audio software, Audacity. The wavetables in Serum initially

were represented as 32-bit float, with 512 samples per wave. Using

8 waves per table, as we did, this meant that each wavetable had 4096

32-bit samples, resulting in around 16KB per table.

After being converted into .raw format, each wavetable was converted

into a C header file using a custom command-line script, convert.cpp,

which can be found in the

Commented Program Files section. It allows the user to specify the

name of the array in which the samples will be placed. We used these

array names later in our int *tables array, which contained the four

wavetables. The header files containing the wavetables were rather large,

and as such have not been included in this report.
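
To give a sense of what such a conversion produces, the sketch below formats an array of 32-bit samples as a C array definition with a caller-chosen name. It is written in C for consistency with the rest of the firmware and is not the convert.cpp script itself; the function name, the output layout, and the buffer-based interface are assumptions.

```c
#include <inttypes.h>
#include <stddef.h>
#include <stdint.h>
#include <stdio.h>

/* Write "const int NAME[N] = { ... };" into buf.
 * Returns the number of characters written, or -1 if buf is too small. */
static int format_header(char *buf, size_t cap, const char *name,
                         const int32_t *samples, size_t n)
{
    size_t used;
    int w = snprintf(buf, cap, "const int %s[%zu] = {\n", name, n);
    if (w < 0 || (size_t)w >= cap) {
        return -1;
    }
    used = (size_t)w;
    for (size_t i = 0; i < n; i++) {
        /* One sample per line; a trailing comma is valid C. */
        w = snprintf(buf + used, cap - used, "%" PRId32 ",\n", samples[i]);
        if (w < 0 || (size_t)w >= cap - used) {
            return -1;
        }
        used += (size_t)w;
    }
    w = snprintf(buf + used, cap - used, "};\n");
    if (w < 0 || (size_t)w >= cap - used) {
        return -1;
    }
    return (int)(used + (size_t)w);
}
```

A full converter would read the 4096 samples from the .raw file first and write the resulting text to a .h file; those steps are omitted here.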

We decided to use unsigned integers to hold all of our synthesis parameters,

even ones that would have to be scaled by others. We made this choice mainly

to achieve speed in the DDS ISR, for better audio fidelity via a higher sample

rate and more time for threads to perform their respective tasks. The barrel

shift allowed by the PIC32's ALU made division before scaling very fast, which

came in handy for crossfading between waveshapes, fading between envelope

keyframes, scaling shape modulation, and scaling the DDS sample by the

amplitude envelope.

The DAC and ADC were configured using code modified from examples on the

course website. We put most of our configuration code in two functions,

initADC and initDAC, which were both called from the main procedure of our

program. We also used some macros for the bitwise ORs of configuration flags.

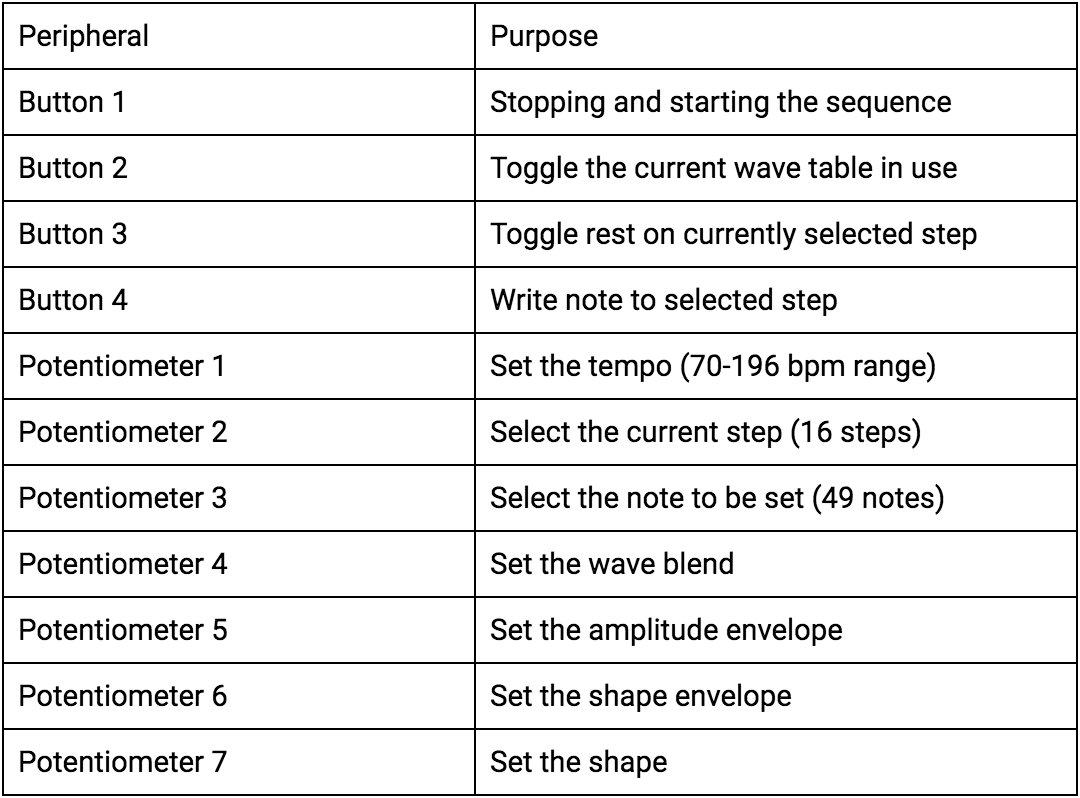

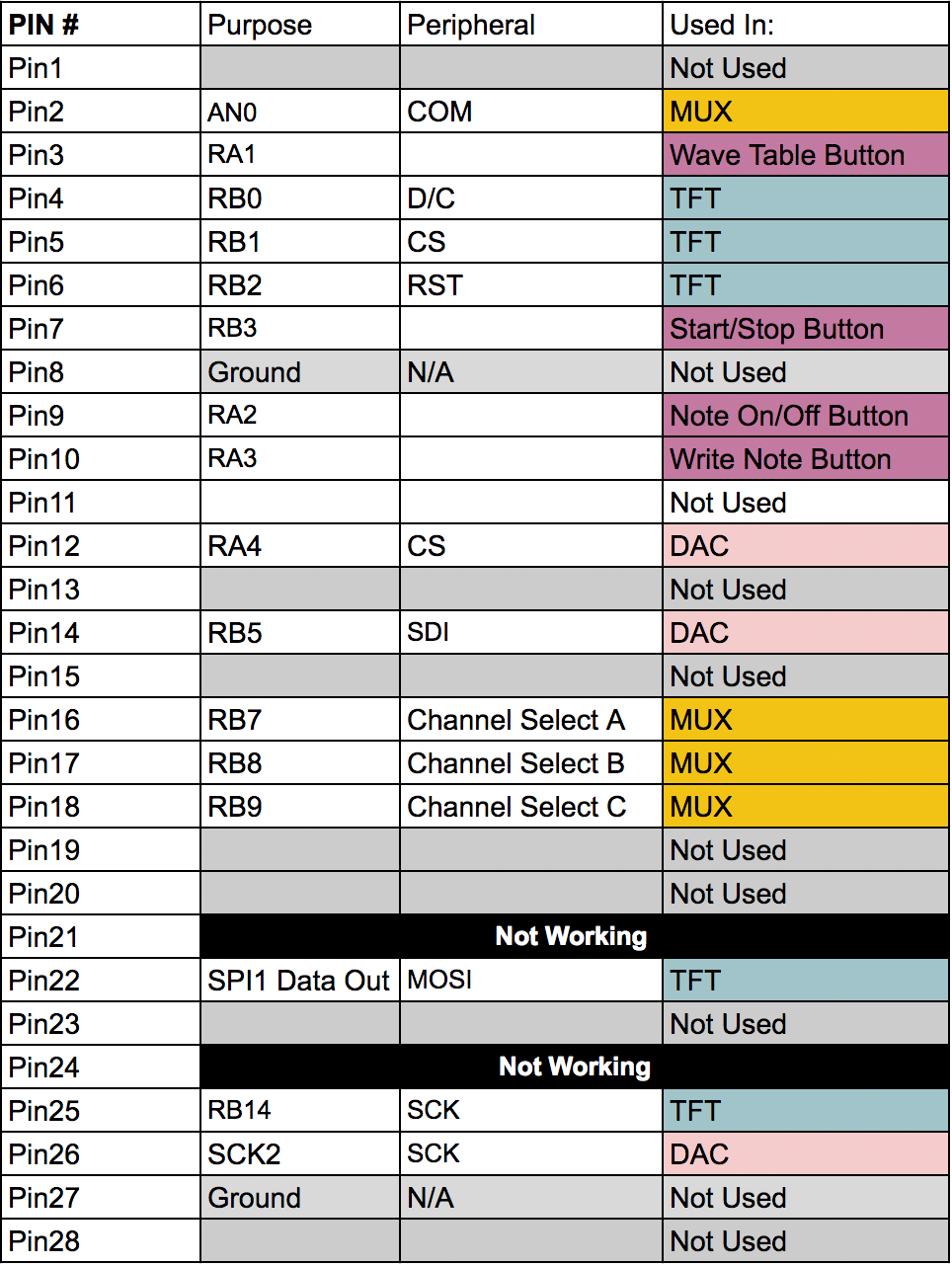

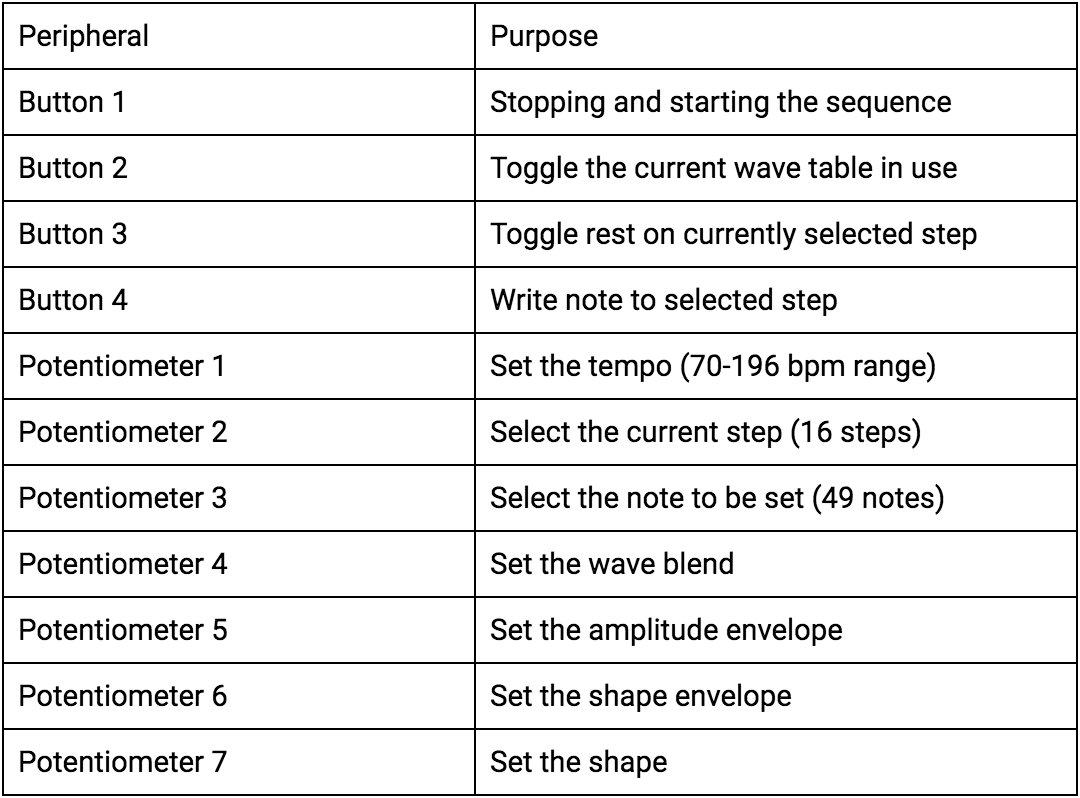

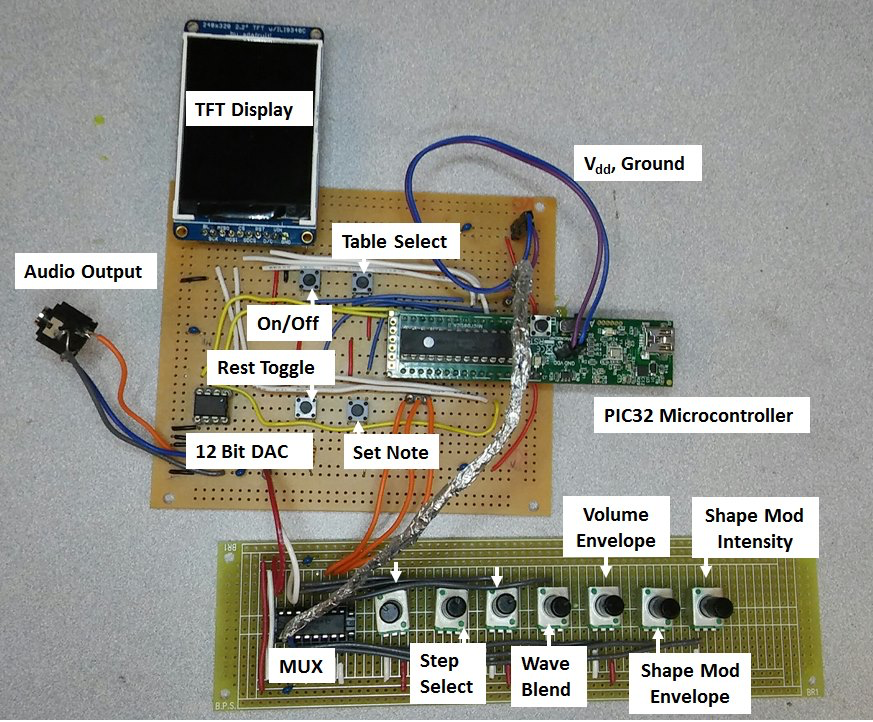

The hardware for the synthesizer is composed of a PIC32 microcontroller,

7 potentiometers, 4 buttons, an 8:1 analog multiplexer, a 12-bit DAC,

and a TFT display. Using user input from the various potentiometers and

buttons, the PIC then generates different sequences and waveforms based

upon the current inputs.

A full hardware diagram can be found in the

Schematics

section.

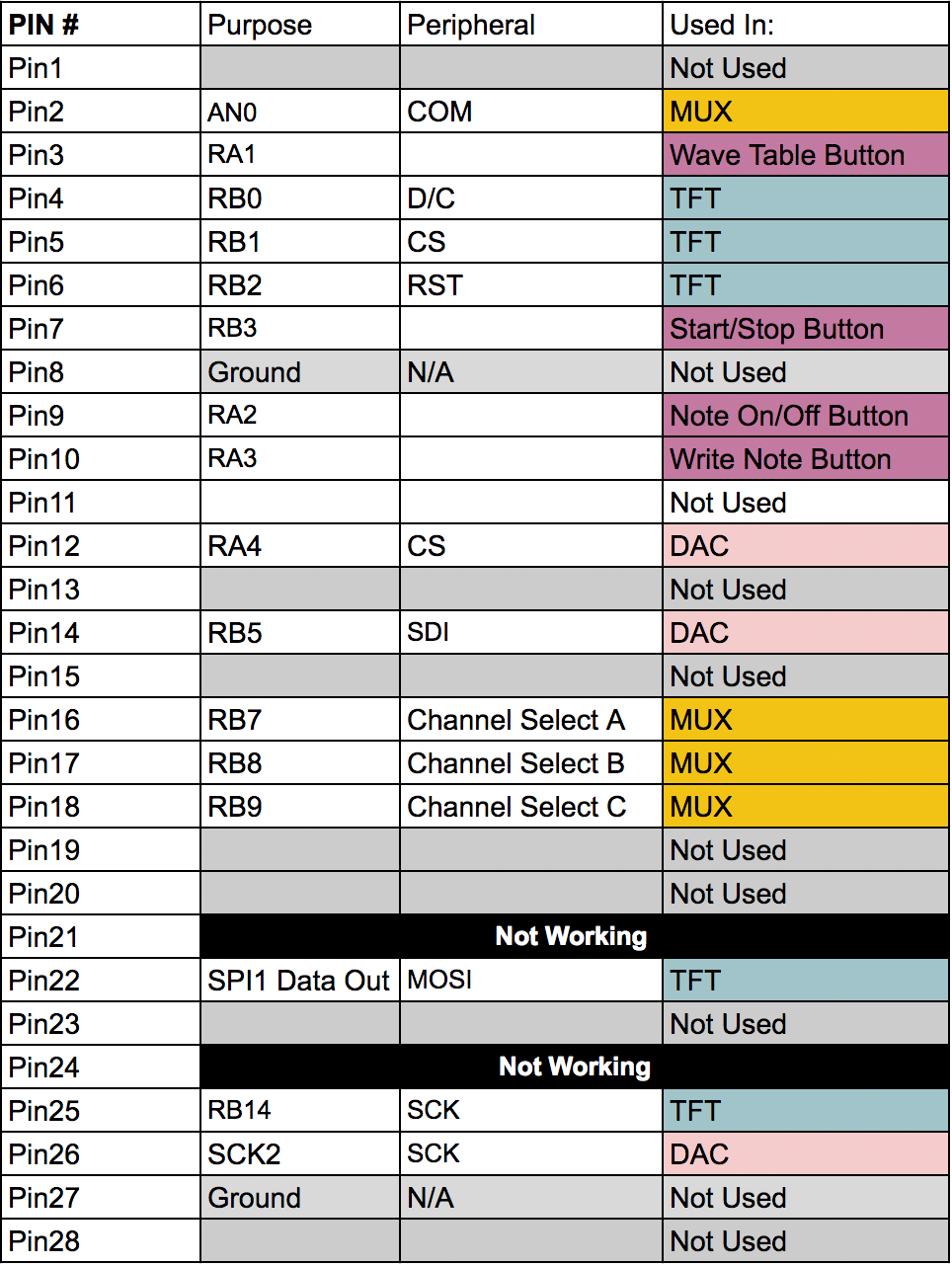

Our TFT display is connected the way it has been for the previous labs,

using pins 4, 5, 6, 22, and 25.

Button/Knob Control Functions

Pin Connections

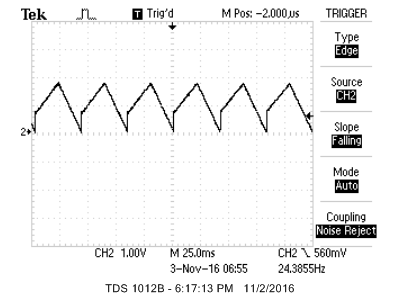

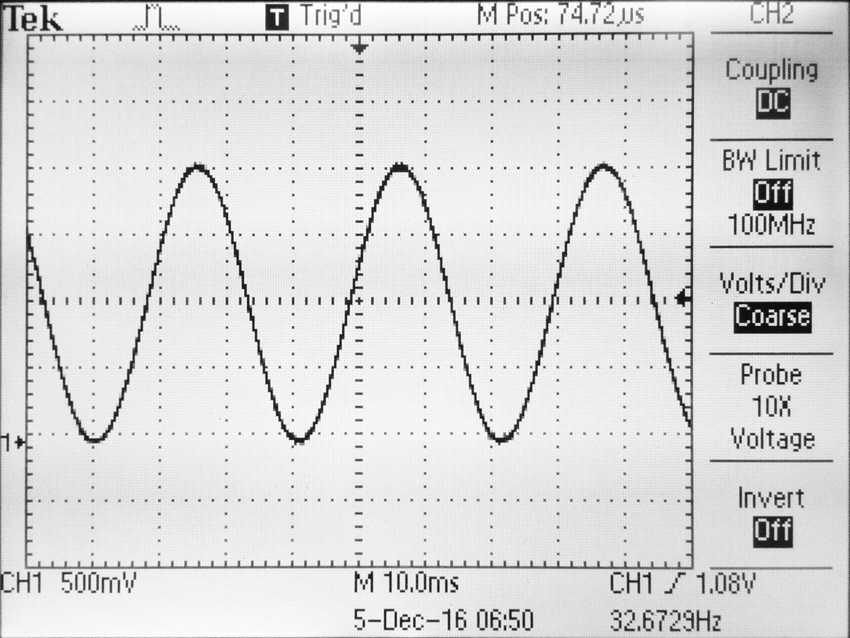

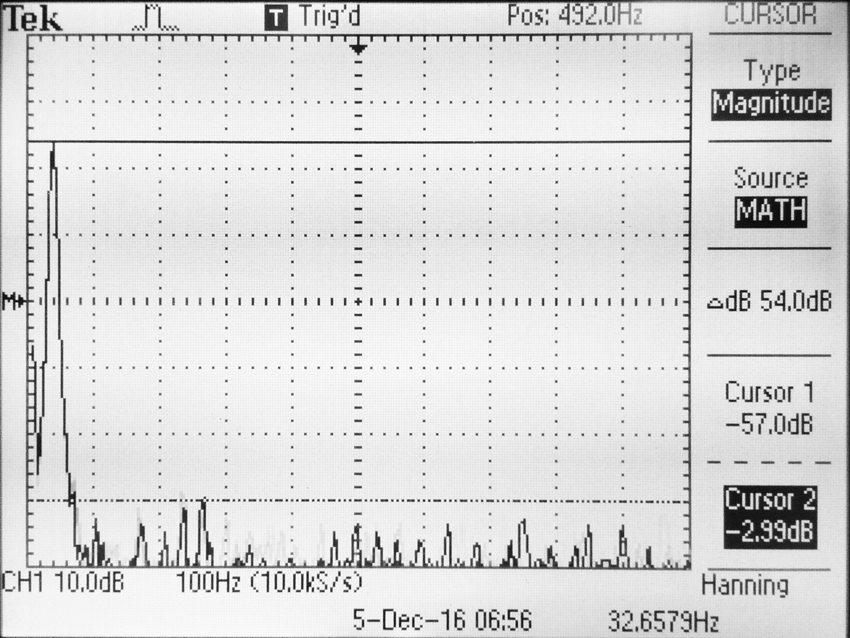

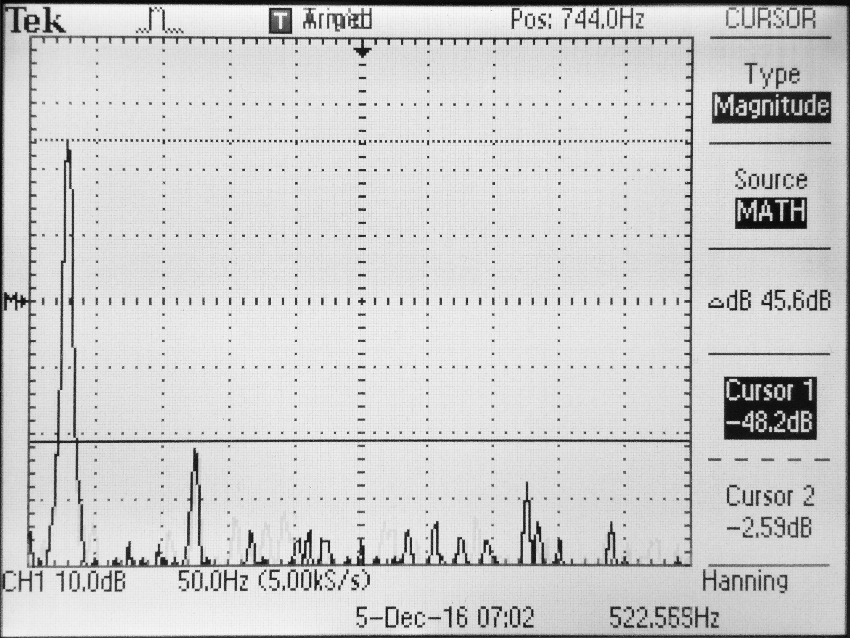

The PIC32 then used direct digital synthesis running at 100 kHz to generate

the correct waveform specified by the input settings. The generation of

the waveform required the use of an external 12-bit DAC, the MCP4822.

The DAC has two output pins, VoutA and VoutB, of which we only use VoutA.

VoutB is tied to ground, along with Vss and the LDAC pin.
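
For reference, each sample sent to the MCP4822 over SPI is a 16-bit word: four configuration bits followed by the 12-bit value. The sketch below builds that word for channel A. The bit positions follow the MCP4822 write-command format; the choice of 1x gain, the clamping, and the macro and function names are assumptions for illustration.

```c
#include <stdint.h>

#define DAC_CHANNEL_B  0x8000u  /* bit 15: 0 = VoutA, 1 = VoutB */
#define DAC_GAIN_1X    0x2000u  /* bit 13: 1 = 1x gain, 0 = 2x gain */
#define DAC_ACTIVE     0x1000u  /* bit 12: 1 = output enabled */

/* Build the 16-bit command word for channel A from a 12-bit sample.
 * Values above 4095 are clamped so they cannot spill into the config bits. */
static uint16_t mcp4822_word_a(uint32_t sample12)
{
    if (sample12 > 0x0FFFu) {
        sample12 = 0x0FFFu;
    }
    return (uint16_t)(DAC_GAIN_1X | DAC_ACTIVE | sample12);
}
```

Since only VoutA is used, `DAC_CHANNEL_B` is never set; it is listed only to show where the channel-select bit sits.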

The DAC is responsible for the main deliverable portion of our project,

so its function is quite visible. However, the other main bit of

hardware in our setup is the CD4051B multiplexer, which takes input

signals from all of our potentiometers and relieves pressure on the PIC32’s

ADC capabilities. Most of the ADC pins on the PIC32 are taken up by the

TFT display, and so only four pins exist for our 7 analog inputs.

Clearly, even if we were to use all the ADC inputs, there would be a need

for multiplexing, as there would be three knobs unconnected. If we were

to use multiple ADC pins, then we would also have to re-configure the

ADC setup in order to multiplex between all the ADC pins.

Under the hood, there exists only one ADC in the microcontroller, so it

would be multiplexing between all of the ADC pins, in addition to the

multiplexing we would be doing on each pin. The CD4051B perfectly addresses

this issue, due to it having eight inputs, outputting a single signal which

we could read on the one ADC pin on the PIC32. Thanks to the multiplexer,

we can keep the existing ADC configuration. Although the multiplexer requires

three extra channel inputs, which are controlled by the PIC32, we have plenty

more options with it, seeing as they can be digital outputs. The multiplexer

takes Vdd from the PIC32 power rail, and uses all the signal input pins except

for Signal 5, which we grounded. The Vss, Vee, and INH pins were also sent

to ground.

In order to stabilize the voltage output by the PIC32, we placed various 10µF

capacitors between power and ground on the hardware. This smooths out

spikes and drops in the 3.3V output of the microcontroller.

All of the preexisting code we used was boilerplate code from the ECE 4760

course website for setting up ISRs, DACs, I/O pins, and timers. We also

used the

Protothreads

library by Adam Dunkels, and the tft_master.c/tft_gfx.c libraries

provided by Professor Land.

The most vexing bottleneck in our project was getting the multiplexer to work

properly. After wiring it up according to the data sheet, it would only function

intermittently, working properly sometimes, and then being completely unresponsive

at others. In addition to this, when the hardware did function, a few potentiometer

inputs seemed to interact with others. Specifically, the first signal input would

be altered slightly by every other knob, and the sixth knob would bleed into all

other inputs. Since the multiplexer would work sporadically and unpredictably, it didn’t

seem as if software was the issue, though it was possible. When touching and bending

wires, we would occasionally be able to change the functionality of the multiplexer, but

these events would not affect the multiplexer consistently.

One possible explanation for

this behavior takes into account the electric fields our bodies generate.

Depending on where we would poke wires and our own orientation, the multiplexer signal

wires could have been affected. This issue could be exacerbated by the fact

that we had channel select wires crossing over the analog outputs of the multiplexer,

which were both in close proximity to our power rails. This served as part of

the motivation to move our hardware setup to perfboard. To further address

the signal-to-noise issue, we placed capacitors between power and ground, and moved

wires away from the TFT display. In case of internally broken wires, we also

replaced all the signal wires connecting to the multiplexer. In order to address the

possible internal signal bleeding of the multiplexer, we grounded pin 5 of the multiplexer,

which equated to the 6th signal input, the multiplexer pins being 0-indexed.

After taking all the actions listed above, the multiplexer behaved more consistently, but

we were unable to read from more than 2 different sources, beyond which we received

garbage data. This time the issue was in software; we weren’t waiting

long enough between switching index bits to read the ADC pin. The PIC’s DigitalRead

settling time, internal ADC sampling speeds, and the multiplexer’s switching speed require

dozens of processor cycles of waiting, so we put in a delay of approximately a microsecond

before each read. The function for waiting 1µs included in the TFT library was not working

for some reason, so this wait was achieved using a for loop with no body.

Carrying out these fixes largely took care of all the issues we were seeing. Because we

implemented most of these methods in parallel, we were unable to isolate

one particular root cause of the malfunctioning, but taken together,

we would say that the issues were some combination of the challenges

mentioned above.

Another issue that was present throughout much of the project was the random

appearance of white dots on the TFT display. Over time after power-on, pixels

that should have stayed black would become white. The code never

called for white dots to be printed, so we couldn’t isolate the error. Our first

fix to this problem was to write a segment of the screen black every 10 seconds.

However, this was a purely aesthetic fix, which did not remove the underlying

problem.

Eventually, we realized that putting our sequencer's active-step TFT updates in an ISR

to achieve a constant, fast update at high tempos was the root of the problem. Since

we had TFT updates in this ISR, and in a separate thread used to update more slowly-

updating parts of the UI, there were many scheduling scenarios in which the serial data

sent to the TFT was corrupted. In some instances, this conflict would cause the PIC32

to crash entirely. After realizing the origin of our problem, we moved all TFT updates

to a single thread. This thread ran at 15-20 fps, and due to the less-frequent update,

our sequencer's active-step readout could be jumpy at certain sequence speeds. However,

we gained overall performance by eliminating this potentially fatal stress on the serial

data bus.

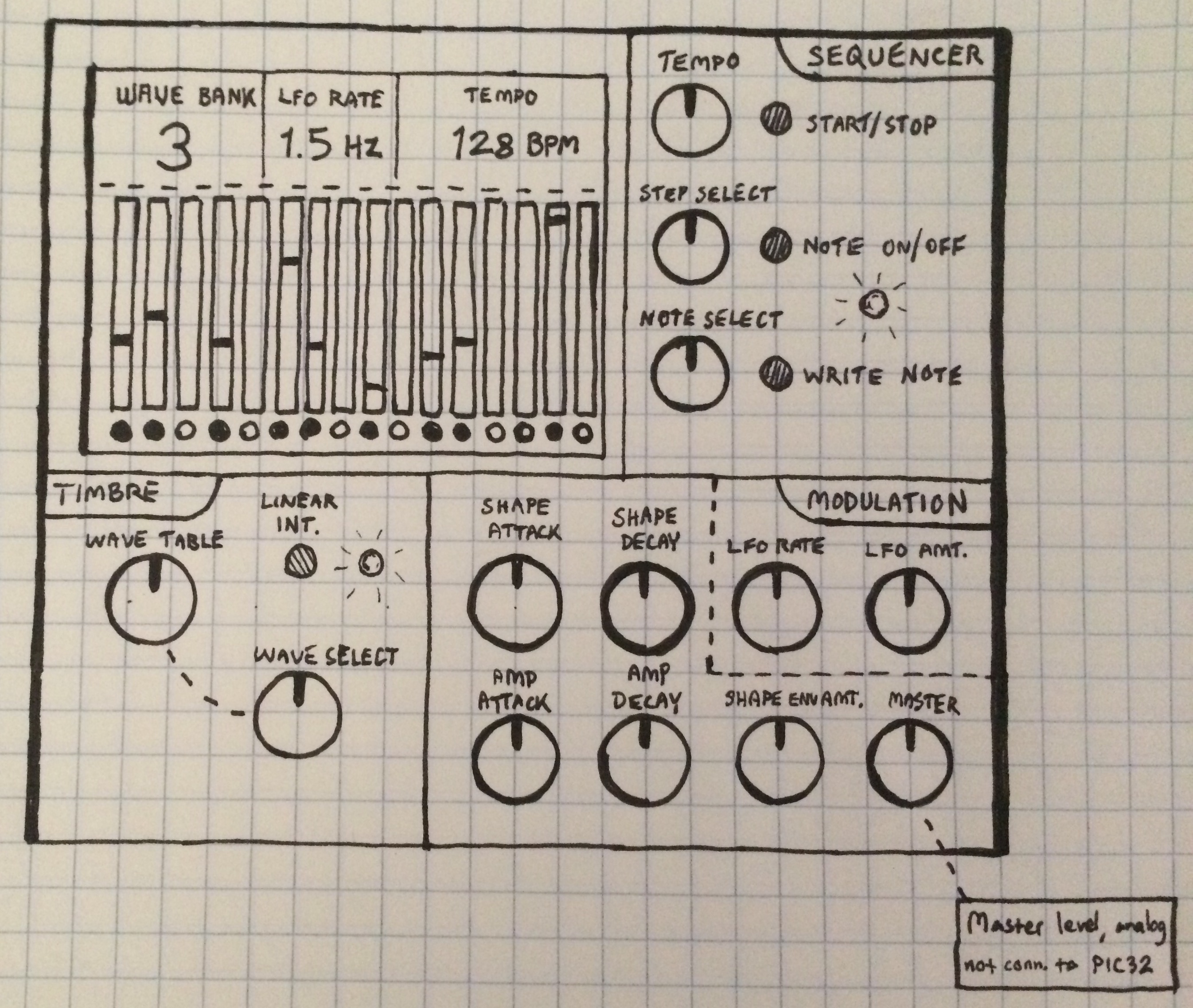

Some of the design changes between the initial and final designs were

just logistic changes, such as the two perfboards in the final product rather

than the initial projection of one large PCB behind a panel. The layout is

messier in our final prototype, with the knobs and buttons unlabeled

and ungrouped. We also cut back on a few different controls, both for usability

and because of hardware limitations. The LFO and its intensity control were

removed entirely. The amplitude and shape envelopes were cut back from two

knobs each, for attack and decay, to one knob each, using a modal envelope

scheme. This change allowed for most common attack/decay parameter combinations,

but avoided a complicated control scheme and complicated ADC configuration.
