Introduction

Sensing in autonomous vehicles is a growing field due to a wide array of military and reconnaissance applications. The Adaptive Communications and Signals Processing (ACSP) research group at Cornell specializes in studying various aspects of autonomous vehicle control. Previously, ACSP has examined video sensing for autonomous control. Our goal is to build on that research by incorporating audio source tracking for autonomous control.

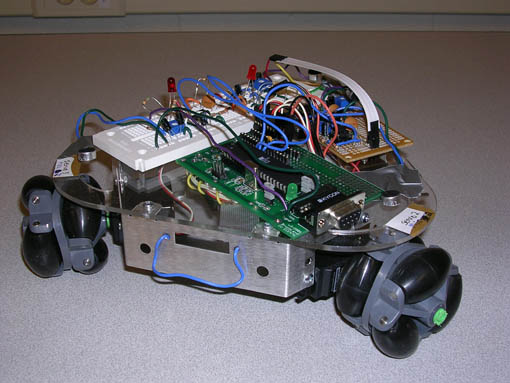

Our project involves implementing a signal processing system for audio sensing and manipulation for the control of an autonomous vehicle. We are working with the ACSP to develop PeanutBot to help advance their research in audio sensor networks. Our system has two modes, autonomous and control. In autonomous mode, the robot detects pulses of a predetermined set of frequencies and approaches their source. In control mode, the robot executes commands sent by an administrator on a PC over an RS-232 serial connection.

A short video demonstration of the robot is available at YouTube for viewing, and for download in AVI (29 MB), XVid 4 (5 MB) and Windows Media Video (6 MB).

High Level Design

The PeanutBot robot consists of three microphone circuits, three servo motors, an MCU and a PC. The concept chart of the system and communication protocols is shown in Figure 1.

Figure 1

The PC is used to communicate with the MCU in control mode for transmitting commands. During development, the PC communication was useful for testing, debugging and verification. A basic block diagram of the system is shown below in Figure 2.

Figure 2

The three microphones were used to triangulate the angle of the source relative to the robot. The audio source plays a continuous stream of pulses. Pulses were chosen over a continuous tone because, instead of detecting phase difference in the audio signal, our system detects the arrival time of the signal at a certain amplitude at each microphone. The robot is designed to be autonomous and is, therefore, not synchronized with the pulse generator. As a result, the time of flight of each impulse is not available and the robot is unable to quantify the distance to the source. Instead, the robot advances by a small predetermined distance and listens for the signal again. To find the sound source, the robot listens for the arrival of an impulse on any of the three microphones. Once an impulse has been detected at one of the microphones, the robot records the microphone data at 10 microsecond intervals for 10 milliseconds. Using this data, the arrival time of the impulse at each microphone is calculated and the direction of the source is obtained. Once the angle of the source has been identified, the robot rotates and pursues the source for a short period, and then promptly resumes triangulation of the signal to repeat the process.
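The arrival-time step described above can be illustrated with a short sketch. It is written in Python for readability and is not the MCU code; the bit layout of the port reading (bit i set while microphone i is active) and the function name `first_arrivals` are assumptions made for illustration only.

```python
from typing import List, Optional

SAMPLE_PERIOD_US = 10   # from the text: one sample every 10 microseconds
WINDOW_US = 10_000      # from the text: record for 10 milliseconds
NUM_MICS = 3


def first_arrivals(samples: List[int]) -> Optional[List[int]]:
    """Return the arrival time in microseconds of the pulse at each microphone.

    `samples` holds one port reading per sample period. Bit i is assumed to
    be 1 while microphone i's comparator output is high (hypothetical layout).
    Returns None if any microphone never sees the pulse inside the window.
    """
    max_samples = WINDOW_US // SAMPLE_PERIOD_US
    arrivals: List[Optional[int]] = [None] * NUM_MICS
    for n, reading in enumerate(samples[:max_samples]):
        for mic in range(NUM_MICS):
            # Record only the first sample at which each microphone goes high.
            if arrivals[mic] is None and (reading >> mic) & 1:
                arrivals[mic] = n * SAMPLE_PERIOD_US
    if any(t is None for t in arrivals):
        return None
    return [int(t) for t in arrivals]
```

Only the differences between the three returned times matter for finding the direction, since the robot has no reference for when the pulse was emitted.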

Background Mathematics

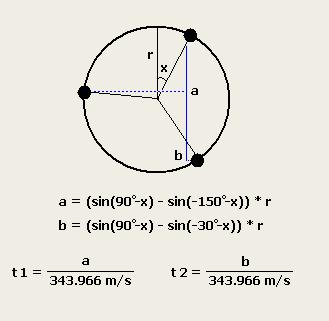

The three microphones are placed at equal distances (7 inches apart) and one microphone is chosen as the first microphone. To find the location of the sound source, the difference in the arrival time of the signal at the microphones is calculated according to the equations shown below in Figure 3.

Figure 3

To calculate the angle of the source with respect to the front of the car, a lookup table containing arrival times and angles is used. The arrival times in the lookup table are calculated using the speed of sound at Ithaca's altitude (343.966 m/s) and the distance between microphone one and the other microphones on the plane of the sound wave fronts for each angle in the table. This table maps the time differences t1 and t2 to a specific angle with an accuracy of 1 degree. Once the arrival times are observed, an angle is chosen based on the closeness of the relative arrival times to t1 and t2.
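A lookup table of this kind could be generated roughly as follows. This is a sketch under stated assumptions, not the project's MATLAB script: it assumes the microphones sit at the vertices of an equilateral triangle with microphone one at the front, a far-field (plane-wave) source, and a nearest-match search. The names `time_differences` and `lookup_angle` are hypothetical.

```python
import math
from typing import List, Tuple

SPEED_OF_SOUND = 343.966    # m/s, value quoted in the text
MIC_SPACING = 7 * 0.0254    # 7 inches, converted to metres

# Assumed layout: equilateral triangle, microphone one pointing forward.
RADIUS = MIC_SPACING / math.sqrt(3)
MIC_ANGLES_DEG = (0.0, 120.0, -120.0)
MICS = [
    (RADIUS * math.cos(math.radians(a)), RADIUS * math.sin(math.radians(a)))
    for a in MIC_ANGLES_DEG
]


def time_differences(angle_deg: float) -> Tuple[float, float]:
    """Plane-wave delays (seconds) of mics two and three relative to mic one."""
    ux = math.cos(math.radians(angle_deg))
    uy = math.sin(math.radians(angle_deg))
    # A microphone further along the source direction hears the wavefront earlier.
    arrival = [-(x * ux + y * uy) / SPEED_OF_SOUND for x, y in MICS]
    return arrival[1] - arrival[0], arrival[2] - arrival[0]


# One row per degree: (angle, t1, t2).
TABLE: List[Tuple[int, float, float]] = [
    (a, *time_differences(a)) for a in range(-180, 180)
]


def lookup_angle(t1: float, t2: float) -> int:
    """Return the tabulated angle whose (t1, t2) pair is closest to the measurement."""
    return min(TABLE, key=lambda row: (row[1] - t1) ** 2 + (row[2] - t2) ** 2)[0]
```

With this geometry, no time difference can exceed the microphone spacing divided by the speed of sound, which gives a simple sanity check on measured values.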

Logical Structure

PeanutBot has three software state machines for the servo control, user control mode, and autonomous control mode. The robot boots up in autonomous mode but can be transferred into user controlled mode if given instructions via its serial port. The control mode that is selected operates on its data, updates the appropriate servo variables, and transfers control over to the servo control state machine. The servo state machine will read and operate on the servo control variables and, once finished, return control to the mode which called it.

Hardware / Software Tradeoffs

During the design of the robot, there were hardware and software tradeoffs. Most notably, the microphone interface required a fairly complex circuit to condition the signal before the software on the MCU manipulated the data. While the MCU does have an 8-channel A/D converter, which is significantly more than the 3 channels required for triangulation, the on-board A/D converter requires several hundred microseconds to converge for a single channel, and only 1 channel can be read at a time. As a result, reading all three microphones on the MCU would require about 1-2 ms. Since the microphones were positioned 7 inches apart, it would take less time for the sound wave to travel from the first microphone to the second microphone than for the first A/D reading to converge. Furthermore, reading the microphones in serial instead of parallel would add an inherent delay asymmetrically to the microphones, making it difficult to triangulate the source of the sound. Consequently, most of the manipulation of the microphone signals was done in hardware to maintain the functionality of the robot.
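The timing argument can be checked with a few lines of arithmetic. The spacing and speed of sound come from the text; the per-channel conversion time passed to `serial_scan_us` is a placeholder assumption, since the report only says "several hundred microseconds".

```python
SPEED_OF_SOUND = 343.966      # m/s, value quoted in the text
MIC_SPACING_M = 7 * 0.0254    # 7 inches, converted to metres
SAMPLE_PERIOD_US = 10         # digital sampling interval used by the robot


def max_inter_mic_delay_us() -> float:
    """Longest possible delay between two microphones (sound travelling along their axis)."""
    return MIC_SPACING_M / SPEED_OF_SOUND * 1e6


def serial_scan_us(per_channel_us: float, channels: int = 3) -> float:
    """Time to read `channels` A/D inputs one after another."""
    return per_channel_us * channels


delay = max_inter_mic_delay_us()                 # roughly 517 microseconds
samples_across = int(delay // SAMPLE_PERIOD_US)  # digital samples spanning that delay
scan = serial_scan_us(400.0)                     # 400 us per channel is an assumed figure
```

Under these assumptions the entire usable delay range is about half a millisecond, so a serial A/D scan lasting more than a millisecond cannot resolve it, while 10 microsecond digital sampling spans it with about fifty samples.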

Standards

The design of the robot conforms to applicable standards such as the RS-232 serial communication standard.

Hardware Design

The hardware design consists of three microphone circuits, a microcontroller, a robot frame, three servo motors, three omni-directional wheels, a 9V battery for the MCU and circuitry, and a AA-battery pack for the servos. Each microphone circuit consists of amplifiers, filters, and comparators. A diagram of the hardware and software is shown below in Figure 4.

Figure 4

Microphone Circuitry

To control the level of the microphone output, resistors are used to center the signal around 1.5V. This level-shifted output is amplified via an operational amplifier and then passed through a passive lowpass filter. It is then put through a half-wave rectifier with a capacitor to bridge the gaps between positive swings caused by the half-wave rectifier. That output is then passed through an analog comparator to discretize the signal for reading into the port pin on the MCU. The discrete output of the microphone circuit is approximately 4V when sound is detected and 0V when no sound is detected. All 3 microphone circuit outputs are read in parallel using PORTA on the microcontroller. The output is also passed through an LED circuit to ground so that it is visible during debugging when a particular microphone circuit detects sound. A schematic of the circuit is shown in Figure 5.

Figure 5

Servo Circuitry

The three servos were connected to their own 6V power supply, which was filtered using a small capacitor. Each servo was connected to its own pin on PORTB of the MCU and was controlled by a PWM signal which actuated the servo as desired. The circuit of the servos is shown in Figure 6.

Figure 6

Robot Frame Design

The robot's mechanical frame comes as a kit which requires minor assembly of its provided pieces and connectors. The omni-directional wheels were provided separate from the kit, and proved to be a bit too large for both the frame and the servos that came with the kit. In order to make the wheels fit on the frame, the servos were mounted lower than intended, which gave the wheel enough clearance with the frame so that it fit. In order to mount the wheel on the servo's powered axle, we purchased flame-retardant nylon tubing which provided for a tighter fit between the axle of the servo and the inside of the wheel. The inside of the wheel was also lined with cement in order to ensure a tight fit. The battery packs were mounted to the bottom of the robot frame with duct tape and the circuits were all placed on top of the robot and held in place via wire tension.

Hardware Stuff that Didn't Work

Several approaches were tried before the best hardware design was found. The servo control circuitry was simple and therefore did not require multiple revisions; however, the analog filter circuit for the microphones went through multiple revisions of both design and fabrication. A bandpass filter was originally implemented on our op-amp in order to pass an audio pulse of 2kHz frequency from the microphone, a frequency selected based on its wavelength as compared to the dimensions of the PeanutBot. The bandpass was implemented on the same op-amp as our signal amplifier in order to save fabrication space and complexity; however, its protoboard design gave unreliable results and poor attenuation of frequencies outside of the passband. Therefore, additional highpass and lowpass filters were added to the output of the op-amp bandpass/amplifier combination in order to implement a 2-pole bandpass filter and achieve greater attenuation of frequencies outside of the passband. However, the quality of the overall filter was still lacking and the overall signal attenuation was too great. Adding another amplifier stage to increase the signal amplitude to desirable levels was unfavorable because of its increased fabrication complexity. Next, the amplifier circuit was separated from the filter circuitry and passive filters were implemented instead. However, it was noted that the highpass filter was still attenuating the signal greatly at all frequencies instead of filtering the signal selectively. Therefore, the design was changed to the current design, lowering the target frequency to the sub-1kHz range and using only a passive low-pass filter with the op-amp amplifier.

Software Design

The MCU software has three state machines that control the servos, user control mode, and autonomous control mode. During execution, the system uses an interrupt-driven hardware timer, interrupt-driven communication to the PC, and input from the microphones to update the state machines. The MCU continuously loops over code that updates the state machines and pulses the servos as needed to rotate the robot and move the robot forward. The servos have four states: Idle, Waiting, Rotating and Forward. When the robot moves, it transitions from the Idle state to the Rotating or Forward state, and pulses the servos for 1.5 ms. The robot then transitions from the Rotating or Forward state to the Waiting state. When the system is in control mode, it uses an interrupt-driven serial communication protocol to receive user commands and send debugging information to the terminal. The code was designed for easy integration into a wireless mesh network. A preprocessor flag was used to incorporate the optional flexibility of wireless PDA but still allow debugging via HyperTerm. The servo code and most of the user code was derived from SearchBot. The format for a valid input to control mode is described below in Table 1.

| Control | Rotation Angle | Distance |
|---|---|---|
| 0 to 2 | -180° to 180° | -300 to 300 |

The control states are described in Table 2 below:

| Value | Mode | Function |
|---|---|---|
| 0 | User Control | Rotate the given angle, then move forward the given distance |
| 1 | Autonomous Control | Track and home in on the audio pulse |
| 2 | Update Servo Scaling | Modify the servo scaling factors to increase or decrease the robot's responsiveness |
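The command format in the two tables can be illustrated with a small validation sketch. The report does not specify how the three fields are delimited on the serial line, so whitespace separation is an assumption here, as are the names `Command` and `parse_command`; this is a Python model rather than the MCU code.

```python
from dataclasses import dataclass

# Control values and their meanings, taken from Table 2.
MODES = {0: "User Control", 1: "Autonomous Control", 2: "Update Servo Scaling"}


@dataclass
class Command:
    control: int
    angle_deg: int
    distance: int


def parse_command(line: str) -> Command:
    """Parse 'control angle distance' and check the ranges given in Table 1."""
    fields = line.split()  # whitespace-separated fields are an assumption
    if len(fields) != 3:
        raise ValueError("expected three fields: control, angle, distance")
    control, angle, distance = (int(f) for f in fields)
    if control not in MODES:
        raise ValueError("control must be 0, 1 or 2")
    if not -180 <= angle <= 180:
        raise ValueError("rotation angle must be between -180 and 180 degrees")
    if not -300 <= distance <= 300:
        raise ValueError("distance must be between -300 and 300")
    return Command(control, angle, distance)
```

For example, `parse_command("0 90 100")` would describe a user-control command to rotate 90 degrees and then move forward 100 distance units.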

The heart of the software is the autonomous mode. The autonomous mode has five states: Stabilize, Listen, Locate, Move and Done. When the robot enters autonomous mode, it enters the Stabilize state. The Stabilize state waits for silence on all three microphones to ensure that the robot will only listen to the start of the audio pulse, and not the middle or end of a pulse. Once the robot hears silence, it transitions to the Listen state. In the Listen state, the MCU constantly samples the microphone port pins until the start of an audio pulse is detected. Once the pulse is detected, the robot records the next 10 ms of audio and determines the time of the first pulse on each microphone. If only one or two of the microphones hear the pulse, then the sample is discarded. The robot repeats this process of sampling the microphones for a total of four consistent pulses. After the data from all four audio pulses are sampled, the machine transitions into the Locate state. In the Locate state the robot averages the four time samples. The three average microphone timestamps are then used as indexes into a Matlab-generated lookup table stored in flash memory. The mathematics in the Matlab script are described above. The lookup table is used to calculate the direction of the source. The robot then records the analysis, and transitions to the Move state. In the Move state, the robot updates the servo states to rotate the calculated angle and move forward by 20 cm.

In the future, code should be written which analyzes the last several moves to determine if its net movement is negligible. If there is not any net movement, the robot transitions into the Done state, and remains there until the robot is reset by the user. Otherwise, if the robot has made progress towards the source, then the robot returns to the Stabilize state and the process repeats.
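The autonomous-mode transitions described above can be summarized in a short sketch. This is an illustrative Python model, not the MCU implementation: the function name and its keyword arguments are hypothetical, and the progress check that leads to the Done state is described in the text as future work.

```python
from enum import Enum, auto


class State(Enum):
    STABILIZE = auto()
    LISTEN = auto()
    LOCATE = auto()
    MOVE = auto()
    DONE = auto()


PULSES_REQUIRED = 4  # from the text: four consistent pulses are averaged


def next_state(state: State, *, silent: bool = False,
               pulses_captured: int = 0, made_progress: bool = True) -> State:
    """Return the state that follows `state` given the current observations."""
    if state is State.STABILIZE:
        # Wait for silence so that only the start of a pulse is timed.
        return State.LISTEN if silent else State.STABILIZE
    if state is State.LISTEN:
        # Stay here until enough complete pulses have been recorded.
        return State.LOCATE if pulses_captured >= PULSES_REQUIRED else State.LISTEN
    if state is State.LOCATE:
        # The angle lookup completes in one step, then the robot moves.
        return State.MOVE
    if state is State.MOVE:
        # Proposed future check: stop when recent moves cancel out.
        return State.STABILIZE if made_progress else State.DONE
    return State.DONE  # Done is left only by a user reset
```

Keeping the transition logic in one pure function makes it easy to exercise each branch without hardware attached.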

Software Stuff That Didn't Work

Several designs of the software were considered before the final design was implemented. One failed implementation was designed to have very simple microphone circuits that are sampled continuously by a multi-channel A/D converter. However, due to inherent hardware limitations on the Atmel Mega32 microcontroller, this design was not feasible. Additionally, due to the variation in the microphone circuit, the microphones needed to be sampled multiple times and averaged. Furthermore, outliers in the sample had to be ignored to prevent skewing the average of the microphone samples.
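One simple way to average several readings while ignoring outliers is sketched below. The report does not say which rejection rule was used, so the median-based rule, the `max_deviation` threshold and the function name are all assumptions for illustration.

```python
from statistics import median
from typing import Sequence


def robust_average(samples: Sequence[float], max_deviation: float) -> float:
    """Average the samples that lie within `max_deviation` of the median.

    `samples` would be arrival times (for example in microseconds) for one
    microphone across several pulses. The rejection rule is an assumed one.
    """
    mid = median(samples)
    kept = [s for s in samples if abs(s - mid) <= max_deviation]
    if not kept:
        # Every sample was rejected, so fall back to the median itself.
        return float(mid)
    return sum(kept) / len(kept)
```

Using the median as the reference point means a single wild reading among four cannot drag the reference toward itself before the rejection test is applied.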

Results

Testing results show that the hardware of the project works properly. The robot can be commanded to rotate and move via serial in the user control mode, and it properly detects audio stimulus in the autonomous control mode. The direction that the robot moves in the autonomous mode is sometimes inconsistent with the direction of the audio source, possibly due to audio reflections or inconsistent readings on the microphones. The readings of the microphones are sent to the serial port if a "debug" mode is enabled in the software, and they show that the robot's three microphones typically detect sound when they are expected to. However, at times the robot still reacts randomly and goes in an unexpected direction. The consistency has increased slightly since a 4-reading average was implemented, and it also improves if the stimulus to the microphones is loud and clear such that all 3 microphones detect it cleanly and accurately.

The analog circuit requires time to settle between audio pulses due to capacitive discharging. Therefore, there is a two second wait time implemented between the sets of readings. Additionally, by averaging four readings, the time to locate the audio source has increased.

Safety is ensured by an accessible power switch on the side of the robot chassis, which disables both the power to the servos and the power to the MCU. The user may put the robot in user control mode at any time as the communication is implemented via interrupts. Our robot's primary interface with the administrator is via the PC, and as a result, there are not any interface design considerations for people with special needs. We do not have any intellectual property issues since we designed the system and code ourselves, except for the wireless code, which is used with permission from the ACSP. Lastly, we operate within FCC regulations since our communication occurs between two FCC-compliant devices. Additionally, the robot does not interfere with other people's designs as there is no RF communication and we produce minimal electric and acoustic noise. The robot will, however, react to loud audio pulses generated by other people's designs.

Conclusions

The microphone data observed through the serial port to the PC meets the project expectations when the audio source is relatively close and the volume is high. However, the sensitivity of the microphones and the analog circuit did not meet our expectations. When the volume of the audio signal is too low, the results are somewhat unreliable. Additionally, the capacitors used in the circuit proved to have a large margin of error (~20%). The design of the circuit was altered to minimize this effect, but the error was not eliminated. Subsequently, the capacitors provided limitations on the filtering of the audio signal and therefore the inputs provided to the MCU. This cascaded through the design and could be causing some of the unexpected movements the robot occasionally makes. Future designs could take this into account and either minimize the number of capacitors further or order higher quality capacitors with smaller margins of error. However, since the user controlled mode does not rely on capacitors to determine the response of the robot, it responds excellently to the commands it receives and therefore meets all expectations fully. Another solution to the circuit error problem would be to analyze the signal using software. This requires an MCU with an A/D converter that could listen to three inputs simultaneously. Since the design is derived from the Searchbot project from Spring 2006, the source code for the control modes and the servo control acted as a shell for the PeanutBot code.

Ethical Considerations

The project has been designed with the IEEE Code of Ethics in mind. As required, the project team accepts all responsibility for issues concerning the safety, health and welfare of the public, and the project team is responsible for disclosing factors that might endanger the public or the environment. Since the project involves an autonomous control mode where the robot is not under direct human control, this is an ever-present consideration. The PeanutBot team also strives to avoid real or perceived conflicts of interest whenever possible, and to disclose them to affected parties when they do exist. The project team has strived to be honest and realistic in stating claims or estimates based on available data. The results of testing have been presented in an unaltered manner. The team has not been subject to bribery, and will not be involved in bribery in the future. The team strives to improve the understanding of technology, its appropriate application, and potential consequences by understanding the military and reconnaissance applications of the robot and attempting to advance understanding of audio processing and triangulation of a sound source. The team seeks, accepts, and offers honest criticism of technical work, acknowledges and corrects errors, and properly credits the contributions of others. The use of code designed by others is documented above. During the execution of the project, the team has treated all persons fairly. The safety considerations described above fueled the team's commitment to avoiding injuring others, their property, reputation, or employment by false or malicious action. Finally, the team has assisted colleagues and co-workers in their professional development and supported them in following this code of ethics. As stated above, we have followed all legal considerations such as the FCC regulations.

Contact Information

Contact Angela Israni, Hemanshu Chawda or Seth Spiel for questions or comments.

Appendices

Source Code

- Commented MCU Code that Averages 4 Pulses

- Commented MCU Code that uses a Single Pulse

- Commented MCU Header File

- Angle Array Header File

- MATLAB Angle Array Generation Code

- MATLAB Audio Generation Code

Schematics

Parts Appraisal

| Parts | Cost |
|---|---|
| Acronome PPRK Robot Vehicle | $325 |
| Batteries (9V and 4 AA) | $10 |
| Audio Source (Speaker) | $0 |
| Atmel Mega32 Microcontroller | $8 |
| Custom Solder Board | $1 |
| White Breadboard | $4 |
| Max233 CPP | Sampled |
| RS232 Connector | $8 |
| Analog Circuit Components | $0 |
| Green Tubing | $5 |
| Total | $361 |

Work Distribution

Seth:

- Writing the Audio Generation script

- Writing test files for the servos and communication

- Writing most of the software

- Soldering the boards

- Physical building of the robot including attaching the wheels and kit assembly

- Testing the analog circuitry

- Working with ACSP to ensure correct application of assignment

- Shopping for parts

- Working on the project report

Hemanshu:

- Some software

- Soldering the boards

- Physical building of the robot including attaching the wheels

- Designing the analog circuitry

- Testing the analog circuitry

- Shopping for parts

- Working on the project report

Angela:

- Writing a test file for the port input

- Writing the Angle Array Generation file

- Soldering the boards

- Some software

- Physical building of the robot including attaching the wheels and kit assembly

- Designing the analog circuitry

- Testing the analog circuitry

- Working on the project report

References

Data Sheets:

- Panasonic Microphone Specifications

- Panasonic Microphone Part Information

- Nylon Tubing

- Mega32 microcontroller

Vendor Sites:

Borrowed Code:


Related Resources

PeanutBot in the News

PeanutBot in action.

Professor Land teaching microcontroller fundamentals.

The Project Team on Holi.

The Project Team on a Billboard in Times Square.

The Project Team in Phillips.

The Project Team at Dinner.