Introduction

Maze in a Box is a portable game in which you tilt a TV to navigate your way around a 3D maze as though you were in it.

We created Maze in a Box as a challenge to generate 3D looking graphics using the Atmel Mega32. We also looked to implement the game in a manner that was completely easy and intuitive to play. Most importantly, it was way cooler than any other idea we could think of.

High Level Design

The original idea for the project was to recreate something resembling the classic video game 'Doom'.

After some discussion, we decided to settle with something simpler, like the ubiquitous windows screensaver.

The finalized idea was a maze in which a person could walk within by holding up a completely portable game unit.

The general schematic is as follows: The Atmel Mega32 generates a video signal to the TV showing the user's current view of the maze. The user understands the information moves in a real space to modify his position within the maze. The accelerometers picks up these values and passes it to the analog to digital converter on the processor, which then interprets the changes in acceleration and modify's the user's position within the maze accordingly. This continues until the person reaches the end of the maze, which is marked by a corridor with a diamond at the end of it.

It was realized early on that there would be two main tasks involved in the project-- creating the drawing system and interfacing with accelerometers. We started off with the former by exploring different ideas for creating 3D images for the game.

There were a few questions that needed to be addressed.

Performance, performance, performance.

The biggest compromises we had to make in the project involved performance, storage space and programming complexity. Since we are programming on the Atmel Mega32, performance and space are both scarce resources. Thankfully, we managed to come up with a good compromised that managed to achieve reasonable framerates, kept within the storage space and that was tenable to code within the allotted time frame.

How much real 3D calculations should there be?

Performing 3D calculations requires significant resources in terms of matrix multiplications. From our initial research, we found that true 3D required 4 4-D matrix calculations per point (which translates to 64 multiplications). This was unacceptable. In order to simplify the math and reduce the number of calculations, we could constrain the viewport. It was soon decided that for the best processor efficiency, we could execute the entire task without any real math and still conform to a reasonably realistic display specification.

One processor, or two?

When choosing how the drawing system should work, an important consideration was whether we should use one processor or two. One processor would be cheaper and more elegant. A couple of interesting schemes were conceptualized in order to try to squeeze as many clock cycles as possible out of the processor while it was generating video output. Interestingly enough, one of the schemes made it into the final build even though we finally opted for the two processor configuration. The eventual reason for using two processors was so we could work on the drawing system without having to worry about timing issues. This turned out to be a very fortunate decision.

Full frame updates, or incremental screen updates?

We had two options for the drawing system with very different implications. The first one was to update the entire video buffer on every iteration. The problem with this approach was that it was prohibitively slow due to the large size of the video buffer. On the up side, it produces a very elegant result, whence each frame can be drawn solely based on the current position the user is in the maze. Incremental screen updates would be much faster in updating the video buffer, however the screen updates would have to be a function of both the current user position and the next user position, which seemed much more complicated and prone to bugs that would be debugged by painful pixel counting. For this reason, we opted with full frame updates.

Random access updates, or serial updates?

When we inspected the video code from Lab 4, we realized that the code uses random access to update the video buffer, which would have been criminally inefficient for drawing solid shapes like in our game. We had to create our own system for drawing polygons, and the important question was whether we were going to draw using a 'random access' approach which would allowed us to draw polygons by specifying pixel values, or whether we should use a 'serial' approach, updating the video buffer sequentially. The trade off here is between programming complexity and execution time, and we eventually opted for serial updates in order to improve performance.

Discrete or continuous?

In order to create a compelling game, we needed to decide on how fine grained movements would be. For walking forward and backward, creating fluid movement seemed feasible enough. However, we had a long list of problems with performing continuous turning (since we had opted for no 3D math). By preliminary tests, we realized that 90-degree snap turning would be completely counter intuitive. With this in mind, we decided to implement as much as we could, with the contigency of defaulting to discrete movements if we could not work out the code in time. Thankfully, we did not have to revert to that.

After the drawing system was completed, we moved our attention to the accelerometer input. We started off with a X-Y accelerometer, taking in values and trying to deduce two kinds of movement-- walking and turning. After a long series of particularly faulty inputs, the accelerometer stopped working. At Bruce's suggestion, we switched to two Z accelerometers perpendicular to each other. This worked much better, but still after much work, we were still unable to devise any heuristics that deduced movement in an accurate enough fashion for it to be intuitive. We eventually gave up on trying to detect walking and turning and switched to a tilt based system which worked quite well.

Standards

We conformed to NTSC standard for video generation.

Program Design

Processor Communication System

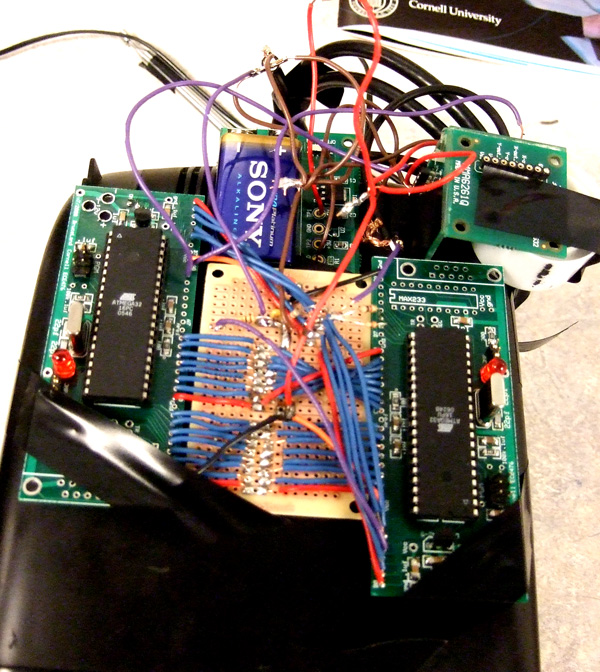

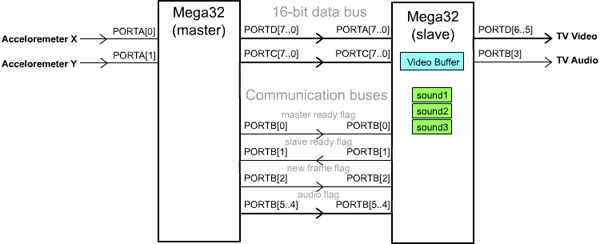

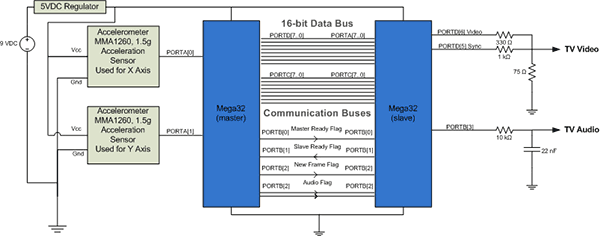

Since we decided to work with two processors in this project, the first part of the project was spent working on the communication system between the two Amtel Mega32's, which we shall name master and slave.

The primary job of the slave is to maintain video and audio outputs while the master is doing processing. It holds a 1600 byte video buffer which displays 100 lines of video, with 16 bytes a line, with a total resolution of 128x100.

The master takes inputs from the accelerometer and modifies its current state. It then generates a single video frame which is transmitted to the slave to update the slave's video buffer. Additionally, the master generates the audio flag to trigger sound outputs on the slave.

Communication Protocol

A system of two way flagging is used to push video frames from the master to the slave. Two channels are used primarily-- the master ready flag and the slave ready flag, with the addition of the new frame flag which is used to indicate the start of a new video frame. On every iteration, the master checks if the slave ready flag has changed from its last value. If the flag has changed, 16 bits are put onto the data bus and the master inverts its own ready flag. The slave continually polls for changes in the master ready flag and once it senses that the flag has been inverted, it reads 16 bits off the data bus and uses it to update two bytes in the video buffer. This process is repeated until the entire video buffer is updated. Video frames are transmitted sequentially, therefore the new frame flag is used to reset the position of the update to the start of the video buffer. For audio, the slave simply reads off the value from the audio flag and plays the appropriate sine wave, or no sound if it is so flagged.

Video Frame Generation

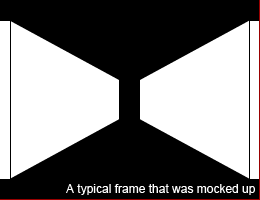

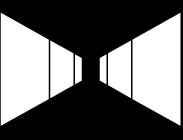

The drawing system's job is to generate video frames from the current state of the game. There were a couple of key observations that were used to optimize the drawing system. (1) Every column has at most only one contiguous white area. (2) The entire video frame is always symmetric around the middle horizontal line. With these two observations, a system was designed to generate such images with as little processor overhead as possible.

The first task at hand was to determine the video display area. In order to create a 1 pixel border around the image, the 128x100 screen resolution was given a 7 pixel empty border and a 1 pixel white border. This reduced the screen resolution to 112x84, which is 84 lines of 14 bytes. A video state buffer (VSB) was created in the master. The VSB is analogous to the video buffer in the slave, except it does not hold actual pixel information. Since all images are symmetrical in the vertical, only half the size was required. The final size for the VSB was 14 bytes x 42 lines = 588 bytes.

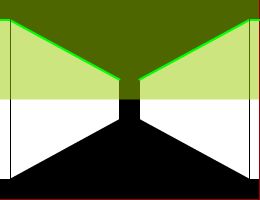

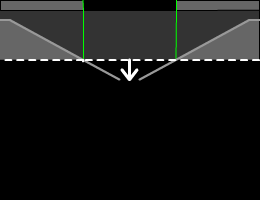

Updating individual pixels in the VSB is not only programatically messy, but also highly inefficient. Given the observation that each column only has at most one contiguous white area, an interesting scheme was devised to generate video frames. The state of the game is used to draw a series of lines in the VSB such as the solid grey lines in the diagram above. The actual 'rendering' of the video frames is performed in real time during the tramission of video frame to the slave processor. During transmission, a line buffer of 14 bytes is kept, holding the last transmitted line. During each line, the corresponding information is read out from the VSB. The line from the VSB is OR-ed with the data from the line buffer to generate the new line. When the end of the VSB is reached, the pointer starts moving backwards through the VSB, performing the XOR operation with the line buffer, in order to produce a mirror image in the middle horizontal line of the image. In this fashion, full video frames are generated from simple line data that can be written to the VSB in a reasonable amount of time.

Video/Sound Generation

The slave processor generates the video signal from its video buffer. Every 63.5us, an interrupt is called that generates a single line of the video signal. The current line count is used to determine if a sync pulse should be generated, otherwise, a single line from the video buffer is sent to the output signal. In the time before and after a single line of video data per video line, a frame of information can be received from the data bus. At the start of each frame, the compare value for the PWM output of the slave processor is set to a new value. In the time between video frames, a routine is run that checks the audio flags and toggles the increment for the sine wave generation, and determines if the PWM output should be on or off.

Maze System

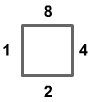

The maze is represented by an arbitrarily sized character array of defined width and height. Each cell is defined by 4 bits and so each character represents two adjacent cells within the maze. Each cell is defined by the walls on 4 sides, as denoted below. For example, if a cell has a wall in the +ve and -ve Y directions, the binary representation of the cell is 0b1010.

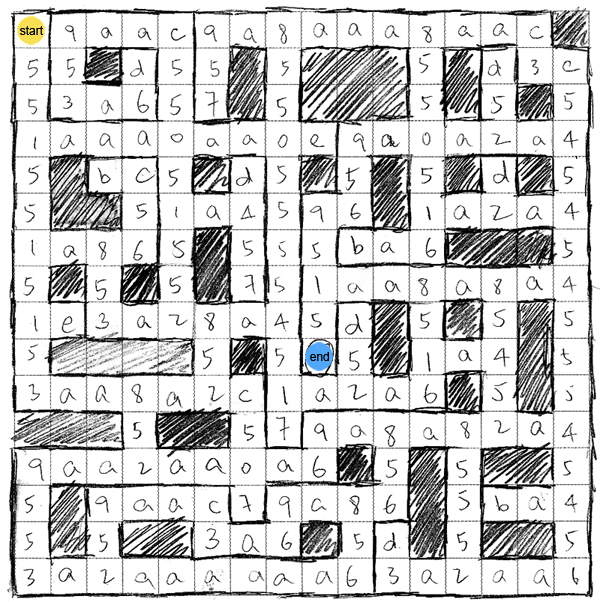

The maze used for the primary demonstration is illustrated below.

Drawing System

The general technique

It was realized early on that since we were not doing any real 3D, we would have to handle forward/backwards movement separately from turning motions. In order to optimize the process, we tried to reduce the number of random accesses made to the update VSB. The general technique that was used was to update the buffer using a character pointer and a rolling bit mask that traversed the screen in one direction (left or right), pin pointing specific pixels and modifying them.

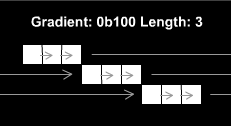

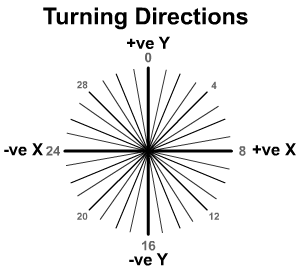

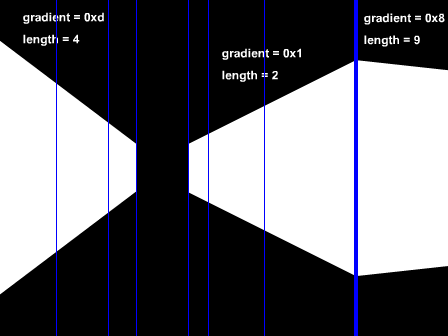

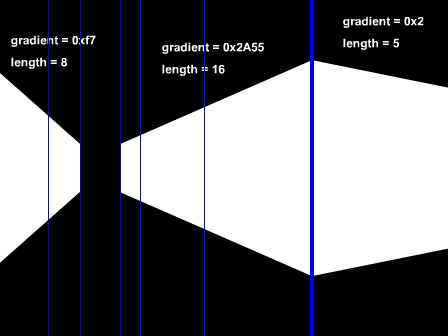

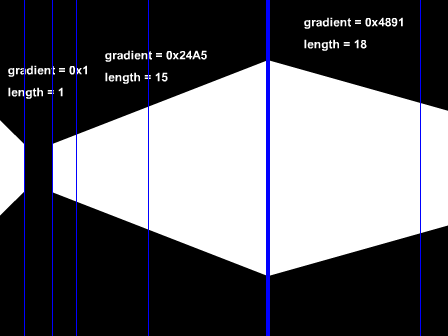

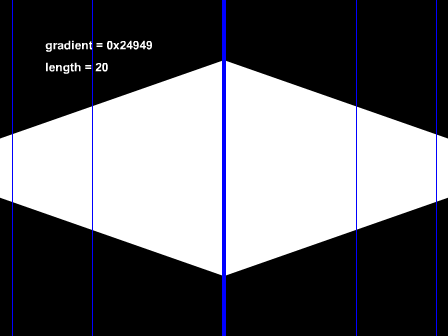

Encoding gradients

Since no lines in the display had a gradient of more than 1, we could draw slanted lines by incrementing or decrementing the screen pointer by the width of the screen between consecutive pixels. In this fashion, we could encode any arbitrary gradient that was between 0 and 1 by defining a binary string and a length.

Walking backwards/forwards

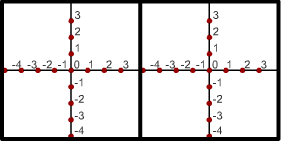

Above is an example of a mockup that was created in the planning of the back/front walking system. In order to achieve fluid motion, each cell of the maze is divided into 8 divisions along the x and y axes, as shown below. At any one time, a user can exist at any one of the red nodes and be facing one of 4 directions.

Two pointers are initialized and set to the starting positions for the right and left sides of the screen as shown below. These positions are hard-coded, as is the gradient for the envelope that is denoted by the grey line below. The system renders a total of 24 cell subsections (or a total of 3 cells) in front of the user, starting from the subsection the user is currently residing in. In order to create the illusion of perspective, cell subsections closer to the user are wider. (Specifically, the first 8 subsections have 3 pixels width each. The next 8 subsections are 2 pixels and the last 8 subsections are 1 pixel wide). Lines are drawn at the start of each new cell.

At each cell subsection, a routine is run to determine if a wall should be drawn in a specific direction, by masking the direction bit mask with the bit mask of the cell. If a wall is found in front of the user, the wall is drawn in front of user (by means of drawing a horizontal line), and no further 'exploration' of the maze is done. If a wall is not present at the side of the passage, a routine is run that checks the rooms at the side for a wall in front. If the wall is present, it shows up as a passageway going off to the side.

Continuous Turning

One of the biggest challenges was to implement a system of continuous turning that was reasonable simple to be rendered in a short amount of time. After haplessly around buildings and trying to decide the best way to draw pseudo-3D, a few drafts of the continuous turning drawings were created, before one was finally settled on. The challenges were to make it look convincingly 3D to the user, while making it programmatically simple and consistent with the backwards/forwards drawing mechanism.

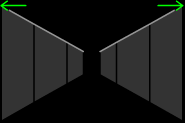

A user can only turn when he is in the middle of a cell (at x and y positions 0,0). From this perspective, there are 32 possible directions the user can be facing. After trying to animate the turning, we discovered that it was possible to reduce the animation to 4 unique frames. (Note that when the user is facing directions 0, 8, 16 or 24, the backwards/forwards drawing system would kick in.) These frames are displayed below.

Whilst there are only 4 frames shown, all the frames (except the cardinal directions) can be represented by one of the frames. For example between directions 0 and 8, direction 1-4 can be represented by the pictures in the same order. Directions 5-7 can be represented by a vertical mirror images of frame 3-1.

The drawing area in each frame is split into 3 walls that are drawn one by one, starting with the right most. Each wall is defined by a starting position and a gradient. The starting position itself is defined by a character offset and a bit mask, whilst each gradient was represented by a bit string and a length. Based on the turning direction, the relevant information is retrieved for the current configuration in order to draw the three walls.

Similar to the backwards/forwards drawing system, the routine checks for walls on each side of the each passageway as it traverses the area in front of the user. Based on that information, it chooses whether to draw walls in or not. Similarly, if front walls are found, the rendering down that passageway stops, and if a wall is missing, it checks the next room in order to find out of a passageway ought to be drawn in. In order to draw these passageways, the gradient of a wall parallel to the wall to be drawn is used to draw the line.

Accelerometer and movement

A good amount of work was put into detecting walking and turning motions using the accelerometer, but eventually it turned out to be too difficult to implement, and we eventually settled with using the accelerometers as a tilt sensor. On initialization, 16 values are read in for X and Y tilt. The average of these values is used as a calibration point for 0 tilt. On every subsequent iteration, the input from the accelerometer is recorded and the tilt is found. If the tilt in the Y direction is above a certain threshold, the game moves the user forward. If the user is not facing a cardinal direction, the user is rotated back to a cardinal position before the movement is executed. Similary, if there is tilt in the X direction, the game turns the user. If the user is not in the middle of a cell, the game moves the user towards the middle of his cell before turning. There are two speeds for moving and turning-- full speed and half speed. Each of these speeds is toggled at different amounts of tilt.

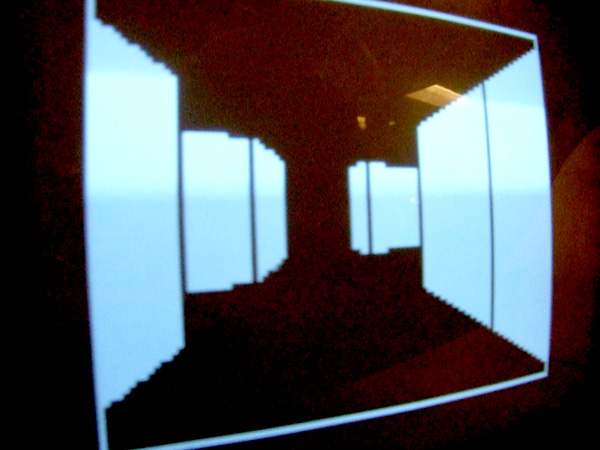

Results

We were very happy with the results we got. There were enough free cycles in the video code on the slave processor to achieve a good framerate for obtaining frames from the master without any visible tearing or jitter in the video. The 3D frame generation was fast enough for fluid motion, and not too fast which would have caused the motion to be too fast (since accelerometer data is read on every new frame generation). The sound generation was accurate to the frequencies specified when measured by the oscilloscope.

The game is intuitive and very much playable by everyone who tried the game. Since the entire project is battery powered, the game has a very nice portable feel to it. For the demonstraion, we loaded a typical maze into the system that showcases the typical images generated by the drawing system. The system will however work for any maze that can fit within the memory of the system.

In the current iteration, there is no instruction screen, menu screen because of the current limitations of the drawing system that leads to special restrictions. With more time, we would have been able to create a more compelling interface.

Whilst we were unable to create a walking-sensing system for the game, it turns out that the tilt sensor still was very effective and engaging to users. It also has the advantage that users will not knock into things in the real world if we had implemented a walking system.

Demo Video

Conclusions

We are very happy with the results of our project, and it had met most of our expectations. If we were to have more time, we would have made the product more polish such as menu screens, reset buttons and a level structure for the mazes. But for all intents and purposes, the game in its current iteration is indeed very entertaining.

Ethical Considerations

Throughout the course of this project, ethical considerations were kept in mind in accordance with the IEEE Code of Ethics. We maintained a safe, healthy work environment we building and testing all of the hardware. When soldering or handling any equipment to build the hardware, we were careful not to disturb or harm anyone or any property. We treated others in the lab with respect and politeness, and tried to cooperate with the other users of the shared resources in the lab, such as the soldering and lab equipment. We sought, accepted and offered help whenever needed in the lab. We were honest in making the claims of the performance and abilities of our project. We declared all of the cost considerations appropriately. We maintained integrity when writing code as to avoid any intellectual property issues. The final product was built securely to ensure safety and the well being of the public, and not cause any injury or harm to any person or property. Since the device must be held by the end user to play the game, all wires and components were attached in a safe manner to avoid electrical shock and puncture wounds or scrapes from wires, chips or edges. Any devices that may radiate energy must comply with Part 15 of the FCC rules and regulations, but since our project does not involve any wireless transmission or communication, this is not a major consideration.

Appendices

Program Listing:

Cost Details:

| Quantity | Item | Cost |

|---|---|---|

| 2 | Atmel Mega32 | $8 |

| 2 | Custom PC Board | $5 |

| 1 | Small Solder Board | $1 |

| 1 | 9V Battery | $2 |

| 12 | 1.5V C Battery | $1 |

| 1 | 9V Battery Board | sampled |

| 2 | MMA1260D Accelerometer | sampled |

| 1 | B/W TV | $5 |

| Total: | $46 |

Tasks

We both collaborated together on all aspects of the project.

Click to enlarge

Click to enlarge