ECE4760 Project: Tic Tac Toe with CMOS Camera

Paul Cheng (pc389)

Fei Sun (fs256)

Introduction

Using computer vision and rules for a ��perfect tic tac

toe game��, we have built a system that would never lose in a fair game (i.e.

marking 1 cell per turn) of tic tac toe.

The idea is simple, have a camera driven Mega644 system

that detects for changes on a Tic Tac Toe board, figure

out the next move, and not lose a single game ever! The motivation for this

project is to test the computer vision code we learned last semester and try to

incorporate it on a real time microcontroller. Also, most of the projects from

the previous years used the same camera module but did the calculation on a

desktop PC, this project will try to keep everything simple on the built in 4kB

memory and be as autonomous as it can.

Having a successful outcome is a great proof of concept

for running computer vision algorithms straight from the MCU and may open

opportunities up for interesting future projects.

High Level Design

Rationale

The rationale behind the idea of

this project is based on a computer vision algorithm on detecting changes in

images; the algorithm focuses on the different features between the still components

and dynamic components in consecutive images. Generally speaking, the idea of ��motion

detection�� is basically to subtract the ��static background�� from the current

frame. There are many applications developed based on this algorithm. However,

to implement it on the MCU with limited data memory provides a real challenge.

We designed our project around images that are 36 x 36 pixels. The reason why

we used this resolution is because we need at least two image frame to compute

the difference; through simple calculations, one can see that that using 2 x (36Byte

x 36Byte) =2.6kB of the 4kB data memory is approaching the MCU��s limit and any

images larger than that may affect the overall performance of the system

drastically.

Logical Structure

This project relies on two main

components �V computer vision code segmenting the user��s input, and code to

compute the next move given a particular situation. Below is a flowchart

depicting the overall process of our system:

High Level Flowchart of the Project

The high level flowchart of the

project first generates a ��background�� frame that is the average of the

previous frames, it then takes the current frame and computes the difference

between that and the background, and decides on the position of the new object

in order to return the next move made by the computer.

The background math involved in

segmenting the new object is simple: we divide the window into 9 regions, each

contains 12x12 pixels, we then take the difference between the current frame

and the background and sum the total pixel value inside each region; if the

pixel value is above a certain threshold, we say that the region is filled by

the user in the previous move and sets that cell in our program to a value

corresponding to an user input.

Once the user input is detected,

the program passes the current state of the game into the Tic-Tac-Toe (TTT)

algorithm, which will be explained in more detail later on in this report.

Hardware/Software Tradeoffs

Once again, the size restraint

on the Mega644 is the main bottleneck for this project. This constraint made us

down sample the already small 356x292 image from the imager. Lowering the

resolution into 36x36 arrays made it possible to store and run computer vision

codes on the MCU; however, losing 95% of the image was bad for the accuracy of

the system but was necessary due to the limited chip memory.

Program Details

After

the computer sets the user input, it computes its next move using the following

logic priority: look for a spot to win in one move, look for a spot such that

the user would win in one move, look for a spot such that the user would win in

two moves, look for a spot to win in two moves.

The

check for the computer to win in one move is done by using an exhaustive search

that creates a temporary TTT array with a probable next move in cell i, check for the winning condition, if

the result is not a win, move through the rest of the empty cells.

The

check for the user��s next move is very similar to the previous step, except the

algorithm now creates a temporary file with a probable user move in cell i, check for the winning condition, if

the result is not a loss, move through the rest of the empty cells.

The

next check looks for patterns that would lead to a win or a loss in two moves

(patterns that leads to a ��fork��, when there are more than one cell to win

with).

Main Functions Used:

void init_mcu(void) �V initializes all the variables

used in the program

void init_uart_tx(void) �V initializes the

registers for RS232 communication

void camera_write(unsigned int reg, unsigned char value) �V writes to the

camera��s build in registers using TWI

unsigned char camera_read(unsigned int reg) �V reads from the camera��s registers using TWI

void getnewFrame(void)/void getHistory(void) �V reads from the ADC��s

of the camera, compresses the image and saves the values in the appropriate

arrays

void calibarate(void)/void calibaratenew(void) �V computes the

average of the past 2 frames to obtain a static background image.

void cameraSetting(void) �V initializes the camera for image

acquisition.

void getImageTest(void) �V creates an 36 x 36 image with no

mean filter applied

void getCenterPoint(void) �V computes the center of moment

based on the pixel value of the image

void getDifference(void) �V computes the absolute

difference between the background and the new frame.

unsigned char computeBlock(void) �V computes the location of the

cell with the new object placed

char checkWin(unsigned char* T) �V checks for the status

of the game, possible outcomes are: ��3�� = computer wins, ��2�� = player wins, ��1��

= game continues, and ��0�� = tie.

char nextStep(unsigned char* T) �V computes the next step for the

computer. It first looks through immediate win/loss and then calls twoMove to

check for common patterns. If no result is given, it goes to the first

available cell.

char twoMove(unsigned char* T) �V looks for preset patterns and

returns the next step if found, otherwise returns a ��9�� = no solutions found.

Hardware Details

The

hardware setup for this system is relatively simple; it involves a C3088 board

with an OmniVision OV6620 CMOS camera attached to it. The output of the camera

is attached to portA on the STK500, with timing signals (HREF, VSYNC, PCLK) attached to portD, and I2C lines hooked to

the TWI lines on portB.

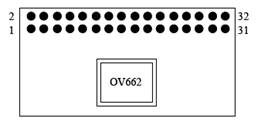

The

following is the pin layout (top view) on the C3088 module and where they

connect to on the STK500:

|

Pin Layout on the C3088 module |

|

Pin connection between C3088 and STK500

Reference

Our code uses the camera library

wrote by Ruibing Wang in codeVision. This was needed to write into the camera��s

registers to adjust the frame rate, white balance, video mode of the camera.

The name of this library is ��twi_master.h��.

The

algorithm for Tic Tac Toe is a combination of the

algorithm from ��Tic Tac Toe (to Death!)�� and the ��Strategy�� section from the

article on Tic Tac Toe from Wikipedia.

Things that Didn��t Work

The

original goal of this project was to make a automated

darts scoring system using computer vision algorithms. But due to the memory

and optical constraints of the camera, we decided that it was not feasible to

implement a system that only tracks darts in a 5cm x 5cm area.

Since

we did all our labs using the gcc compiler, we took the libraries written in

codeVision from the previous years and tried porting them to work under gcc.

However, we were not able to communicate with the camera through the TWI

interface under gcc, all the signals looked correct on the scope but failed to

write into the register. We then tested the original codeVision library and

confirmed that our camera was working properly. After a week of trying, we

ended up moving our development platform to codeVision and utilize the existing

working library in order to focus our effort on the main goal of our project.

Several

algorithms were implemented and tested before the change, namely the code that

finds the center of moment in the captured image (originally used to find the

bull��s-eye are the dart board), the code that finds the center of moment for

the regions with the greatest change (originally used to find the location of

the last thrown dart), and the code that computes the distance between 2

locations using pixel location and conversion factors.

We

originally planed to have the camera hovering over the drawing area, this

proved to be very error prone and it was very hard to reproduce the exact test

results. The finalized version has the camera pointed upwards at a patch of

clear tape, where we would draw on the other side using dry-erasable markers.

This eliminated the problem we had with setting up the camera before each game

and the uneven lighting/shadow problem and gave more accuracy to our result.

Results

According

to the Wikipedia article, the starting player wins ~66% of the possible 138

scenarios, loses ~31% of the time and ties ~2% of the time. However, if the

player follows the set of strategies used in our algorithm, the worst turn out

would be a tie and he/she should never lose.

The

speed of the execution is greatly influenced by the number of pixels used,

since the number of pixels is proportional to the speed of the computer vision

algorithm. In general the algorithm takes about 2 seconds to read in the image,

compute the changed region, and compute the next step it takes.

The

usability of this project should be intuitive to everyone who has played the

game before. Automatic sensing of the user input and outputting the next step

on to a LCD could not be included by the end of the project, but would be very

nice to have to make the project more autonomous.

Out

of all 9 possible steps, 7 of them are almost always correctly detected. Positions

0 and 6 sometimes goes into other cells, this should not affect the gaming

experience too much and should be tweaked if given more time.

Below

are some images we acquired throughout the project:

|

Image used to determine the

orientation of the camera |

The 3 x 3 grid seen by the camera

(filled with 9 blocks) |

|

|

Grid with 2 blocks on (background) |

Grid with 3rd block added on (current

frame) |

The result of the current frame

subtracted by the background |

Conclusion

Results vs. Expectations

We

spent a majority of our time trying to write a library for reading from and

writing to the registers on the OmniVision camera. This had to be done because

all the projects involving the cameras were done on codeVision and not gcc. We

spent about a week of our time trying to get it all done under gcc using the

camera library from the previous years written in codeVision but kept getting

status errors. We validated this with an oscilloscope but did not think we can

resolve this in time. At the end of the week we decided to go back to the

working codeVision library in order to have our project completed in time.

Due

to the field of view of the camera, we were only able to place the camera ~10cm

above the target (FOV = 5cmx5cm), anything further would result in noisy images

that stops the detection algorithm from working properly. This is mainly due to

the data storage constraint we have (4kB data on mega644), which made us

decrease the resolution of the stored image down to 36x36 pixels. If we were to

do this project again, we would have incorporated an external RAM module to

accommodate for the image data and perhaps improve the result of our algorithm.

The

outcome of this design proves the concept of programming computer vision

algorithms on an MCU; however, the constraints on the memory greatly limited

the scale of our project, making it a lot less applicable than we intended.

Intellectual Property

Considerations

The

only code we reused was the previously mentioned library for TWI interfacing

with the camera��s registers. This was done by Ruibing Wang and used in other

previous projects using the C3088 camera module.

No

code from the public domain was used for this project. All of our design were done using knowledge from the labs as well as

other courses.

Potential

patent opportunities for this project seem low, as the goal can probably be

solved more easily and robust using pressure sensors. However being able to

perform computer vision algorithms on a microcontroller level may provide a

good starting point for projects such as a low budget auto tracking camera.

Ethical Considerations

All

the lines on the IEEE Code of

Ethics were followed throughout this project. No one��s personal safety was

compromised during any part of this project. The only conflict of interest we

perceive with this project is that we are building a project that will at worst

tie the human player and may hurt the player��s feelings. Since the project��s

TTT algorithm is autonomous, no bribery will change the game��s result in

anyway. The goal of this project was to test out the computer vision algorithm

we learned last semester on real world applications; this follows directly with

line 5 �V improve the understanding of technology and its appropriate

applications and potential consequences. Since the goal of our project was not

fully defined at the beginning of the semester, we followed line 7 and took

note of all the constructive criticism we received with regards to the focus of

the project. This system is designed without bias towards any ethnic groups; it

will treat all players equally and play fair games. The relationship between

line 3, 6, 9 of the code and our project did not occur to us at any point, but

would have been resolved if they conflicted with project development. Lastly,

we would gladly help anyone with their project if they seek assistance.

Legal Considerations

None

of the code or parts used infringes any intellectual property laws. All code

developed by others are documented and the rest were all made and designed by

our team.

Appendix A �V Commented Program Listing

Appendix B �V Schematics

Appendix C �V Parts List

Appendix D �V Work Distribution

|

Paul

Cheng l TTT algorithm coding l Parts acquirement l Hardware connection l Final Report l Website constructing |

Fei Sun l

TWI library implementing l

Computer vision algorithm

coding l

Code integration l

Final Report |

References

Data sheets

OmniVision OV6620 optical sensor

Vendor sites

Code/designs borrowed from

others

"Customizable Virtual Keyboard" by Naweed Paya and Venkat Ganesh

Background sites

Tic-tac-toe - Wikipedia, the free encyclopedia

Paper Used

Maddalena, L.; Petrosino A., A Self-Organizing Approach to Background Subtraction for Visual Surveillance Applications, IEEE TRANSACTIONS ON IMAGE PROCESSING, VOL. 17, NO. 7, JULY 2008.