Results

Our design successfully identified and tracked human faces based on skin color, and it correctly drew the corresponding projections of a 3D cube onto a 2D surface.

The following videos demonstrate our running project. The first video shows the downsampled skin map with the centroid marked in red; we then switch the display to show the projected cube. Note that the user sometimes partially leaves the camera's field of view when moving from side to side. This decreases the area of the detected face region, which the system interprets as the user backing away. The second video shows the projected cube from a first-person view.
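The side-to-side artifact noted above can be reproduced with a small sketch. This is not our hardware implementation; it assumes only that the tracker reduces a binary skin map to a centroid and a pixel count (area). The helper names `centroid_and_area` and `shift_right`, and the tiny 6x8 frame, are hypothetical and chosen purely for illustration.

```python
def centroid_and_area(mask):
    """Return ((row, col) centroid, area) of the nonzero cells in a 2D mask."""
    area = 0
    row_sum = 0
    col_sum = 0
    for r, row in enumerate(mask):
        for c, is_skin in enumerate(row):
            if is_skin:
                area += 1
                row_sum += r
                col_sum += c
    if area == 0:
        return None, 0  # no skin pixels detected
    return (row_sum / area, col_sum / area), area


def shift_right(mask, n):
    """Shift the mask n columns right; cells pushed past the edge are lost."""
    width = len(mask[0])
    return [[0] * n + row[: width - n] for row in mask]


# Hypothetical 6x8 skin map with a 4x4 "face" at rows 1..4, columns 2..5.
frame = [[1 if 1 <= r <= 4 and 2 <= c <= 5 else 0 for c in range(8)]
         for r in range(6)]

full_centroid, full_area = centroid_and_area(frame)

# The user moves sideways until half of the face is outside the frame.
clipped_centroid, clipped_area = centroid_and_area(shift_right(frame, 4))

print(full_centroid, full_area)        # (2.5, 3.5) 16
print(clipped_centroid, clipped_area)  # (2.5, 6.5) 8
```

The face has not changed its distance from the camera, yet the measured area halves. Any depth estimate derived from area alone would read this as the user moving away.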

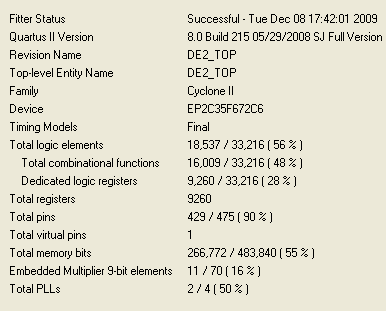

The following figure shows the FPGA resources our project required. We used approximately half the logic elements and memory.

We encountered some problems with the Terasic camera and its associated modules, particularly in setting the camera exposure time. We could not set the exposure time from our own module, so each time we had to program the FPGA with the .sof file supplied with the camera, set the exposure to our liking, and then reprogram with our own project. Furthermore, the supplied .sof file did not seem to match the source project included with the camera: recompiling the source files produced a different .sof file and different program behavior. We therefore always used the supplied .sof file. The project accompanying the camera was written for Quartus II v5.1, while we used Quartus II v8, which may account for these issues.

We also encountered an odd bug with the Nios II IDE. Whenever we compiled our project, the IDE reported an error in one of the automatically generated debug files. We could run our project regardless, and the error did not seem to affect its performance in any way. The exact error message was:

/cygdrive/c/Jasper/finalproj_12_8/template/software/projection_syslib/Debug/libprojection_syslib.a(alt_main.o) In function `alt_main': projection line 0 1260301440533 528

One further note on usability: others may have to alter the L1 and L2 values for their own use. We manually calibrated these values to our own skin colors under our lab’s lighting conditions. Our design accurately detected other skin tones, but we could not test every variety of human pigmentation. Dimmer or brighter lighting would also change the RGB content of each pixel. Our design is still usable by anyone seeking to recreate our experiment, but these values may need to be adjusted for best performance.
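To illustrate why lighting forces recalibration, here is a minimal sketch. It assumes, purely for illustration, that L1 and L2 act as lower and upper bounds on the red-minus-green difference of each pixel; this section does not restate the exact rule, so treat that as an assumption. The functions `is_skin` and `dim`, the pixel value, and the threshold numbers are all hypothetical and are not our calibrated values.

```python
def is_skin(pixel, l1, l2):
    """Assumed rule: a pixel is skin if l1 <= (R - G) <= l2."""
    r, g, _b = pixel
    return l1 <= (r - g) <= l2


def dim(pixel, factor):
    """Scale every channel by the same factor to mimic dimmer lighting."""
    return tuple(int(channel * factor) for channel in pixel)


# Hypothetical skin-colored pixel and illustrative thresholds.
pixel = (200, 150, 120)   # R - G = 50
l1, l2 = 30, 80

bright = is_skin(pixel, l1, l2)              # True: 50 lies in [30, 80]
dimmed = is_skin(dim(pixel, 0.5), l1, l2)    # False: R - G drops to 25
retuned = is_skin(dim(pixel, 0.5), 15, l2)   # True after lowering L1

print(bright, dimmed, retuned)
```

Under this assumed rule, halving the light level halves the channel difference, so the same skin pixel falls below the lower bound until L1 is lowered. A similar effect would be expected with any threshold defined on raw RGB values.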