Introduction

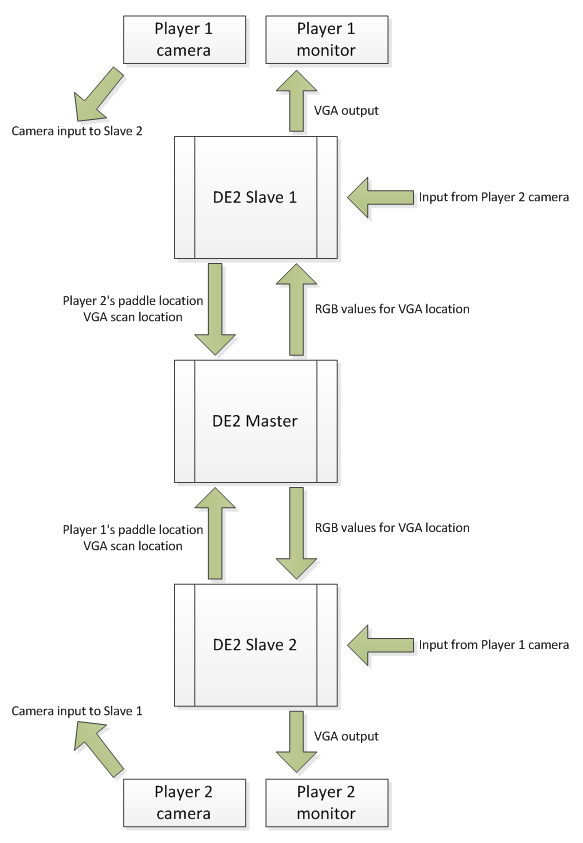

POTION is a motion-controlled game system that allows two players to battle head-to-head or one player to compete against AI for ultimate ping pong glory. For the two-player setup, two video feeds from consumer digital cameras are each separately fed into a pair of slave DE2 boards. These inputs are primarily used to detect objects held in players' hands to represent paddles. Additionally, each of the slave DE2 boards drives a display output to a separate monitor, in which the camera feed of the opposing player is shown in a picture-in-picture window embedded within the game graphics. The game software runs on a third DE2 board that acts as a master, which is connected to the two slave boards via GPIO and controls graphics as well as arbitrates game state. The single-player case requires only a single camera, monitor, and DE2 slave.

High Level Design

Rationale and Sources

The motivation for this project comes from our mutual love for table tennis, as well as from having seen a number of motion-controlled video games come into popularity in recent years. All three of the major game consoles today support some type of motion input, either natively or through an add-on. We wanted to create this sort of immersive entertainment experience ourselves, especially since an FPGA provides an ideal, highly parallelizable platform for fast object tracking in real time. Additionally, we stumbled across the addictive Flash-based game Curveball, and we quickly realized that this would be an ideal model for a multiplayer motion-based game that is easy to learn yet challenging to implement elegantly.

Features

Object Tracking:

We decided that color object tracking would be the most feasible way to implement motion controls. We had previously considered implementing true feature detection to detect a unique arbitrary object using a MOPS-based or SIFT-based feature descriptor and thereafter querying an on-chip database, but there simply is not enough logic on the onboard Cyclone FPGA to implement this type of algorithm in parallel, just as there is not enough time to perform this iteratively. We settled on tracking the largest bright green object in the camera's field of view, as this color is strikingly absent from our lab. Thus, we have made large green paddles for players to use to control the game (as long as they are not wearing bright green clothing). Note, however, that our detection code will work with any color as long as we can ensure its scarcity, so it is possible that one may opt to track a sterile, grayish hue in a verdant environment teeming with life.

Game Mechanics and AI:

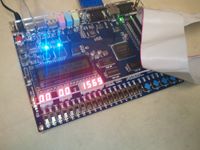

The ball moves back and forth at a constant velocity along the z-axis (depth) inside a narrow tunnel. Each player operates a paddle that is at either end of the tunnel. If the ball hits the paddle, it bounces backwards at the same speed toward the other player's paddle, so this is pretty much pong in 3D. To add some more excitement, an x or y velocity is added to the ball depending on where on the paddle it hits. Finally, the main feature of the game mechanics is that if you are moving the paddle at a fast speed when you hit the ball, you can hit a curveball that accelerates toward the sides of the tunnel back to the other player. If a player is unable to hit the ball when it reaches his side, then that player loses. Time and score are displayed on the 7-segment LEDs.

There is also a very basic AI implemented for the game that can be toggled using a switch if a second player is not available. The AI will track the ball perfectly and always be able to hit it back to the player. Essentially, it has godmode on.

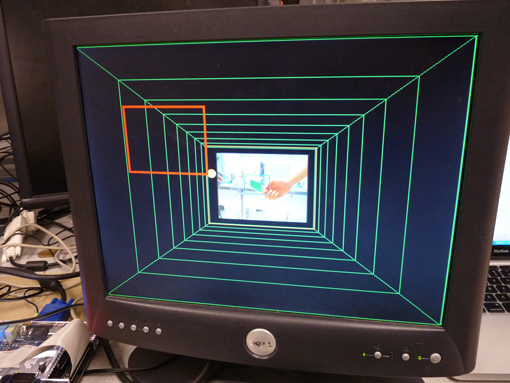

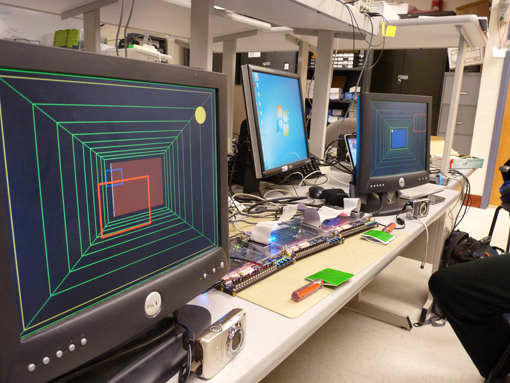

Graphics:

In the background is a set of disproportionately sized rectangles nested inside one another, creating the illusion that you are looking into the tunnel. As the ball goes deeper into the tunnel, or further along the z-axis, it becomes smaller and moves more toward the center, so that it actually looks like it is getting farther away. Another rectangle is drawn at the same depth as the ball but as wide as the tunnel, to further enhance the 3D feel. The player's paddle is a large red rectangle and the opponent's paddle is a smaller blue rectangle at the other end of the tunnel.

Picture-in-Picture:

Within each monitor, the camera feed of the opposing player is also downsampled by a factor of 4 (in height and width) and displayed in the very center of the screen to create the effect of seeing the adversary in the video game display itself at the end of the tunnel. Of course, this idea is borrowed from our experiences with webcam chatting through popular services such as Skype and Chatroulette. It is very interesting to see the opponent's paddle superimposed on this image, and it shows just how well our object tracking works.

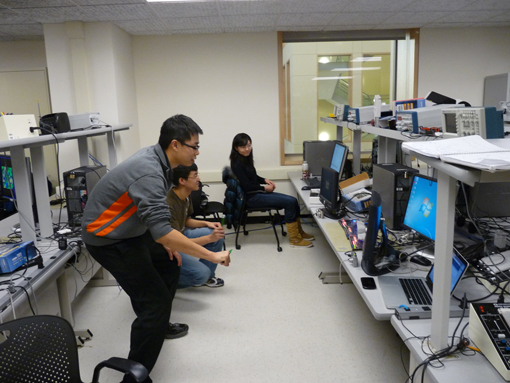

Logical Structure

We describe the high-level logical structure for a two-player head-to-head game. We connect a commercial digital camera's analog video output to each of the slave DE2 boards through the composite video input. Each of the slave DE2 boards converts the YCbCr video into RGB and then performs object detection. Each sends the coordinates of the locally detected paddle, along with the current location at which it is drawing, to the central master DE2 board via GPIO connections. The master DE2 board uses this information to calculate where to draw all four paddles and both balls (each player sees his own paddle as well as his opponent's detected paddle, along with the ball) and relays this information back to the slave boards in the form of binary red, green, and blue values (we have a total of eight possible colors) as the VGA controllers in the slaves move from pixel to pixel.

Block diagram for three-board solution

Note from the diagram that each slave DE2 board is actually collecting the video input from one player yet drawing the video output for the opponent player in the two-player case, as all of the picture-in-picture information is kept local to the slave boards. Additionally, each DE2 board can independently draw the background tunnel graphics of the game, as this does not alter dynamically and needs no intervention or arbitration from the master.

In a single-player situation, only a master-slave relationship is required from just a pair of DE2 boards, and the picture-in-picture of the lone player would be his own captured image.

Hardware/Software Tradeoffs

SDRAM is a very precious resource, and is in fact the reason why our two-player scheme needs three boards and our single-player scheme needs two boards. The NIOS II and YCbCr-to-RGB conversion both require SDRAM, and we did not find an efficient method to multiplex these resources without causing severe video artifacts and timing errors. Additionally, this SDRAM was used for object detection by each of the DE2 board slaves as it makes for an efficient frame buffer. Finally, we chose to pre-calculate the background graphics, as well as the paddle and ball shapes in a hardware lookup table as software rendering would be too expensive to do in real time.

Hardware

Detection Scheme

Our goal here was to get fast, yet accurate object tracking. We noticed very early on that we could not simply track single pixels of the target color since this would introduce too much jitter even with some hysteresis added on. In fact, we desired to track the largest object of some color so that smaller objects in the background that happen to be a similar color would not disturb the gameplay.

The algorithm here is as follows: we iterate through each line of a captured frame and look for the longest horizontal line of the desired color. If we approximate the object to be a circle (or a regular polygon-like shape), this would then give its equator. Then, the center of this horizontal line should give approximately the center of the detected object.

Our actual hardware works by reading as its video source the buffered frames stored in SDRAM (after the YCbCr-to-RGB conversion). To detect bright green as its target color, we set the requirement that a pixel must be at least halfway green on the intensity scale, while less than halfway blue and less than a quarter red. These values were determined after extensive experimentation. We allow a higher threshold for the blue as we found that the commercial digital cameras we used happened to assign strong blue tones to the picture overall, especially as they automatically respond to the perceived lighting conditions of the room.
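The color test described above can be sketched in C as follows. This is only an illustration of the thresholds, not the project's hardware description: the function name `is_target_green` and the 8-bit channel width are assumptions, and the real design applies the comparison to whatever bit width the video pipeline provides.

```c
#include <stdbool.h>
#include <stdint.h>

/* Assumed 8-bit channels (0..255); the bit width on the board may differ. */
#define CHANNEL_MAX 255u

/* Returns true when a pixel counts as "bright green": green at least halfway
 * up the intensity scale, blue below halfway, red below one quarter. */
bool is_target_green(uint8_t r, uint8_t g, uint8_t b)
{
    const unsigned half    = (CHANNEL_MAX + 1u) / 2u;  /* 128 */
    const unsigned quarter = (CHANNEL_MAX + 1u) / 4u;  /* 64 */
    return g >= half && b < half && r < quarter;
}
```

Tracking a different color would only require changing the three comparisons.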

An important optimization is that we decouple our iteration through the frame from the line-by-line scanning performed by the VGA controller. This eliminates cross-interference and gives us the freedom to explore detection strategies with hysteresis that take longer to compute per frame than it takes the VGA controller to scan a frame (although we ended up using a much faster approach). The catch is that if the detection iterator is out of phase with the scan, the contents of the frame buffer can change before we finish iterating through a frame, which introduces unwanted jitter and detection error. We therefore do not run detection on the frame buffer directly. Instead, we first reduce it to a downsampled binary intensity matrix, where a high bit denotes that the target color was detected within the block corresponding to that sub-sampled point, and run detection on that matrix. We downsample by a factor of 8: we use only every 8th VGA scanline, and within each such scanline we traverse segments of 8 pixels and store a 1 in the intensity matrix if at least half of those pixels match the target color requirements.
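A software sketch of the downsampling step may make the layout easier to picture. It assumes a 640 x 480 frame and a hypothetical per-pixel `match` array that already holds the result of the color test; the hardware instead fills the matrix on the fly, so this models the data layout only.

```c
#include <stdint.h>

#define FRAME_W 640
#define FRAME_H 480
#define FACTOR  8
#define GRID_W  (FRAME_W / FACTOR)  /* 80 columns */
#define GRID_H  (FRAME_H / FACTOR)  /* 60 rows */

/* match[y * FRAME_W + x] is nonzero when pixel (x, y) passed the color test.
 * grid[gy * GRID_W + gx] receives 1 when at least half of the 8 pixels in
 * that segment of the sampled scanline matched, else 0. */
void build_intensity_matrix(const uint8_t *match, uint8_t *grid)
{
    for (int gy = 0; gy < GRID_H; gy++) {
        /* Only every 8th scanline is examined. */
        const uint8_t *line = match + (gy * FACTOR) * FRAME_W;
        for (int gx = 0; gx < GRID_W; gx++) {
            int hits = 0;
            for (int i = 0; i < FACTOR; i++)
                hits += (line[gx * FACTOR + i] != 0);
            grid[gy * GRID_W + gx] = (uint8_t)(hits >= FACTOR / 2);
        }
    }
}
```

The resulting matrix has only 80 x 60 = 4800 bits, which is small enough to traverse at leisure without touching the live frame buffer.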

As we traverse the intensity matrix independently of the VGA scan, we keep track of the center of the longest horizontal run of consecutive high bits, and we call this the center of the detected object for the current traversal. This does not necessarily correspond to our output detection location, however, as we apply hysteresis to prevent jitter: we only adopt it as the output detection location if it is at least a threshold distance away from the previous output detection location. A pixel distance of 16 was found empirically to give a good tradeoff between preventing excessive jitter and providing reasonably smooth movement.
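The search for the equator can be sketched as a scan for the longest run of high bits in the intensity matrix. The `Run` structure and the function name are hypothetical, and the 80 x 60 matrix size assumes a 640 x 480 frame downsampled by 8; results are reported in full-resolution pixels.

```c
#include <stdint.h>

#define GRID_W 80
#define GRID_H 60
#define FACTOR 8

typedef struct {
    int x;      /* center of the run, in full-resolution pixels */
    int y;      /* scanline of the run, in full-resolution pixels */
    int width;  /* length of the run, in full-resolution pixels */
} Run;

/* Finds the longest horizontal run of consecutive high bits. */
Run find_longest_run(const uint8_t *grid)
{
    Run best = {0, 0, 0};
    for (int gy = 0; gy < GRID_H; gy++) {
        int start = 0;  /* first cell of the run currently being measured */
        for (int gx = 0; gx <= GRID_W; gx++) {
            if (gx < GRID_W && grid[gy * GRID_W + gx])
                continue;               /* still inside a run */
            int len = gx - start;       /* run covers cells [start, gx) */
            if (len * FACTOR > best.width) {
                best.width = len * FACTOR;
                best.x = (start + gx) * FACTOR / 2;
                best.y = gy * FACTOR;
            }
            start = gx + 1;
        }
    }
    return best;
}
```

If the matrix contains no high bits, the returned width is 0, which a caller can treat as "nothing detected".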

Additionally, we must take care not to update the output detection location at all if the object of interest moves out of the field of view of the camera. We maintain that a threshold of 24 pixels is the minimum width of the equator in order for the output detection location to update. Otherwise, the output freezes and assumes that it does not see anything of interest. This also gives a tighter maximum distance on how far a player can stand away from the camera and still have his paddle detected, although we found this value to be suitable for the distances we were standing away from the screen, usually below 2 [m].
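The two thresholds (16 pixels of movement, 24 pixels of minimum equator width) combine into a small update rule, sketched below. The names are hypothetical, and the distance metric is an assumption: the text does not say how distance is measured, so this sketch uses the larger of the x and y differences.

```c
#include <stdlib.h>

#define MOVE_THRESHOLD 16  /* pixels of movement needed before the output changes */
#define MIN_WIDTH      24  /* pixels of equator width needed to accept a detection */

typedef struct { int x; int y; } Point;

/* Returns the new output location, given the previous output and the
 * candidate center found in the latest pass over the intensity matrix. */
Point update_output(Point prev, Point candidate, int candidate_width)
{
    if (candidate_width < MIN_WIDTH)
        return prev;  /* nothing large enough in view: freeze the output */

    /* Assumed metric: the larger of the x and y differences. */
    int dx = abs(candidate.x - prev.x);
    int dy = abs(candidate.y - prev.y);
    int dist = dx > dy ? dx : dy;

    return dist >= MOVE_THRESHOLD ? candidate : prev;
}
```

With a downsampling factor of 8, the 24-pixel minimum corresponds to a run of three cells in the intensity matrix.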

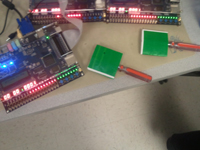

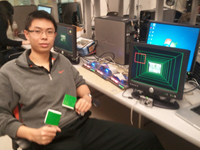

Paddles

These objects used for detection are still technically hardware. We simply taped large green paper squares (approximately 10 [cm] by 10 [cm]), printed using colored printer ink, to screwdrivers that serve as the handles. These work unbelievably well compared to other objects that we thought would be more easily detectable. We do note that we tried to make PlayStation Move-style paddles earlier by putting 1 [Watt] LEDs inside ping pong balls (what better object!). However, we could not find a reasonable intensity: putting too much current through the LEDs made them appear to the cameras as pure white (saturating the Bayer filters), while putting too little current through made them appear to the cameras... still as white, since that is the color of the ping pong balls themselves. We were unable to find a happy medium with this setup, and it also required battery power, so we went forward with our aforementioned poor man's solution.

Do note that the other reason we tried using LEDs was that we had initially experimented with the Terasic cameras. However, the images obtained from them were terribly underexposed and totally unusable with anything less than a bright LED light source. This actually made detection extremely easy, except that the camera also could not distinguish between light sources of different colors since everything saturated into white, including the fluorescent lighting in the lab. Additionally, players looked terrible in the picture-in-picture window as they were horribly dark. Hence, we switched to using commercial digital cameras.

Graphics

The goal for the graphics is to generate a clean, nice-looking game while occupying as little space as possible. The logic also needs to be fast enough to keep up with the 25.2 MHz rate at which the VGA controller scans across the screen. A major obstacle was that, to give the game a 3D feel, computing the position and size of any object on the screen requires multiple 3D projection calculations, each of which requires a multiply and a divide. Both of these operations are extremely difficult to implement on the FPGA. To overcome this challenge, we did most of the graphics using lookup tables, which are stored in M4K blocks. Each pixel color is generated on the fly according to the current VGA scan position, with values coming from either the lookup tables or combinational logic.

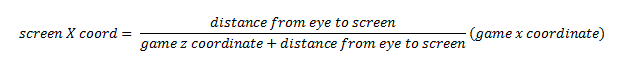

The background uses a lookup table with 1 bit for every pixel on the screen, for a total of 640 x 480 = 307200 bits, or 19200 16-bit words. The ball uses two lookup tables. The first provides the radius of the ball, given its z position. The second is indexed by the radius of the ball and the VGA y-axis scan position, and provides the x-axis pixel distance from the edge of the ball to the center of the ball. The rectangle that is drawn at the same depth as the ball is referred to as the depth tracker. We actually computed the position of this tracker using the 3D projection formula, because there was no good way to tabulate it without using too much memory, and only a few projection calculations are needed since we only have to calculate one corner of the rectangle.
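As a quick check of the arithmetic, the background table can be modeled as an array of 19200 16-bit words addressed by pixel index. The function name and the bit ordering within each word (bit 0 holds the lowest-numbered pixel) are assumptions made for this sketch; the M4K layout in the actual design may differ.

```c
#include <stdint.h>

#define SCREEN_W 640
#define SCREEN_H 480
#define BG_WORDS (SCREEN_W * SCREEN_H / 16)  /* 307200 bits / 16 = 19200 words */

/* Returns the background bit (0 or 1) for scan position (x, y). */
int background_bit(const uint16_t *lut, int x, int y)
{
    unsigned pixel = (unsigned)y * SCREEN_W + (unsigned)x;
    unsigned word  = pixel >> 4;   /* which 16-bit word holds this pixel */
    unsigned bit   = pixel & 15u;  /* assumed: bit 0 is the lowest-numbered pixel */
    return (int)((lut[word] >> bit) & 1u);
}
```

For example, pixel (37, 3) has index 3 * 640 + 37 = 1957, which lands in word 122 at bit 5.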

Here is our projection formula for x (y is similar):

To draw the two paddles and the tracker, we checked whether the VGA scan position lies along the bounds of the rectangle, and asserted a signal if it does. To enforce depth ordering, so that objects in front cover objects behind them, we generated a presence signal for each object at the current pixel and multiplexed the output color, prioritizing objects in front.
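The priority multiplexer can be sketched as a chain of checks from front to back. The red and blue paddle colors come from the description of the graphics; the ball and tracker colors, the exact ordering, and all names here are assumptions for illustration.

```c
#include <stdbool.h>
#include <stdint.h>

/* 3-bit colors: one bit each for red, green, and blue (eight colors). */
enum { BLACK = 0x0, BLUE = 0x1, GREEN = 0x2, RED = 0x4, WHITE = 0x7 };

/* Presence signals for the current pixel, one per drawable object. */
typedef struct {
    bool own_paddle;  /* local player's paddle outline (nearest) */
    bool ball;
    bool tracker;     /* depth tracker rectangle */
    bool opp_paddle;  /* opponent's paddle outline (farthest) */
    bool background;  /* tunnel lines from the background table */
} Hits;

/* Picks the color of the frontmost object present at this pixel. */
uint8_t pixel_color(Hits h)
{
    if (h.own_paddle) return RED;
    if (h.ball)       return WHITE;  /* ball color is an assumption */
    if (h.tracker)    return GREEN;  /* tracker color is an assumption */
    if (h.opp_paddle) return BLUE;
    if (h.background) return WHITE;
    return BLACK;
}
```

In hardware the same chain would be a priority-encoded multiplexer evaluated combinationally for every pixel.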

Picture-in-Picture

To create the scaled-down version of the picture, we piggy-backed off the VGA controller's read requests to the SDRAM. For every 4 pixels and every 4 lines, using the VGA controller's X and Y scan coordinates, we wrote the pixel colors into SRAM, scaling the address to the size of the picture-in-picture window. Then, when the VGA controller requests a pixel inside the picture-in-picture window and no game objects are in front, we compute its position in the SRAM and give it to the VGA controller. The SRAM had to be dual ported for this to work, and we simulated the dual porting by running the SRAM at twice the VGA clock and then reading and writing on alternate cycles. While this may sound simple, the timing between the SDRAM, VGA controller, SRAM, and various game elements was extremely tricky to implement without causing serious video artifacts.
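The address arithmetic for the picture-in-picture buffer might look like the sketch below. It assumes a 640 x 480 screen, a 160 x 120 window centered on it, and row-major storage in SRAM; the function names are hypothetical, and the alternating read/write cycles of the SRAM are not modeled.

```c
#include <stdbool.h>
#include <stdint.h>

#define SCREEN_W 640
#define SCREEN_H 480
#define SCALE    4
#define PIP_W    (SCREEN_W / SCALE)        /* 160 */
#define PIP_H    (SCREEN_H / SCALE)        /* 120 */
#define PIP_X0   ((SCREEN_W - PIP_W) / 2)  /* 240: left edge of the window */
#define PIP_Y0   ((SCREEN_H - PIP_H) / 2)  /* 180: top edge of the window */

/* Write side: returns true and sets *addr when scan position (x, y) is one
 * of the sampled pixels (every 4th pixel on every 4th line). */
bool pip_write_addr(int x, int y, uint32_t *addr)
{
    if (x % SCALE != 0 || y % SCALE != 0)
        return false;
    *addr = (uint32_t)((y / SCALE) * PIP_W + x / SCALE);
    return true;
}

/* Read side: returns true and sets *addr when scan position (x, y) falls
 * inside the centered picture-in-picture window. */
bool pip_read_addr(int x, int y, uint32_t *addr)
{
    if (x < PIP_X0 || x >= PIP_X0 + PIP_W || y < PIP_Y0 || y >= PIP_Y0 + PIP_H)
        return false;
    *addr = (uint32_t)((y - PIP_Y0) * PIP_W + (x - PIP_X0));
    return true;
}
```

Under these assumptions the buffer holds 160 x 120 = 19200 pixels, and a pixel written from scan position (8, 4) is read back when the scan reaches (242, 181).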

Software

A NIOS II processor was instantiated on the FPGA to compute game mechanics. The processor uses the GPIO ports to output the position of the ball, and has for input the positions of the paddles. There is also an output for the AI's position for single-player mode. At the start of the game, the ball is initialized in the center of the screen in front of the left player, with a set z velocity towards the right player.

The game runs inside an infinite loop, where each iteration of the loop is considered a step in the game. In the loop, the paddle positions are first read. Then the step function is called to compute the next position of the ball and the win/lose conditions. If the game is in single-player mode, the position of the AI paddle is set equal to the position of the ball so that it never loses. The ball's ideal next position is then computed without checking for boundaries. A boundary check is done against the tunnel walls, and the ball is deflected if it is outside the boundary. After that, a collision check is done on both players' paddles to see if the ball has reached a player, and whether the player was able to hit it back. If a player fails to hit the ball, then the game over function is called to pause the game until the start button is pressed. If the player does hit the ball, the offset of the ball from the center of the paddle is used to compute an x or y velocity to add to the ball. In addition, the difference between the paddle's last position and current position is used to add a further x or y velocity, opposite in direction and proportional in magnitude to the difference vector, to create the curveball mechanics. The acceleration is reset on each paddle collision while the velocity is added, giving the game mechanics a more realistic feel. Finally, the finalized next position of the ball is stored. In the last step, the GPIO outputs are updated to change the position of the ball and AI paddle.
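One step of the loop can be sketched in C along the following lines. This is not the project's game code: every constant, type, and function name is hypothetical, the GPIO reads and writes are omitted, and the way the acceleration is reset on a hit (set proportional to the paddle motion) is an assumption, since the text only says that it is reset.

```c
#include <stdbool.h>
#include <stdlib.h>

/* All sizes and gains are illustrative assumptions, not project values. */
#define HALF_W      100  /* tunnel walls at x = -100 and x = +100 */
#define HALF_H       75  /* tunnel walls at y = -75 and y = +75 */
#define DEPTH       256  /* left paddle at z = 0, right paddle at z = DEPTH */
#define PADDLE_HALF  20  /* paddle reaches this far from its center */
#define OFFSET_DIV    8  /* scales the hit offset into added velocity */
#define CURVE_DIV     4  /* scales paddle motion into the curve terms */

typedef struct { int x, y; } Vec2;
typedef struct { int x, y, z, vx, vy, vz, ax, ay; } Ball;
typedef enum { PLAYING, LEFT_LOST, RIGHT_LOST } Status;

/* Single-player AI: the paddle simply sits on the ball. */
Vec2 ai_paddle(const Ball *b)
{
    Vec2 p = { b->x, b->y };
    return p;
}

/* Reflects one coordinate off walls at -limit and +limit. */
static void bounce(int *pos, int *vel, int limit)
{
    if (*pos > limit)  { *pos = 2 * limit - *pos;  *vel = -*vel; }
    if (*pos < -limit) { *pos = -2 * limit - *pos; *vel = -*vel; }
}

/* Returns false on a miss; on a hit, sends the ball back with added velocity. */
static bool try_hit(Ball *b, Vec2 pad, Vec2 pad_prev)
{
    if (abs(b->x - pad.x) > PADDLE_HALF || abs(b->y - pad.y) > PADDLE_HALF)
        return false;
    int mx = pad.x - pad_prev.x, my = pad.y - pad_prev.y;  /* paddle motion */
    b->vz = -b->vz;
    /* Offset term plus a term opposite to the paddle motion. */
    b->vx += (b->x - pad.x) / OFFSET_DIV - mx / CURVE_DIV;
    b->vy += (b->y - pad.y) / OFFSET_DIV - my / CURVE_DIV;
    b->ax = mx / CURVE_DIV;  /* assumed form of the acceleration reset */
    b->ay = my / CURVE_DIV;
    return true;
}

/* Advances the ball by one game step and reports whether anyone lost. */
Status step(Ball *b, Vec2 left, Vec2 left_prev, Vec2 right, Vec2 right_prev)
{
    b->vx += b->ax;  b->vy += b->ay;
    b->x += b->vx;   b->y += b->vy;   b->z += b->vz;  /* ideal next position */
    bounce(&b->x, &b->vx, HALF_W);                    /* tunnel wall checks */
    bounce(&b->y, &b->vy, HALF_H);
    if (b->z <= 0) {
        b->z = 0;
        if (!try_hit(b, left, left_prev)) return LEFT_LOST;
    } else if (b->z >= DEPTH) {
        b->z = DEPTH;
        if (!try_hit(b, right, right_prev)) return RIGHT_LOST;
    }
    return PLAYING;
}
```

In single-player mode the caller would pass `ai_paddle(&ball)` as the right paddle before each step, which is why the AI never misses.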

Note that we do use the buttons on the master board to start a new round each time a player has lost.

Results

The detection scheme suffers from minimal jitter and tracks our paddles up to approximately a distance of 2 [m] away from the camera. The paddles are also tracked consistently even for large movements, as a player can move the paddle rapidly from one side of the screen to another and still have it tracked quickly enough to make the game feel responsive to the input.

Note that our algorithm is robust and putting smaller green objects such as green marker caps into the camera's field of view will not disturb it. Additionally, we noticed that while playing, sometimes a player may get excited and not notice that he is moving his paddle outside of the camera's field of view. But since our detector freezes the paddle location if no object of a suitable size is detected, the paddle drawn on the screen does not make a huge jump or flicker. Additionally, this sort of feedback easily helps the player realize he must move back into the camera's field of view.

The graphics for the game exceeded expectations. The logic for computing the color of each pixel finished much faster than the VGA scan speed required, so there were no glitches or video artifacts in the game objects. There are, however, rare problems with the picture-in-picture where the opponent is displayed at the other end of the tunnel, but these were unavoidable due to the poor quality of the TV video decoder code and documentation available to us. We are actually impressed with how well the picture-in-picture worked.

Preparing our three-board system; cameras are not yet online, hence the blank picture-in-picture windows

Conclusions

POTION, while rather complex to set up, is fun and very easy to learn to play. The drawing of the local player's paddle certainly helps to guide player responses, and the drawing of the opponent player's paddle makes the game much more personal and social. The detection code is very fast and tracks in real time. In fact, it tracks our green paddles quite smoothly, with minimal jitter thanks to the added hysteresis. Additionally, thanks to the improved VGA code provided by Skyler Schneider and very fast graphics computation, there are minimal glitches and video artifacts.

The simplest add-on to our project would be to allow sound and music to be played out of the audio port. This could be in the form of music played by storing audio data on a SD card and using the built-in SD card reader. On top of this, it would be very interesting to explore how to overlay sound effects over the music, such as paddle hits and ball deflections against the tunnel walls.

Another add-on to the detection would involve experimenting further to see if there is in fact an easy way to track LED sources without saturating the camera. Then, one could imagine a scheme where one or more of several LEDs (of different colors) are lit based on that color or color combination being scarce in the captured scene. This concept is borrowed from the operation of the PlayStation Move motion controller.

However, even more interestingly, one could explore how to transmit the game data, including picture-in-picture data, and receive graphics updates through the ethernet port. This could then evolve into something of a client-server model, where the master now becomes a server (accessed from behind a router) that calculates a central game state for its clients, and also becomes a routing point for the two sets of picture-in-picture data from each end to fly past one another. This may in turn create the need for yet another audio feature, which is to take microphone input from a player and play it out to his opponent, and vice versa, as ethernet will permit players to truly be remote from one another. Such a configuration would be perfect for sessions with a trainer (one player would coach the other) or for sessions between bloodthirsty rivals (facilitate trash talking).

A final extension to this project would be to convert the graphics to an anaglyph so that players may wear red-cyan glasses to see the game environment in 3-D. This is because our gameplay perspective looks down a tunnel and has great potential to look believable in 3-D. The main challenge here would be to convert the picture-in-picture itself to an anaglyph as well, since converting the rest of the graphics should be as simple as changing the contents of the lookup tables.

In the course of crafting this game system, we used the TV decoder code from Altera, which very importantly has the YCbCr-to-RGB conversion that we need to display composite video from the cameras onto the VGA monitors, as well as run our detection code. Additionally, we used a NIOS II processor in our design, which is part of Altera's intellectual property. Also, we used a VGA control module from fellow ECE 5760 student Skyler Schneider. We did not use any other public domain code. Since our game is similar to the Curveball game, though it greatly improves upon it by adding motion control and picture-in-picture, we are not considering patent opportunities at this time.

Appendix

Code Listing

Slave Board Quartus Project: potion_slave.zip

Master Board Quartus Project: potion_master.zip

Game Program in C: potion_software.zip

Task Breakdown

Project Idea and Board-to-Board Protocol - Sean

Detection and Tracking - Sean

Picture-in-Picture - Both

Graphics - Yunchi

Game Code - Yunchi

References

Acknowledgements

We thank the following people for giving us inspiration and for helping us to design and build our project:

The unknown creators of the popular web game Curveball, whose gameplay model we emulate in our project.

Skyler Schneider for creating a greatly improved VGA controller module.

Herman Yang for allowing us to borrow his camera equipment.

And finally, Bruce Land for helping us to debug many complex and difficult aspects of our project.

Sean Chen, ECE '11

Canon PowerShot SD800 was our first love...

...but then we met its younger sister!