The purpose of our project, Speed Zero, is to automatically find the X-Y zero of a part in the CNC.

We developed a system that can automatically find the X-Y zero on a fixture plate in a CNC machine with limited user input.

Zeroing a CNC machine can be a tedious and involved process, and existing automated solutions are expensive. Our project aims to automate this process quickly and without breaking the bank. Manually zeroing can take an operator over 5 minutes on a bad day, and if a shop is making thousands of parts per year, this wasted time adds up. An automated zeroing system would allow the operator to place a fixture in the machine, start the zeroing program, and then work on something else while the device zeroes the machine. This would increase efficiency and reduce operator error.

Motivation

The motivation for this project comes from Jay’s experience on the Cornell Baja SAE project team. Jay is one of the five students certified to use the CNC machine in Emerson machine shop and he is called upon quite frequently to CNC parts for the team. He has a lot of experience manually zeroing parts in the machine and was annoyed at how tedious the process can be. Jay decided that it was time to put his ECE education to work and come up with a better way to solve this problem.

Logical Structure

The basis of our design comes from the fact that machines and computers are good at doing the same thing over and over again.

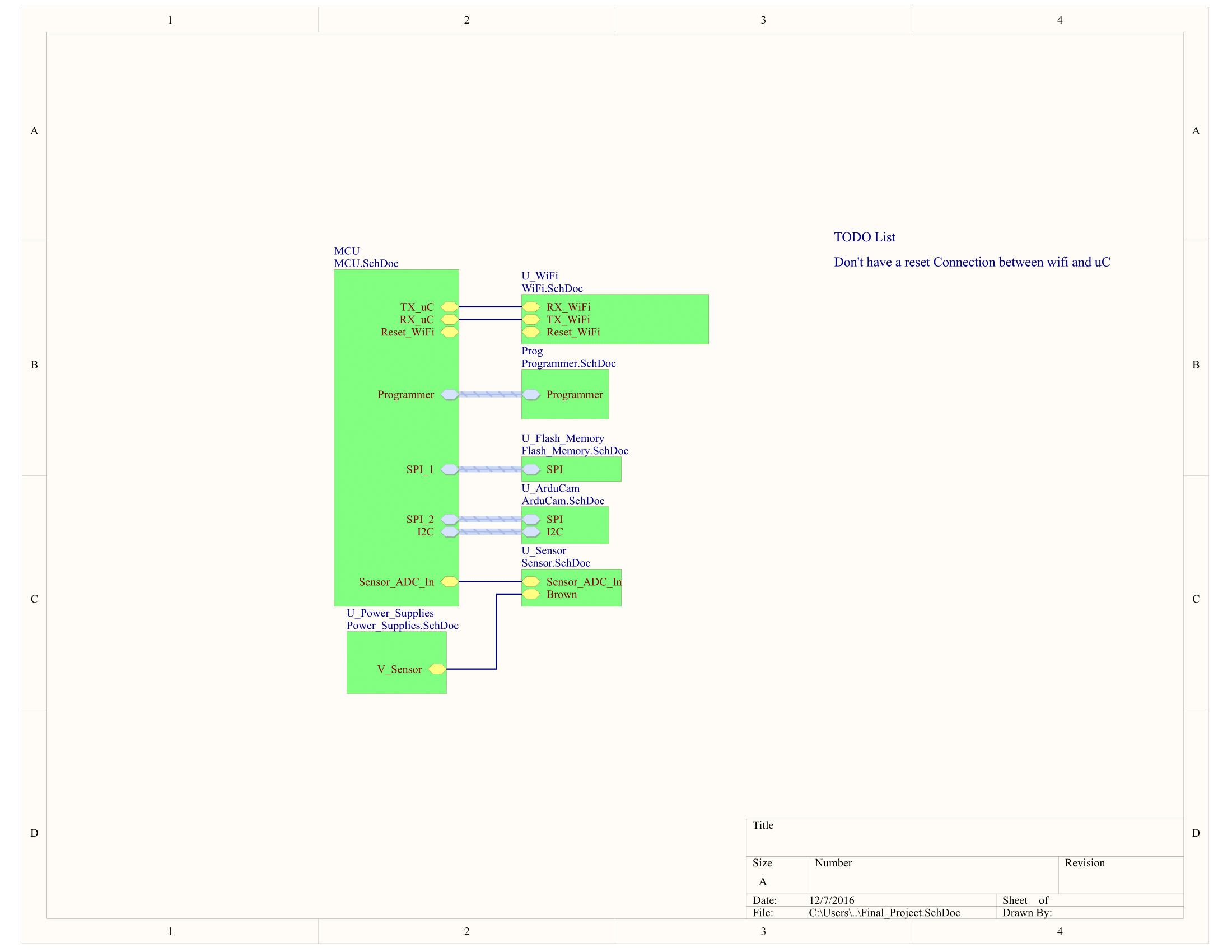

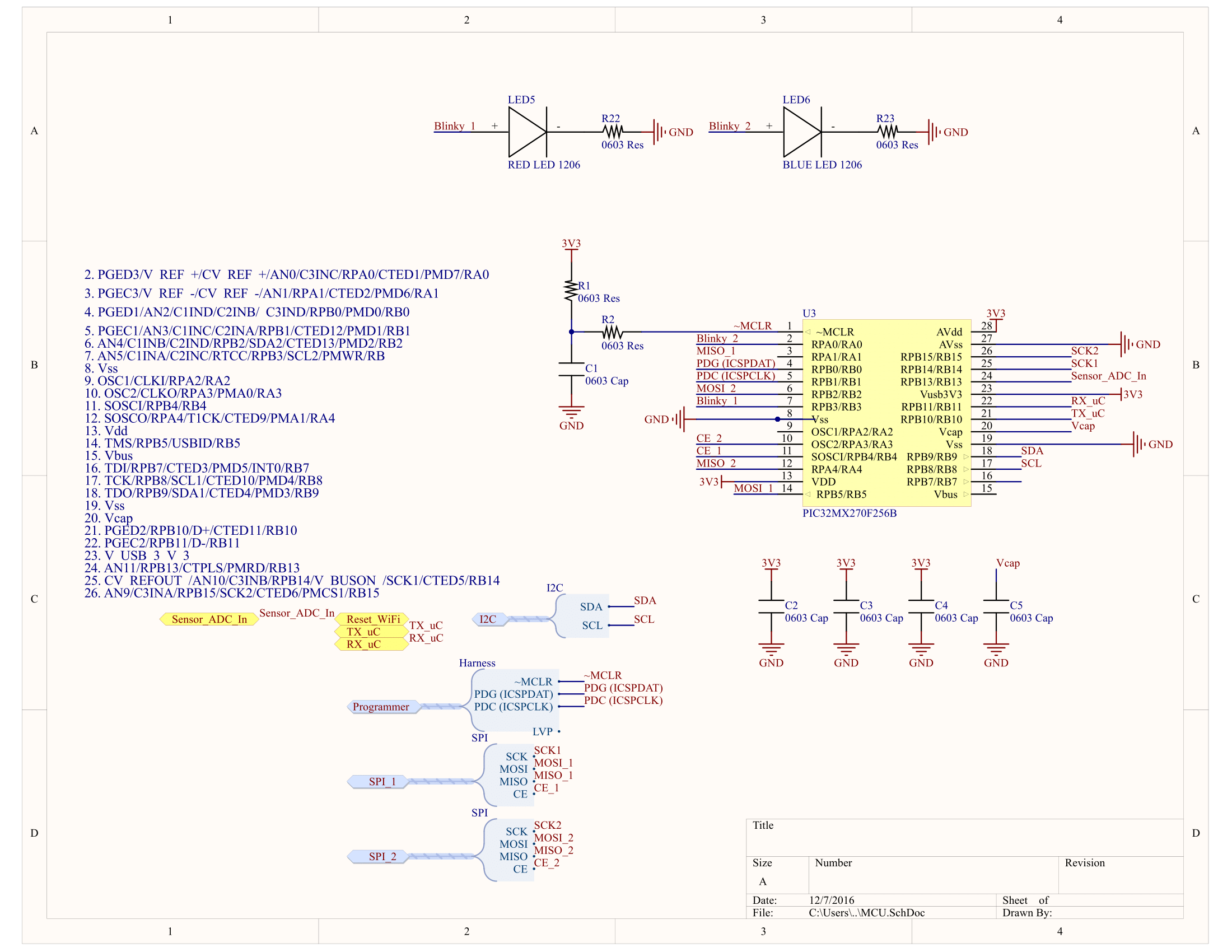

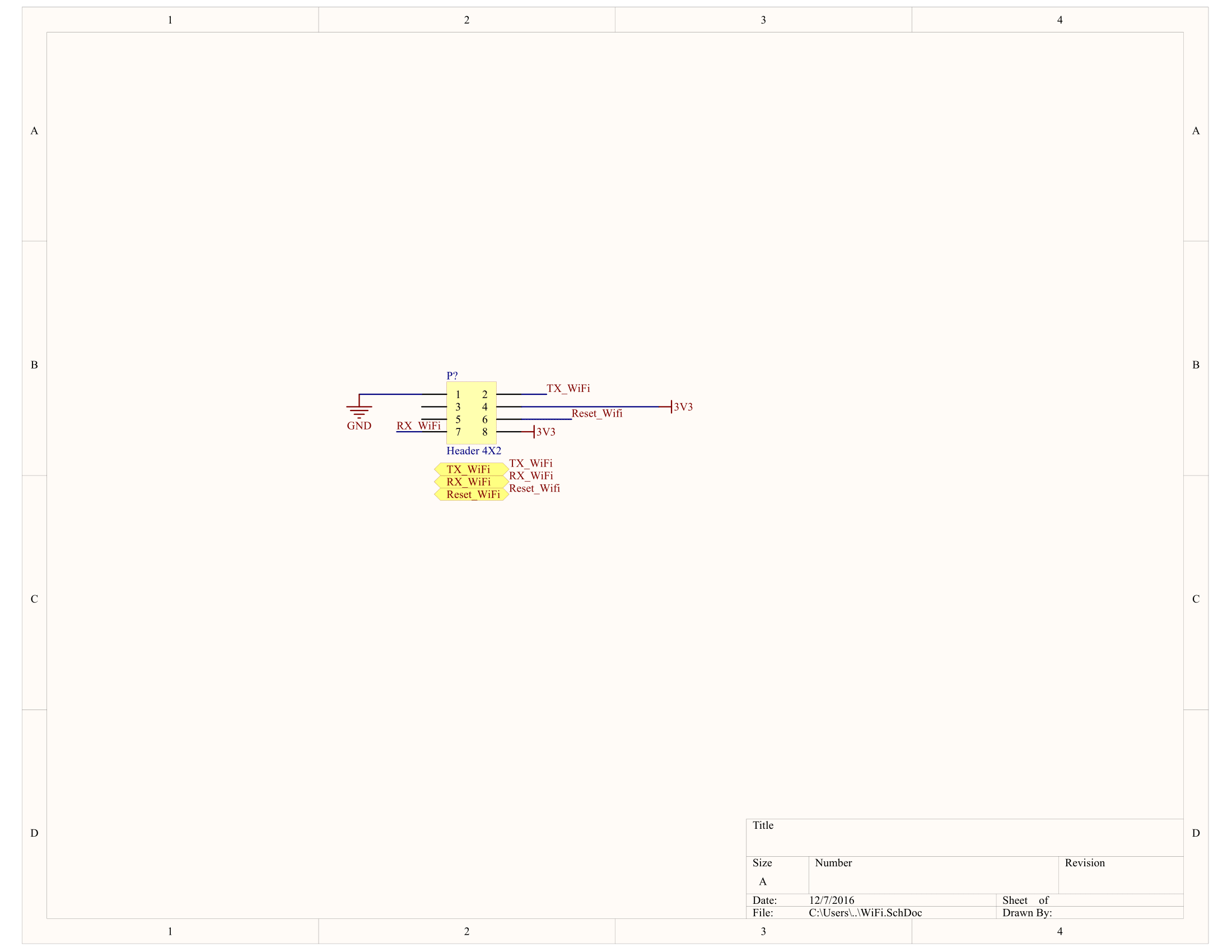

Our system integrates a PIC32 with a camera, an induction sensor, and a WiFi module, along with several Python scripts on a computer, to accomplish our goal.

The device is placed in the spindle of the machine. The operator then sets a preliminary zero over the fixture plate. The computer application sends a calibration script to the CNC machine, and the device takes a picture at several locations on the fixture plate. These images are transferred over WiFi to the computer application, where they are processed using opencv, and GCode is generated to move the machine to a preliminary zero. GCode is an assembly-like language that the machine understands. Once the device is at the preliminary zero, it uses the induction sensor to locate the edges of a bore and find a more accurate zero.
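As a rough illustration of the last step, the sketch below shows how edge readings from the induction sensor could be turned into a bore center. It assumes each reading is tagged with the axis position at which it was taken. The names `find_edges`, `chord_midpoint`, and `simulated_scan`, along with all of the numbers, are hypothetical and are not taken from our scripts.

```python
from typing import List, Tuple

Sample = Tuple[float, bool]  # (axis position in inches, True if the sensor sees metal)


def find_edges(samples: List[Sample]) -> List[float]:
    """Positions where the sensor state flips, estimated midway between samples."""
    edges = []
    for (p0, s0), (p1, s1) in zip(samples, samples[1:]):
        if s0 != s1:
            edges.append((p0 + p1) / 2.0)
    return edges


def chord_midpoint(samples: List[Sample]) -> float:
    """Midpoint of the first two edges crossed along one axis."""
    edges = find_edges(samples)
    if len(edges) < 2:
        raise ValueError("scan did not cross both edges of the bore")
    return (edges[0] + edges[1]) / 2.0


def simulated_scan(center: float, radius: float,
                   start: float, stop: float, step: float) -> List[Sample]:
    """Stand-in for real readings: metal everywhere except inside the bore."""
    count = int(round((stop - start) / step))
    positions = [start + i * step for i in range(count + 1)]
    return [(p, abs(p - center) >= radius) for p in positions]


# Made-up bore offset from the preliminary zero, scanned once along each axis.
x_scan = simulated_scan(center=0.12, radius=0.5, start=-1.0, stop=1.0, step=0.01)
y_scan = simulated_scan(center=-0.07, radius=0.5, start=-1.0, stop=1.0, step=0.01)
bore_x = chord_midpoint(x_scan)
bore_y = chord_midpoint(y_scan)
print(f"bore center: X{bore_x:.3f} Y{bore_y:.3f}")
```

Because a chord parallel to the X axis is symmetric about the center of a circle, the X scan does not need to pass exactly through the center. The Y scan can then be taken at the X value just found. The resolution of the result is limited by the step size between readings.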

Initial Prototyping

In order to break this monumental task down to smaller pieces, we took an incremental approach to designing both the hardware and software.

For the hardware, we set up a breadboard system to mimic the PCB. This allowed us to verify the PCB functionality as well as write software that could easily be transferred over to the PCB.

The firmware was written in submodules, focusing on getting the individual pieces working. The camera had an existing Arduino framework, and we were able to get that working with an Arduino. This was a very useful tool to have: if we started seeing glitches in the camera, we could fall back on the Arduino interface to verify whether the camera was at fault. Below is a picture of the camera module working using an Arduino and a serial interface for transferring the image.

Along the same lines, the WiFi module's software was first developed over USB-serial.

We recommend that future students be encouraged to use these kinds of development methods, because they not only allow for faster development but also give students a hardware ground truth to fall back on when everything starts going wrong.

Overview

Hardware design was very important to our overall functionality. The device needed to be robust enough to survive in a machine shop environment and easy enough for us to prototype in the timeframe of the project. The hardware design was comprised of two main components, the circuit board design and the casing design. These designs were then integrated together to form the overall final project result.

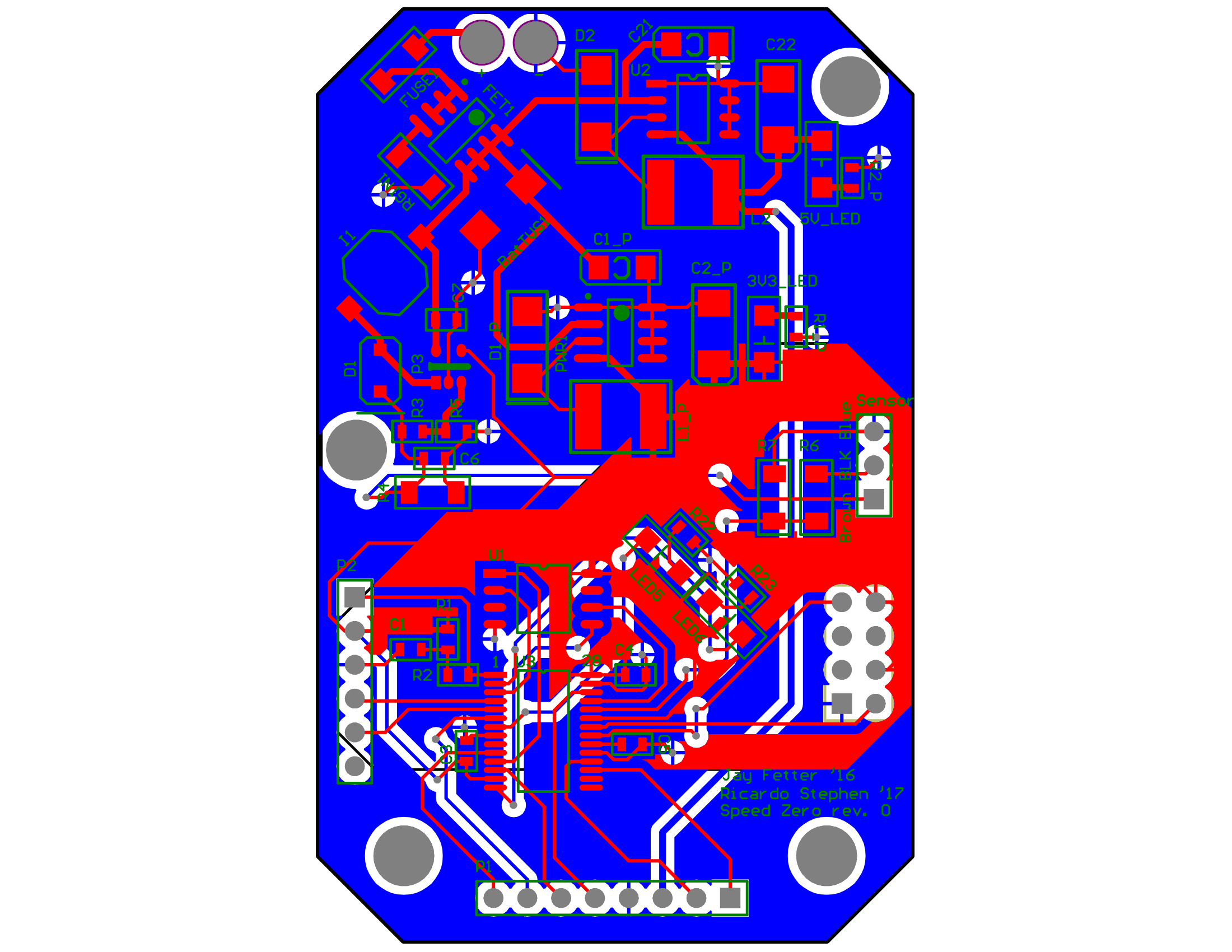

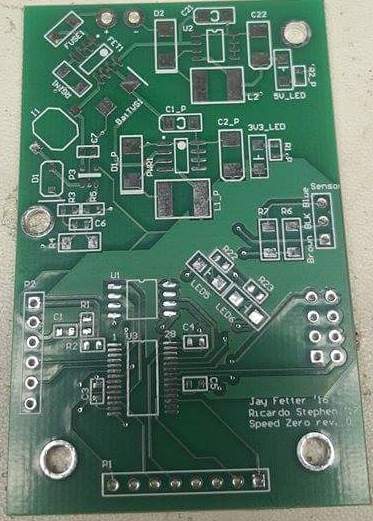

PCB Design

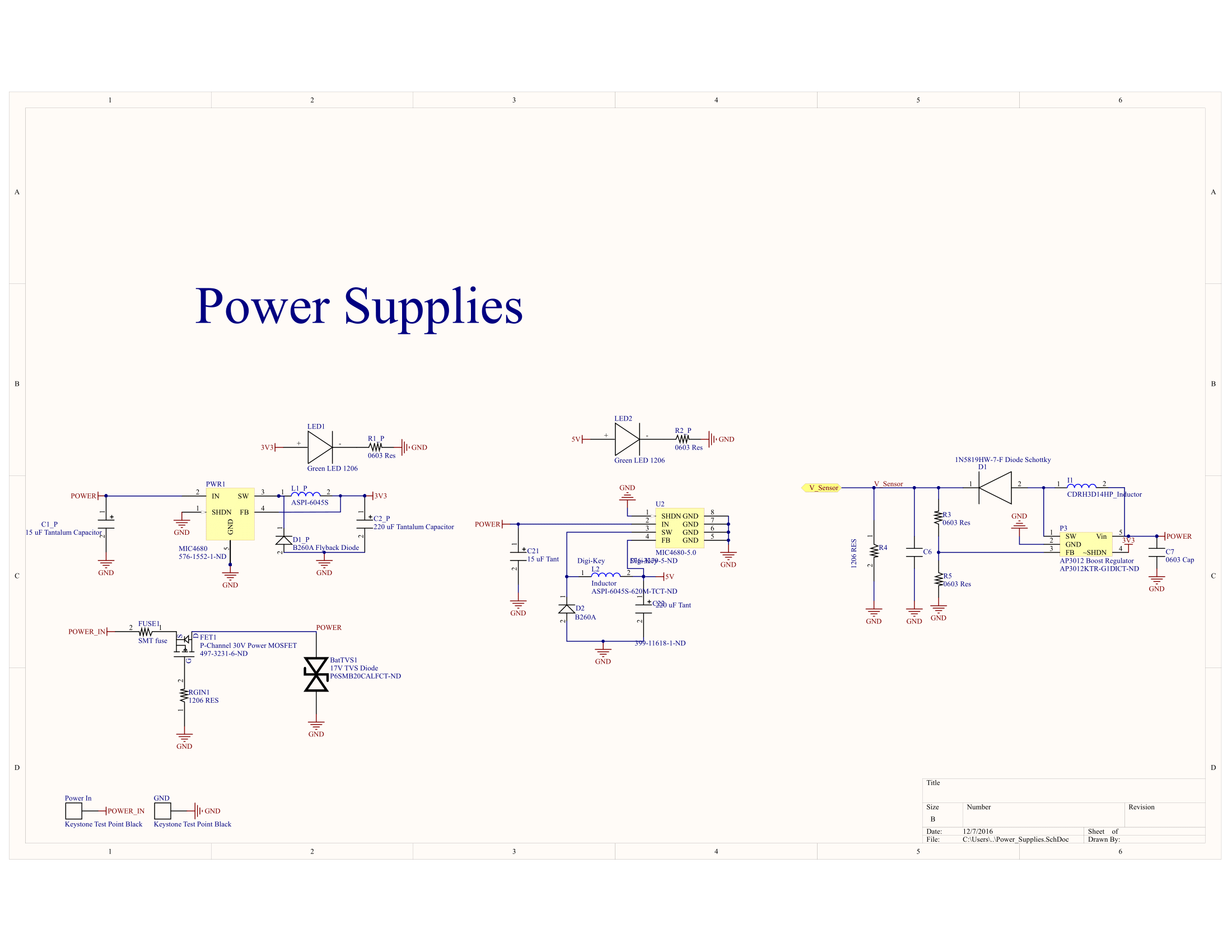

The decision to make a PCB (printed circuit board) for this project was driven by the goal of robustness of the system. This was a system that would be in the spindle of a CNC machine and we didn’t want to risk any loose wires and having to take the whole casing apart in order to debug the problem. We were also confident in our design skills to make a functioning PCB within the timeframe of the project.

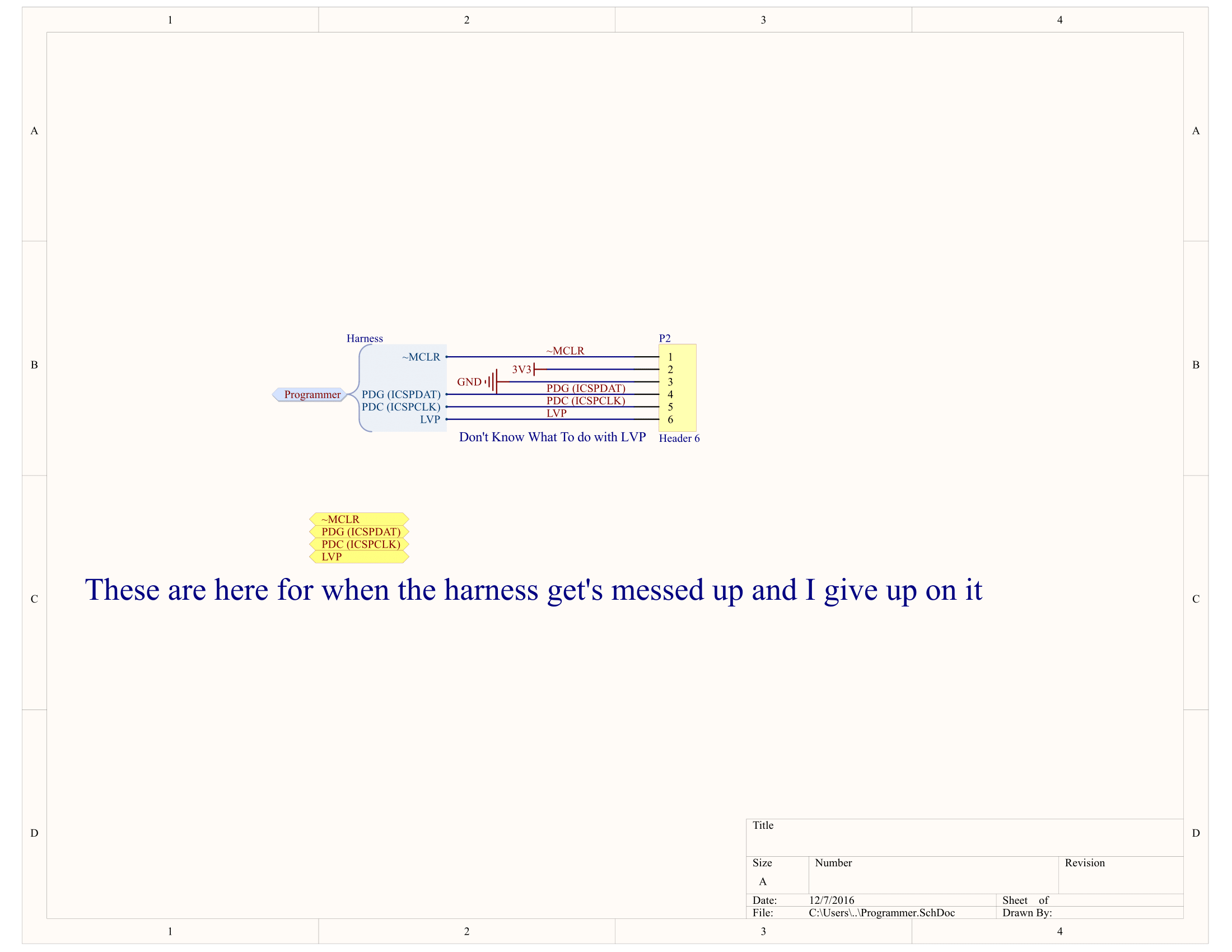

The basic PCB functionality was a PIC32 to interface with the camera, induction sensor, WiFi module, and programming pins. Three power supplies were then added to regulate the 7.4V Li-Ion battery to 5V, 3.3V, and 16V respectively. The board was designed to have components on only a single side in case we had to fabricate the board ourselves, using either the MakerSpace’s PCB mill or acid etching.

This board was then sent to Advanced Circuits for fabrication. The board was completed and ordered on 11/15 because of the two-week turnaround from the manufacturer. During this time, the outer casing was designed and manufactured, and a bring-up plan was devised.

The bring-up for this board was done in the following manner:

- Test functionality of the power supplies

- Test programming the PIC

- Blink the on-board LEDs

- Test WiFi

- Test Arducam

The order of these stages was very important to make sure that we didn’t fry any of our components because there was really not a lot of time to reorder components by the time the board came in.

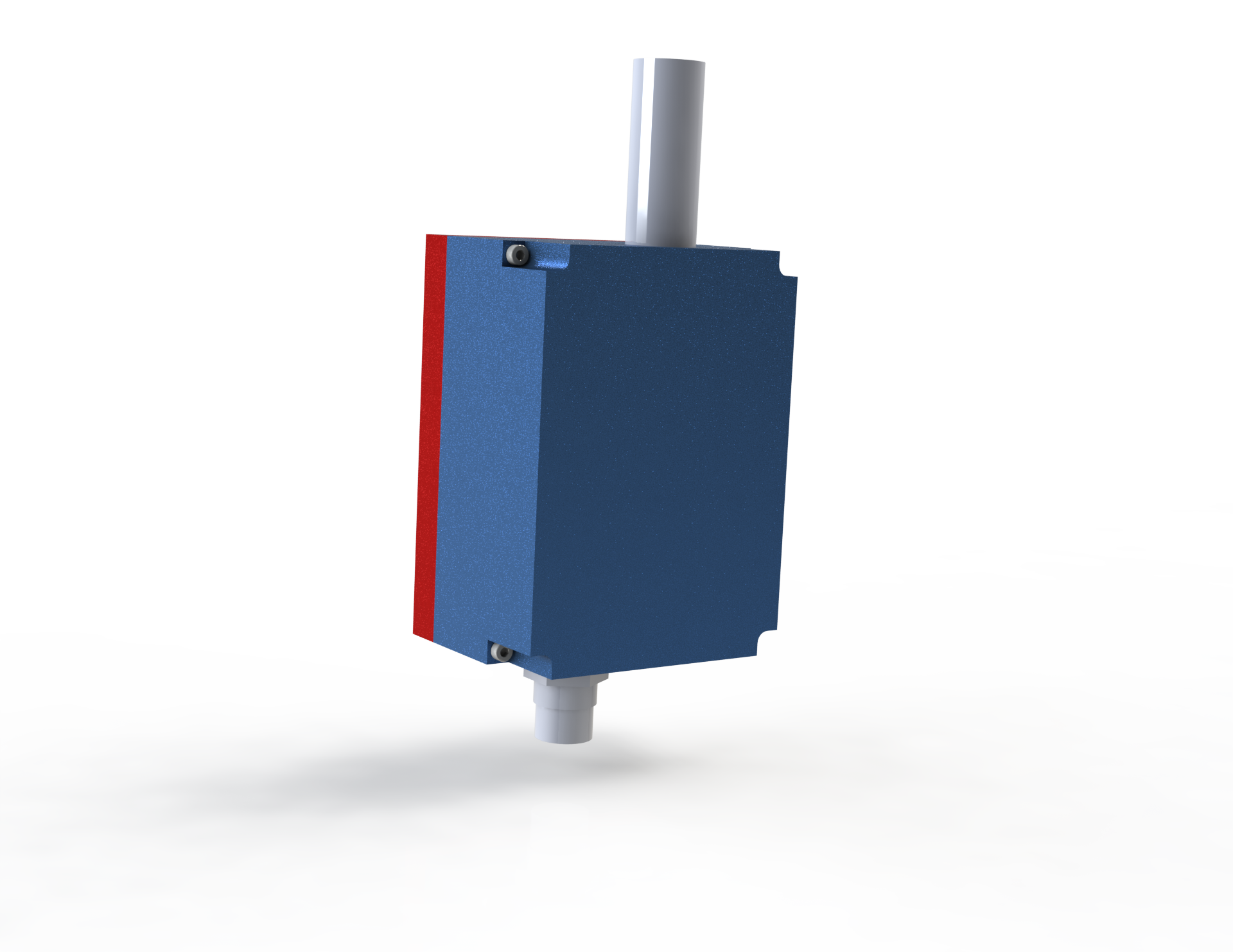

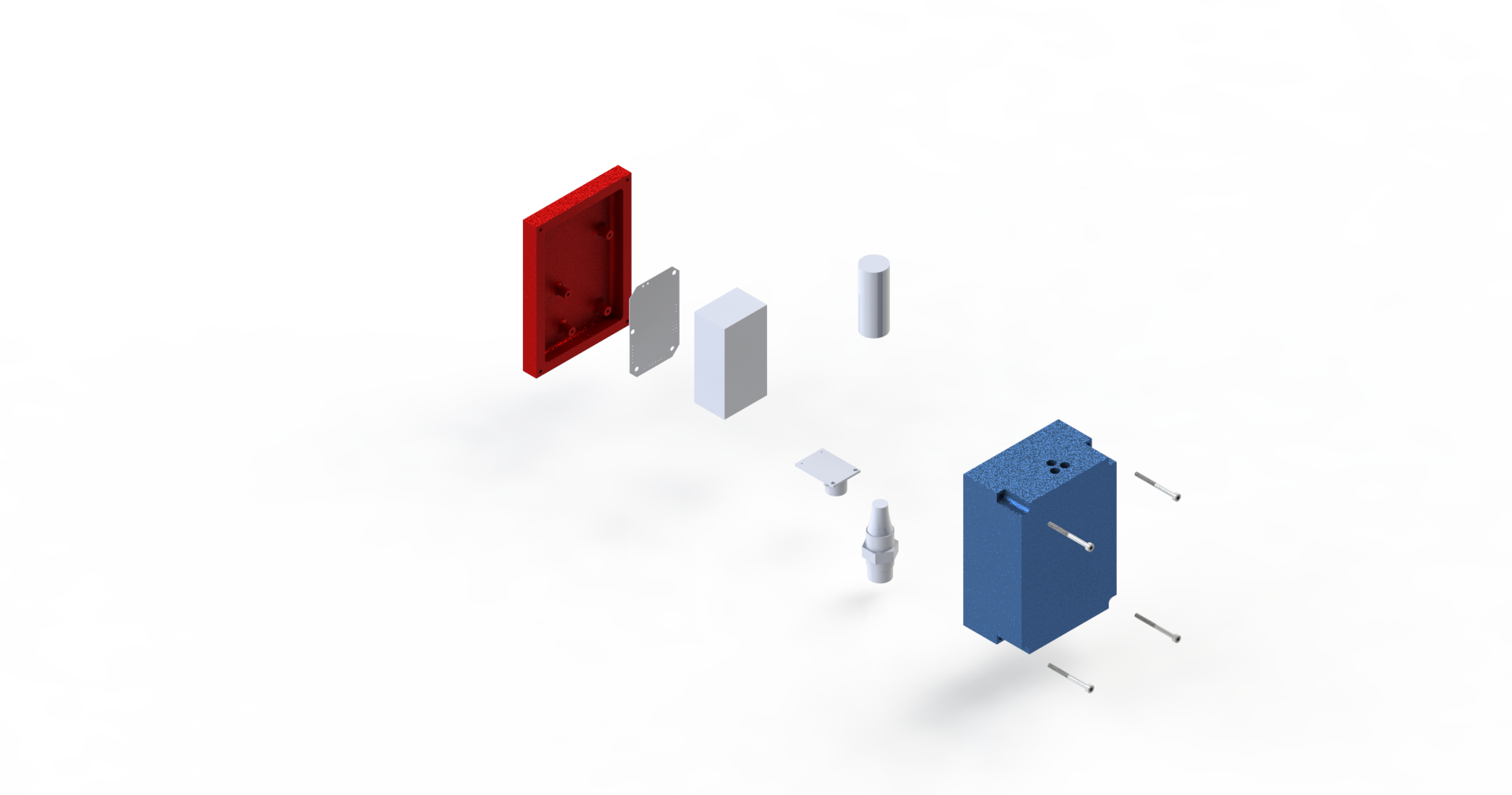

Enclosure Design

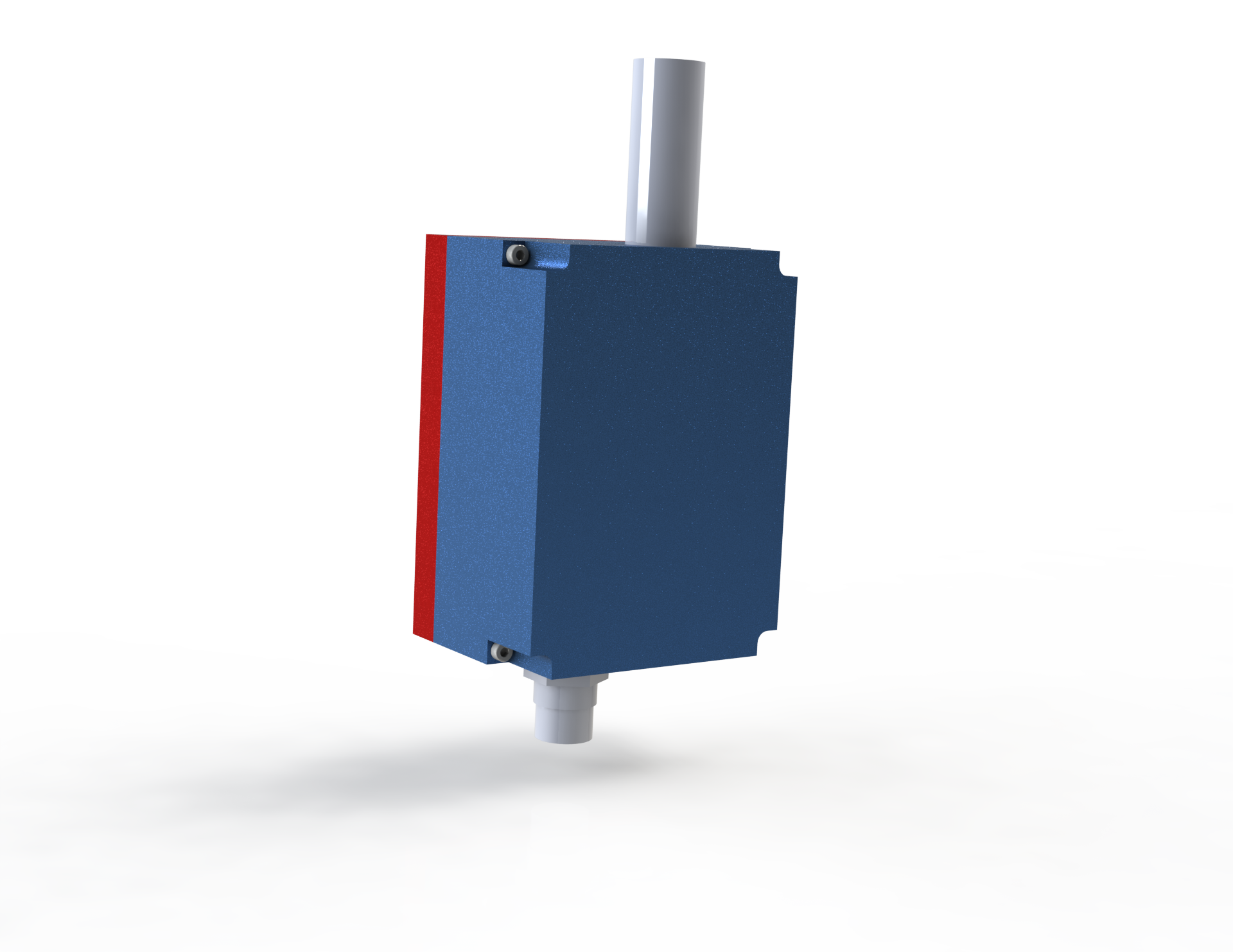

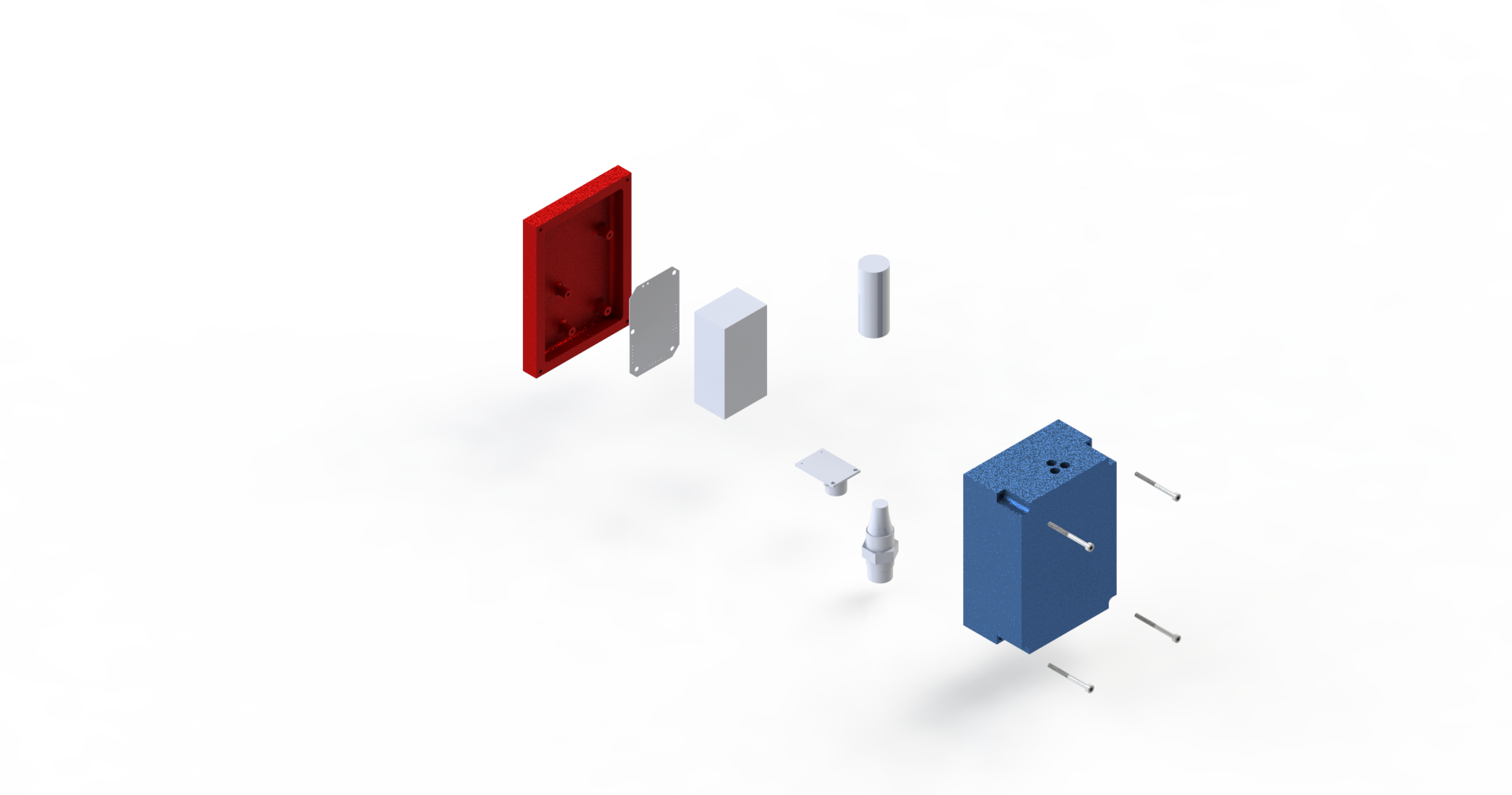

The enclosure for the project was designed to be durable and as rigid as possible. It was designed as a two-piece system, with one half of the casing containing mounts for the PCB and the other half having holes for the induction sensor and camera, as well as the adapter so it can be mounted in the CNC. Both halves were designed using the SolidWorks CAD program, which can be found in the Phillips 318 computer lab as well as the Swanson computer lab in Rhodes Hall.

For manufacturing, the aluminum portion of the casing was made using the CNC while the other portion was 3D printed using the MarkForged 3D printer in the Rapid Prototyping Lab. It was decided to 3D print half of the casing for timing reasons, otherwise it would have been made out of aluminum. The spindle attachment was made out of some scrap steel. An exploded view of the system is provided below.

Firmware

The first phase of the firmware design process consisted of establishing good code practices. First, we vowed to enforce strict adherence to good abstractions and the use of an encapsulation-based hierarchy. Plib provided us with a sufficient interface for working with peripherals, and we would build all of our hierarchy on top of it. As a side effect, we further promised ourselves to provide good interfaces through our header files: the header files allow users to configure any peripherals or lower-level interfaces used by the module, and they expose only the interface consistent with the abstraction we hoped to provide, and nothing more. We had to break these rules in some parts of our implementation, as noted below, but we would frown upon such code in more critical designs. Furthermore, we eschewed function-like macros. Object-like macros are great for not having to worry about the types of architectural registers and configurations. However, function-like macros have too many unintended consequences [1], and the performance gained by dodging a jump is not worth it. Function-like macros are also the reason we couldn’t fully parameterize our AT interface in the header file; now any poor soul would have to wade through our source to change the UART channel used by the AT interface, effectively breaking an otherwise perfect abstraction. We believe in taking a stance against function-like macros.

A major step in abiding by the rules we established for ourselves was to not use protothreads. The concurrency provided by protothreads was considered a dangerous potential cause of bugs, and so was avoided for simplicity. Additionally, the given protothreads implementation was heavily coupled; it included threading, an interface for reading and writing from serial, and system-level configuration. All of the functionality in the file could only be used in the main file (otherwise, multiple-definition errors would be thrown). The serial abstraction was also poor; it had silent side effects like using ‘\r’ as a terminator, echoing any read data, and not writing back any terminating characters for printed data. None of these configurations were applicable to the serial WiFi module we were working with. The protothreads serial functionality is a false abstraction; it promises to be useful for general serial applications, but it requires so much source-level modification that it is no different from a user writing their own code on top of the plib UART interface. The authors will not comment on the use of protothreads as a construct by themselves. We prefer a state-machine approach to embedded systems.

Our actual implementation started with system-level functionality in the main.c file. The main file contains the directives for configuring the clock (taken from protothreads). It also contains an init method, which handles further clock setup, configures the GPIOs as digital instead of the default analog, enables interrupts, initializes submodules, and initializes global resources (i.e. a WiFi packet for use with the WiFi module). The main function simply calls the init method before executing the standard execution loop.

Next, a useful debug interface was designed in the debug.c and debug.h files. The debug interface offers on, off, and toggle control over a single LED on the board. It also includes a debug error handler, which indicates an error condition by turning on the LED and spinning indefinitely.

Then, delay.c and delay.h were added to provide access to a millisecond delay function. The implementation initializes Timer5 to interrupt every millisecond and update a counter. The delay function uses the counter and the given delay time to calculate the time when execution should continue, and it spins on the counter until it equals the computed value. The current implementation has certain flaws: it unsafely does not disable the interrupt before reading the counter, and it does not support wrap-around. However, such imperfections were considered trivial.
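For reference, one common way to make such a delay tolerate counter wrap-around is to compare elapsed ticks rather than an absolute deadline. The sketch below models a 32-bit millisecond counter in Python purely for illustration. It is not a transcription of delay.c, and the function names and the 32-bit counter width are assumptions.

```python
MASK32 = 0xFFFFFFFF  # models an unsigned 32-bit tick counter (assumed width)


def elapsed(now: int, start: int) -> int:
    """Ticks elapsed since start, correct across a single counter wrap."""
    return (now - start) & MASK32


def delay_done_naive(now: int, start: int, delay_ms: int) -> bool:
    """Compare against an absolute deadline; misbehaves when the deadline wraps."""
    deadline = (start + delay_ms) & MASK32
    return now >= deadline


def delay_done_wrap_safe(now: int, start: int, delay_ms: int) -> bool:
    """Compare elapsed ticks against the requested delay instead."""
    return elapsed(now, start) >= delay_ms


# A 32 ms delay that starts 16 ticks before the counter wraps.
start = 0xFFFFFFF0
one_tick_later = 0xFFFFFFF1
after_wrap = 0x00000010

print(delay_done_naive(one_tick_later, start, 32))      # reports done far too early
print(delay_done_wrap_safe(one_tick_later, start, 32))  # still waiting
print(delay_done_wrap_safe(after_wrap, start, 32))      # done after exactly 32 ticks
```

In C, the same effect falls out of unsigned subtraction on a fixed-width type, so the mask is only needed in this Python model.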

Afterwards, we worked to integrate the WiFi module. Our module uses AT commands over a serial interface, so the encapsulation hierarchy was plib for the serial interface, an AT abstraction for working with the given AT interface, and a WiFi abstraction for application-level WiFi functionality. The AT abstraction can be found in at.c and at.h. The at_write_cmd function allows users to issue string-based AT commands as specified by the WiFi module’s AT interface [10, 11]. The at_read_packet function allows users to read AT packets, which are responses and notifications from the AT interface. The at_write_raw function allows users to write raw strings to the AT interface; it avoids the complication of the appended ‘\r\n’ that occurs when using at_write_cmd, which is useful when specifying the data to send to the receiving module. Lastly, an at_write_cmd_until function was provided as a convenience, since many interactions with the AT interface required writing to the interface until an okay response was received. The wifi.h module was then implemented on top of the AT interface. The module’s initialization function configures the WiFi module to act as a softAP and sets up a server to run in the network. It also allows users to send and receive WiFi packets, which consist of an application-specific header and payload. All the headers were defined in the wifi_interface.h file for the sake of organization, especially when creating a host-side application. Additionally, the WiFi module includes a wifi_send_debug method to send debug messages over WiFi.
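To make the header-plus-payload idea concrete, here is a minimal host-side sketch of framing and parsing such packets. The header layout (a 1-byte message type followed by a 2-byte little-endian payload length) and the message type values are hypothetical; the real definitions live in wifi_interface.h and may differ.

```python
import struct
from typing import List, Tuple

HEADER_FMT = "<BH"  # hypothetical layout: 1-byte type, 2-byte little-endian length
HEADER_SIZE = struct.calcsize(HEADER_FMT)

MSG_DEBUG = 0x01  # hypothetical message type values
MSG_IMAGE = 0x02


def pack_packet(msg_type: int, payload: bytes) -> bytes:
    """Prefix the payload with a header carrying its type and length."""
    return struct.pack(HEADER_FMT, msg_type, len(payload)) + payload


def unpack_packets(buffer: bytes) -> Tuple[List[Tuple[int, bytes]], bytes]:
    """Split a receive buffer into complete packets plus any leftover bytes."""
    packets = []
    offset = 0
    while len(buffer) - offset >= HEADER_SIZE:
        msg_type, length = struct.unpack_from(HEADER_FMT, buffer, offset)
        end = offset + HEADER_SIZE + length
        if end > len(buffer):
            break  # payload not fully received yet; wait for more data
        packets.append((msg_type, buffer[offset + HEADER_SIZE:end]))
        offset = end
    return packets, buffer[offset:]


# One complete debug packet followed by a truncated image packet.
stream = pack_packet(MSG_DEBUG, b"hello") + pack_packet(MSG_IMAGE, b"\xff\xd8")[:4]
packets, leftover = unpack_packets(stream)
print(packets, len(leftover))
```

Returning the leftover bytes lets the caller prepend them to the next chunk read from the socket, which matters for large payloads such as images that arrive in several pieces.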

The next step was to add control code for the induction sensor. This simply consisted of reading digital values from an input pin and sending them to the host-side application over the WiFi module.

Then, support for the camera was implemented. The camera module consisted of an SPI interface for working with the chips on the module, and an I2C interface for interfacing with the sensor. The sensor’s source is defined in ov2640.h, ov2640.c, and ov2640_reg.c. The sensor support was implemented by first writing simple functions to read and write over I2C as per the protocol defined in [12]. This interface was then used to configure the module in ov2640_init. An interface for selecting the size of the JPEG was also provided via the ov2640_set_jpeg_size function. Much of the configuration required writing large numbers of configuration values, which can be found in ov2640_reg.c. The arducam.h and arducam.c interface was implemented in the same way. It provides three functions for capturing images: arducam_start_capture to start the image capture, arducam_capture_done to indicate when the capture is complete, and arducam_wifi_send to send the captured image over WiFi.

The final parts of the firmware design are contained in the main.c file. Various functions to incrementally test each module can be found there. The slave_mode function was used at runtime, and it simply serviced user requests for resizing the image, getting an image, reading from the induction sensor, and restarting firmware execution. The main function simply calls it after initializing the system and firmware modules.

Python Framework

The computer side of the project was written in Python. Python was chosen because of its versatility and its compatibility with both the serial and the opencv libraries that were needed for the project. There were 4 main Python scripts that were used in the final project (although we wrote a lot more along the way for testing).

The first script would initiate communication with the CNC and send a calibration program over serial. The second script would request that the device take a picture and send it over WiFi. The third script would detect the bore using opencv. The final script would take the information from opencv and decide where to move the spindle for it to be at zero. It would then generate the GCode and send it over to the machine.

Results

Our results for this project were pretty awesome. We were able to actually construct a device, put it in the CNC, and get feedback! We were able to receive images from inside the CNC and then run them through an opencv script and detect the bore on a fixture plate!

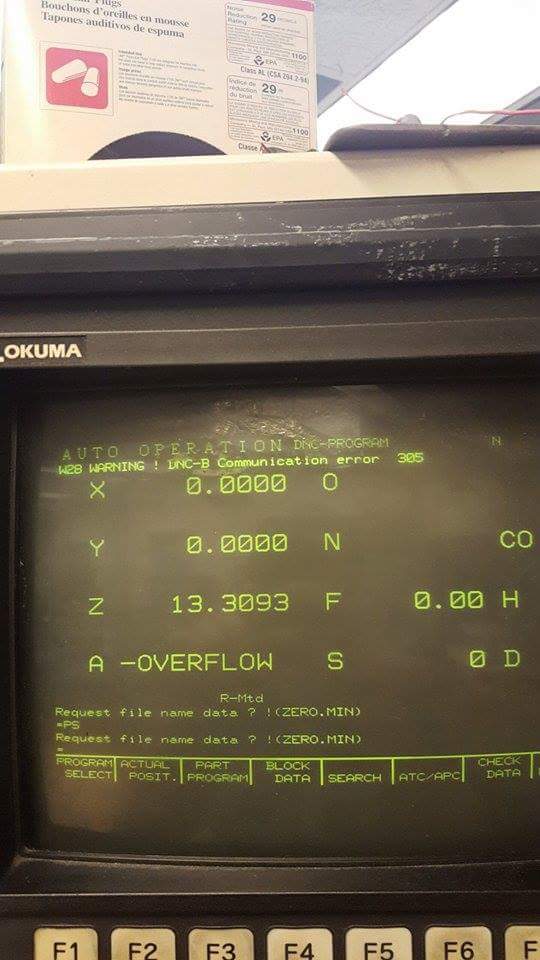

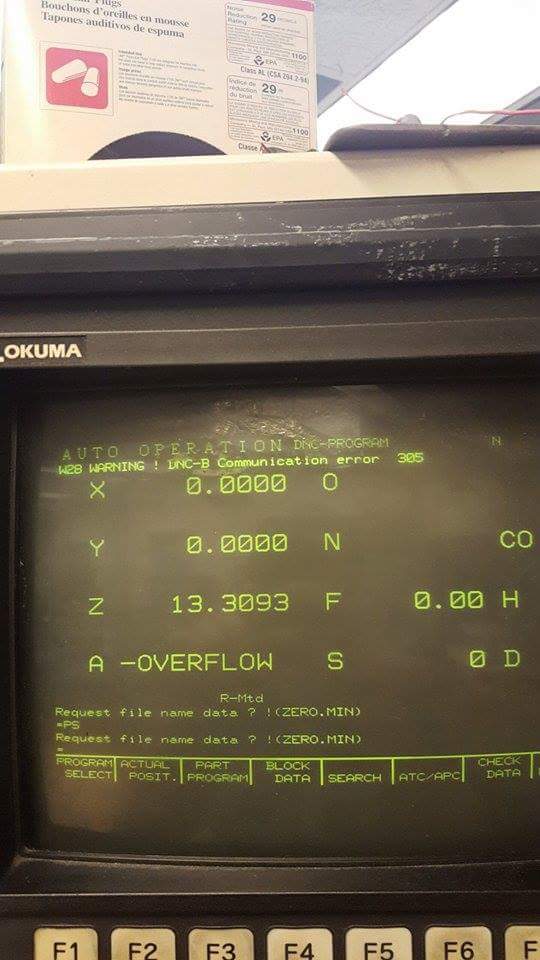

The results were semi-mixed because of issues with interfacing with the CNC, which was an afterthought because we were more focused on working on the embedded side of the project. We knew going into this project that the Okuma in Emerson is an unpredictable beast and cannot be trusted to make anything easy for you. The specific problems we faced are discussed in the Conclusion section of this report.

If you ignore the CNC control, all the subcomponents of the project performed up to spec! We were able to receive images over WiFi to a base station, which could then detect the center of a bore. We were also able to poll the induction sensor through our WiFi interface!

Below are some images that were taken using the device while it was inside of the spindle. These are examples of images that were used during the calibration phase of the device. The first image can be considered your baseline image. The second image represents a linear move of 1.5" in the X direction. In the Okuma's coordinate system that is a direct horizontal move. However, you can see from the images, that it is not directly horizontal in the camera's coordinate system.
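The relationship between the two coordinate systems can be estimated from a single known move. The sketch below is a simplified illustration, assuming the camera frame differs from the machine frame only by a rotation and a uniform scale (no mirroring, no lens distortion). The function names and pixel coordinates are made up for the example and do not come from our calibration script.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def calibrate(p0: Point, p1: Point, move_in: float) -> Tuple[float, float]:
    """Return (angle in radians, pixels per inch) from one known machine X move.

    p0 and p1 are the pixel positions of the same feature before and after
    a machine move of move_in inches along X.
    """
    du, dv = p1[0] - p0[0], p1[1] - p0[1]
    pixels = math.hypot(du, dv)
    if pixels == 0 or move_in == 0:
        raise ValueError("calibration needs a nonzero move and a visible shift")
    return math.atan2(dv, du), pixels / move_in


def pixel_to_machine(d_px: Point, angle: float, scale: float) -> Point:
    """Convert an image displacement (pixels) to a machine displacement (inches)."""
    du, dv = d_px
    c, s = math.cos(-angle), math.sin(-angle)  # undo the camera rotation
    return ((c * du - s * dv) / scale, (s * du + c * dv) / scale)


# Made-up feature positions in the baseline image and after a 1.5" X move.
angle, scale = calibrate((320.0, 240.0), (470.0, 270.0), 1.5)
dx, dy = pixel_to_machine((150.0, 30.0), angle, scale)
print(f"rotation {math.degrees(angle):.2f} deg, {scale:.1f} px/in, move ({dx:.3f}, {dy:.3f}) in")
```

A single X move cannot reveal whether the image is mirrored, so a second move along Y would be needed to confirm the handedness of the camera frame. The sign of the correction also depends on whether the feature or the camera is considered to be moving.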

Here is an example of our bore detection from opencv:

You can see from that image that our opencv script was able to identify the main bore on the piece and locate the center of that circle.

We were able to manually use the induction sensor and get within our threshold accuracy of 0.05” (50 thou). This threshold was chosen because of the tolerance stackup in our casing. The process took about 30 seconds and was very repeatable, so while not completely hands-off, it is still a major improvement for a CNC operator.

The camera, on the other hand, varied greatly in its accuracy. We did not have a lot of time to tune the image recognition due to limited access to the machine shop. The shop was only open during the day from 9-12 and 1-4:30pm, and it was very difficult to find time to make it there when we were both very busy with our classes. If we had had access to the shop after 6pm, we would have been able to collect many more test images and solidify our image algorithms.

The camera should be able to get us within 0.01”, but due to inaccuracies in our casing setup and our image algorithm, its error was more like 0.5”.

In order to ensure that this design was safe, a GCode command called an Optional Stop, or M1, was sent after each movement command issued by the device. This requires the operator to manually press a button to continue execution of the program. It gives the operator time to look at where the machine is going to move before it actually does, and to confirm that the move is going to be safe.
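A minimal sketch of this interleaving is shown below. It assumes generic GCode (absolute positioning with G90, linear moves with G1); the function name and feed rate are hypothetical, and a real program for the Okuma would need whatever header, footer, and dialect details that controller requires.

```python
from typing import Iterable, Tuple


def gcode_with_optional_stops(points: Iterable[Tuple[float, float]],
                              feed_ipm: float = 10.0) -> str:
    """Emit one linear move per point, each followed by an M1 optional stop."""
    lines = ["G90"]  # absolute positioning
    for x, y in points:
        lines.append(f"G1 X{x:.4f} Y{y:.4f} F{feed_ipm:.1f}")
        lines.append("M1")  # optional stop: operator must press cycle start to continue
    return "\n".join(lines) + "\n"


program = gcode_with_optional_stops([(0.0, 0.0), (1.5, 0.0)])
print(program)
```

Note that M1 only pauses the program when the optional-stop switch on the machine's control panel is enabled, so the operator has to confirm that switch is on before running generated code.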

Conclusion

Our project was met with mixed results. From a microcontroller perspective, it completely exceeded our expectations. We were able to have a fully functional PCB on our first revision!

However, we ran into trouble with the CNC machine and its functionality. Our initial plan was to take advantage of the CNC’s “drip-feed” architecture, where instructions are sent slowly and buffered on the machine. This ended up not working due to constraints with the machine, which had built-in protection for detecting a timeout on the serial line. This meant that we could not hold a serial connection open with the machine and send instructions after polling the sensor. Instead, we would need to run a calibration program and then run a second program to generate the origin. This ruined the smooth operation we had hoped for.

This made our plan for automating the induction sensor readings impossible. We had planned to move the sensor slowly across the part in small incremental steps, read the sensor at each step, and send the state over WiFi to the computer. Since we would have to precompile the GCode programs, we could not poll the sensor in a useful way, because we could not correlate a reading with a location in the machine.

There were several small mistakes and unimplemented hardware features that would have been nice to have. The most important one is the lack of an external on-off switch. This was an oversight and was bearable for the project term, but if this were to become a production device, an on-off-charge switch would be a necessity.

Another unimplemented feature of the hardware design was an IP67 rating for the casing. Since the CNC commonly uses coolant during the machining process, the inside of the machine is frequently damp, and if the device were not sealed, over time the coolant in the air would eventually cause a failure. The risk of an errant coolant spray hitting the device would also be too high without making it fully waterproof.

To achieve this, the casing would be redesigned so that it would become a single CNC-ed component with a metal face shield that would seal against an o-ring. The spindle attachment would also need to have an o-ring seal to protect against the environment.

Another flaw with the hardware design was not perfectly aligning the camera axis with the spindle axis. This complicates the math for the image processing and adds unnecessary error to the entire system.

Ethical Considerations

During the course of this project we followed the IEEE Code of Ethics. We ensured that our project would not harm or interfere with anyone else's project and took steps to make sure we did not damage any of the expensive equipment in the machine shop.

The machine shop is a dangerous place and a momentary lapse can mean serious harm to you or someone else. Great care was taken to ensure that the auto-generated GCode was executed in a safe and reliable manner. Code blocks called optional stops were inserted between each of the GCode movement commands to allow the operator to verify that these commands were safe to execute before running them. When the machine was running, all the Emerson CNC rules were followed, which include, but are not limited to: safety glasses, hand over the emergency stop, CNC door closed, long hair tied up.

We also sought to help others with their projects when we could. We worked with other groups to help them debug their issues if it was either with their circuitry or their communication protocols. However, since we were mostly working outside of the labspace, we were not able to assist as much as we would have liked to.

We made sure that we were technically qualified to use the CNC machine in the Emerson shop before undertaking this project. Jay took MAE 1130, which is a prerequisite for becoming a Blue Apron, or CNC certified. Ricardo has also undergone the Makerspace PCB mill training and became familiar with CNC operation during the course of the project. We always made sure that we were not going to harm anyone or damage anything when we operated the machine.

CNC machines are very dangerous and should be operated with the proper training and caution. Do not try to replicate this project if you are unqualified. Doing so may result in bodily harm or a broken CNC machine.

Division of Labor

Ricardo worked on the microcontroller firmware writing drivers for the WiFi module and the Arducam camera. He also wrote several scripts for interfacing with the WiFi module on the CNC side of the device.

Jay worked mainly on the hardware portion of the project. He designed, built, and populated the PCB. He designed the enclosure for the electronics and manufactured it himself using the CNC, manual lathe, and the MarkForged 3D printer.

Both Ricardo and Jay worked on the website and report.