"a real-time video object tracking / shape recognition device, and a fun game library to demonstrate its abilities"

project soundbyte

We have created a real-time video object tracking / shape recognition device, and a fun game library to demonstrate its abilities.

For our project, we wanted to push the video sampling and processing capabilities of the ATmega644 8-bit microcontroller. Using a high-speed analog-to-digital converter as an input device, we were able to sample a reasonably high-resolution grayscale image from a color camera's video output. Using this grayscale image, we are able to track objects and recognize shapes that stand out from the background by a customizable threshold.

We created a game called Human Tetris to show off the system's shape recognition capability. In this game, players must contort their bodies into shapes displayed on screen in a given amount of time. To demonstrate the potential for our device to expand to a larger game library, we also implemented a port of Brick Breaker and another interactive game called Whack-a-Mole. Brick Breaker shows off the object tracking capability, where players must physically interact with the bouncing ball to keep it on screen and break bricks. Whack-a-Mole requires a player to flail around on the screen to hit all of the moles before they disappear.

High Level Design

Almost all major gaming consoles are moving to user input systems involving tracking all or part of a player's body (see Nintendo Wii Remote, PlayStation Move, and XBox Project Natal). Our project setup is similar to Project Natal, which Microsoft claims to be a "controller-free gaming and entertainment experience", using a multiple-sensor device (with at least camera and microphone) placed by the TV. We have demonstrated the feasibility of a basic motion-controlled gaming system using low-cost parts.

The system is based off of an ATmega644 microcontroller with two peripherals: a high-speed flash analog-to-digital converter, and an onscreen display device for video overlay. With just 4 kilobytes of RAM and a 20MHz clock speed, the MCU needs these peripherals to take some of the load off of its processing capabilities. Since we wanted to provide a real-time camera feed on the display (like a "mirror" for the player), and since the ATmega644 is not fast enough to generate a full color NTSC signal, we decided early on that we would use a color video camera module which outputs an NTSC signal directly.

The system accepts a color NTSC video signal, filters out the DC component, and samples the video stream at real-time speed. This sample is stored as a grayscale image in the MCU's memory which can be processed by the application. The onscreen display overlays shape information and game data for user interaction.

High-level block diagram

Based on this system, we have implemented a video arcade game called Human Tetris, based on a Japanese game show event. In the game, contestants are faced with an oncoming solid wall with a shape cutout. The players must fit through the shape, or else they are thrown back into a pool of slime. A few clips from the show are shown in the YouTube video below:

Our game displays a shape on the screen which the players must fit into within a short amount of time. If they succeed, they are faced with more difficult shapes and shorter amounts of time until they are inevitably "slimed". The game provides the player with real-time feedback by displaying the video on the screen with the device's interpretation of their shape.

Our project involves two standards: NTSC and SPI. NTSC is well-defined and is used as our input and output medium. SPI is more of an agreement than a standard, and our project uses it internally to the specifications of the microcontroller and on-screen display device.

Tetris is copyrighted and trademarked thoroughly, so attempts to turn this project into a product would have to be preceded by licensing talks or altering the aesthetics. "Human Tetris" is a commonly used description for the game show our game is based on, but the actual game play is not that of Tetris.

Hardware

Prototyping

Our finished product is neatly tucked onto a 2.5" x 3.8" custom printed circuit board, but for most of the design stage, we prototyped on an STK500, a solderless breadboard, and the ATmega644 custom target board. Our earliest prototyping simply involved working with the ATmega644 on the STK500, with the surface-mount peripherals soldered to breakout boards on a solderless breadboard, attached to the STK with long, loopy wires. We also used the large protoboards as an external power source to feed our substantial power requirements. Slowly, we weaned off our dependence on the STK and moved to using just the solderless breadboard and the target board, with an on-board power circuit. Once the PCB was set up, everything was contained directly on the board.

Design & Components

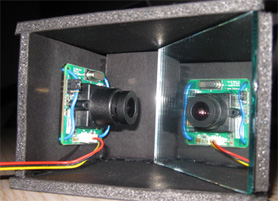

Camera mounted in mirror enclosure

At the start, we decided in our high-level design that it was essential for the user to have real-time feedback of their position on the screen. This allows the player to correct their position on screen or move to an object. Since our microprocessor is not capable of outputting color NTSC, we decided to use a camera which outputs an NTSC signal directly. Then, we could split the signal for display and processing. We chose the CM-26N color video camera because it was moderately priced and simple to use: simply plug in power, and it outputs a video signal. It also has a few features we were not aware of when we bought it, such as auto-brightness. One thing that we did not realize until setting up the system was that a camera pointed at the player would not produce the desired "mirror" effect; instead, if a player moves to the right, he will move left on the screen. To remedy this, we used a low-tech solution: we point the camera at a mirror to flip the image it sees and displays on the screen, so that the game feels more natural to the player.

The signal from the camera goes through an RC input filter which biases up the signal swing to about 0.5V to 2.0V. This is based on the MAX7456 datasheet's "Typical Operating Circuit", which specifies a 75 Ohm load resistor to ground and a 0.1uF input coupling capacitor. Originally we had intended to create two separate circuits for display and processing using current buffers, and a shift/gain op-amp for input into the analog-to-digital converter. However, it was simpler and worked just as well to use the same signal as an input to both devices; it did not produce any detrimental effects.

The signal from the camera went into the analog input of the TLC5540 flash ADC. Since this signal swung from about 0.5-2.0V, we set up a bottom reference voltage of 0V and a top reference voltage of 2.0V, using a combination of the ADC's internal voltage divider and a parallel resistor. This provided a good level of resolution for our application. We set up a clock output from the MCU to go into the sampling clock port of the ADC, so that we could control the clock speed through software. The 8-bit parallel output from the ADC went to the 8 pins on the MCU's port C. During prototyping, we experienced a lot of noise on these high-frequency digital signals, which produced some bad reads and unexpected behavior. The custom PCB brought these components much closer together with good traces between the two devices, which essentially eliminated all of these problems.
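To make the reference choice concrete, the sketch below models an idealized 8-bit converter with a 0V bottom reference and a 2.0V top reference. It is a simplified model rather than the TLC5540's actual transfer characteristic, and the function name `adc_code` and the millivolt units are assumptions made for illustration.

```c
#include <stdint.h>

/* Idealized 8-bit flash ADC: maps an input voltage (in millivolts)
   between the bottom and top references onto codes 0..255.
   This is a simplified model, not the part's exact behavior. */
uint8_t adc_code(uint32_t vin_mv, uint32_t ref_bottom_mv, uint32_t ref_top_mv)
{
    if (vin_mv <= ref_bottom_mv) {
        return 0;                       /* at or below the bottom reference */
    }
    if (vin_mv >= ref_top_mv) {
        return 255;                     /* at or above the top reference */
    }
    uint32_t code = ((vin_mv - ref_bottom_mv) * 256u) / (ref_top_mv - ref_bottom_mv);
    return (uint8_t)(code > 255u ? 255u : code);
}
```

Under this model a 0.5V to 2.0V swing lands on codes 64 through 255, so roughly three quarters of the converter's range carries video information.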

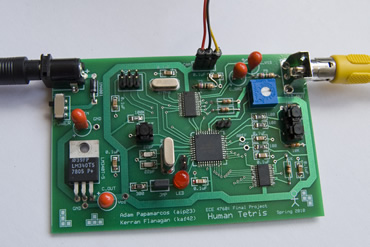

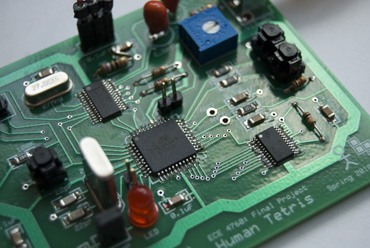

Overall hardware components

The MAX7456 on-screen display (OSD) was used as our primary output device, to overlay characters and data on top of the video signal going from the camera to the TV. The SPI interface between the MCU and the OSD allowed high-speed communication for user feedback. It also took a lot of processing demands off of our MCU, since we could just tell the OSD what to do over SPI quickly, and the OSD would take care of the video overlay and timing issues. The OSD also provided some incredibly useful synchronization pulses for vertical sync and horizontal sync in the NTSC signal. We used these outputs to tell our MCU when to sample the video. We had a lot of trouble during the prototyping stage with bad writes to the OSD, most likely due to noise in the high-frequency communication on the SPI wires connecting the devices. Similar to the case with the ADC, when these components were carefully placed on the custom PCB, all of these issues disappeared, and the SPI communication could be cranked up to several MHz.

While we were originally planning on using the ATmega644 MCU, we wanted to sample the part, and we could only get samples of the ATmega644PA. These microprocessors are virtually identical (the 'P' is a low-power version of the chip, and the 'A' represents a manufacturing change). Virtually none of the hardware or software needed to be changed to accommodate this new chip. We opted to run the chip at the maximum clock speed of 20MHz for highest performance, but this is still below the threshold for direct video capturing or generation, which is why we had to use the two peripherals. We were also limited to 4K of RAM, which limited the number of pixels from the sampled image we could store. Otherwise, the MCU acted as a central hub for the entire circuit: everything was controlled by the MCU, and it implemented all of the game and user interface logic.

We determined that the current drawn by the total circuit was close to 0.6A (camera ~120mA, OSD ~430mA, flash ADC ~20mA, ATmega644PA ~12mA), so we used a power adapter and created a power circuit that supplied up to 1A of current. We used a 12V DC power supply, a 1A rectifier (1N4001), and a 5V voltage regulator (LM340T5). We also included a power switch to break the connection from the supply to the power circuit.

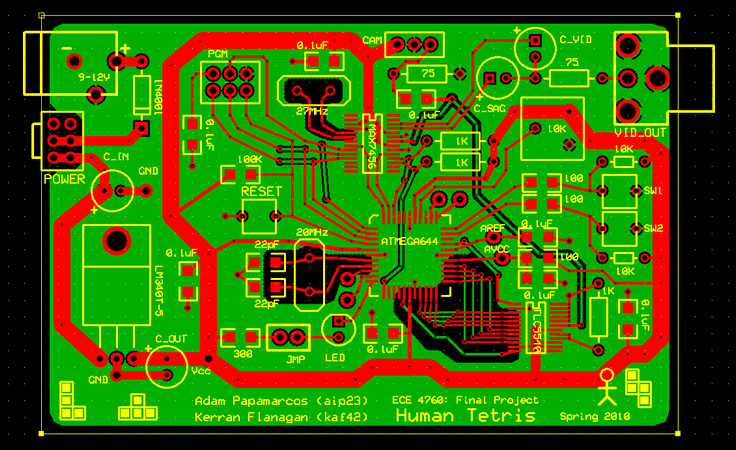

Our finished product contains an on-board power circuit with power switch, reset and user interface buttons, blinking LED heartbeat (with jumper to turn off), camera and program headers, exposed RX/TX ports for serial debugging, three surface mount chips (ADC, MCU, OSD), two crystals, several resistors and capacitors, a ground plane to reduce noise/interference, and an RCA jack for video output to a TV.

Custom PCB

Close-up of custom PCB

About half way through the design stage, we realized that a custom PCB could be very beneficial to our project. After getting approval from Bruce Land, we decided that we would attempt to design and build a custom PCB after we had finalized our hardware design, budget and time permitting.

After we received and soldered components to the PCB, we found one small connection problem on the NTSC input circuit, which destroyed the signal. The resistor's lead in the RC circuit had to be moved to the other side of the capacitor [corrected PCB design file in schematics]. After a bit more debugging, the PCB worked perfectly. It essentially eliminated all of the noise and other issues we were having with our hardware. There were no longer any bad reads from the ADC or bad writes to the OSD. Everything behaved as it was designed, despite the high frequency signals we were sending between components.

Software

Our software consists of several major sections, corresponding to various hardware pieces and the various tasks required to achieve our goal. The first thing we developed was the driver for the on-screen display device, since that would be our only real output. Then we worked on getting video signal input. With input and output functioning, we moved on to data processing. Once we had a handle on how many resources we had left (in terms of memory and cycles), we moved onto writing the logic for program flow. With a simple shape-tracking library now at hand, we fleshed out the test code into Human Tetris and added Brick Breaker as a quick demonstration of the shape-tracking code's potential.

SPI Driver for On-screen Display

Talking to the MAX7456 On-Screen Display through the ATmega644's Serial Peripheral Interface proved to be an interesting challenge. Thankfully, someone had written an open-source library for doing so based on the ATmega162 architecture. Porting that to run on the ATmega644 was just a matter of reading data sheets and swapping register values for macros. However, the open-source library was based on bit-banging. Since Bruce Land covered using the ATmega644's hardware SPI in lecture, we upgraded our driver to use that. Writing to the SPI works very well, and works as fast or slow as we can set it. Unfortunately, we never got SPI reads to work and are not sure why. But since we only needed the MAX7456 for output and it has such a well-designed interface, this was not a problem. One way reading could have helped would have been to confirm writes, since noise was a major problem prior to using the PCB. To combat the noise, we just reset all configuration options right before every frame, and hoped for the best on transmitting the frame data.

Our first surprise lesson in reading all of the datasheet came when we tried flashing a complete set of new characters to the OSD's EEPROM character memory. Hardware constraints limit this action to only 8 characters at once (per on-off cycle). Since our output goals involved using the OSD to display much more than text, we needed a better option than programming and booting it 32 times over every time we altered our OSD images. Hooking up the OSD's reset line to an output that we could toggle allowed us to use one run of the microcontroller to program the entire character memory.

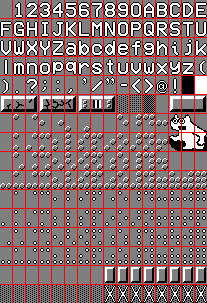

Custom character set

Custom characters themselves were a cinch thanks to the same person that wrote the open source SPI driver. There exists a web page (see references) that will take a PNG image of all 256 characters and return a C header file of character data ready to be used by the open source driver. Our only issue here was that for some reason having an array of PROGMEM array pointers caused all the data to land in the Data memory, which was way above our 4kB limit. Using a rather long function to load the character data on a per-character basis solved the problem.

Part of our need for custom characters came from one of the OSD's limitations: it only displays 13 rows of 30 characters each in NTSC mode. Also, each character pixel is either black, white, or transparent. But each character is 18x12, so NTSC "pixel" level display is still possible. We split each 18x12 character into a 3x2 grid of 6x6 sub-characters, giving us a 39 x 60 symbol screen resolution. Six sub-characters means 64 possible combinations of them, so we wrote makedots.m in MATLAB to take a 6x6 pixel symbol and output all 64 combinations as an image ready to send to the character map conversion website. By keeping them in a clever order in PROGMEM, we could get our desired 18x12 output character by using 6 bit flags per character and then just adding the address of the blank to the sum. To get an 18x12 block from 6 pieces of 6x6 data, we just take the majority vote of the 6 pixels, with ties going to blanks.
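As a rough illustration of the sub-character scheme, the sketch below packs six on/off cells into a 6-bit index offset by the address of the blank glyph, and reduces the same six cells to a single block by majority vote with ties going to blank. The bit ordering, the `BLANK_ADDR` value, and the function names are assumptions and are not taken from the project source.

```c
#include <stdint.h>

/* Hypothetical address of the all-blank sub-character glyph in the
   OSD character memory; the real value depends on the character map. */
#define BLANK_ADDR 0x40u

/* cells[] holds the six 6x6 cells of one character (3 rows x 2 columns),
   nonzero meaning "dot on". Bit i of the index is cell i (assumed order). */
uint8_t subchar_address(const uint8_t cells[6])
{
    uint8_t flags = 0;
    for (uint8_t i = 0; i < 6; i++) {
        if (cells[i]) {
            flags |= (uint8_t)(1u << i);
        }
    }
    return (uint8_t)(BLANK_ADDR + flags);
}

/* Majority vote over the six cells: returns 1 only when more than
   three cells are on, so a 3-3 tie resolves to blank. */
uint8_t block_majority(const uint8_t cells[6])
{
    uint8_t on = 0;
    for (uint8_t i = 0; i < 6; i++) {
        if (cells[i]) {
            on++;
        }
    }
    return (uint8_t)(on > 3);
}
```

Because the 64 glyphs sit in flag order, no lookup table is needed at run time: the flags are the offset.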

NTSC Sampling

As one might imagine, getting a video signal into the ATmega644 proved to be quite a challenge. Talking to our flash ADC was rather trivial, since its only input from the microcontroller was a clock. Things got less trivial when we realized the suggested clock minimum frequency was 5MHz, which is 1/4 of our own clock speed. We set up Timer 2 to control an output bit, so it could run independently of the CPU. Prior to using the PCB, we settled for 1.25MHz as a balance between noise and temporal resolution. Once we had the PCB, running the ADC clock at 5MHz worked best.

NTSC signal behavior. [Stanford EE 281, Laboratory Assignment #4 "TV Paint". See References]

With the clock set up, we had an 8 bit digital version of NTSC flying at our MCU. If we tried to read it all in, we would be out of RAM in 400 microseconds. Down-sampling was a necessity. 39x60 pixels not only down-sampled nicely to the OSD, it took up just over half our RAM. So we can only hold one "frame" at a time. Loading 39x60 out of a 242x338 signal meant sampling roughly every 6th pixel in every 6th line of an NTSC frame. Lucky for us, our OSD not only output NTSC, it output the horizontal and vertical sync pulses. We connected those directly to the ATmega644's hardware interrupts, so we could get fairly accurate responses to the start of every frame and the start of every line. The "porches" gave us time to context switch to the ISRs and do the bookkeeping necessary for down-sampling. 60 samples on a 51.5us line of data meant one sample every 0.85us, which is 17 cycles. To achieve this level of accuracy, we inserted NOPs into our C code until things lined up correctly. We also used microsecond delays and NOPs to center our reads along the visible data part of the horizontal line.
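The sampling budget quoted above can be checked with a few lines of integer arithmetic. This is only a worked calculation under the figures given in the text (20MHz clock, 51.5us visible line, 60 samples, 242x338 source, 39x60 target); the function names are hypothetical.

```c
#include <stdint.h>

/* CPU cycles available per sample, rounded down.
   line_ns is the duration of the visible part of one line in nanoseconds. */
uint32_t cycles_per_sample(uint32_t f_cpu_hz, uint32_t line_ns, uint32_t samples)
{
    /* 64-bit intermediate avoids overflow in f_cpu_hz * line_ns. */
    uint64_t line_cycles = ((uint64_t)f_cpu_hz * line_ns) / 1000000000u;
    return (uint32_t)(line_cycles / samples);
}

/* Decimation step, rounded to the nearest integer: how many source
   lines (or pixels) correspond to each one that is kept. */
uint32_t decimation_step(uint32_t source_count, uint32_t kept_count)
{
    return (source_count + kept_count / 2u) / kept_count;
}
```

With these inputs the line spans 1030 CPU cycles, which leaves 17 cycles per sample, and both the 242-to-39 and 338-to-60 reductions round to a step of 6.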

To get the sampling perfect, we needed something better than the imperfect display of our NTSC output. We connected up the UART and wrote a method to dump out a frame of data on to it. However, dumping a frame of data takes almost a minute, so we had to make it do so only after a button push. This made for excellent comparison of test images to read results, which were visualized using MATLAB. We also wrote a MATLAB function to read directly from the UART, which was sometimes more convenient. Viewing our raw data on the computer helped us notice when we had only a few pixels of either porch data or bad reads.

Input Filtering

A few pixels of bad reads would have been okay, but prior to using our PCB we had more than a few bad reads. There was substantial noise all over the circuit, and since we were mostly just moving data around, this was a big problem. We tried out several different approaches to filtering. First, we screened out all bad pixel reads and replaced them with the average of their neighbors. However, bad reads often occurred in clusters, so this did not work. Using a fixed gray value as a replacement worked better. Between down sampling and the noise, our output had a lot of flickering elements. Applying a simplified Gaussian Blur gave smoother results, but that went against our general goals of shape-matching. Averaging two frames of input together would have been great, but that would have required more RAM than we had. We tried out sampling more lines (so higher vertical resolution) and averaging on the fly, but the PCB arrived and squelched noise before we could get the timing right in that code.

Output Filtering

The PCB result was sharp and flawless, but the down-sampling effect was still causing an annoying flicker. Not wanting to have to slow down our game(s) just for the sake of flicker, we came up with a method of filtering our output based on our previous output, a moving average box filter of sorts. We still didn't have the memory to duplicate even a 13x30 output frame, but by repeating characters across many addresses we could use the address itself to store information. Looking at the last two lines of our character set, you can see we increment the address when there is positive info and decrement it when there is negative info. By capping the ends (so as to not overflow or underflow), we can display a nice running average over time with minimal memory and cycle cost. Our increments and decrements are set to 3, so it takes less than 3 frames for white to black changes and it takes at least 3 positives or negatives in a row to create a noise blip.
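The capped increment/decrement idea can be sketched as a saturating counter. In the project the level is stored implicitly in the character address; here it is an ordinary integer, and the range `LEVEL_MIN` to `LEVEL_MAX` is a hypothetical choice. Only the step of 3 comes from the text.

```c
#include <stdint.h>

#define LEVEL_MIN   0   /* hypothetical: fully "off" end of the range */
#define LEVEL_MAX   9   /* hypothetical: fully "on" end of the range  */
#define LEVEL_STEP  3   /* step size quoted in the text                */

/* Moves the stored level up on a positive sample and down on a
   negative one, clamping at both ends so it never wraps around. */
uint8_t update_level(uint8_t level, uint8_t positive)
{
    if (positive) {
        if (level > LEVEL_MAX - LEVEL_STEP) {
            return LEVEL_MAX;
        }
        return (uint8_t)(level + LEVEL_STEP);
    }
    if (level < LEVEL_MIN + LEVEL_STEP) {
        return LEVEL_MIN;
    }
    return (uint8_t)(level - LEVEL_STEP);
}
```

A single noisy sample only moves the level one step, so an isolated bad frame cannot flip a block from one extreme to the other.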

To avoid output flicker from frequently updating the OSD, we don't flush our output buffer to the OSD until the end of our main loop. Keeping this output buffer around is also what lets us do time-averaging of our output to avoid flicker. Another flicker that can appear is a nauseating collection of video signal artifacts when the OSD is displaying characters that are pixel level grids. For this reason our standard background is a loose 1 out of 4 pixels instead of a tight checkerboard pattern.

Timing

In order to run our games, we needed some sort of time base. Since we had a 20MHz crystal and 20 is not a power of 2, we had to use Timer 1 to get a time base on the order of milliseconds. We settled on a 5ms time base as a balance between ISR executions and accuracy. That time base controls the game state and timers, but the main loop is actually triggered by the NTSC sync pulses. The vertical sync ISR activates the horizontal sync ISR for reading in a frame if a flag is set and the horizontal ISR sets the flag down when reading in a frame is complete. The main loop does the opposite, running when that flag is not set and raising it when finished. This way every time main executes it has fresh input. We run our tracking mode as fast as possible and our games at half that in order to make the OSD flicker less during gameplay.
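The two mechanisms in this paragraph can be sketched in portable C: the tick count behind a 5ms time base, and the flag handshake between the sync ISRs and the main loop. The prescaler of 8 used in the note below, the names, and the plain functions standing in for ISRs are all assumptions; the actual register setup is not shown in the text.

```c
#include <stdint.h>

/* Timer ticks in one period for a given CPU clock and prescaler. */
uint32_t timer_ticks(uint32_t f_cpu_hz, uint32_t prescaler, uint32_t period_us)
{
    uint64_t num = (uint64_t)f_cpu_hz * period_us;
    uint64_t den = (uint64_t)prescaler * 1000000u;
    return (uint32_t)(num / den);
}

/* 1 while a frame capture is requested or in progress, 0 once a fresh
   frame is waiting for the main loop. */
static volatile uint8_t capture_pending = 1;

/* Stand-in for the point where the line ISR finishes the last sampled line. */
void frame_capture_done(void)
{
    capture_pending = 0;
}

/* One pass of the main loop; returns 1 if a frame was processed. */
uint8_t main_loop_step(void)
{
    if (capture_pending) {
        return 0;               /* no fresh frame yet */
    }
    /* ... process the frame and update the output buffer here ... */
    capture_pending = 1;        /* request the next capture */
    return 1;
}
```

With an assumed prescaler of 8, `timer_ticks(20000000, 8, 5000)` gives 12500 ticks, which fits a 16-bit timer; in a clear-on-compare mode the compare register would hold one less than the tick count.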

We have 2 buttons for input, "toggle" and "enter", which are sufficient for a menu system. We run a de-bouncing flag-raising state machine to get meaningful input from sampling them at around 30Hz. We run a heartbeat LED at one second per cycle, so we can tell if things are working fine or not. In addition to the heartbeat LED, we keep a "Game Timer" at a 5-millisecond time base for game play purposes. We also sample the on-board ADC at 1Hz to get the specified black level, which can be set by a trimpot to tune the game(s) to different environments. This live tuning is a bit more useful than just viewing exact values from a UART frame dump and guessing at a good threshold for detection.
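A de-bouncing, flag-raising state machine of the kind described might look like the following. The four-state layout and all names are assumptions; the text only says that such a machine is sampled at around 30Hz.

```c
#include <stdint.h>

typedef enum {
    BTN_RELEASED,
    BTN_MAYBE_PRESSED,
    BTN_PRESSED,
    BTN_MAYBE_RELEASED
} btn_state_t;

typedef struct {
    btn_state_t state;
    uint8_t     pressed_flag;  /* raised once per confirmed press; the consumer clears it */
} button_t;

/* Call once per sample period with the raw pin reading (nonzero = down).
   A press is confirmed only after two consecutive "down" samples. */
void button_sample(button_t *b, uint8_t raw_down)
{
    switch (b->state) {
    case BTN_RELEASED:
        if (raw_down) {
            b->state = BTN_MAYBE_PRESSED;
        }
        break;
    case BTN_MAYBE_PRESSED:
        if (raw_down) {
            b->state = BTN_PRESSED;
            b->pressed_flag = 1;
        } else {
            b->state = BTN_RELEASED;   /* it was a glitch */
        }
        break;
    case BTN_PRESSED:
        if (!raw_down) {
            b->state = BTN_MAYBE_RELEASED;
        }
        break;
    case BTN_MAYBE_RELEASED:
        b->state = raw_down ? BTN_PRESSED : BTN_RELEASED;
        break;
    }
}
```

The flag is raised on the confirmed press rather than on the level, so holding a button down produces a single menu action.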

Menu and System State

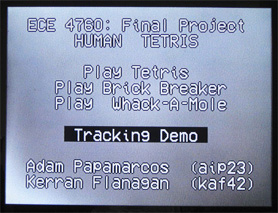

Main menu

Our menu allows users to choose between the three games and the tracking mode. One button is used as a toggle to go to the next option and the other button is used as enter to select an option. The menu is a simple state machine. Each frame it displays the title, our names, and all the options with the current selection highlighted.

The state of the system is another state machine with states for menu, each of the games, tracking mode, and a win/lose screen. Each "frame" through the main loop activates the code for the appropriate state. The initial warning message is displayed for a few seconds the first time the menu state is activated, which is when the system turns on.
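The menu and system state machines could be combined along these lines. The order of the menu options and every identifier here are assumptions chosen for illustration; the win/lose handling is reduced to a single result state.

```c
#include <stdint.h>

typedef enum {
    SYS_MENU,
    SYS_HUMAN_TETRIS,
    SYS_BRICK_BREAKER,
    SYS_WHACK_A_MOLE,
    SYS_TRACKING,
    SYS_RESULT          /* win/lose screen */
} sys_state_t;

#define MENU_OPTIONS 4  /* three games plus tracking mode */

typedef struct {
    sys_state_t state;
    uint8_t     selection;  /* index of the highlighted menu option */
} system_t;

/* Handles one frame of menu input. "toggle" advances the highlight
   with wrap-around; "enter" switches to the highlighted mode. */
void menu_frame(system_t *s, uint8_t toggle_pressed, uint8_t enter_pressed)
{
    if (s->state != SYS_MENU) {
        return;
    }
    if (toggle_pressed) {
        s->selection = (uint8_t)((s->selection + 1) % MENU_OPTIONS);
    }
    if (enter_pressed) {
        s->state = (sys_state_t)(SYS_HUMAN_TETRIS + s->selection);
    }
}
```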

Games

The game state machines are based on the 5 millisecond base of "Game Time", and the logic is independent of the frame rate. This means we can speed up or slow down our frame rate as needed and not affect the game mechanics. The main state machine simply loads the input data from the video stream then calls out to game logic for each frame. In our game modes, we display the player's body as a collection of 12x18 character sized blocks for aesthetic value and decreased noise, as opposed to the 6x6 dots used for tracking mode.

Our Human Tetris game does the following. Each shape is displayed for 5 seconds. It is loaded into memory once, then the player is displayed each frame using the moving average code (described above) to preserve the shape information. Once the shape and player are in the output buffer, simply looping through that buffer tells us how many "blocks" of the player's body are outside the shape. If more than a certain tolerance are outside, the player loses. If not, the game advances to the next shape until a victory screen is reached. The number of misses is only checked at the end of a shape's time.
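The end-of-shape check amounts to counting player blocks that fall outside the target. The sketch below uses two separate arrays for clarity, whereas the project reads both from the shared output buffer; the names and the tolerance parameter are hypothetical.

```c
#include <stdint.h>

/* Counts player blocks that fall outside the target shape.
   player[i] is nonzero where the player occupies block i;
   shape[i] is nonzero where block i lies inside the cutout. */
uint16_t count_misses(const uint8_t *player, const uint8_t *shape, uint16_t n)
{
    uint16_t misses = 0;
    for (uint16_t i = 0; i < n; i++) {
        if (player[i] && !shape[i]) {
            misses++;
        }
    }
    return misses;
}

/* The player loses when more blocks are outside than the tolerance allows. */
uint8_t player_loses(uint16_t misses, uint16_t tolerance)
{
    return (uint8_t)(misses > tolerance);
}
```

For the 13x30 game grid, `n` would be 390.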

We implemented a rather nice content pipeline for the Human Tetris shapes. One can place an arbitrary number of images in a directory, and then run the MATLAB script imgs2progmem to get out a C header file with a single array holding all the shape data in program memory. The only restrictions are that the input images must be 39 x 60 and black and white. It should be noted that the shapes are effectively stored as a bit vector (1 bit per block) in the C file. This compresses each shape to a mere 293 bytes, so we could fit a lot in program memory. The artist should keep in mind that the game currently displays shapes and players on a 13x30 grid (via averaging), so care should be taken when drawing the 39x60 sized shapes.
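The 293-byte figure follows from packing 39 x 60 = 2340 bits into bytes. A minimal sketch of such a bit vector is shown below; the row-major, least-significant-bit-first layout is an assumption, since the format emitted by imgs2progmem is not described in the text.

```c
#include <stdint.h>

#define SHAPE_ROWS  39
#define SHAPE_COLS  60
#define SHAPE_BYTES ((SHAPE_ROWS * SHAPE_COLS + 7) / 8)   /* 2340 bits -> 293 bytes */

/* Reads one cell of a packed shape (row-major, LSB first; assumed layout). */
uint8_t shape_get(const uint8_t *bits, uint8_t row, uint8_t col)
{
    uint16_t idx = (uint16_t)(row * SHAPE_COLS + col);
    return (uint8_t)((bits[idx >> 3] >> (idx & 7)) & 1u);
}

/* Writes one cell of a packed shape. */
void shape_set(uint8_t *bits, uint8_t row, uint8_t col, uint8_t value)
{
    uint16_t idx = (uint16_t)(row * SHAPE_COLS + col);
    uint8_t mask = (uint8_t)(1u << (idx & 7));
    if (value) {
        bits[idx >> 3] |= mask;
    } else {
        bits[idx >> 3] &= (uint8_t)~mask;
    }
}
```

On the AVR the array would live in program memory, so the byte read in `shape_get` would go through `pgm_read_byte` from `<avr/pgmspace.h>` instead of a plain array access.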

To demonstrate the extensibility of our shape tracking code, we also implemented a few other games:

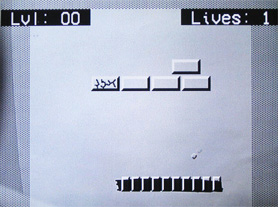

Example of Brick Breaker game

Our first additional game is Brick Breaker. The game world consists of bricks (3 characters wide), a bouncing ball, and the player's "bar" which is represented by character-sized blocks as in Human Tetris. The ball is actually on a 39x60 grid and is displayed as such. The ball bounces indefinitely and consistently. If it falls off the bottom of the screen the player loses a life. If it strikes a brick it damages the brick. If a player destroys all the (breakable) bricks before losing, the player will advance to the next level.
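The ball's movement on the 39x60 grid can be sketched as a one-cell step with reflection off the side and top walls. This is a simplified illustration that assumes a velocity of one cell per step on each axis and omits the brick and player collisions; the struct and function names are hypothetical.

```c
#include <stdint.h>

#define GRID_ROWS 39
#define GRID_COLS 60

typedef struct {
    int8_t row, col;    /* position on the 39x60 grid */
    int8_t drow, dcol;  /* velocity, assumed to be +1 or -1 per axis */
} ball_t;

/* Advances the ball one step, reflecting off the left, right, and top
   walls. Returns 1 if the ball has fallen off the bottom of the grid. */
uint8_t ball_step(ball_t *b)
{
    int8_t next_col = (int8_t)(b->col + b->dcol);
    if (next_col < 0 || next_col >= GRID_COLS) {
        b->dcol = (int8_t)-b->dcol;
        next_col = (int8_t)(b->col + b->dcol);
    }
    int8_t next_row = (int8_t)(b->row + b->drow);
    if (next_row < 0) {
        b->drow = (int8_t)-b->drow;
        next_row = (int8_t)(b->row + b->drow);
    }
    b->col = next_col;
    b->row = next_row;
    return (uint8_t)(b->row >= GRID_ROWS);
}
```

A return value of 1 is where the game would deduct a life and reset the ball.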

We have also added the Whack-a-Mole game to our project. The mole is composed of 2 rows of 3 characters, giving it a 36x36 pixel footprint. The moles are randomly drawn and removed, loosely based on the current time. The player must get his/her block representation onscreen to collide with a mole in order to "whack" it. Doing so removes the mole from the screen and awards the player with points. When the game time expires, the player's score and any remaining moles are visible.

Results

Speed and Accuracy

We can read in and display an image of 39x60 pixels at 30 frames per second. That is the meaningful maximum, since NTSC is actually only 30 frames per second of information. The signal runs at 60 fields per second, with each field carrying every other line of the frame. We only read on every other vertical sync to de-interlace the signal. Our output tends to flicker a little as a result of down-sampling (as opposed to averaging) the NTSC image data. There is currently a little streaking in the output where characters are shown due to noise in the OSD.

Real image, captured image, and interpreted image after applying threshold.

Tracking Demo

An object being tracked, with its interpretation displayed in real-time

To demonstrate our speed and accuracy, we wrote a tracking demo that simply reads the NTSC signal and displays our full resolution output as fast as it can. In object tracking mode, we simply clear the output buffer, load in the input at 39x60 resolution, and display that. This demonstrates the accuracy and speed we were able to achieve.

At 30 frames per second, there is minimal lag on our interpretation of the image. We do not do any filtering in tracking mode. There is a little flicker along the edges due to our down-sampling method. This tracking mode proved very handy for general debugging and especially for getting the timing right for reading in an NTSC frame.

Game Safety and Usability

Anyone capable of movement can play the games in this project, and difficulty can be adjusted by adjusting one's distance from the camera. In this way the game can be adjusted for players of various sizes. The game is also only looking for dark colors, so light colored materials can be used to exclude parts of the image. Brick Breaker is a nice alternative to Human Tetris for any physically disabled players that might stumble upon our game.

Conclusions

Results vs. Expectations

When we drafted our proposal, our vision of the finished product was very similar to the device we have created. Many of the same hardware devices and software techniques we planned on using ended up in our final design. The main hardware drawback we experienced was that the NTSC decoder we had originally planned on using was too fast for our MCU. Instead we had to select and use an ADC which would run independently and which we could sample whenever we chose. In effect, since we only cared about the black-and-white signal, this was all we needed to achieve the same sampling effect we had originally planned on.

We have exceeded our expectations in both spatial and temporal resolution. We originally hoped to just barely have enough resolution for a stick figure and to process the image once every few seconds. We currently have enough resolution to recognize each other in debug images and are processing it all many times a second.

Project Extensions

This project had a very short time frame. As such, there are a number of improvements we wish we could have implemented, but did not have time to add.

One element is our shape recognition capability. Currently, the extent of our shape recognition is simply applying a threshold to a grayscale image, to create a black and white representation of the scene. In software, we could add a fairly simple algorithm for more intelligently detecting humans or other large important objects. This could be done by taking the black and white threshold image and searching for the largest few clusters of pixels. This would remedy the problem of background objects incorrectly being identified as part of the player(s), which had caused some issues while demoing. This was temporarily fixed by adjusting the threshold to be very high, but this proved to be a poor representation of the player, as seen in some demo photos. More advanced image analysis could also be done, so long as there was enough idle time in the MCU. With more intelligent object recognition, the shapes of the player would be much more well-defined.

Our image resolution was constrained almost entirely by the MCU's small amount of internal memory. The combination of the external ADC and the running clock rate proved to be perfectly capable of taking enough samples to fill up the image at the given resolution. We had to insert assembly-level stalls to slow the sampling of the NTSC signal down to the correct rate, to achieve an even sampling across the entire line. To capture a higher resolution image, we could have used either external memory or some sort of image compression. External memory may have been hard to interface with, since pieces of the image would have to be sent while the sampling is taking place. This could have been done, for example, by sending data from a single line to memory during skipped lines, since not every line is captured. If there is not enough time between sampled lines, then data could be sent across multiple frames or take advantage of interlacing, since presumably sequential frames are highly correlated. However, this would add a lot of complexity to both hardware and software. Perhaps better would have been some sort of basic image compression, even as simple as only storing the threshold image. While sampling is being done, the threshold could have been applied directly to the samples, so that only a single Boolean bit would be stored for each individual pixel. This would compress our internally stored image by a factor of 8 (since 8 bits were used for each pixel of the grayscale image), without any loss of functionality. Using either of these techniques, we could have increased the spatial resolution of our image, perhaps to achieve better object definition.
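The threshold-while-sampling idea proposed above could look like the following sketch, which stores one bit per pixel instead of one byte. It describes the suggested extension, not code that exists in the project. Treating samples darker than the threshold as "on" follows the earlier note that the game looks for dark colors; the function name and bit order are assumptions.

```c
#include <stddef.h>
#include <stdint.h>

/* Thresholds n 8-bit samples and packs the results 8 per byte, LSB first.
   A sample below the threshold (darker) becomes a 1 bit.
   Returns the number of bytes written to out. */
size_t pack_thresholded(const uint8_t *samples, size_t n,
                        uint8_t threshold, uint8_t *out)
{
    size_t bytes = (n + 7) / 8;
    for (size_t i = 0; i < bytes; i++) {
        out[i] = 0;
    }
    for (size_t i = 0; i < n; i++) {
        if (samples[i] < threshold) {
            out[i >> 3] |= (uint8_t)(1u << (i & 7));
        }
    }
    return bytes;
}
```

At the current 39x60 resolution this would shrink the stored frame from 2340 bytes to 293, leaving room for roughly eight times as many pixels in the same RAM.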

To make the game more immersive, we could have added sound. Currently there is no audio feedback on any level, which feels strange in a video game. Sound effects would be fairly straightforward to add, but would require hardware changes to support the audio circuitry and speaker connection. Adding sound would make the game much more natural feeling and enjoyable, potentially deepening gameplay.

Conforming to Standards

Our project uses standard NTSC input and therefore the camera could be swapped. It also produces standard NTSC output, so any device capable of displaying that can be used.

We also use SPI internally. This is not exactly a standard, which probably explains why we only have it working in one direction.

Intellectual Property

We used open source code licensed under GPL v2 as the basis for our OSD SPI driver. The GPL v2 states that any code linking against the GPL v2 code must also be within the GPL v2 license. This probably limits our ability to monetize the project as it stands, since ownership cannot be claimed on GPL v2 code.

Tetris and its artwork are copyrighted and trademarked, so at the least we would have to change the name and some artwork in order to capitalize on our design. Brick Breaker is a new name for Breakout. There is likely some ownership of the original design, but many knock-offs currently exist.

Tetris ® and © 1985~2010 Tetris Holding. Tetris logo, Tetris theme song and Tetriminos are trademarks of Tetris Holding. The Tetris trade dress is owned by Tetris Holding. Licensed to The Tetris Company. Game Design by Alexey Pajitnov. Logo Design by Roger Dean. All Rights Reserved. All other trademarks are the property of their respective owners [Tetris.com].

Breakout is an arcade game developed by Atari, Inc and introduced on May 13, 1976 [Wikipedia].

The games we implemented are someone else's IP. Our driver is GPL-licensed, so no one can make money on our code unless they rip that out first. As Cornell University undergraduate students, we own our original ideas. If this project were to be published, the Tetris aesthetics would have to be replaced.

Ethical Considerations

We have made our best effort to conform to the IEEE Code of Ethics in the design and execution of this project.

Our project is essentially a motion tracking device for the consumer entertainment market. Since it is a class project, it has been created with no intention of profit and in accordance with the beliefs of the GPL. This combination of facts means it was created purely for learning and for fun. Therefore it seeks only to make the world a better place.

The game is based on video output, which means there is a chance it will trigger photosensitive seizures in some users. We have added a warning before the menu screen to alert users to this. We also take that opportunity to warn players about the dangers of motion-based games. Extreme play motions have been known to sometimes cause harm or damage. However, when played safely, our game(s) will improve the health of the user through the physical and mental activity involved.

We have been honest in the reporting of our results, and have strived to write code that does not lie. Our reported resolution is accurate, and our frames per second benchmark is as accurate as our clock will allow.

Because our game is based on video input, a user’s appearance and physical fitness will affect their interaction with the software. For this reason, we recommend that users consult their doctor before playing and wear dark colors to aid the camera in tracking them.

Legal Considerations

Our project is a simple video home entertainment device. It does not release excessive EMF and should not cause any interference with other electronics. We have placed warnings in the game about the dangers involved in playing.

Appendices

A. Source Code

Source files

- Lab5.c (20KB) – all the ISRs, initialization code, tracking output code, and main state machine

- human_tetris.c (6KB) – human tetris game logic and data handling

- brick_breaker.c (8KB) – brick breaker game logic including physics and data handling

- whack_a_mole.c (5KB) – whack-a-mole game logic and data handling

- max7456_learn.c (15KB) – contains code for writing to the OSD's progmem

- max7456_spi.c (9KB) – our OSD driver

- uart.c (5KB) – uart code from Bruce Land

- LICENSE.TXT (18KB) – GNU general public license

Header files

- lab5.h (3KB) – time constants and input and output functions

- Levels.h (1KB) – progmem array storage of brick breaker levels

- Shapes.h (9KB) – progmem array storage of human tetris levels

- macros.h (1KB) – register setting and mathematical macros

- human_tetris.h (1KB) – exposes game state machine function

- brick_breaker.h (1KB) – exposes game state machine function

- whack_a_mole.h (1KB) – exposes game state machine function

- max7456_characters.h (176KB) – progmem arrays for all our custom characters

- max7456_learn.h (3KB) – exposes our functions for sending custom characters to the OSD

- max7456_spi.h (5KB) – exposes useful OSD driver functions

- uart.h (1KB) – exposes necessary UART functions

MATLAB scripts

- makeDots.m (2KB) – produces the 64 different 18x12 characters for arrangements of 6x6 dots

- imgs2progmem.m (2KB) – takes a directory full of images and outputs C code shape arrays for brick breaker

- cap.m (1KB) – used to grab MCU's image dump from serial interface

Download all files: code.zip (44KB)

B. Schematics

Hardware schematic [full-size image]. Download schematic file: humantetris.sch (36KB).

Custom printed circuit board layout [full-size image]. Download pcb file: humantetris.pcb (31KB).

C. Parts List

| Part | Source | Unit Price | Quantity | Total Price |
|---|---|---|---|---|
| ATmega644PA (8-bit MCU) | Empire Technical | sampled | 1 | $0.00 |
| MAX7456 (on-screen display) | Maxim-IC | sampled | 1 | $0.00 |
| TLC5540 (flash ADC) | Texas Instruments | sampled | 1 | $0.00 |
| CM-26N (CMOS color camera) | SparkFun | $31.95 | 1 | $31.95 |
| custom PCB | ExpressPCB | $25.00 | 1 | $25.00 |
| 12V power supply | owned | $5.00 | 1 | $5.00 |
| color TV | owned | owned | 1 | $0.00 |
| RCA video cable | owned | owned | 1 | $0.00 |
| RCJ-014 (RCA jack) | DigiKey | $0.66 | 1 | $0.66 |
| HC49US (27MHz crystal) | DigiKey | $0.63 | 1 | $0.63 |
| header pins | lab | $0.05 | 15 | $0.75 |
| jumper | lab | lab | 1 | $0.00 |
| MP200 (20MHz crystal) | lab | lab | 1 | $0.00 |
| 2.1mm power jack | lab | lab | 1 | $0.00 |
| 1N4001 (1A rectifier) | lab | lab | 1 | $0.00 |
| LM340T-5 (5V regulator) | lab | lab | 1 | $0.00 |
| SPDT slide switch | lab | lab | 1 | $0.00 |
| push button (NO) | lab | lab | 3 | $0.00 |
| 10μF capacitor | lab | lab | 4 | $0.00 |
| 0.1μF capacitor | lab | lab | 8 | $0.00 |
| 22pF capacitor | lab | lab | 2 | $0.00 |
| 100kΩ resistor | lab | lab | 1 | $0.00 |
| 10kΩ resistor | lab | lab | 2 | $0.00 |
| 1kΩ resistor | lab | lab | 3 | $0.00 |
| 300Ω resistor | lab | lab | 1 | $0.00 |
| 100Ω resistor | lab | lab | 3 | $0.00 |
| 75Ω resistor | lab | lab | 2 | $0.00 |
| Total | | | | $63.99 |

D. Tasks

This list shows specific tasks carried out by individual group members. Everything else was done together.

Adam

- Hardware design

- High-level design and circuit schematics

- Built target board for testing

- Hardware prototyping on whiteboard

- Custom PCB design and soldering

- Project website

Kerran

- Software design

- SPI driver for OSD

- Custom character set

- NTSC processing

- Menu system and games

- Game content pipelines

Please see our daily laboratory log for more details: dailylog.txt (4KB)

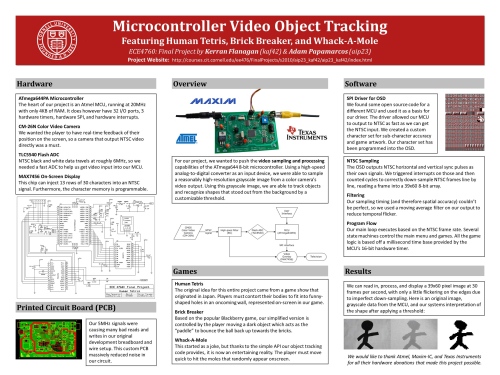

E. Poster

We created a project poster for ECE day, which can be viewed and downloaded below:

ECE Day project poster [full-size image]. Download PowerPoint file: poster.pptx (1.8MB).

References

This section provides links to external reference documents, code, and websites used throughout the project.

Acknowledgements

We would like to thank ECE 4760 Professor Bruce Land and all TA staff (especially our lab TA Jeff Melville), for help and support during the labs and over the course of the project. We thank them for the long lab hours and the parts stocked in the lab.

Additional thanks go to Mary Byatt, for the idea of incorporating Human Tetris in our microcontroller project.

We would also like to thank several vendors for parts donations. We sampled several ATmega644PA chips from Empire Technical Associates, MAX7456 chips from Maxim-IC, and TLC5540 chips from Texas Instruments. We also sampled several other parts from Maxim-IC and Texas Instruments (including op-amps, decoders, etc) which did not make it into our final design.