Glove MIDI Controller

Anson Dorsey (ajd53), Eric Gunther (ecg35), Jonathon Smythe (jps93)

ECE 4760


Introduction

MIDI (Musical Instrument Digital Interface) is a protocol that was established in the early 1980s to standardize the emerging field of digital instruments. A MIDI controller is a piece of hardware or software, typically a keyboard, that allows a user to connect to a digital synthesizer and play any instrument. Our final project for ECE 4760 was to prototype a glove MIDI controller that transmits MIDI signals corresponding to individual finger taps. We attached a piezoelectric vibration sensor to each of 8 fingers on a pair of leather driving gloves. The gloves are attached to a microcontroller, which processes the taps and outputs MIDI signals via a standard MIDI output port. Additionally, the user can select from a variety of note mapping schemes and presets for the gloves via a user interface in MATLAB.

High Level Design

Rationale

Two of our group members are avid musicians, and we sought from the beginning of the semester to do something music related. Our initial plans were to develop a music visualizer, but we quickly jumped on to the glove MIDI controller following a late night brainstorming session. After researching the Final Projects web page we deemed the project feasible and novel enough to satisfy our requirements. In particular, we were inspired by the Trumpet MIDI Controller project.

Background Math

Before beginning, we first needed to determine that the microcontroller could perform the necessary tasks in real time. Prior to calculation, our chief concern was whether the ADC could sample fast enough to obtain a good representation of 8 sensor signals with minimal latency. We were advised by course staff that the ADC clock should be prescaled by at least 32 to allow for settling of the lower bits; with a 16 MHz system clock this corresponds to an ADC clock of 500 kHz. Treating that figure as an upper bound on the sample rate and dividing it among 8 sensors gives at most 62.5 kHz per sensor, or a period of 16 microseconds. After taking a few snapshots of sensor "presses" on the oscilloscope, we determined that the electrical response to a press was about 25 ms long. Due to hardware constraints discussed below, we deemed a sample period of 125 microseconds appropriate; cycling through all 8 sensors then yields a per-sensor sample period of 1 ms.

The MIDI protocol specifies a digital signal with a baud rate of 31.25 kbaud (+/- 1%). Since each MIDI byte is framed by a start bit and a stop bit and every bit occupies one baud period, the bit clock equals the baud rate. We prescaled a timer by 8 and triggered an ISR (resetting the count to zero on trigger) at a count of 64.

16 MHz / 8 = 2 MHz; 2 MHz / 64 = 31.25 kHz.

The ISR, running at 31.25 kHz, clocks out one bit per interrupt from any data bytes remaining in the buffer.

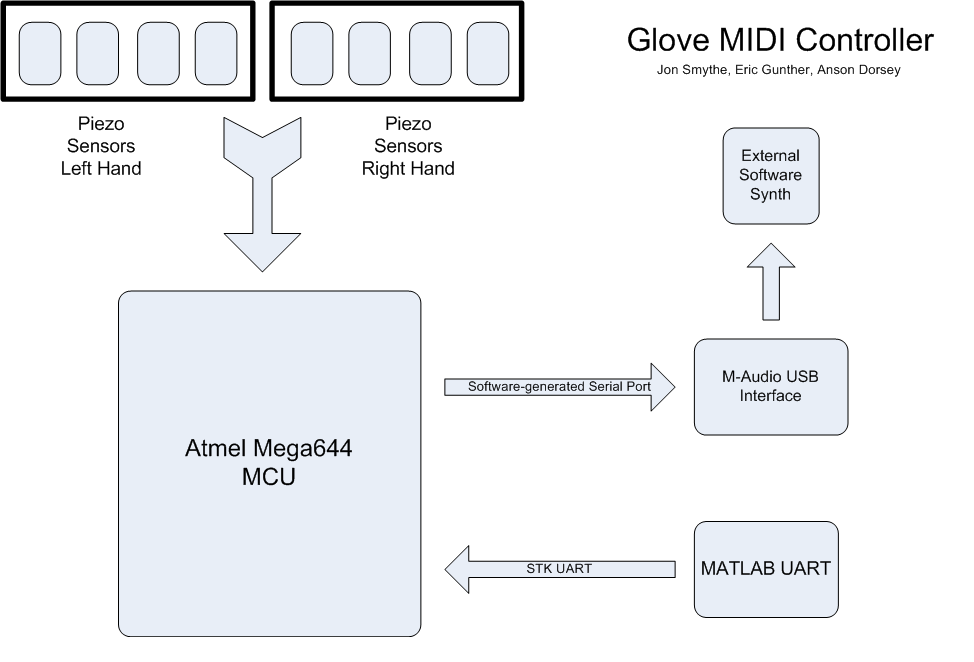

Logical Structure

Hardware/Software Tradeoffs

Our budgetary limitations forced us to choose a sensor that was sub-optimal for our needs. The inexpensive piezo sensors we chose are designed to sense vibrations, not pressure from a touch; they sense differential flex, so the output voltage level is directly proportional to how fast the sensor tab flexes. As a result, we had to compensate for the sensor output by performing a kind of integration in software. Memory was not a huge concern for us, so we were able to initialize several arrays to track the prior states of the sensors. However, state-tracking introduces an inherent latency to our project; for every prior state we tracked, 1 ms of latency was added to the time it took for an action to turn into a MIDI message.

Since real-time operation was our goal, latency was one of our chief concerns. The human ear starts to lose the ability to detect a delay once it falls below about 30 milliseconds. The software drivers that take our project's output and synthesize sound generate about 10 milliseconds of latency (sometimes less), so we aimed to generate no more than 15 ms of latency ourselves. The direct implication of low-latency software is that the MCU had to make a decision regarding user input within 15 samples, and as such we spent a great deal of time calibrating our code in order to make the controller effective.

The MIDI controller projects from past years generally used the hardware UART to send MIDI messages. We wanted to be able to control the mapping of the sensors to any MIDI note via an external client (MATLAB), so the UART was dedicated to interfacing with another computer. As a result, we were forced to code the message output on a pin, bit-by-bit. We needed to control the output in an ISR, so our handmade serial output was designed to be very lightweight; we never encountered timing problems with the ISR, sampling sensors, or input interpretation.

The Mega644 has a single ADC fed by an 8-channel multiplexer, so we were forced to sample the sensors sequentially. By using a slightly lower-than-ideal sampling rate, we were still able to sample each sensor at a rate sufficient to process user input.

Applicable Standards

MIDI

Because our prototype is a fully functioning MIDI controller, it must meet the standard MIDI 1.0 specification. This requires us to send data through our MIDI port at a baud rate of 31.25 kbaud and to ensure that the data follows the correct format. Most MIDI messages (specifically the ones that we are concerned with) consist of a status byte followed by one or two data bytes. The status byte includes the message type (note on, note off, pitch wheel, etc.) as well as the channel (MIDI supports up to 16 simultaneous channels over one connection). All our data travels on channel 0, which should not be a concern considering our controller does not function as a MIDI thru device. If a user wants to use our controller in conjunction with other MIDI controllers, they should make sure it's the first one in the chain.

Hardware Design

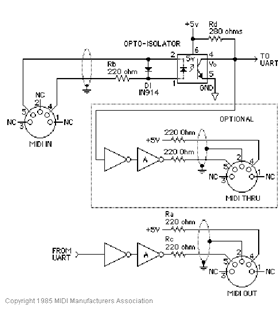

The MIDI protocol specifies the interface hardware according to the following schematic:

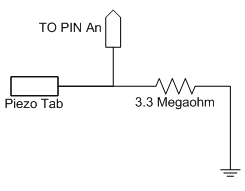

The piezoelectric sensors typically produced a voltage range of 25V-50V for a normal press and up to 70V when struck very hard, as measured on a high-impedance oscilloscope. Since the ADC can only convert voltages in the range 0-5V, we needed to reduce the range of sensor output. The specifications of the sensor indicate that the piezoelectric element produces current on the order of nanoamps; we treated the sensor as a current source and fine-tuned resistor values from an initial order-of-magnitude guess until we found the value that provided a good range for a reasonable "press." This was an inexact science, so it took a good deal of time, but the resulting circuit worked perfectly for our needs. Please see below for an example circuit, shown for only one sensor.

Software Design

Microcontroller Code

Sampling the sensors

We needed to sample the piezo sensors at an appropriate rate, while allowing enough processing time to transmit MIDI over our serial port. Initially we sampled the sensors as fast as possible, but quickly decided to time the samples using the hardware timer TIMER0. Every 0.125 milliseconds we acquire a digital sensor value from the ADC, allowing us to sample the entire setup in 1 ms. The ADC accepts input from any of the 8 pins on PORTA, so each time a sensor is sampled we advance to the next ADMUX channel setting, cycling through all 8 inputs.

In order to register a valid press we had to create a system similar to the debouncing used in earlier labs. If the ADC returns a sensor value of over 10 (out of 255), we begin incrementing a counter for that sensor. Once the counter indicates the voltage has been high for 10 milliseconds, we create a MIDI message. The noteWait counter is then started; it counts to 200 milliseconds before allowing another press to be registered. Depending on whether or not the sustain mode is on, these presses either always send note-on messages, or alternately send note-on and note-off messages.

| Threshold | Value |
|---|---|
| sensorValue | 10 (ADC counts, out of 255) |
| sensorCt | 10 (ms) |
| noteWait | 200 (ms) |

Creating and Sending a MIDI Message

Creating a MIDI message is a relatively simple task. Each buffer entry is only a single byte, and each acknowledged sensor press requires three bytes of information (note-on status byte, note number, and velocity). The createMIDImessage method writes each byte in order to the MIDI_buffer array of bytes. Given that there were few memory constraints on our project, the MIDI message buffer can hold 32 bytes of information, which allows for the unlikely case of all 8 sensors pressing down and registering a note (24 bytes in total) before a single message could be sent.

The MIDI_buffer was an extremely important part of sending out the messages without any losses. At any point the user could create multiple MIDI messages before others had been sent out the serial port. In fact, just one sensor press corresponds to a message of 3 bytes, which already requires 3 slots in the buffer. The buffer operates as a ring buffer, such that when the last slot in the buffer is filled, the next slot moves back to the beginning. Thus, we had to establish two pointers: an output tracker to remember which location in memory contains the next byte to be sent, and a write tracker to remember which byte is open for the next created MIDI message. Each time a message is sent or created, the respective tracker is incremented to the next slot in memory and wrapped around at the end.

Sending a MIDI message required us to write our own serial communication port. Granted, we could have done this through the UART, which outputs on pins D.0 and D.1, but we decided early on to use our spare port for communication with a computer interface. Since MIDI operates at 31.25 kbaud (+/- 1%), we decided to create an appropriately timed ISR using TIMER2. The baud rate for MIDI corresponds specifically to the rate at which individual data bits are sent, so each byte frame (1 start bit, 8 data bits, 1 stop bit) takes about 320 microseconds to send. We chose to send the bits through pin C.0, each time cycling through the 8 bits in each memory slot.

User Interface

Since the controller connects to an interface on the computer, we also had to consider scanning the COM port for input from MATLAB. We found that this caused problems with sending MIDI output, so we created two modes (playing and note mapping). When switch 7 (connected to PORTB0) is pushed, the user enters the note mapping mode, which requires a selection from the computer interface. Once the user clicks a program button (either manual or preset), the glove re-enters the playing mode.

The user interface is a MATLAB program that runs on a computer connected to the microcontroller via a serial COM port. The interface opens a serial connection at a baud rate of 9600 (the same as used for Hyperterm connections in previous labs) and sends messages when the user clicks one of the two program buttons (manual and preset). The interface displays dropdowns for each finger so the user can map any note to each finger, and includes presets for major scales, minor scales, and drum kits. The drum kit is made possible by the General MIDI (GM) specification, which requires synthesizers to map certain percussive sounds to standard MIDI notes. We also implemented a checkbox for the user to turn the sustain mode on or off.

Development and Testing

The development of this prototype began with the piezo vibration sensors. We quickly came to the realization that the sensors were not particularly suited for our project, but we decided to make do and see if we could come up with a solution. The sensors output a voltage of up to 70 V (for almost 90 degree deflection), but generally output around 30 V. Since the MCU operates on 0-5 V, we had to create a voltage divider that would bring the input to this level. This initially caused a problem because the sensors have very large output impedance. Our initial tests were with a comically long series connection of 1 Mohm and 100 kohm resistors, and we eventually settled on 3.3 Mohm resistors to create the appropriate signal conditioning.

Once we were able to print the digital values of the sensor input on Hyperterm, we began to assess how we could establish a finger tap. Timing was not a concern, as we were able to sample the sensors at a very fast rate (1 ms per sensor) and we recalled from earlier labs that a human cannot realistically press faster than a period of 30 ms. Thus we developed a system of counting the number of samples above a certain threshold for each sensor. This allowed us to turn lights on and off with a press of a sensor, though we noticed that releases were inconsistently registered as pushes. All initial presses switched an LED, but only some of the releases did the same. This was a problem we would come to face later.

The next step was to send MIDI messages through our MIDI out circuit. This would have been easy through the UART but we needed our spare COM port to interface with the computer (to adjust settings on the glove). Thus we decided on creating our own serial port to send data at the specific baud rate required by MIDI. It was easy to create signals at this frequency but actually getting them to register with the computer was a challenge. Because we did not have a proper MIDI controller or synthesizer, we had to rely on a MIDI to USB connection with our computer and the oscilloscope. After failing to get our MIDI messages received, we chose to turn the computer into a MIDI controller and scope its output. After some tweaking we managed to create identical signals but still could not send MIDI messages that could be interpreted. Ultimately this problem was caused by our MIDI out circuit which did not have the appropriate buffers on our MCU output.

Now that we were able to play simple tunes on our computer, we decided to develop an interface in MATLAB that could map any note to each finger, as well as map presets for different scales and drum kits. This interface required connection through the spare serial port, though this was not able to occur in parallel with MIDI processing. Thus we created a two state system where the MCU could operate in a programming mode or a playing mode. This was triggered by a switch which was surprisingly difficult to implement given we were quickly running out of ports.

We decided to make an attempt at adding new sensors to the project to increase the functionality of the controller. First we thought of integrating the output from an accelerometer placed on the top of each glove in order to establish a variety of octaves. However, this was rendered unnecessary by the note mapping interface and deemed unfeasible due to how noisy the accelerometer data would be. Then we attempted to make use of a spare pressure sensor that we found in the lab. We attached a thin hose to the sensor and made it so that the user could adjust the pressure with their mouth. When we scoped the output from this makeshift sensor we found it to be rather noisy and with a very low dynamic range. Even if we amplified the signal, the noise would make the output useless in creating an appropriate MIDI message. Our last, and most promising, attempt was with ESD foam, which changes resistance when pressed. We were able to create a voltage divider with the ESD foam and scope the output from presses, but again the results were unusable. First off, latency was a big problem, as the output responded rather slowly to user input (on the order of a second). Also, the ESD foam had a very low dynamic range and we could only create voltage swings of about 250 mV with a lot of noise.

Finally we returned to the problem of the sensor registering a press but not necessarily a release. We found that with most MIDI instruments like piano and percussion this was not a problem, as a note on would eventually decay as in real life. However, synthesizers require note-off messages because they don't have a naturally occurring decay. Since we couldn't rely on releases to get the job done, we decided to implement a sustain mode that would turn the note on after one press and off after another press. This was difficult because some releases would cause a note-off message to be sent. Eventually we implemented a waiting period where a sensor could only register a note-on message once every 50 ms. This wasn't a foolproof solution, but it definitely helped.

Results

Speed of execution and accuracy

The glove responds immediately to a finger tap, so our scheme to avoid latency problems was successful. Since we are operating with a MIDI synthesizer, all musical output is as accurate as the given MIDI synthesizer allows. Our MIDI signals are output with near-perfect accuracy, and the bit timing always falls within the tolerance of the required 31.25 kbaud rate. Below are two figures showing how accurate our MIDI messages are (note that the discrepancy in the middle of the scope is the note byte). The left is actual MIDI out from a MIDI controller and the right is our signal. As per MIDI specifications, our signal falls within 1% accuracy.

Usability

The usability of our product is debatable. It's certainly functional but it takes a while to get acquainted with the sensitivity of the sensors. The glove is generally accurate though at times it fails to register a note, most likely due to the noisy sensor output.

Video

Conclusions

Meeting Expectations

We successfully met our initial goal, creating a MIDI controller glove that was playable. Once we reached this milestone, we tried to implement add-ons. We successfully added a serial control interface that allowed for note re-mapping via MATLAB. Our next goal was to add more sensors that could be used to implement additional MIDI messages such as aftertouch and, ideally, pitch modulation. We experimented with sensors that we could find for free in the lab; the first thing we tried was an air pressure sensor that you could control with your mouth to modulate up (increase pressure) or modulate down (decrease pressure). We made an admirable attempt, but ultimately failed because the sensor was designed for pressures far below 1 atm. Next, we worked to make a dual-footpad sensor from ESD foam. This also proved useless, as the ESD foam was too slow to respond as well as being horribly imprecise, leading to undesirable pitch bends.

We feel that we met expectations. It would have been nice to use sensors better suited to our purposes, as opposed to sensors that were inexpensive enough to fit in the budget. In retrospect, if we had decided on an idea sooner we could have made a stronger attempt to sample better sensors.

Standards

Our design conformed perfectly to the MIDI protocol; we know this because we played various instrument patches using an M-Audio USB interface into GarageBand.

Intellectual Property

We didn't draw directly from anyone else's code, or use code from the public domain. We did, however, draw some ideas from the "Trumpet MIDI Controller" and "MIDI drum controller" projects from the course project archive. We doubt that there is any publishing value to our project, but it is novel enough that a private enterprise might be interested in the concept. There are, however, other people who have created designs similar enough to prevent any chance of gaining a patent.

Ethical and Legal Considerations

While designing our project we did our best to follow the IEEE code of ethics. Our project doesn't have many social or legal ramifications, but we felt that it could be viewed as a consumer device. As such, it must be completely safe to be used by untrained hands. There weren't any unsafe voltages involved, but we took great care to design our project in a way that no other products would be damaged, especially expensive synthesizers or computers (software synthesizers). However, our product isn't for everyone. Animal enthusiasts might take issue with our base platform, a leather glove. Furthermore, some people with disabilities might not be able to enjoy the MIDI glove controller. Despite our best efforts we couldn't design something that is totally fair to all people; by nature the MIDI glove requires the full use of the hands and fingers. We have aspired to design inventive solutions to the problems we faced while working on this project. We hope that we have made our own small contribution to improving the understanding of technology. We carefully noted where we drew on the code and designs of others, so that they could be properly attributed upon the completion of our project. We are most pleased with our ability to work as a team, as suggested by IEEE principle #10; we feel that we all benefitted from this experience.

Appendices

Code

GloveMIDIController.zip

Cost Details

| Num | Item | Part Number | Vendor | Cost ($) |
|---|---|---|---|---|
| 1 | STK-500 | N/A | ECE 4760 Lab | 15.00 |
| 1 | White board | N/A | ECE 4760 Lab | 6.00 |
| 1 | Leather gloves | N/A | Donated* | 0.00 |
| 8 | Piezo Film Vibration Sensors | MSP1006-ND | Digikey | 19.20 |
| 1 | DIN 5-Pin Female | CP-2350-ND | Digikey | 0.72 |
| 1 | MIDI Cable | N/A | Donated** | 0.00 |
| 1 | MIDI to USB Converter | N/A | Donated** | 0.00 |
| 1 | 10-pin Jumper Cables | N/A | ECE 4760 Lab | 0.00 |
| 1 | 2-pin Jumper Cables | N/A | ECE 4760 Lab | 1.00 |
| 2 | 1-pin Jumper Cables | N/A | ECE 4760 Lab | 0.00 |
| 1 | LM358 Op-Amp | N/A | ECE 4760 Lab | 0.00 |
| | Resistors | | | |
| 8 | 3.3 Mohm | N/A | ECE 4760 Lab | 0.00 |
| 2 | 220 ohm | N/A | ECE 4760 Lab | 0.00 |
| | Total | | | 41.92 |

* Gloves donated by Mr. Smythe

** MIDI cables donated by Kevin Ernste

Task Division

Anson - circuitry, MCU programming (specifically software serial port), pressure sensor experiments

Eric - MCU programming, MATLAB interface, website, ESD foam experiments

Jon - circuitry (specifically all work on glove and sensor calibration), testing

Acknowledgements

First and foremost we'd like to thank Bruce Land for teaching us everything we now know about microcontrollers and for making this class as interesting as it possibly can be. Thank you to our TA Tim Sams and lab consultant Jeff Melville for their help during our lab period throughout the semester. Also, thank you to Kevin Ernste of Cornell's Digital Music department for providing us MIDI cables and adapters, and to Mr. Smythe for giving up his rather nice leather driving gloves.

References

Data sheets

Piezo Film Vibration Sensors

DIN 5-Pin Female

LM358 Operational Amplifier

Vendor Sites

Digikey

Sources

Trumpet MIDI Controller (MIDI Output)

Physiological Simulator (MATLAB Interface)

The MIDI Specification

Cornell Digital Music