Introduction

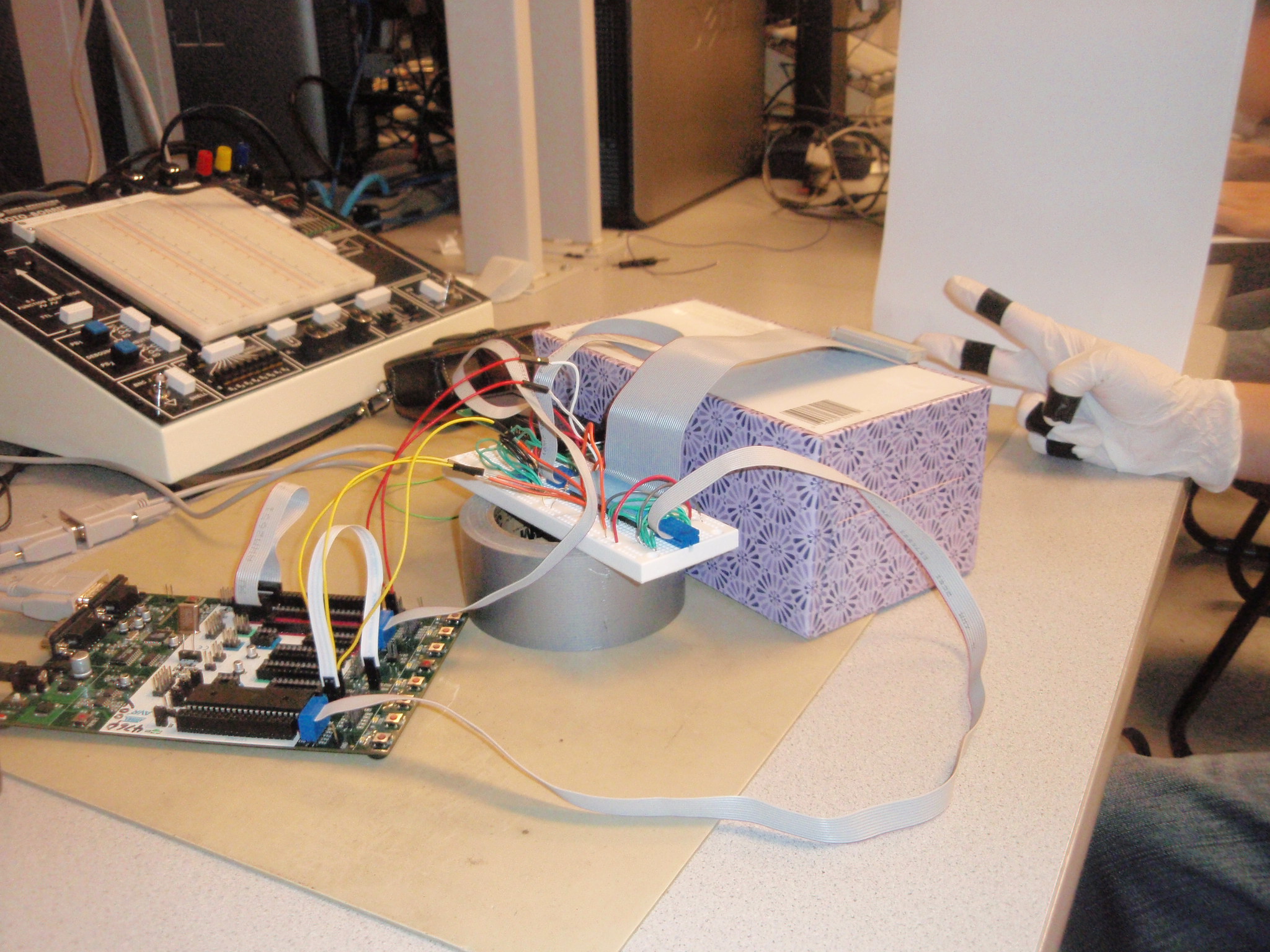

We created a rock paper scissors game that uses a CMOS camera to determine which hand the human player plays. The player wears a glove with black tape on each finger. When the player plays a hand, the camera captures an image, and the system decides from the number of black pixels present whether the player has played rock, paper, or scissors. The system also generates a random hand, determines whether the player has won, and displays the result on an LCD screen.

High Level

We started this project out trying to build a camera-based simultaneous localization and mapping system based on image profiles. However, after getting the camera to work, we realized that the speed at which pixels are read from the camera into the STK500 is much too slow for our purposes. Thus, we decided to switch gears and work on a different project that also utilizes the CMOS camera we had working. To this end, we decided to make a rock paper scissors game, as it is a game that everyone knows how to play.

Our project has two main components, the camera and the LCD screen. The LCD screen outputs the current state of the game to the player, while the camera reads in the image to determine what hand the player has played.

After the player presses a button to start the game, the screen outputs "ROCK", "PAPER", "SCISSORS", "SHOOT!!!". At the "SHOOT!!!" cue, the CMOS camera begins reading in the image. As the camera reads in the grayscale pixels, it thresholds each pixel to either black or white. We can do this because we are using a controlled environment, in which the player wears a white glove with black tape on the fingers, and the background is completely white. Thus, we know that black pixels will appear only on the fingers. Using the number of black pixels, we determine which hand the player has played. At the start, the game is calibrated by having the player show scissors to the camera. The camera reads in this image to determine how many black pixels it should expect for a scissors hand, as well as a bound for the differences between the three hands.

In terms of tradeoffs, we chose the CMOS camera because we obtained the module from a previous ECE 4760 group, which let us cut its cost by one third of the original price. We also debated between a black/white TV screen and an LCD screen for displaying the game's status. However, we determined that continuously generating NTSC signals for the TV would be too much of a burden on the STK500, and thus decided to go with the LCD screen. To the best of our knowledge, there are no standards, patents, copyrights, or trademarks relevant to our project.

Hardware Design

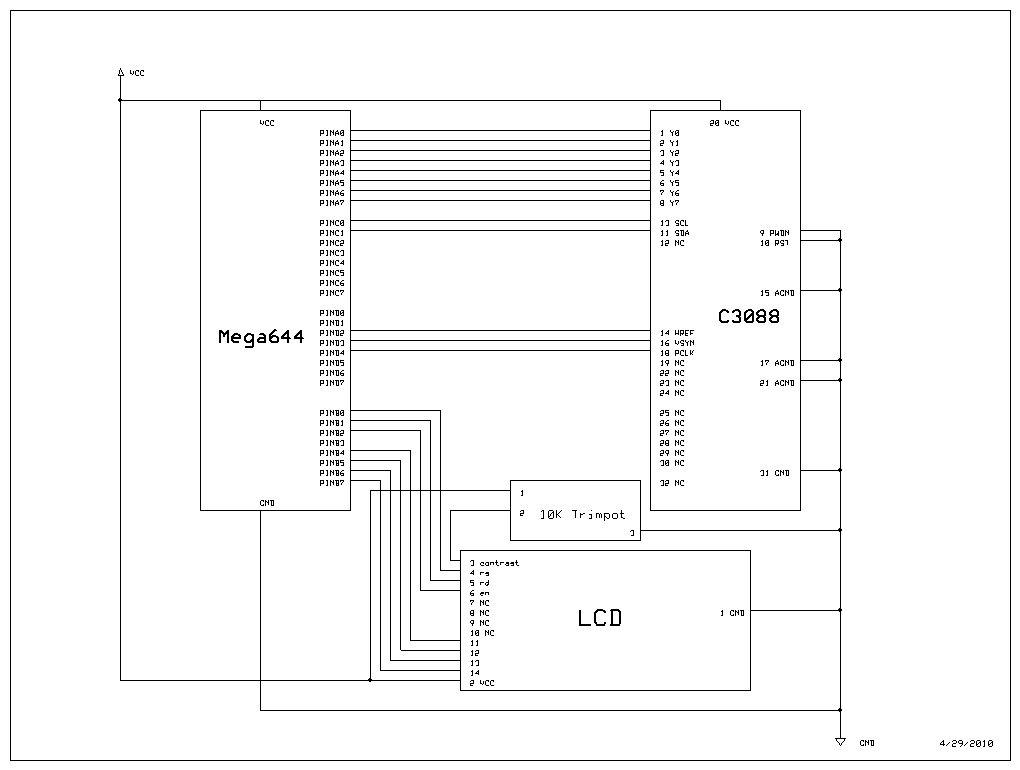

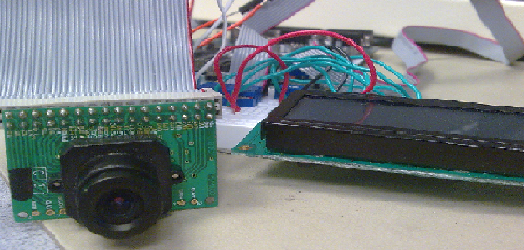

The hardware of the system consists of a CMOS camera and an LCD display. For the CMOS camera, we chose the C3088 color sensor module with digital output, which uses OmniVision's OV6620 CMOS image sensor. We chose this camera because it provides an easy-to-use digital interface, and because most of the camera projects in previous years used this module. Knowing that previous groups had used this camera, we were confident that we would also be able to get it to work. The pin connections for the camera are included in the schematics in Appendix B, where port A of the STK500 is connected to the digital output bus from the camera. The I2C lines between the camera and the Mega644 are connected through pins C0 and C1 on the STK500. We also set pins D2, D3, and D4 to take the timing signals HREF, VSYNC, and PCLK, respectively, from the camera. The LCD is connected to port B on the STK500 in the same way as in the previous labs. The game environment is set up using two empty boxes, one holding the camera in place and the other supporting the white screen. The camera and the screen are about 10 inches apart.

Software Design

Main Operation

The overall structure of the code is based around a simple state machine. The state machine starts by having the user calibrate the camera. This is done by having the camera capture an image of the player showing scissors, and then counting the number of black pixels in this image. This count later serves as the threshold for deciding which hand the player is playing. After calibration, the state machine goes into a start state and waits until the player presses the start button. When the start button is pressed, the state machine transitions to the game state and cues the player to show a hand. After the image of the player's hand is captured, the system counts the black pixels in it and uses this count to decide what the player has played. The system then compares the player's hand to a randomly generated computer hand and displays whether the player wins or loses. Finally, the state machine transitions back to the start state and waits for another button press to start the game over again. Throughout the various states, the LCD screen is updated to notify the player of the current status of the game.
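The transitions described above can be sketched as a small pure function. This is only an illustration: the state names, the `next_state` function, and its two boolean inputs are hypothetical and are not taken from camera.c.

```c
#include <stdbool.h>

/* Hypothetical state names; the identifiers used in camera.c are not shown in this report. */
typedef enum { STATE_CALIBRATE, STATE_START, STATE_GAME } game_state_t;

/* Compute the next state.
 * capture_done:   true once the current image capture has finished
 * button_pressed: true while the start button is pressed */
game_state_t next_state(game_state_t s, bool capture_done, bool button_pressed)
{
    switch (s) {
    case STATE_CALIBRATE:
        /* Stay here until the scissors calibration image has been captured. */
        return capture_done ? STATE_START : STATE_CALIBRATE;
    case STATE_START:
        /* Wait for the player to press the start button. */
        return button_pressed ? STATE_GAME : STATE_START;
    case STATE_GAME:
        /* After capture, recognition, and result display, wait for a new game. */
        return capture_done ? STATE_START : STATE_GAME;
    }
    return STATE_CALIBRATE; /* Unreachable for valid states; safe default. */
}
```

Keeping the transition logic free of hardware access makes it easy to check on a PC before running it on the microcontroller.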

Camera Configuration

To configure the camera for our needs, we had to program its registers to adjust a few settings. This is done using the I2C protocol on the Mega644's TWI (Two-Wire Interface). Although the Mega644 can provide internal pull-up resistors, we chose to use two external 5.1 kΩ resistors to leave less room for unaccountable errors. The code for programming the camera's registers was taken from the 3D Scanner group from last year, and involved two files: i2cmaster.h and twimaster.c. The camera settings were determined by looking at the datasheet for the OV6620. Some of the most notable choices we made were adjusting contrast to maximum to generate a stark difference between dark and light pixels for our threshold operation, using grayscale images, and choosing a frame size of 176x144. We chose this frame size because the Mega644 has only 4KB of SRAM, and since each pixel is a byte, this is not a lot of space to work with. More details on the actual size of the image stored are given below in the Image Capture section.

Image Capture

Capturing an image from the camera proved to be much more difficult than we expected. The camera outputs timing signals that notify the MCU when a new frame, line, and pixel are available: VSYNC, HREF, and PCLK, respectively. However, after extensive testing, we realized that the MCU is not fast enough to read in a frame line by line. Because of this, we opted to capture images vertically: we read in one pixel per line every time a new frame is available. This means that we must read as many frames as there are pixels per line (176 frames), which greatly slows down the process. In addition, the hand cannot move significantly while the camera is capturing the image. Since the Mega644 has only 4KB of SRAM, we chose to downsample the input image by 4x3, resulting in an image of size 44x48. At one byte per pixel, this uses 44 * 48 = 2,112 bytes (about 2.1 KB), which keeps us well under the limit and leaves ample space for other variables. We experimented with higher resolution images, but the recognition performance is already decent at this downsampled size. As the MCU reads in each pixel from the camera, it thresholds it to black or white, setting every pixel with a value greater than 16 to white and everything else to black. At the same time, it keeps a running count of the black pixels.
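The downsampling, thresholding, and counting steps can be sketched on a PC as follows. This is a minimal sketch under stated assumptions: it reads from a full 176x144 frame already held in memory (which the Mega644 cannot do), it downsamples by simply keeping every 4th column and every 3rd row, and the function and macro names are hypothetical rather than taken from camera.c.

```c
#include <stdint.h>

#define FRAME_W   176                  /* camera frame width in pixels  */
#define FRAME_H   144                  /* camera frame height in pixels */
#define STEP_X    4                    /* keep every 4th column         */
#define STEP_Y    3                    /* keep every 3rd row            */
#define IMG_W     (FRAME_W / STEP_X)   /* 44 */
#define IMG_H     (FRAME_H / STEP_Y)   /* 48 */
#define THRESHOLD 16                   /* values above this are white   */

/* Downsample a row-major grayscale frame, threshold each kept pixel,
 * store 1 (white) or 0 (black) in out, and return the black-pixel count.
 * Assumption: plain decimation; the report does not say how the 4x3
 * downsampling was actually done. */
uint16_t threshold_and_count(const uint8_t *frame, uint8_t out[IMG_H][IMG_W])
{
    uint16_t black = 0;
    for (int y = 0; y < IMG_H; y++) {
        for (int x = 0; x < IMG_W; x++) {
            uint8_t p = frame[(y * STEP_Y) * FRAME_W + (x * STEP_X)];
            if (p > THRESHOLD) {
                out[y][x] = 1;         /* white */
            } else {
                out[y][x] = 0;         /* black */
                black++;
            }
        }
    }
    return black;
}
```

The output buffer is 48 rows of 44 bytes, matching the 2,112 bytes discussed above; the maximum possible count, 2,112, fits comfortably in a 16-bit counter.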

Hand Recognition

The method for recognizing hands is very simple. We experimented with machine learning approaches involving linear classifiers such as perceptrons and support vector machines. The great thing about linear classifiers is that we can train them offline in a program such as Matlab and simply store the learned weights in the MCU. The MCU then only has to extract features and do a few multiplication and addition operations to compute the classification. However, we soon realized that using learning algorithms was overkill for our problem. Thus, we settled on simply looking at the number of black pixels in the image. The most important part of this kind of recognition is the calibration step. In the calibration step, we capture an image of scissors to figure out how many black pixels should be seen for a scissors hand. Since the scissors hand shows two fingers, we choose the correction term as half of this amount, which estimates the number of black pixels seen on a single finger. With the correction in place, any count between one and three fingers' worth of black pixels is classified as scissors, anything greater as paper, and anything less as rock.
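The classification rule above amounts to two comparisons against the calibrated scissors count. The sketch below is illustrative only: the names `hand_t` and `classify_hand` are hypothetical, and treating counts exactly on a boundary as scissors is an assumption, since the report does not say how ties at the bounds are handled.

```c
#include <stdint.h>

typedef enum { HAND_ROCK, HAND_PAPER, HAND_SCISSORS } hand_t;

/* Classify a hand from its black-pixel count.
 * scissors_count is the count measured during calibration (two fingers). */
hand_t classify_hand(uint16_t black_count, uint16_t scissors_count)
{
    uint16_t one_finger = scissors_count / 2;           /* correction term          */
    uint16_t lower = scissors_count - one_finger;       /* about one finger's worth */
    uint16_t upper = scissors_count + one_finger;       /* about three fingers' worth */

    if (black_count < lower) return HAND_ROCK;          /* fewer than one finger    */
    if (black_count > upper) return HAND_PAPER;         /* more than three fingers  */
    return HAND_SCISSORS;                               /* assumed inclusive bounds */
}
```

For example, with a calibrated scissors count of 200, counts from 100 to 300 are read as scissors, counts below 100 as rock, and counts above 300 as paper.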

Generating Random Hands

To generate random hands, we simply keep a counter that continuously increments itself as the MCU operates. We take this number modulo 3 to generate a random hand, as we assume that the time at which the player presses the button to start the game will be sufficiently random.
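A minimal sketch of this idea, together with the win/lose comparison mentioned in the Main Operation section, is given below. The 8-bit counter width, the numbering of the hands, and the names `hand_from_counter` and `judge` are assumptions made for illustration, not details from camera.c.

```c
#include <stdint.h>

/* Assumed numbering: each hand beats the hand numbered one below it (mod 3). */
typedef enum { HAND_ROCK = 0, HAND_PAPER = 1, HAND_SCISSORS = 2 } hand_t;
typedef enum { RESULT_TIE, RESULT_PLAYER_WINS, RESULT_COMPUTER_WINS } result_t;

/* Map the free-running counter, sampled at the button press, to a hand. */
hand_t hand_from_counter(uint8_t counter)
{
    return (hand_t)(counter % 3);
}

/* Decide the round: paper beats rock, scissors beats paper, rock beats scissors. */
result_t judge(hand_t player, hand_t computer)
{
    if (player == computer) {
        return RESULT_TIE;
    }
    /* The difference is 1 (mod 3) exactly when the player's hand beats the computer's. */
    return ((player + 3 - computer) % 3 == 1) ? RESULT_PLAYER_WINS
                                              : RESULT_COMPUTER_WINS;
}
```

If the counter really were 8 bits wide, the three hands would not be exactly equally likely, because 256 is not a multiple of 3 (one hand gets 86 of the 256 values, the others 85 each); for a casual game this bias is negligible.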

Design Results

The system we designed is capable of playing rock paper scissors with few or no errors in determining the hand that the player played. The biggest problem with our system is the speed of execution. Because we are forced to capture an image by vertical lines, and thus wait 176 frames to capture an entire image, the player must keep his hand in front of the camera for around 20 seconds while the Mega644 captures the image. This is quite annoying, and the player cannot move his hand during this time. In addition, because of the small field of view of the camera, the player's fingers must be in a small, specified region so that the camera can see the black tape. However, as long as these two constraints are satisfied, the system is able to correctly identify the player's hand every time.

In terms of safety, our game performs fine. Because of the slow and steady pace of the rock paper scissors game, there is no risk of injury or other hazards. In addition, there is little interference as the only two major pieces of hardware we used were the CMOS camera and the LCD screen. Also, our game can be played by any person with the use of their hands and fingers, as long as they put on the glove with black tape provided.

Conclusions

Although we were successful in making the camera capture the image, and the gesture recognition algorithm worked as accurately as expected, the flow of the game is slowed down because the system requires too much time to take the image due to the limited processing speed of the Mega644. This issue could be improved by using a camera module that supports serial communication instead of using a port of the microcontroller to transfer the image data. In this project, we referenced and used a TWI library created by Peter Fleury called TWIMaster, which was also used in previous projects. In addition, we referenced ideas for the vertical line image capture from Inaki Navarro Oiza. The other parts of the game were designed by applying the knowledge we gained in lecture and from other engineering experiences. We believe this project could be extended to other low-cost, microcontroller-based computer vision applications.

This project follows all the guidelines of the IEEE Code of Ethics. No aspect of this project is harmful to any individual or to the community. No conflict of interest is involved in this project, since the rock paper scissors game is simple and fair. The game is made so that any individual can play, and no form of bribery is involved, since the computer chooses its hands randomly. The project improves the understanding of technology in that it combines computer vision algorithms, which are usually computationally intensive, with low-cost microcontrollers. We have acknowledged all the sources of help in this report, and we are more than willing to accept any criticism of our work.

Appendices

Appendix A: Budget and Parts List

| Part | Source | Unit Price | Quantity | Total Cost |
|---|---|---|---|---|
| STK500 | 4760 Lab | $15 | 1 | $15 |
| C3088 Camera Module | From Older Project | $15 | 1 | $15 |
| LCD (16x2) | 4760 Lab | $8 | 1 | $8 |
| Breadboard | 4760 Lab | $6 | 1 | $6 |
| 40-pin Flat Cable | 4760 Lab | $0 | 1 | $0 |
| 40-pin DIP Socket | 4760 Lab | $0.5 | 1 | $0.5 |
| 1-pin Jumper Cables | 4760 Lab | $1 | 5 | $5 |
| Latex Glove | Previously Owned | $0 | 3 | $0 |
| Wire, Misc | 4760 Lab | $0 | -- | $0 |
| Total | -- | -- | -- | $49.5 |

Appendix B: Schematics

Appendix C: Code Listing

camera.c

i2cmaster.h

twimaster.c

Appendix D: Distribution of Tasks

- Game system design

- Circuit schematic design

- TWI library implementing

- Gesture recognition algorithm design

- Website design

Ling-Wei Lee

- Initial Software design

- Circuit schematic design

- TWI library implementing

- Gesture recognition algorithm design

- Final report

Kevin Tang

References

Datasheets

Atmel ATMega 644 Microcontroller

C3088 camera module

OmniVision OV6620 optical sensor

Vendor

We did not purchase any parts through vendors.

Utilized Libraries/Code Borrowed

3D Scanner by Ryan Dunn and Dale Taylor

Background Sites/Paper

Digital Camera Interface by Inaki Navarro Oiza

Acknowledgements

We would like to thank Bruce Land for his help on getting the I2C working for the camera. We would also like to thank our TA Tim Sams for his help. Finally, we'd like to thank Peter Kung for lending us the CMOS camera.

Latex glove with black tape on the fingers to simplify the gesture recognition algorithm

C3088 CMOS camera module used for capturing the gesture of player