"A standalone face recognition access control system"

project soundbyte

We created a standalone face recognition system for access control. Users enroll in the system with the push of a button and can then log in with a different button. Face recognition uses an eigenface method. Initial testing indicates an 88% successful login rate with no false positives.

There are currently commercially available systems for face recognition, but they are bulky, expensive, and proprietary. Our goal was to create a portable low-cost system. Our design consists of an Atmel ATmega644 8-bit microcontroller, a C3088 camera module with an OmniVision OV6620 CMOS image sensor, Atmel's AT45DB321D Serial Dataflash, a Varitronix MDLS16264 LCD module for output, a 9-volt battery, and a small wooden structure for chin support.

High Level Design

Our design is split into three different processes: training, enrolling, and logging in.

Training

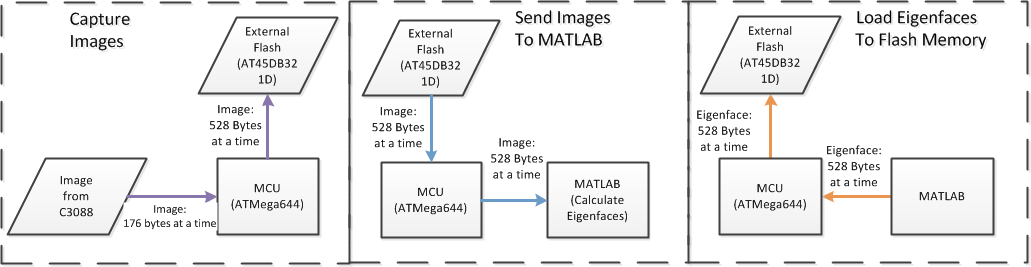

The training process is the only time we use a computer; once this step is complete, the system is completely standalone. If we were to sell this system as a consumer product, we would ship it pre-trained. Training teaches the system to key in on the most important features of a face. To do this, we captured a large number of facial images and sent them to Matlab, which determines the distinguishing features of a face. We use Matlab to create the eigenfaces, which are the principal components of the training set (see Principal Component Analysis under Background Math). Because an image is too large to be held in the microcontroller's RAM, we capture it one line at a time, sending each line to flash before the camera sends the next line. We then send the image from flash through the microcontroller to Matlab over the serial port. Once all of the training images are in Matlab, the eigenfaces and average face are created. Finally, the eigenfaces and average face are sent through the microcontroller to flash memory. From then on, the system is completely standalone.

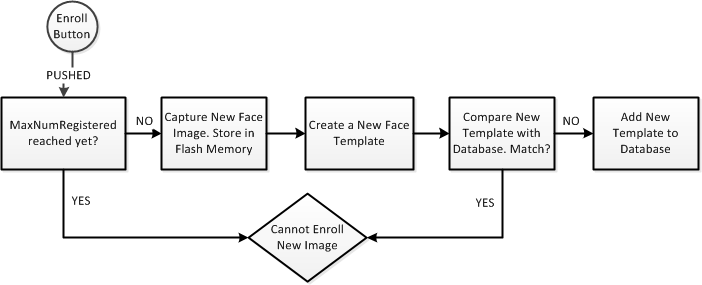

Enrolling

Before a user can log in to the system, he first needs to register his face and enroll it in the database. To enroll, the user presses the enroll button, which captures an image. Before the image is captured, the current number of system users is checked; if the maximum number of users has been reached, the new user cannot be enrolled. We set our maximum to 20 users. If the maximum hasn't been reached, the user's face image is captured and sent to flash memory 176 bytes at a time (as in the training process), where it is stored temporarily for calculation. The image and eigenfaces are then pulled back to the microcontroller 528 bytes at a time to calculate the new user's "template", a short vector describing the user's correlation with the eigenfaces (see Background Math for more information). The template is then compared with the previously stored templates; if the new template is too close to a previous template, the user cannot be enrolled. We define the "closeness" between two templates as the cosine of the angle between them. If there are no matches, the new template is added to the database in flash memory so that it survives a system reset.
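The two enrollment checks above (a cap of 20 users and a cosine-closeness test against every stored template) can be sketched in C as follows. This is a host-side illustration, not code from FaceRecSystem.c: the names `try_enroll`, `cosine_above`, and `enroll_result` are hypothetical, and because the text does not say which closeness threshold enrollment uses, the sketch assumes the 0.85 login threshold. In the real flow the user count is checked before the image is captured; here both checks sit in one function for brevity.

```c
#include <stdint.h>

#define MAX_USERS     20    /* enrollment cap stated in the text */
#define TEMPLATE_LEN  25    /* M = 25 eigenfaces per template */
#define DUP_THRESHOLD 0.85  /* assumption: reuse the login threshold */

typedef enum { ENROLL_OK, ENROLL_FULL, ENROLL_DUPLICATE } enroll_result;

/* True if cos(angle between a and b) > t, for t > 0.
   Comparing squares avoids a square root:
   cos > t  <=>  dot > 0 and dot^2 > t^2 * |a|^2 * |b|^2. */
static int cosine_above(const int32_t a[], const int32_t b[], int n, double t) {
    double dot = 0.0, na = 0.0, nb = 0.0;
    for (int i = 0; i < n; i++) {
        dot += (double)a[i] * b[i];
        na  += (double)a[i] * a[i];
        nb  += (double)b[i] * b[i];
    }
    return dot > 0.0 && dot * dot > t * t * na * nb;
}

/* db holds *count stored templates; on success t_new is appended. */
enroll_result try_enroll(int32_t db[][TEMPLATE_LEN], int *count,
                         const int32_t t_new[TEMPLATE_LEN]) {
    if (*count >= MAX_USERS)
        return ENROLL_FULL;                 /* database is full */
    for (int u = 0; u < *count; u++)
        if (cosine_above(db[u], t_new, TEMPLATE_LEN, DUP_THRESHOLD))
            return ENROLL_DUPLICATE;        /* too close to an existing user */
    for (int i = 0; i < TEMPLATE_LEN; i++)
        db[*count][i] = t_new[i];           /* store the new template */
    (*count)++;
    return ENROLL_OK;
}
```

Working with squared quantities keeps the test free of `sqrt`, which is convenient on an 8-bit part without a floating-point unit; the comparison is equivalent to thresholding the cosine directly.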

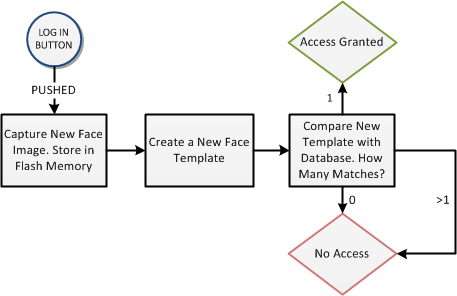

Logging in

The logging-in process is initially very similar to enrolling. The user presses the login button to take his picture, store it in flash memory, and begin the login process. Again, the newly captured image and eigenfaces are pulled from flash back to the microcontroller 528 bytes at a time to calculate the user's template. This template is compared with all of the stored templates. To be logged in, the user's template must "match" (be close enough to) exactly one saved template; otherwise the user is "denied access". Again, the cosine of the angle between two templates determines whether they match. Whether or not the user is logged in, the top three matches are displayed on the LCD.

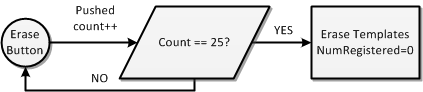

Erasing Users

We currently set the system up for at most 20 enrolled users. For demo purposes, however, we wanted to be able to erase old users and enroll new ones, so we added a third button on the back of our protoboard. The button must be held down for a full second before the function that erases the templates is called. A message on the LCD then tells the user that the enrollments have been erased.
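The text does not describe how the one-second hold is detected. One common approach, sketched here under the assumption that the button is polled from a 1 ms timer tick, is a small counter that fires once per press. The names `hold_detector`, `hold_update`, and `HOLD_MS` are hypothetical.

```c
#include <stdbool.h>
#include <stdint.h>

#define HOLD_MS 1000u  /* button must be held this long (1 s) */

/* Hypothetical hold detector, updated once per 1 ms timer tick. */
typedef struct {
    uint16_t held_ms;  /* consecutive milliseconds the button has been down */
    bool fired;        /* true once erase has triggered for this press */
} hold_detector;

/* Returns true exactly once per press, on the tick the hold reaches HOLD_MS. */
bool hold_update(hold_detector *d, bool pressed) {
    if (!pressed) {            /* released: reset for the next press */
        d->held_ms = 0;
        d->fired = false;
        return false;
    }
    if (d->held_ms < HOLD_MS)  /* count up, saturating at HOLD_MS */
        d->held_ms++;
    if (d->held_ms >= HOLD_MS && !d->fired) {
        d->fired = true;       /* caller erases the templates now */
        return true;
    }
    return false;
}
```

Requiring the release before re-arming means that holding the button for several seconds erases the templates only once.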

Standards Used

For communicating with flash memory we used the Serial Peripheral Interface (SPI). For programming the camera we used the Inter-Integrated Circuit (I2C) interface. The video signal was digital, so it was not NTSC, but understanding the NTSC standard helped us understand the camera output.

Intellectual Property

The eigenface method is in the literature, so we have no intellectual property concerns. We plan to try to publish our results, so we are fully disclosing our design.

Background Math

When one thinks of face recognition, one immediately thinks of finding features of a face: eyes, nose, ears, cheekbones. But who's to say that these are the most distinguishing characteristics of a face, and the best features by which a face should be described? And what if these features are correlated? Instead of hard-coding features for detection, we used Principal Component Analysis (PCA) to find the orthogonal features that best describe our large training set. PCA produces an orthogonal basis of principal components for the training set. These basis vectors are known as eigenfaces and can be thought of as characteristic features of a face. Every new face is then described as a linear combination of the eigenfaces, which is equivalent to projecting the new face onto the subspace they span. By using only the eigenfaces with the largest eigenvalues, we ensure that the projection retains as much of the image's face-like energy as possible. This method was proposed by M. Turk and A. Pentland in 1991.

The system is divided into three different processes: Training, Enrolling, and Logging In.

Training

The training portion of the system consists of creating the eigenfaces based on a set of training faces. The goal was to create as many eigenfaces as possible and keep the M eigenfaces that had the highest eigenvalues.

Given N training images, create a matrix where each column is a face vector of length 176 * 143 = 25168.

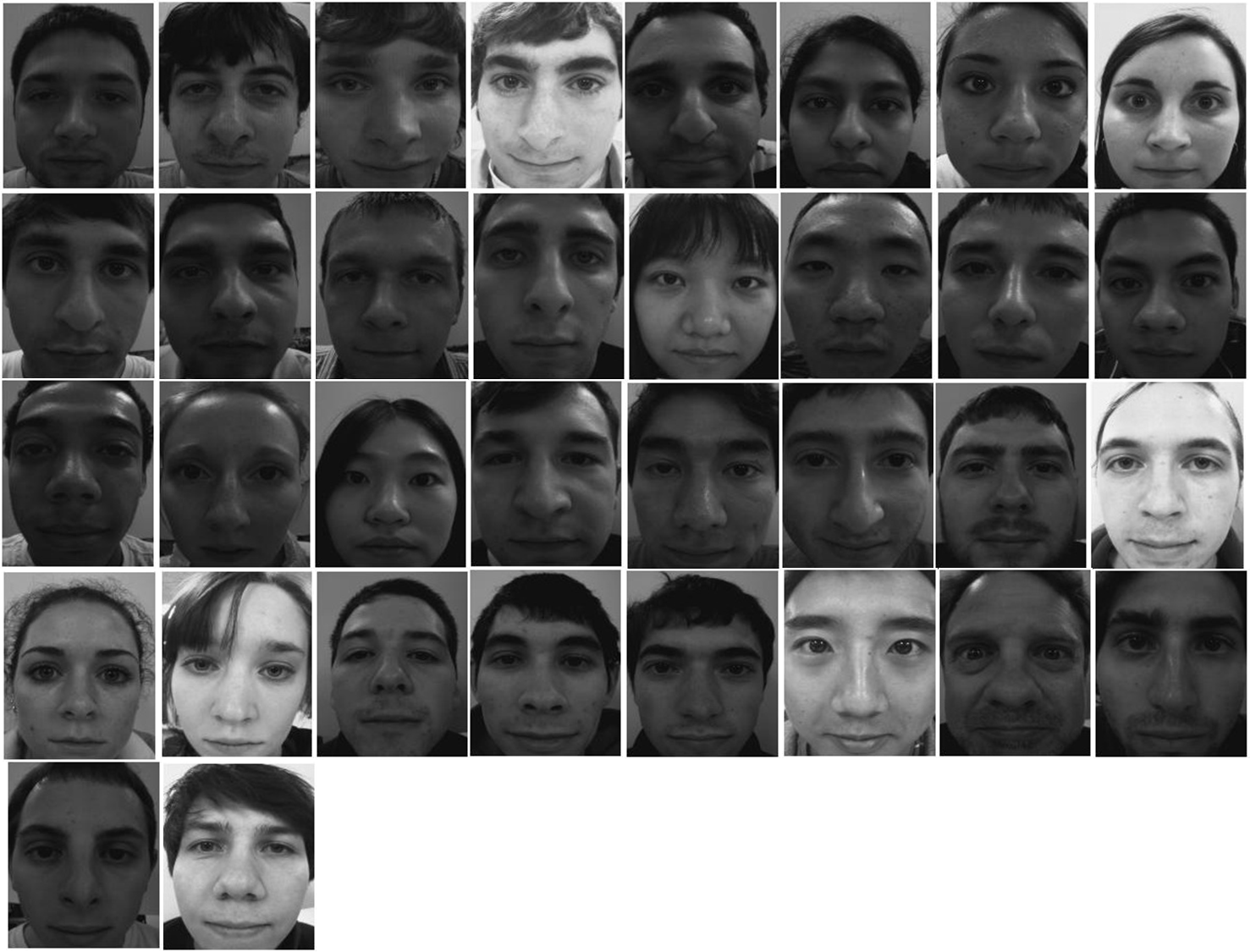

We used a total of N=40 training faces to create the eigenfaces. Below are the raw images captured (before any processing) of some of our volunteers; not all of these faces were used to create the eigenfaces.

To create the eigenfaces we use the following algorithm:

- Normalize each face:

  - Calculate the mean and standard deviation of each face. These are roughly its brightness and contrast.

  - Rescale each image so that its mean and standard deviation are closer to some desired values.

- Calculate the mean face from the N normalized images.

- Calculate the difference matrix D, whose columns are the normalized faces minus the mean face.

- We need the eigenvectors of D * D'. However, D * D' is 25168 x 25168, too large to compute directly.

- Instead, find the eigenvectors v of the much smaller N x N matrix D' * D.

- Multiply each resulting eigenvector v by D to get an eigenvector of D * D'.

- Keep as eigenfaces the M eigenvectors with the highest eigenvalues.
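The eigenvector shortcut in the last three steps rests on one line of algebra. As a worked derivation in our own notation (not the authors'), let $x_1, \dots, x_N$ be the normalized training faces, each a column vector of length 25168, and $\bar{x}$ their mean:

$$
\bar{x} = \frac{1}{N}\sum_{n=1}^{N} x_n,
\qquad
D = \begin{bmatrix} x_1 - \bar{x} & x_2 - \bar{x} & \cdots & x_N - \bar{x} \end{bmatrix} \in \mathbb{R}^{25168 \times N}
$$

$$
D^{\top} D\, v = \lambda v
\quad\Longrightarrow\quad
\left(D D^{\top}\right)(D v) = D\left(D^{\top} D\, v\right) = \lambda\,(D v)
$$

So every eigenvector $v$ of the $N \times N$ matrix $D^{\top} D$ ($40 \times 40$ here) yields an eigenvector $Dv$ of the $25168 \times 25168$ matrix $D D^{\top}$ with the same eigenvalue $\lambda$, provided $\lambda \neq 0$ (since $\|Dv\|^2 = \lambda \|v\|^2$). Scaling each $Dv$ to unit length is the usual convention for eigenfaces; the text does not say whether that step was applied.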

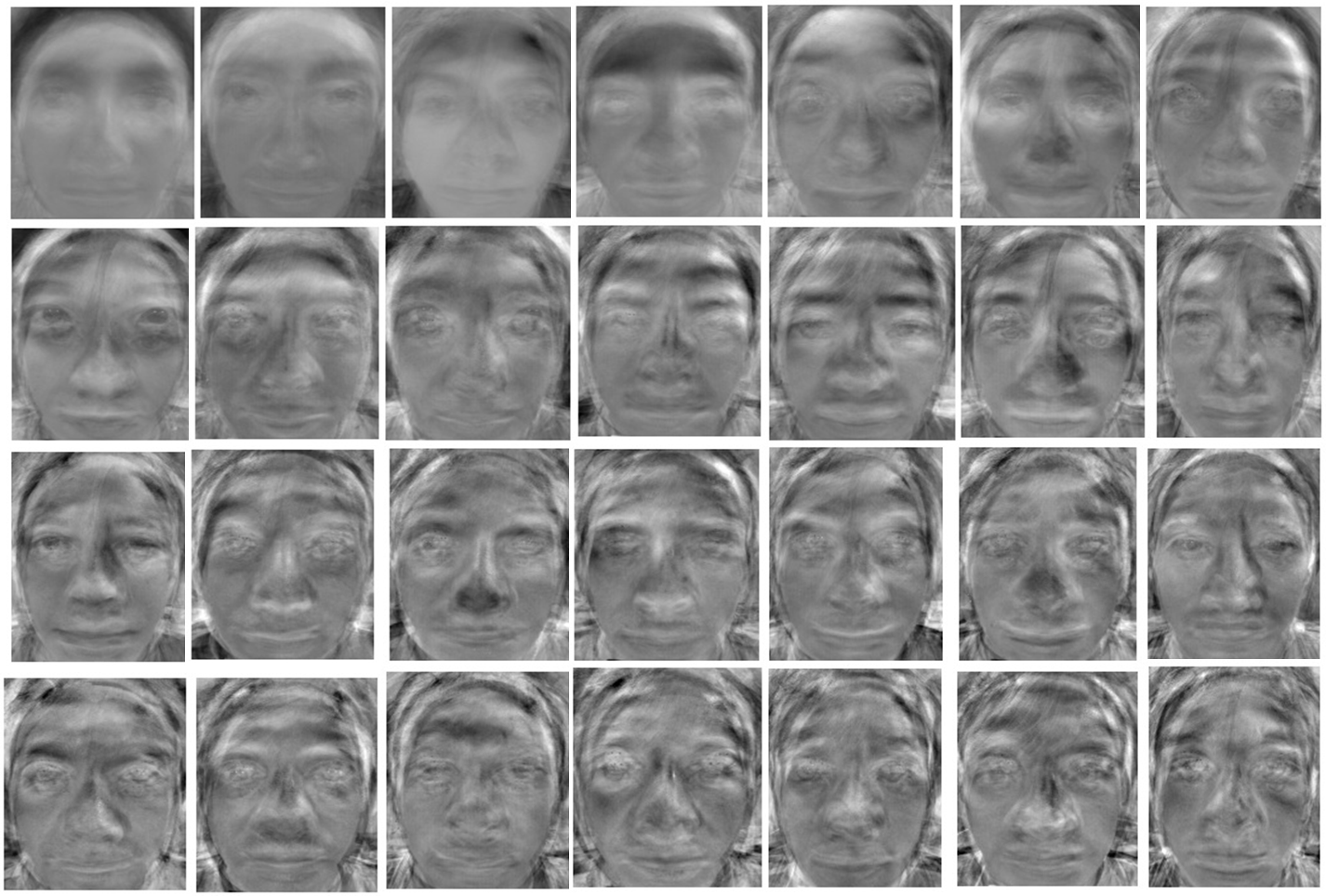

Below are 28 of the 30 eigenfaces we created. For enrolling purposes we use only the top 25 eigenfaces.

Enrolling

The enrollment process consists of creating a user "template" for comparison when he tries to log in again later. The template is an M-length vector (M is the number of eigenfaces used to create the face space) that represents the correlation of the user's face with each eigenface. We use M = 25.

To create a user template from the 176x143 face image, we use the following algorithm:

- Follow the same steps as above to normalize the new face image to the desired mean and standard deviation used in the eigenface calculation.

- Create the "difference" face by subtracting the average face from the normalized new face

- Dot the "difference" face with each eigenface. The value of the ith dot product is the ith element of the template: $T_i = F \cdot E_i$ for $i = 1, \dots, M$, where T is the template, F is the difference face, and $E_i$ is the ith eigenface.
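The three steps above can be sketched in integer-only C. This is an illustration under stated assumptions, not the authors' code: the normalization targets `TARGET_MEAN` and `TARGET_STD` are placeholders (the text gives no values), eigenfaces are assumed to be quantized to signed 8-bit values, normalized pixels are assumed to be clamped to 0..255, and whole arrays are passed in for clarity, whereas on the ATmega644 the image and eigenfaces would have to be streamed from flash in 528-byte pages. The names `make_template` and `isqrt64` are hypothetical.

```c
#include <stdint.h>

/* Placeholder normalization targets: the text does not give the values used. */
#define TARGET_MEAN 128
#define TARGET_STD  40

/* Floor of the square root of x (digit-by-digit method, integers only). */
static uint32_t isqrt64(uint64_t x) {
    uint64_t r = 0, bit = (uint64_t)1 << 62;
    while (bit > x) bit >>= 2;
    while (bit != 0) {
        if (x >= r + bit) { x -= r + bit; r = (r >> 1) + bit; }
        else              { r >>= 1; }
        bit >>= 2;
    }
    return (uint32_t)r;
}

/* Compute an m-element template from an n-pixel image.
   img:       raw 8-bit pixels of the captured face
   mean_face: average face in normalized units (8-bit)
   eig:       m eigenfaces stored back to back, n signed 8-bit values each
   tmpl:      output, tmpl[i] = (difference face) . (eigenface i) */
void make_template(const uint8_t *img, const uint8_t *mean_face,
                   const int8_t *eig, int n, int m, int32_t *tmpl) {
    /* Mean and standard deviation of this image (brightness and contrast). */
    int64_t sum = 0, sumsq = 0;
    for (int j = 0; j < n; j++) {
        sum += img[j];
        sumsq += (int64_t)img[j] * img[j];
    }
    int32_t mu = (int32_t)(sum / n);
    int64_t var = ((int64_t)n * sumsq - sum * sum) / ((int64_t)n * n);
    int32_t sigma = (int32_t)isqrt64((uint64_t)var);
    if (sigma == 0) sigma = 1;  /* flat image: avoid dividing by zero */

    /* One dot product per eigenface. */
    for (int i = 0; i < m; i++) {
        const int8_t *e = eig + (int64_t)i * n;
        int32_t acc = 0;
        for (int j = 0; j < n; j++) {
            /* Rescale the pixel to the target mean and standard deviation,
               clamped to the 8-bit range. */
            int32_t v = TARGET_MEAN + ((int32_t)img[j] - mu) * TARGET_STD / sigma;
            if (v < 0) v = 0;
            if (v > 255) v = 255;
            /* Difference-face pixel times eigenface pixel. |f*e| <= 255*128,
               so 25168 terms stay well inside int32_t. */
            acc += (v - (int32_t)mean_face[j]) * e[j];
        }
        tmpl[i] = acc;
    }
}
```

The clamp is what bounds each product, so a 32-bit accumulator is enough for a full 25168-pixel image.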

Logging In

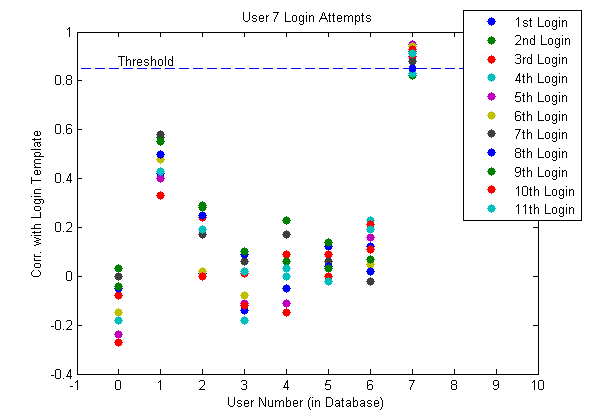

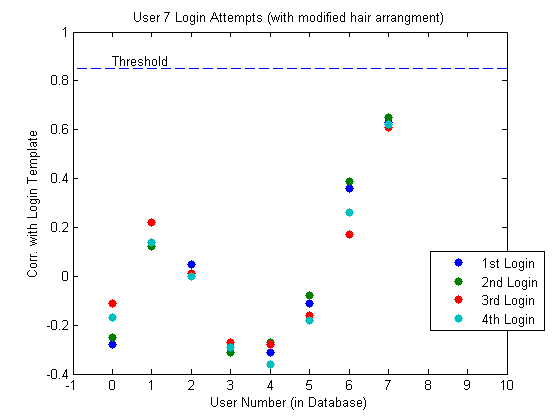

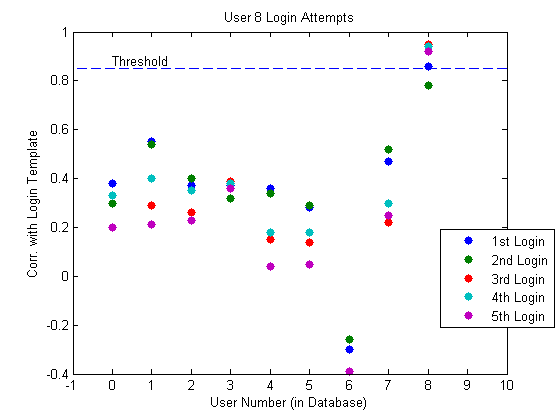

When a user attempts to log in, a new face template is created from the newly captured image as described above. This template is then compared with every saved template. Our measure of correlation is the cosine of the angle between two templates. If the correlation between two templates is above the desired threshold, a "match" has been found. After testing, we chose a threshold of 0.85, which was low enough to limit false negatives yet high enough to eliminate false positives.

To log in a user with their new 176x143 image, we used the following algorithm:

- Follow the same steps as the enrollment process to create a new template, T_new

- Calculate the correlation of T_new with each stored template and count the matches; the user is logged in only if exactly one stored template matches.
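The login decision can be sketched as follows, using $\cos\theta = \frac{T_a \cdot T_b}{\|T_a\|\,\|T_b\|}$ as the match measure. This is a hypothetical host-side sketch (`login` and `signed_cos2` are illustrative names): it counts matches against the 0.85 threshold, accepts only when exactly one template matches, and also keeps the three best users for the LCD. To avoid a square root it ranks users by the squared cosine with the cosine's sign restored, which orders users the same way the cosine does.

```c
#include <stdint.h>

#define TEMPLATE_LEN    25    /* M = 25 */
#define LOGIN_THRESHOLD 0.85  /* cosine threshold chosen after testing */

/* cos^2 of the angle between a and b, negated when the cosine is negative.
   Ordering by this value is the same as ordering by the cosine. */
static double signed_cos2(const int32_t a[], const int32_t b[], int n) {
    double dot = 0.0, na = 0.0, nb = 0.0;
    for (int i = 0; i < n; i++) {
        dot += (double)a[i] * b[i];
        na  += (double)a[i] * a[i];
        nb  += (double)b[i] * b[i];
    }
    if (na == 0.0 || nb == 0.0) return 0.0;  /* degenerate template */
    double c2 = dot * dot / (na * nb);
    return dot < 0.0 ? -c2 : c2;
}

/* Returns the matched user index, or -1 if access is denied (no match or
   more than one). top[] receives the three best users, -1 if unused. */
int login(int32_t db[][TEMPLATE_LEN], int count,
          const int32_t t_new[TEMPLATE_LEN], int top[3]) {
    double best[3] = { -2.0, -2.0, -2.0 };   /* below any possible score */
    int matches = 0, matched = -1;
    for (int k = 0; k < 3; k++) top[k] = -1;
    for (int u = 0; u < count; u++) {
        double s = signed_cos2(db[u], t_new, TEMPLATE_LEN);
        if (s > LOGIN_THRESHOLD * LOGIN_THRESHOLD) { matches++; matched = u; }
        /* Insert u into the sorted top-three list. */
        for (int k = 0; k < 3; k++) {
            if (s > best[k]) {
                for (int j = 2; j > k; j--) { best[j] = best[j - 1]; top[j] = top[j - 1]; }
                best[k] = s;
                top[k] = u;
                break;
            }
        }
    }
    return matches == 1 ? matched : -1;
}
```

Rejecting the multiple-match case treats ambiguity as a denial, which matches the design goal of never recognizing user A as user B.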

Below is a visual example of the projection of a face onto our eigenface space. The image on the left is the image captured by the C3088 camera, and the image on the right is the reconstructed linear combination of eigenfaces defined by the user's template. This person was not used to create the eigenfaces. We never actually create this reconstructed image on the microcontroller. We only store the template.
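The reconstruction in that figure is the standard eigenface projection. Assuming the eigenfaces $E_i$ are orthonormal, with $\bar{x}$ the mean face and $T_i$ the ith template element (the dot product of the difference face with $E_i$), the reconstructed normalized face is:

$$
\hat{x} = \bar{x} + \sum_{i=1}^{M} T_i\, E_i, \qquad M = 25
$$

If the stored eigenfaces were quantized to integers, a constant scale factor would be needed to undo that quantization; the text does not say how the Matlab reconstruction handled this.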

Hardware and Software

Circuit Board attached to wooden structure

Atmel AT45DB321D Flash Memory

We fastened all of our hardware together for portability. A wooden structure with a chin plate is used to hold the camera in place and to normalize the position of a user's face during image capture. An LCD is fixed to the top of the wooden structure to provide system feedback to the user. A printed circuit board connects all of the electronic components including the microcontroller, flash memory, and all three buttons. The board layout was designed by Bruce Land for ECE 4760. We reused the board from a previous group. The board is attached to the side of the wooden structure so the user can easily find and press all of the buttons.

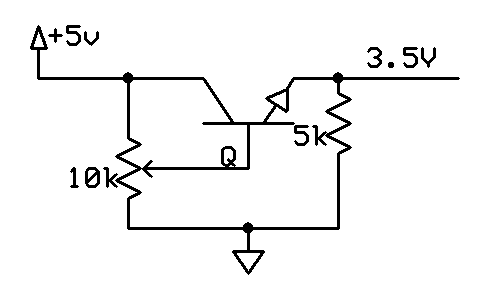

Flash Memory and the Serial Peripheral Interface (SPI)

The ATmega644 has only 4 kB of RAM, which is not enough to hold even one 176x143 image, and we needed room for as many as 50 face images for the eigenfaces. For external memory, we use Atmel's AT45DB321D serial dataflash. It stores 32 Mbit (about 4 MB) in 8192 pages of 528 bytes each. This chip requires a Vcc of 2.5-3.6V. We chose 3.5V so that a logic high from the flash would be read as a logic high by the microcontroller, which runs at a Vcc of 5V. We used the voltage regulator circuit shown below to supply 3.5V to the flash.

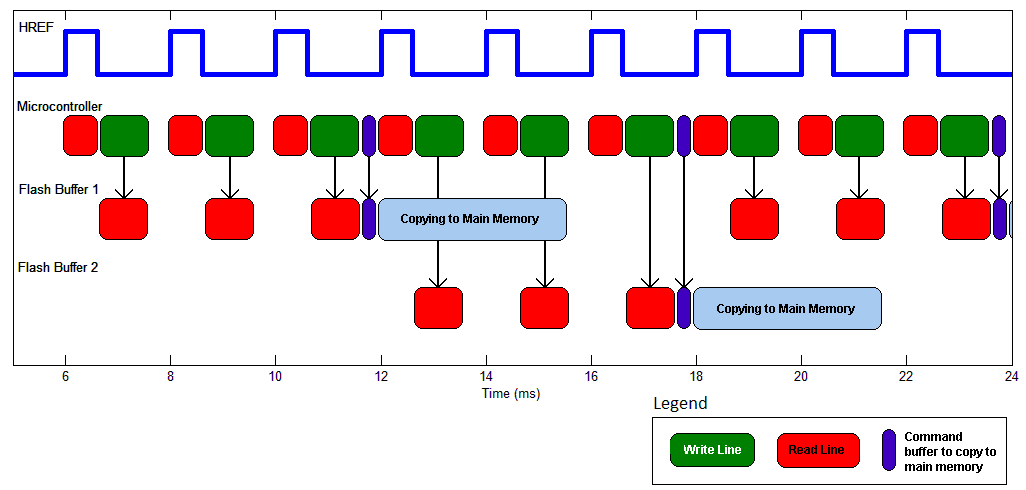

In addition to main memory, the chip has two 528-byte buffers. You can read from buffers or main memory. To write main memory, you must first write to a buffer, and then copy the buffer to main memory. The latter is an internally timed operation which takes about 3 ms. The speed of the former depends on how much data you clock into the buffer and at what rate.

Communication with flash memory uses the Serial Peripheral Interface (SPI), which is a full duplex serial communication protocol. The ATmega644 has dedicated SPI hardware which makes coding easy. We configured the microcontroller as the master and flash as the slave. The microcontroller outputs a clock on pin B.7 to flash through a 1k resistor. The microcontroller outputs data on pin B.5 to flash through a 1k resistor. Flash outputs data to the microcontroller on pin B.6 through a 330 ohm resistor. Chip select is pin B.3. Brian wrote a general purpose library called flashmem.c for communicating with flash. A description of its functions is below.

- void spi_init(): Configures the microcontroller SPI parameters.

- unsigned char readStatusRegister(): Returns the contents of the flash’s status register.

- unsigned char isBusy(): Returns the most significant bit of the flash’s status register. The assertion of this bit indicates the flash is busy.

- void writeBuffer(unsigned char data[], unsigned int n, unsigned int byte, char bufferNum): Writes the first n bytes in the data array to buffer 1 or 2, depending on bufferNum. The data will be copied to locations [byte : byte+n-1] in the buffer.

- void readBuffer(unsigned char* data, unsigned int n, unsigned int byte, char bufferNum): Reads bytes [byte : byte+n-1] from buffer 1 or 2, depending on bufferNum, and copies them into the data array.

- void bufferToMemory(unsigned int page, char bufferNum, char erase): Copies the data in the buffer to a page in main memory. If erase==0, this page must have been previously erased for this operation to work. After this command is sent, the flash will be busy for about 2.5 ms. If erase==1, this function will first erase the page, and then copy the data. This will take about 25 ms.

- void readFlash(unsigned char* data, unsigned int n, unsigned int page, unsigned int byte): Reads bytes [byte:byte+n-1] at the page specified in main memory. The result is copied into the specified data buffer.

- void eraseBlock(unsigned int block): Erases pages [8*block : 8*block+7] in main memory.

- void eraseFace(unsigned char k): Erases blocks [8*k : 8*k+7] in main memory.

Memory Layout

For indexing ease, we think of main memory in terms of "faces" of 64 pages each. We had some issues with pages and blocks of pages being randomly corrupted or erased, so we had to move the eigenfaces away from their original pages in memory. Currently, face 51 is used as temporary space for a newly captured image; it is erased immediately after the user's template has been created and analyzed. Faces 7 through 36 hold the eigenfaces, though not in order of importance, so an index array in the final FaceRecSystem.c maps each eigenface to its face slot. Finally, the mean face is stored in face 37.
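The face-slot scheme reduces to simple address arithmetic. The sketch below is illustrative only (`flash_addr`, `pixel_addr`, and `pages_per_image` are hypothetical names, and one byte per pixel is assumed); it shows where pixel p of the image in slot k lands and that a 25168-byte image fills 48 of its slot's 64 pages. Because each slot is 64 pages, or 8 blocks of 8 pages, eraseFace(k) clearing blocks [8k : 8k+7] erases exactly one slot.

```c
#include <stdint.h>

#define PAGE_SIZE      528u            /* bytes per dataflash page */
#define PAGES_PER_FACE 64u             /* one "face" slot = 64 pages = 8 blocks */
#define IMG_BYTES      (176u * 143u)   /* 25168 one-byte pixels */

#define TEMP_FACE 51u  /* scratch slot for a newly captured image */
#define MEAN_FACE 37u  /* slot holding the mean face */

typedef struct { uint16_t page; uint16_t byte; } flash_addr;

/* Location of pixel p (0 <= p < IMG_BYTES) of the image stored in slot face. */
flash_addr pixel_addr(unsigned face, unsigned p) {
    flash_addr a;
    a.page = (uint16_t)(face * PAGES_PER_FACE + p / PAGE_SIZE);
    a.byte = (uint16_t)(p % PAGE_SIZE);
    return a;
}

/* Number of pages an image actually occupies inside its 64-page slot. */
unsigned pages_per_image(void) {
    return (IMG_BYTES + PAGE_SIZE - 1) / PAGE_SIZE;  /* ceil(25168 / 528) */
}
```

The eigenface index array from FaceRecSystem.c is not reproduced here; it would simply map eigenface number i to one of slots 7 through 36 before calling `pixel_addr`.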

Camera Module and the Inter-Integrated Circuit (I2C) Interface

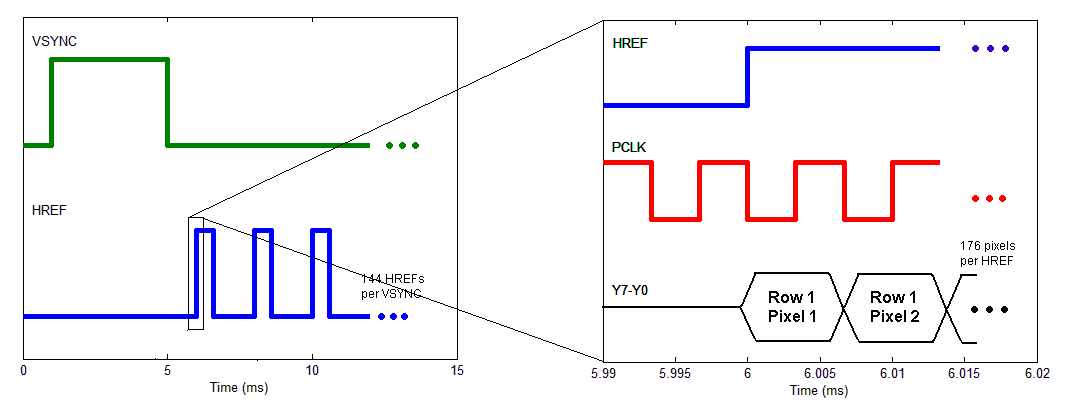

We used the C3088 camera module for image capture. This module uses OmniVision’s OV6620 CMOS image sensor. Camera settings are programmable through a standard Inter-Integrated Circuit (I2C) interface, which is a two-wire, half duplex interface. The camera outputs VSYNC, HREF, and PCLK control signals and an 8-bit parallel digital video output Y7-Y0.

I2C Interface

As with SPI, the ATmega644 has dedicated hardware for I2C. We used an I2C library written by Peter Fleury to create two general purpose functions for the C3088:

- unsigned char camera_read(unsigned char regNum): Returns the contents of the camera register regNum

- void camera_write(unsigned char regNum, unsigned char data): Writes data to the camera register regNum

We mostly used default settings for the camera. Apart from the default settings, we chose to:

- Reduce frame size from 352x288 pixels to 176x144 pixels.

- Reduce frame rate from 60 frames per second (fps) down to about 5 fps.

Video Signal

The OV6620 image sensor outputs an 8-bit parallel gray-scale digital video signal on pins Y7-Y0. It also outputs PCLK, VSYNC, and HREF for timing. The format is similar to the analog NTSC standard. See the timing diagram below. PCLK is a 300 kHz clock. A 4 millisecond (ms) pulse on VSYNC indicates the start of a new frame. After this, data is output row by row. Every row, HREF goes high and data is subsequently sent out on Y7-Y0, one pixel per rising edge of PCLK. HREF is high for about 0.6 ms, during which time 176 pixels are clocked out. HREF is then low for about 1.4 ms before starting the next row.
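A quick consistency check on these timing numbers:

$$
t_{\text{pixels}} = \frac{176\ \text{pixels}}{300\ \text{kHz}} \approx 0.59\ \text{ms} \approx t_{\text{HREF high}},
\qquad
t_{\text{row}} \approx 0.6\ \text{ms} + 1.4\ \text{ms} = 2.0\ \text{ms}
$$

The roughly 1.4 ms HREF-low gap in every row is the window available for moving the previous line out of RAM, which the image capture scheme below relies on.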

Image Capture

One of the biggest technical hurdles at the beginning of the project was image capture. The ATmega644 has only 4 kB of RAM, and a 176x143-pixel gray-scale image is over 25 kB, so we had to read the video signal and write it to flash simultaneously. To do this, we read one line (176 pixels) from the camera while HREF is high and write it to a flash buffer while HREF is low. Writing a line to the buffer takes about 0.9 ms. Every 3 lines (3 x 176 = 528 bytes), the buffer fills up and we command the flash to copy it to main memory, an internally timed operation that takes about 3 ms. By alternating between the two buffers every 3 lines, we fill one buffer while the other is being copied, which lets us keep up with the video signal. See the timing diagram below.
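The ping-pong schedule can be expressed as a pure function of the line number. This sketch (hypothetical names `line_plan` and `plan_line`) computes, for each of the 143 lines, which flash buffer it goes into, at what byte offset, and whether the buffer should be copied to main memory afterwards. The timing budget works because a 0.9 ms buffer write fits in the 1.4 ms HREF-low gap, and each buffer has about three row periods (roughly 6 ms) to finish its 3 ms copy before it is reused. The ~3 ms figure presumably corresponds to bufferToMemory with erase==0, which requires the slot to have been erased beforehand.

```c
#include <stdint.h>

#define LINE_BYTES     176u  /* pixels per camera line */
#define LINES_PER_PAGE 3u    /* 3 * 176 = 528 bytes = one flash page */
#define IMG_LINES      143u

/* Where one camera line goes, and whether to copy the buffer afterwards. */
typedef struct {
    uint8_t  buffer;  /* flash SRAM buffer, 1 or 2 */
    uint16_t offset;  /* byte offset of the line inside the buffer */
    int      commit;  /* nonzero: copy the buffer to `page` after this line */
    uint16_t page;    /* main-memory page that receives this buffer */
} line_plan;

line_plan plan_line(unsigned face, unsigned line) {
    unsigned group = line / LINES_PER_PAGE;   /* which 528-byte page */
    line_plan p;
    p.buffer = (uint8_t)(group % 2 + 1);      /* alternate buffers every 3 lines */
    p.offset = (uint16_t)((line % LINES_PER_PAGE) * LINE_BYTES);
    /* Commit when the buffer is full, or after the final (partial) group. */
    p.commit = (line % LINES_PER_PAGE == LINES_PER_PAGE - 1) || (line == IMG_LINES - 1);
    p.page   = (uint16_t)(face * 64u + group);
    return p;
}

/* Hypothetical capture loop (camera helpers not shown):
   for each line: wait for HREF high and read 176 pixels into RAM;
   while HREF is low, writeBuffer(line, 176, p.offset, p.buffer);
   if (p.commit) bufferToMemory(p.page, p.buffer, 0); */
```

Since 143 = 3 x 47 + 2, the last buffer holds only two lines, so an image takes 48 commits.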

Interfacing and Testing with Matlab

We decided that the best way to view the images captured by the camera was to send them from flash memory to Matlab. The easiest way to communicate with Matlab is over the serial port, using the UART code written by Joerg Wunsch in uart.c and uart.h.

Interfacing with Matlab proved more complicated than we expected because of the amount of data we were trying to transfer at once. The SerialConnect.m Matlab script is split into three sections: setting up the connection, receiving data, and sending data. The serial connection is set up using the COM port the microcontroller is connected to and the desired baud rate (which must match the definition in uart.c). The receive section reads data in chunks of 66 elements, or 528 bytes, at a time; we found this to be the maximum amount of data that could be sent to Matlab over the UART in one buffer. The send section prints to the UART the number of "faces" it is sending and where in flash memory the data should go, and then sends the data one byte at a time. We found that if we sent more than one byte at a time, or didn't pause between print statements, the microcontroller could not read the data reliably. Unfortunately, this slowed flash programming significantly: it took about 3 hours to load all the eigenfaces into flash.

A MatlabLib.c file handles the data transfer on the microcontroller side. It is also split into three functions. The first is a set of print statements that tells Matlab's read routine how much data to expect, based on the number of faces and the size of each face being sent over the UART. The second, dumpFrame(), splits up the data and sends it over the UART in blocks of 66. The last, readFrame(), scans for the amount of data being sent over the UART and then performs individual scans until it has read all of the data.
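The block splitting done by dumpFrame() can be sketched as below, assuming "blocks of 66" means 66-byte blocks, so a 528-byte page goes out as 8 blocks. The UART transmit routine is left as a caller-supplied callback rather than guessing at the uart.c API; `send_in_blocks`, `send_fn`, and `tally` are hypothetical names.

```c
#include <stddef.h>

#define BLOCK 66u  /* assumed block size in bytes */

/* Caller-supplied transmit routine, e.g. a loop over a UART putchar. */
typedef void (*send_fn)(const unsigned char *data, size_t n, void *ctx);

/* Send n bytes in blocks of at most BLOCK bytes; returns the block count. */
size_t send_in_blocks(const unsigned char *data, size_t n, send_fn send, void *ctx) {
    size_t blocks = 0;
    for (size_t off = 0; off < n; off += BLOCK) {
        size_t len = (n - off < BLOCK) ? n - off : BLOCK;  /* last block may be short */
        send(data + off, len, ctx);
        blocks++;
    }
    return blocks;
}

/* Example callback for testing: tallies the bytes it is handed. */
static void tally(const unsigned char *data, size_t n, void *ctx) {
    (void)data;
    *(size_t *)ctx += n;
}
```

Keeping the transport behind a callback lets the same splitting logic be exercised on a PC before it is wired to the serial port.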

These two UART interfaces were used throughout the project to move data between the camera, flash memory, and Matlab. Besides being necessary for calculating the eigenfaces and sending them to flash, they were a very useful debugging tool. Several functions in the C files use the interface to send data:

- sendFacetoMatlab(unsigned char face): reads face 'face' from flash a page at a time and sends it to Matlab. We used this specifically to send test images from the camera, and previously written data, to Matlab to check their correctness.

- pageMatlabFlash(int pagNum): reads one page from Matlab and writes it to page pagNum in flash memory.

- faceMatlabFlash(char face): sets up the page numbers for a specific face so that pageMatlabFlash can send and receive the correct data to flash memory.

Problems

Our biggest problem was that every so often, our eigenfaces would become corrupted in flash memory. At first we suspected ESD, so we covered the flash in an anti-static bag when transporting our device. We also suspected faulty wiring, so we re-soldered the flash, simplifying the wiring and eliminating long, looping wires. We also slowed down SPI by a factor of 2. We have not seen any corruption since, but because we also began handling the flash more carefully, it is not clear which of these changes fixed the problem.

Results

Accuracy

We tested our face recognition system on 12 people. We conducted 25 normal login attempts, 22 of which were successful (88%). The three unsuccessful attempts (i.e., false rejections) were just under the acceptance threshold. There were zero false acceptances, which was a strict design constraint: a physical access system can tolerate a 10% false rejection rate but should never recognize user A as user B. False rejections are resolved by trying again, but a false acceptance usually means a security breach. Selected test results are below.

We also conducted 10 login attempts with added variables to test the limitations of our design. Such variables included hair modification, glasses, smiling, sticking out tongue, head tilting, etc. We found that our system was sensitive to hair, head tilt, and glasses, but not as sensitive to smiling or sticking out tongue. Selected test results are discussed below.

User 7 Normal Login

The plot below shows 11 login attempts by User 7. In all 11 trials, User 7 correlates most closely with herself. Nine of these logins were correlated enough to be successful; two were just under the threshold. In her last login attempt, User 7 was sticking out her tongue, but her correlation was still above the threshold.

Login Attempts by User 7

User 7 Login with Hair Modification

The plot below displays a limitation of our design: our system is sensitive to hair arrangement. The plot shows 4 more login attempts by User 7, but this time she let her hair down before trying to log in. Her template in the database was created with her hair pulled back. Letting her hair down brings her correlation well below the threshold.

Login Attempts by User 7 with her hair let down

User 8 Normal Login

The plot below shows 5 login attempts by User 8. Four were successful. We suspect that the failed login (attempt 3) was due to User 8 slightly tilting his head during image capture. This points to a limitation of our design: our system is sensitive to changes in head orientation in both pitch and roll. Given more time, we would have extended our structure to include a forehead rest. In login attempt 5, User 8 was smiling, but the system still recognized him.

Login Attempts by User 8

Glasses

User 11 enrolled with his glasses on and then failed to log in, again with his glasses on, in three attempts; the correlation was around 0.60. He removed his glasses and re-enrolled. After this, he could log in (without his glasses) reliably. This indicates that our system is very sensitive to glasses. This makes sense, since none of our training images included glasses: the face space contains no glasses features, so glasses distort the projection of a face onto it.

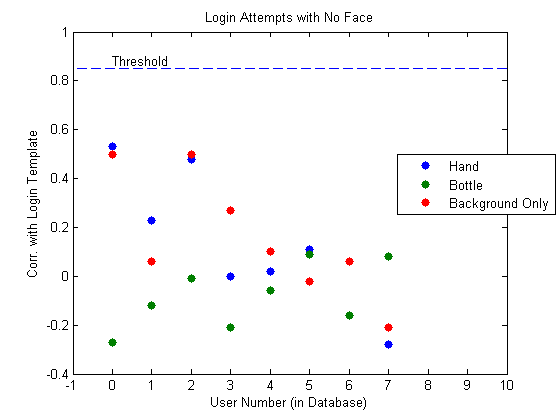

No Face

We performed three tests where we took pictures of non-face objects: hand, water bottle, and background only. In all of these tests, login was unsuccessful.

Login Attempts with no face in the picture

Speed

At the beginning of our project, we identified run time as a potential problem. The major contributor to run time is calculating the unknown user's template. A template is really 25 dot products, one per eigenface, each of length 25168: about 630,000 multiplies in total. In addition, we need to load all of the eigenfaces from flash into the microcontroller over SPI, which runs at about 100 kB/s. By using integer multiplications instead of floating point, we brought the run time down to about 15 seconds.
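As a rough worked check of these figures, assuming one byte per eigenface pixel (a 64-page slot holds 33,792 bytes, too little for two bytes per pixel) and taking 1 kB = 1000 bytes:

$$
25 \times 25168 = 629{,}200\ \text{multiplies},
\qquad
\frac{25 \times 25168\ \text{bytes}}{100\ \text{kB/s}} \approx 6.3\ \text{s}
$$

Under that assumption, reading the eigenfaces over SPI alone accounts for roughly 6 of the 15 seconds, which is consistent with faster external memory being listed in the conclusions as a way to reduce run time.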

Safety

There are only two possible safety concerns in our design. One is that we ask you to touch your chin to the chin plate. There is a possibility of spreading germs. In a real system, we would probably make this plastic instead of wood, and provide disinfectant to clean the chin plate. The other possible safety concern is that we have some sharp exposed ends of screws. They are not near buttons, the chin plate, or places where you would grab our device to move it.

Usability

Given more time, we would have tried to find more optimizations to reduce runtime. That being said, 15 seconds is a reasonable time for an access control system.

You only need to keep your chin on the chin plate while the image is being captured, which takes less than a second. We found that people were confused about this because they couldn't see the LED, which is lit only during image capture. Given more time, we would move the LED to a more visible location.

Because the system is portable, it can be positioned to suit people of all sizes.

Conclusions

Results vs. Expectations

Our results were better than we expected. We achieved a reasonable login success rate (88%) with a reasonable run time (15 seconds), and we were very satisfied with this. It was a little disappointing that our system was so sensitive to hair changes, head tilt, and other, sometimes unidentifiable, variables. Some of these are limitations of the eigenface method.

If we were to repeat this project from scratch, we would do a few things differently. First, we would improve the mechanical structure to include a forehead rest or some other way to normalize head position. Second, we would use something other than serial dataflash. This could improve run time and might have avoided the data corruption that plagued our last week. With a faster run time, we could use more eigenfaces, further improving our successful login rate and making our system more robust. We would also have liked to use more training faces, but it was difficult to find more than 50 people in a few days to train our system.

Conforming to Standards

We were able to communicate with flash memory and with the camera. This means that we successfully conformed to the SPI and I2C standards.

Intellectual Property

Our design poses no intellectual property problems. The eigenface method is well known in the literature. All aspects of our design that we didn't develop ourselves are properly cited in our references below.

We will try to publish our design so everything we did will be in the public domain.

Ethical Considerations

We strived to abide by the IEEE Code of Ethics during this project.

Facial recognition brings up a number of ethical issues. For many people, the mere idea of automatic facial recognition hints at George Orwell’s “Big Brother is watching you.” However, we do not see any ethical issues for our project since all participants are willing and there is no passive recognition. That is to say that no one is being recognized against their will, and every instance of recognition is initiated by the user. It’s not as if we are creating a real-time video facial recognition system using a large public database. No user can be recognized without first registering.

Another concern with many biometric systems is that the biometric template is stored in a database that could be compromised. Although the captured picture is stored in flash during enrolling and logging in, it is erased as soon as the template is created; it stays in flash memory for only about 15 seconds. The templates themselves do not store the actual face: a template holds only 25 numbers, while the image has 25,168 pixels, so recovering the original image from a template is underdetermined and cannot be done exactly. At most, someone who also had the eigenfaces and mean face could build the coarse eigenface reconstruction shown in Background Math. These measures limit what a data compromise could reveal about a user's face.

Legal Considerations

Our project is a simple standalone device. It does not emit any appreciable EMF radiation and does not cause interference with other electronics. We made sure to get everyone's permission before publishing their names or images on this website.

Appendices

A. Source Code

Source files

- FaceRecSystem.c (19KB) – the code for enrolling and logging in users using the flow diagrams from the project overview.

- camToMatlab.c (5KB) – the code used to take images, and transfer them from flash memory to Matlab. Used to load training images to Matlab through the serial port.

- MemToMatlab.c (5KB) – the code used to test sending data from flash memory to Matlab through the serial port.

- MatlabToMicro.c (6KB) – the code to move the eigenfaces from Matlab through the microcontroller to the flash memory through the serial port.

The following source files are the libraries we created or borrowed for the project.

- camlib.c (4KB) – the library for setting up the camera and interfacing with it, written by Brian Harding

- flashmem.c (8KB) – the library that sets up the flash memory and controls the reads, writes, and erases, written by Brian Harding

- Matlablib.c (2KB) – the library that sets up the data to be sent to and from Matlab, written by Cat Jubinski

- uart.c (4KB) – an implementation of UART, written by Joerg Wunsch.

- twimaster.c (6KB) – the I2C master library, written by Peter Fleury

- lcd_lib.c (9KB) – the library for the LCD, written by Scienceprog.com

Header files

- camlib.h– written by Brian Harding

- flashmem.h– written by Brian Harding

- MatlabLib.h– written by Cat Jubinski

- uart.h – written by Joerg Wunsch

- i2cmaster.h – written by Peter Fleury

- lcd_lib.h – written by Scienceprog.com

Matlab scripts

- SerialConnect.m (3KB) – used to receive face images from flash memory and send eigenfaces back to flash memory. Written by Cat Jubinski

- getEigFaces.m (6 KB) – used to create the eigenfaces and simulate enrollments and logins in MATLAB. Written by Brian Harding

- efaces0501.mat (592 KB) – Eigenfaces used in the system.

- meanface0501.mat (12 KB) – Mean Face used in the system

Download all files: Code.zip (44KB)

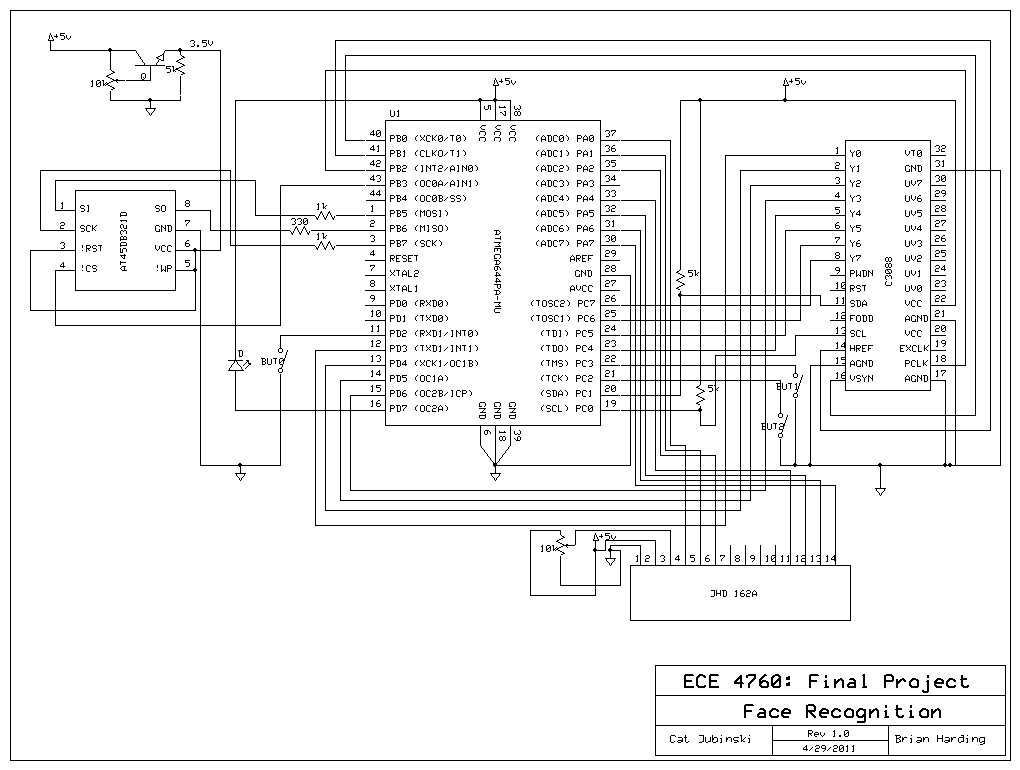

B. Schematics (VSYNC and HREF may be reversed!)

Hardware schematic. Download schematic file: faceAccess_schematics.sch (36KB).

C. Parts List

| Part | Source | Unit Price | Quantity | Total Price |
|---|---|---|---|---|
| ATmega644 (8-bit MCU) | ECE 4760 Lab | $8.00 | 1 | $8.00 |
| C3088 with OV6620 (Image Sensor Module) | ECE 4760 Lab | $0.00 | 1 | $0.00 |
| AT45DB321D (Serial Flash) | DigiKey | $3.87 | 1 | $3.87 |
| Varitronix MDLS16264 (LCD Module) | ECE 4760 Lab | $8.00 | 1 | $8.00 |
| PC Board + Power Regulator | ECE 4760 Lab | $0.00 | 1 | $0.00 |
| Wood & Screws | Lowes | $5.00 | 1 | $5.00 |
| 9-Volt Battery | Lowes | $3.00 | 1 | $3.00 |
| Push Buttons | Lab | Lab | 2 | $0.00 |
| Header Pins | Lab | $0.05 | 18 | $0.90 |
| Total | | | | $28.77 |

D. Tasks

This list shows specific tasks carried out by individual group members. Everything else was done together.

Brian

- Interfacing with Flash Memory (SPI)

- Programming the Camera (I2C)

- Building Mechanical Structure

- Soldering the PCB

Cat

- Sending Training Images to Matlab

- Sending Eigenfaces to Flash

- Designing Website

E. Pictures

Below are some pictures of us working in the lab. Photos courtesy of Bruce Land.

References

This section provides links to external reference documents, code, and websites used throughout the project.

Datasheets

- ATmega644 (8-bit MCU)

- C3088 (Camera Module)

- OV6620 (Image Sensor)

- AT45DB321D (Serial Dataflash)

- LCD (Display)

Reference Code

We referenced previous projects' image sensor code to create our camlib.c library.

Acknowledgements

We would like to thank ECE 4760 Professor Bruce Land and all TA staff (especially our lab TA, Tom Gowing) for help and support during the labs and over the course of the project. We thank them for the long lab hours and the parts stocked in the lab. Thanks to Adam Papamarcos and Eileen McIver for brainstorming sessions and technical help.

We would also like to thank everyone who "donated" their face for creating eigenfaces and testing our system:

- Jon Altiero

- George Barrameda

- Michael Brancato

- William Bruey

- Daniel Charen

- Bonnie Chong

- Jimmy Da

- Danielle Feldman

- Cameron Glass

- Katie Hamren

- Daniel Hare

- Henry Hinnefeld

- Cindy Huang

- Adam Jackman

- Bruce Land

- Kevin Martin

- Eileen McIver

- James McMullen

- Aaron Miller

- Thomas Mitchell

- Joe Montanino

- Elise Newman

- Adam Papamarcos

- Pouria Pezeshkinam

- Stephen Prizant

- Hyundo Reiner

- Evan Respaut

- Nick Rho

- Gena Rozenberg

- Michael Schwendeman

- Ragini Shama

- Alec Story

- Kevin Ullmann

- Roger Varney

- Elizabeth Walker

- John Wright

- Jeff Yates