
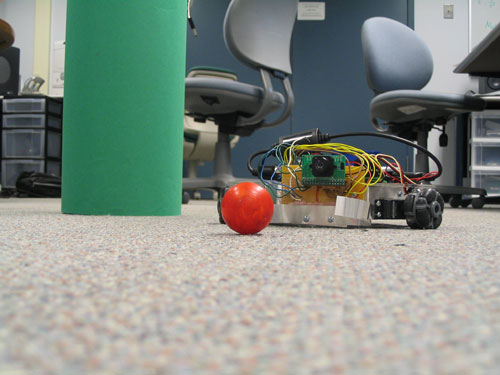

The SearchBot is a fully functional model car that can either be controlled wirelessly from a PC or autonomously search for red balls scattered on a flat surface.

Autonomous vehicles are just now being realized in labs around the world and will soon have major military and commercial potential. The Adaptive Communications and Signals Processing (ACSP) Group of Cornell University is studying the control of autonomous vehicles in sensor network systems and has asked us to contribute a robot vehicle to their research. The end result is the SearchBot, a car that can either be controlled by a user or autonomously search for red balls. In Controlled Mode, a user enters an angle to rotate and a distance to move on a PC terminal, and the vehicle, which receives the request wirelessly, moves accordingly. In Autonomous Mode, the robot uses an effective search algorithm to locate red balls on the floor. Upon finding one, the SearchBot pushes it back to a central base and continues searching for others. This project uses a commercial robot chassis, a PDA for wireless communication and an optical camera for vision. The SearchBot is an exciting application of many different electrical engineering disciplines and illustrates the practicality of using microcontrollers to make autonomous robots.

Rationale:

The SearchBot originated as a request from Professor Lang Tong for his sensor networks research. He needed a vehicle that could be controlled by a Matlab script on the PC to move around in a sensor field. We were intrigued by this idea but wanted the vehicle to perform some task autonomously as well, because that would be more challenging and more widely applicable. Thus, we created the SearchBot, a vehicle that seeks out red marbles on the floor and moves them to a designated spot called the base. The vehicle can also be driven from the PC in a separate mode, so it still serves Professor Tong's research. The SearchBot was an appealing project idea because it combined a variety of ECE topics into one coherent and practical application. We needed to research computer vision, control systems, embedded C++ and wireless communication in the process of planning and implementing our project, all of which were foreign to us before this class.

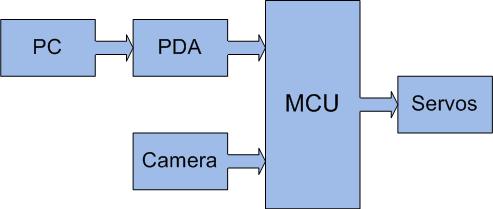

Logical Structure:

The SearchBot is a complex system that has many inputs and outputs connected to a Mega32 microcontroller, which serves as the central control unit. To simplify planning and keep construction efficient, we segmented our project into subsystems that communicate solely with the MCU. This greatly benefited us in both design and testing because it allowed us to focus on each component individually without adding unnecessary complexity. We identified the three main components of the SearchBot to be the PC/PDA/MCU communication link, the optical camera sensor, and the servos controlling the vehicle. Figure 1 highlights how information and control propagate through the system.

Figure 1: Information flow

In order to run the SearchBot, the user turns on the PC, PDA and microcontroller. An ActiveSync connection must be made and maintained between the PC and PDA. The user issues commands through a Matlab script, and these are propagated through the PDA to the MCU over RS232 serial. The Mega32 processes these commands and the car responds accordingly.

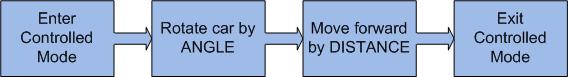

Controlled Mode: This mode allows a user to run a Matlab script to control the movement of the robot. An angle and a distance are sent to the MCU as inputs and the car moves accordingly, based on its calibration. Note that it is preferable to adjust the car in Calibration Mode before relying on it for precise movement. The high-level flow of Controlled Mode is as follows:

Figure 2: Controlled Mode

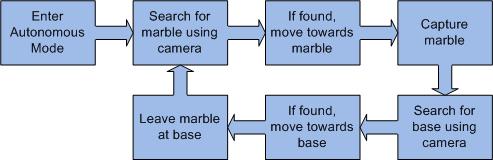

Autonomous Mode: This mode allows the car to move freely on its own and sense its surroundings using an optical camera. When the user initiates Autonomous Mode, the car immediately turns through 300° while scanning for red. This makes it important for the area to be completely free of other red objects to avoid false triggers. If the target color is not found, the car moves forward a foot and does another vision sweep. This continues until the SearchBot locates a red object. The vehicle then locks onto the object and moves towards it, scanning with the camera and making dynamic adjustments to its motion. Upon reaching the target, the car captures it using a catchbin attached to the front of the chassis. The robot then searches for a base identified by the color green. We made the base large so it is easily discovered. The SearchBot pushes the ball to the base and then moves back to release it. The process is then repeated until the user enters a new instruction. Autonomous Mode is illustrated by the following graphic:

Figure 3: Autonomous Mode

Calibration Mode: In order to convert a distance or angle input into a time to turn the wheels, scaling variables are needed. We found that these variables change with battery voltage, floor surface friction and the weight on the car, so it is handy to have a way to change their values. A separate mode on the car sets these calibration variables and saves them to EEPROM.

Software/Hardware Tradeoffs:

This project depends heavily on both software and hardware but few tradeoffs

exist. The SearchBot is heavily hardware-driven because both the car

itself and its optical sensor are hardware components. Car control and image

extraction, meanwhile, were inevitably software-oriented. In hindsight, the only

tradeoff we made was in the way that we implemented wireless control on the

SearchBot. Rather than building a complex RF communication system, we reasoned

it was practical to utilize a PDA's WLAN capabilities and just program the

communication protocols necessary between the individual

components. In the end, this saved us a lot of time and was a logical (but

expensive) decision.

Standards:

This project utilizes many components that interact with each other and

conforms to the standards set for the different communication protocols. The PDA

uses the RS-232 serial standard to interact with the Mega32. The camera connects

to the microcontroller using the I2C standard. Additionally, we used the 802.11b Wi-Fi standard to transfer information between the PC and PDA.

A final standard used was RGB color encoding in the camera.

Patents and Legal Considerations:

The SearchBot is an academic not-for-profit endeavor and does not infringe on

any copyrights or patents. We referenced some open-source coding and referred to

other academic projects that used the same optical camera but these sources

are cited and credited in this report. We bought or sampled all of our hardware

components and are using them within the realm that they were designed for. The

entire design and construction aspects of the project were our own.

The SearchBot includes many subsystems that must be developed individually and then integrated together. The communications portion of the project involves transferring information wirelessly from the PC to the MCU. A novel way to implement this was to use a PDA as the middleman, and thus separate communication links were written for the PC/PDA and PDA/MCU connections. Moreover, the car needs a robust state machine in order to move around, search for balls and obey commands from the user. Finally, there is the challenging aspect of reading from the optical sensor and completing the fast image processing necessary to help maneuver the car in autonomous mode.

User Control Specifications:

Minimally, the car needs

three bytes to control its actions. The user should input them in a specific order to

achieve the correct response. The convention we have created is as follows:

| Byte 1 | Byte 2 | Byte 3 |
| --- | --- | --- |
| MODE | ANGLE | DISTANCE |

MODE is the main control line and the car will move according to the following table:

| Mode | Functionality |
| --- | --- |
| 0 | Controlled Mode: moves the car ANGLE degrees and DISTANCE centimeters. |
| 1 | Autonomous Mode: begins an autonomous search for balls; all other parameters are ignored. |
| 2 | Autonomous Base Mode: begins by searching for the base and then searches for balls; all other parameters are ignored. |
| 3 | Calibration Mode: sets the rates at which the wheels move forward and rotate. These rates change with battery life and car weight and can be readjusted in this mode. ANGLE specifies scaledRotate (must be > 0); DISTANCE specifies scaledMove (must be > 0). |

Accepted input values:

MODE - must be 0, 1, 2 or 3

ANGLE - can take on all integer values

DISTANCE - can take on all integer values

PC Connection:

The user interacts with the car using a PC

terminal that is connected to a PDA through ActiveSync. We wrote a Matlab script

that takes as input three parameters specified by the user. The script creates a

text file called carcontrol.txt that stores these three parameters. It then runs an executable called runPDA.exe,

which allows the PC to communicate with the PDA.

This executable is a modified version of an open source C# application called RAPICSharp, introduced to us by Bruce Lei of the ACSP group. RAPICSharp is a simple GUI that allows users to transfer files to and run programs on the PDA. We wanted to use its capabilities but did not require the GUI, so we modified the program so that, when called, it transfers carcontrol.txt to the PDA and then runs an executable called serialComm.exe.

PDA Serial Communication:

The Dell Axim PDA is connected to the microcontroller using an RS232 cable. When the user runs the Matlab script on the PC, the specified parameters are transferred to the PDA and serialComm.exe is run. This embedded C++ program opens carcontrol.txt (which contains the user's request) and extracts the three bytes of data. These are stored in a buffer along with a start byte of 127 and a stop byte of 126. serialComm.exe then opens the COM1 port and configures it for RS232. We decided to use a 9600 baud rate with one stop bit and no parity checking because this matches the serial configuration of the microcontroller. The buffer is sent through the serial port and the link is closed immediately afterwards. The program then exits and may be called again later. Although not implemented in this project, the PDA program could be extended to read from the port and receive data from the microcontroller. Information on reading from the serial port can be found at MSDN.
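The framing described above can be sketched in a few lines. This is only an illustration in Python, not the serialComm.exe source: the function name `build_packet` is hypothetical, and the sketch assumes that each of the three parameters travels as a single unsigned byte.

```python
START_BYTE = 127  # start marker given in the text
STOP_BYTE = 126   # stop marker given in the text


def build_packet(mode: int, angle: int, distance: int) -> bytes:
    """Frame one command as START, MODE, ANGLE, DISTANCE, STOP."""
    if mode not in (0, 1, 2, 3):
        raise ValueError("MODE must be 0, 1, 2 or 3")
    for name, value in (("ANGLE", angle), ("DISTANCE", distance)):
        # Assumption: each parameter is sent as one unsigned byte.
        if not 0 <= value <= 255:
            raise ValueError(f"{name} must fit in one byte")
    return bytes([START_BYTE, mode, angle, distance, STOP_BYTE])


# Example: Controlled Mode, rotate 90 degrees, move 50 cm.
packet = build_packet(0, 90, 50)
```

The five resulting bytes would then be written to a port opened at 9600 baud, one stop bit, no parity, as described above.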

MCU Serial Communication:

To set up serial communication on the Mega32, we referred to the ECE476 serial page and modified an example given by Bruce Land. In this code, the Mega32 raises a receive interrupt when data arrives on the serial port. Our protocol states that three bytes of data are passed in each packet along with a start and a stop byte with values 127 and 126 respectively. As in the example, we set UBRRL to 103 to set the baud rate to 9600, which matches the PDA's serial settings. Upon being received, the data is stored in a buffer and the r_ready flag is set. The car then updates its system variables and follows the new instructions given by the user.
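As a rough model of the receive logic described above, the following Python sketch feeds bytes one at a time into a small state machine that ignores anything outside a 127 ... 126 frame. It is not the Mega32 firmware: the class `PacketReceiver` and the helper `ubrr_for` are hypothetical names, and the 16 MHz clock is an assumption chosen because it reproduces the UBRRL value of 103 quoted in the text.

```python
START_BYTE = 127
STOP_BYTE = 126


def ubrr_for(cpu_hz: int, baud: int) -> int:
    """Normal-speed AVR USART divisor: round(f_cpu / (16 * baud)) - 1."""
    return round(cpu_hz / (16 * baud)) - 1


class PacketReceiver:
    """Byte-at-a-time parser, called once per received byte."""

    def __init__(self):
        self.buffer = []
        self.receiving = False
        self.r_ready = False   # flag name taken from the text
        self.command = None    # (MODE, ANGLE, DISTANCE) once a frame is valid

    def on_byte(self, value: int) -> None:
        if not self.receiving:
            # Stray bytes outside a frame are dropped.
            if value == START_BYTE:
                self.receiving = True
                self.buffer = []
            return
        if len(self.buffer) < 3:
            self.buffer.append(value)
            return
        # The byte after the three data bytes must be the stop byte.
        if value == STOP_BYTE:
            self.command = tuple(self.buffer)
            self.r_ready = True
        self.receiving = False


rx = PacketReceiver()
for byte in [5, 200, 127, 1, 0, 0, 126]:  # two noise bytes, then a MODE = 1 frame
    rx.on_byte(byte)
```

Counting data bytes rather than searching for the stop value keeps a data byte that happens to equal 126 or 127 from ending the frame early.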

Car Control:

The microcontroller serves as the brain of the car, processing input from the camera and PDA to move the wheel motors appropriately. Timer0 generates an interrupt every 0.5 ms, which serves as the time base for the system. We chose 0.5 ms because it is short enough to produce a clean PWM signal for the servo motors while being long enough not to cause interrupt collisions. Every 25 ms, the car refreshes its state machine by first checking for a new input from the user (through the PDA) and then updating its state variables. The car's state machine is as follows:

Figure 4: Car control state machine

If the user sets MODE = 0, the car enters Controlled Mode immediately. The car takes values DISTANCE and ANGLE from the UART and calculates how long it needs to turn the servo wheels in order to move the corresponding distance and angle. Two scaling factors scaledRotate and scaledMove are used to relate user input to time; this means the car must be calibrated before use. After calculating these times, the car is first rotated by calling rotateCar() and then moved by calling moveCar(). A short 5ms delay is implemented between the two movements.
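The report does not give the exact conversion, so the following is only a sketch of one plausible reading: it assumes the run time scales linearly with the requested angle and distance, and that scaledRotate and scaledMove are expressed in milliseconds per degree and per centimeter. Both the units and the function name `motion_ticks` are assumptions made for illustration.

```python
TICK_MS = 0.5  # Timer0 interrupt period given in the text


def motion_ticks(angle, distance, scaled_rotate, scaled_move):
    """Return (rotate_ticks, move_ticks) under a linear time model."""
    rotate_ms = abs(angle) * scaled_rotate   # assumed unit: ms per degree
    move_ms = abs(distance) * scaled_move    # assumed unit: ms per cm
    return round(rotate_ms / TICK_MS), round(move_ms / TICK_MS)


# Example with made-up calibration values: 10 ms per degree, 50 ms per cm.
rotate_ticks, move_ticks = motion_ticks(90, 30, 10, 50)
```

Under this model, recalibrating simply means storing new scale factors; the motion routines themselves do not change.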

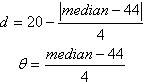

If the user sets MODE = 1, all other data is ignored and the car is put into Autonomous Mode. The car first initializes the camera and looks for a red ball on the ground. If the camera finds red, it determines the median x-pos of the red values of the line with the most accepted pixels. This value ranges from 1 to 88, corresponding to the horizontal resolution, and the car uses this information to move towards its target, adjusting both angle and distance traveled dynamically based on the median point's distance from the center. These motion values are calculated using the following equations:

If no marble is found, the car turns 30° and scans with its camera again. It continues this sweep for 300° and then moves forward 30 cm. The car continues this motion indefinitely until it finds a ball. Upon reaching the ball, the car encloses it with the catchbin and pushes it to a green-colored base. To do so, the car follows the same sort of algorithm as it did to search for balls. Variables autoState and autoMode are used to remember what the car is currently doing and what it is searching for respectively. Note that any new input from the PDA will cause the car to exit Autonomous Mode and follow new user instructions.
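The sweep-and-advance search can be sketched as a simple loop. This Python version is an illustration rather than the firmware state machine: `scan`, `rotate` and `move` are hypothetical callbacks standing in for the camera and servo routines, and `max_sweeps` is added only so that the sketch terminates (the text says the real car searches indefinitely).

```python
def search(scan, rotate, move, step_deg=30, sweep_deg=300,
           advance_cm=30, max_sweeps=10):
    """Scan, rotate by step_deg, repeat for sweep_deg, then advance.

    Returns whatever scan() reports for the first sighting (for
    example a median x-position), or None if max_sweeps runs out.
    """
    for _ in range(max_sweeps):
        turned = 0
        while turned < sweep_deg:
            hit = scan()
            if hit is not None:
                return hit
            rotate(step_deg)
            turned += step_deg
        move(advance_cm)
    return None


# Example with a fake camera that sees nothing 12 times, then a target at x = 44.
readings = iter([None] * 12 + [44])
log = []
found = search(lambda: next(readings),
               lambda deg: log.append(("rotate", deg)),
               lambda cm: log.append(("move", cm)))
```

The same loop could serve for both targets, with the color test inside `scan` switched between red (ball) and green (base).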

Note that there are two other modes that the autonomous car can enter. MODE = 2 makes the car find the base before it searches for balls. This is easily implemented given our state machine and makes it convenient for the user to get the vehicle in a good position to begin searching. MODE = 3 calibrates the car, which is done by saving the input values to EEPROM and updating current scaledMove and scaledRotate values.

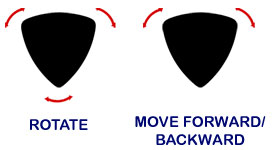

Servo Wheels Control:

To move the car, three Parallax continuous rotation servos were used. Each servo needs 6 volts to run and has a control line that accepts a PWM signal with a period of 20 ms. Three output lines are used to control the three wheels and four AA batteries act as the 6 V power source. A 0.1 μF capacitor is placed between Vcc and ground to eliminate high-frequency noise. To calibrate each motor, a pulse with a duty cycle of 15% was sent in and a potentiometer on the servo was turned until the motor stopped rotating. After calibration, the wheel turns clockwise if the duty cycle is smaller and counterclockwise if it is larger. A PWM signal is generated on the MCU when the car is told to move, and the length of the pulse depends on the vehicle's motion. When the car rotates, all three wheels must be turned either clockwise (duty cycle of 5%) or counterclockwise (duty cycle of 25%). When the car moves forwards or backwards, only the front two wheels are turned on and they rotate in opposite directions. Figure 5 illustrates car motion.

Figure 5: Servo wheel motion

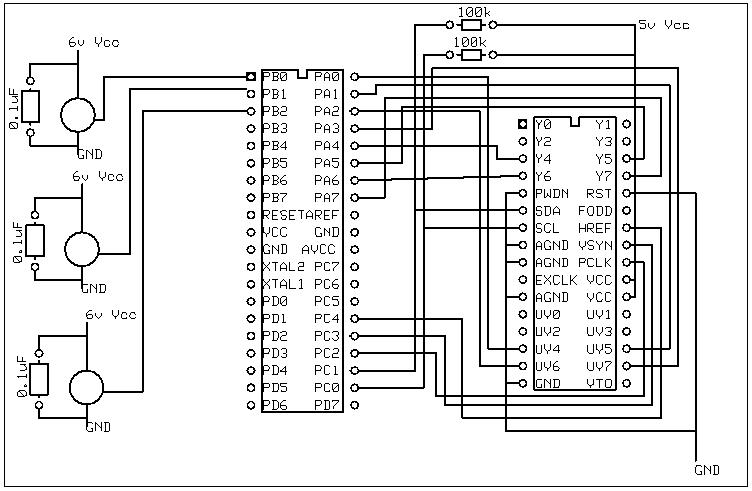

Camera Control:

The camera component of the project

involves several parts. The first is being able to communicate with the camera

to change the settings. This is done by the MCU using

the I2C protocol to modify the different control registers

on the camera. The next part involves syncing with the camera and reading data

from the two 8-bit output buses called Y and UV. Finally, we need to do some

image processing to find where in the image the marble or base is located.

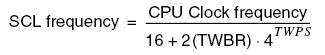

I2C Communication: The OV6620 image sensor is very robust and features quite a variety of settings. One of the more interesting and challenging aspects was figuring out what the settings are and determining the optimal values for our car. I2C, a protocol originating from Philips, provides a two-wire bidirectional communication interface between the MCU and camera. Since Philips retains the I2C name, Atmel calls it the "two wire interface" (TWI) and makes it available on PINC.0 and PINC.1 of the Mega32. The I2C protocol has a master/slave architecture and uses two lines for communication, each of which must be connected to a 10 kΩ pull-up resistor to be active. The master puts out a clock signal on the SCL line and all the slaves sync to this clock. The speed of this clock is set by the microcontroller through the formula:

SCL frequency = CPU clock frequency / (16 + 2 × TWBR × 4^TWPS)

where TWPS is the prescaler value held in the low bits of TWSR.

In order to create a fast communication link, we set the SCL frequency to 100 kHz. To do so, we determined that TWSR (status register, which also holds the prescaler bits) and TWBR (bit rate register) should be set to 0 and 72 respectively. On the second line, SDA, the master sends a packet of three bytes. After an initial start condition, the MCU sends the address of the slave (which is 0xc0 in this case) as the first byte. The second byte declares the target register on the camera and the third byte carries the data to be stored in that register. A stop condition ends the transmission. The slave must acknowledge that the transmission was received or the packet must be resent.
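The register values above can be checked numerically against the ATmega32 bit-rate relation (given in the docstring below). This Python sketch is illustrative only: the 16 MHz clock is an assumption, and the register number and data byte in the example are placeholders rather than real OV6620 settings.

```python
def scl_frequency(cpu_hz, twbr, twps):
    """ATmega32 TWI bit rate: f_SCL = f_CPU / (16 + 2 * TWBR * 4**TWPS)."""
    return cpu_hz / (16 + 2 * twbr * 4 ** twps)


CAMERA_WRITE_ADDRESS = 0xC0  # slave address given in the text


def register_write(register, value):
    """The three bytes sent between the start and stop conditions."""
    return bytes([CAMERA_WRITE_ADDRESS, register, value])


# Assumed 16 MHz clock with TWBR = 72 and prescaler bits cleared.
f_scl = scl_frequency(16_000_000, 72, 0)

# Placeholder register and data values, for illustration only.
frame = register_write(0x10, 0x20)
```

With these assumptions the computed SCL frequency comes out at 100 kHz, matching the target stated above.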

Camera Settings: In order to receive a decodable output, we first must establish the proper settings on the camera. First, we decided that a low resolution would not hinder the car's operation significantly, so we changed the default of 356x292 to a more manageable 88x72. This tradeoff gave us more flexibility in collecting and processing data. The frame rate was also decreased to about 4 fps to allow for better synchronization between the microcontroller and camera. Finally, we changed the color encoding from YUV to RGB to make our image processing faster.

Receiving Data: The camera has several control lines that make data collection possible. The Vsync line sends a small pulse to signal the beginning of a new frame. The Href line goes high at the same time and stays high while valid pixel data is available. Finally, pixel data can be collected whenever the Pclk line jumps from low to high. Two data buses, Y and UV, pass color data to the microcontroller. The Y bus passes GRGRGR... values while the UV bus sends GBGBGB... values, where R, G, and B represent the red, green and blue components of the pixel respectively. Note that between each line of pixels, there is a significant downtime when no valid data is sent, conveniently giving us a large interval to do image processing.

Processing Data: Since we have neither enough memory to store an entire frame nor enough time to process data on the fly, the SearchBot compromises by storing one line of pixel data at a time and processing it during the downtime between lines. For each pixel on a line, we compare the raw data to a predetermined color, saving the x-pos of the pixel if a match occurs. For example, when we are searching for a red marble, we accept a pixel when its R value is more than double both G and B. By storing an array of the x-pos of all accepted pixels and a counter of the size of the array, we can quickly find the median point of the correctly-colored pixels and determine where the marble or base is. We used while loops to detect when the clock lines changed values, an approach that we referenced from a project by Inaki Navarro Oiza (see appendix).
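The per-line processing can be sketched as follows. This Python illustration assumes that each pixel has already been assembled into an (R, G, B) triple, which glosses over how the Y and UV bus samples are combined; the function names are hypothetical. The red test and the "line with the most accepted pixels" rule are taken from the text.

```python
def red_match(r, g, b):
    """Accept a pixel when R is more than double both G and B."""
    return r > 2 * g and r > 2 * b


def process_line(pixels):
    """Return (count, median_x) for one line; x positions start at 1."""
    accepted = [x for x, (r, g, b) in enumerate(pixels, start=1)
                if red_match(r, g, b)]
    if not accepted:
        return 0, None
    # accepted is already sorted by x, so the middle entry is the median.
    return len(accepted), accepted[len(accepted) // 2]


def best_line(lines):
    """Median x of the line with the most accepted pixels, or None."""
    best_count, best_x = 0, None
    for line in lines:
        count, x = process_line(line)
        if count > best_count:
            best_count, best_x = count, x
    return best_x


# Example: an 88-pixel gray line with a red patch at x = 40..44.
gray = (50, 50, 50)
line = [gray] * 88
for x in range(40, 45):
    line[x - 1] = (200, 40, 40)
target_x = best_line([[gray] * 88, line])
```

Only the current line and the best result so far need to be held in memory, which fits the constraint that a whole frame cannot be stored.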

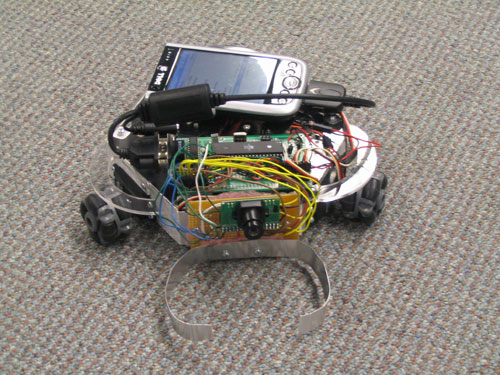

Car Construction:

We used an Acroname PPRK robot

chassis to make the SearchBot. This body has three servo wheels and is uniquely

shaped in a triangular fashion. The PPRK chassis is particularly advantageous

because the car can rotate about its center of mass and can maneuver

in small spaces like the ACSP lab. We attached a 9V battery to power the

MCU and camera and housed it inside the underbelly of the car. 4 AA batteries

are also needed to power the servo motors. Bruce Land's custom-designed

prototype board was constructed and attached to the top of the car with

velcro. The PDA is attached in a similar fashion. The camera is fastened to the

front of the car using screws and thus faces forward. Aluminum metal shaped like

a U is also added to the front of the car to serve as a catchbin for marbles.

The following pictures illustrate the final implementation of the SearchBot.

Figure 6: Car construction

The SearchBot was constructed in five weeks and currently meets all of the specifications that we originally intended. As planned, each part was independently constructed and the subsystems were integrated into one final product. We found this to be an efficient and productive process in creating such a complicated system.

PC/PDA/MCU Communication: The communication links of the SearchBot were one of the things we considered in our project. The PC/PDA link required a Matlab script and a modified open source program. The Matlab function was easily implemented due to our previous knowledge of the language. The C# open source executable proved to be slightly more challenging because we had to first understand the functions of the program and then modify them to meet our needs. However, the most difficult aspect was sending RS232 data from the PDA to the microcontroller because embedded C++ was completely foreign to us before the project. We used the MSDN help and eventually succeeded in sending transmissions to the microcontroller. One small problem was that the PDA would occasionally trigger the receive interrupt on the Mega32 when we were not sending data, which caused errors in the state machine. We fixed this by setting unique start and stop bytes to bookend our data packet so that all other receptions were ignored. Overall, the PC-to-PDA communication link is stable and efficient, making it an excellent design decision.

Car Construction: The assembly of the SearchBot chassis was straightforward. We bought an Acroname PPRK kit and built our car with the included pieces. We originally planned to house the prototype board with the Mega32 in the underbelly of the robot but it did not fit. We moved it to the top of the car and placed the PDA beside it. The camera was attached to a solder board which was screwed to the chassis. Overall, all the different hardware fit snugly on the car and we felt that it was not too crowded. We mounted strips of bendable aluminum to the front of the vehicle to serve as a catchbin. A recurrent problem was that the ball often bounced out of the bin. We alleviated this by making the catchbin larger. Because of this change, the ball is successfully captured more than 80% of the time.

Camera: Using the camera for vision is probably the most integral and challenging element of this project. A lot of research was needed, particularly in camera terminology and computer vision. We ran into some problems early on trying to communicate with the camera using I2C. CodeVision provided some libraries that handled this protocol but we continually failed in writing to the camera. After much debugging, we discovered that the InitI2C function in the library did not work. We solved this by eliminating it and inserting delays between writes. A further complication was that the camera has over 20 control registers and it was difficult to decipher the changes needed to ensure proper output. Fortunately, we found another project with similar requirements and used it as a reference in deciding our setting changes. The AVRCam project is referenced in the appendix. Further tweaking of the frame rate and resolution finally yielded a data stream that was decodable.

After properly configuring the camera settings, we had to receive and decode pixel data. This proved to be quite difficult because of timing issues. After much debugging, we found that the most effective way to read data was to slow down the frame rate to about 4 fps. Since we only require one image to move the car for about a second, this is an acceptable tradeoff. With the raw data, we then had to extract the color channels to determine color and maneuver the car. Since we read out RGB data, we decided to set a threshold on how much larger the target channel must be compared to the other two. After extensive testing, we found a threshold that both maximized range and minimized false triggering.

Car Control: Our car control was rather straightforward to implement but required a lot of tweaking in order to optimize motion. Controlled Mode was easily implemented and works very well. Along the way, we noticed that the rate at which the wheels turn changes depending on a variety of factors (battery life, floor friction) and thus implemented a calibration mode to make it easy for the user to get accurate movements. Autonomous Mode required a lot more work. Our search algorithm works well most of the time and should be able to find the ball within a 4 meter radius. However, there were problems in the way the car captured and released the ball due to the inelegance of our catchbin. We found that we got much better results if we added some pauses between movements so that the ball does not bounce around too much between the aluminum rails. Changing from a light ping-pong ball to a heavier squash ball also helped. After much testing and fine-tuning, the SearchBot can now search for and retrieve red balls effectively. Each ball is captured and pushed to a green-colored base, where it is released.

In hindsight, the SearchBot was perhaps too large a project to tackle in such a short span of time. Almost every facet of this project was foreign to us at the beginning and it required quite a bit of research to get the robot running properly. However, the SearchBot proved to be a success and we are very pleased with its design, look and performance. The SearchBot almost perfectly matches our initial specifications and actually contains quite a few extra features. We were a little disappointed with our catchbin, which lost the ball 20% of the time, and would have made something more effective if we had had more time (maybe by creating a gate using piezoelectric wiring). We would also have tried to make a more efficient searching algorithm if we had not had such severe time constraints. On the other hand, we are extremely impressed with the stability and effectiveness of our PC/PDA/MCU communication link, which has never failed and is very fast. The camera, which required extensive debugging and testing, was another success, able to find ping-pong balls from over 4 feet away at 88x72 resolution. Finally, we were very happy with the overall construction of the project, which we found to be attractive and complete. A video demonstrating the car's capabilities can be found here.

Further Developments: The SearchBot project can be expanded in many different directions so that it becomes a useful research instrument. A possible improvement is in the task that it performs. An ambitious person could write another novel assignment for the SearchBot to perform using CodeVision. Since the car and PDA both have transmitting and receiving capabilities built into their code, one could also send information from the Mega32 to a PC terminal. One exciting possibility is to send camera data to the PC to be viewed by the user. Finally, one could add other sensors (acoustic, magnetic) and have the robot act as a multi-sensing autonomous vehicle, much like what the US military is currently researching.

Intellectual Property and Legal Considerations: The SearchBot, like all good engineering projects, could not be successful without referencing previous work. Designs by Youngchul Song and Bruce Lei of the ACSP group for previous autonomous vehicles were important inspirations for this project. The AVRCam project and Oiza's work provided us with helpful hints in designing and testing our camera. MSDN, Bruce Land's examples and the RAPICSharp open-source code were crucial in getting our PC/PDA/MCU communication link to succeed. These projects were all academic projects that are cited in this report and readily available online. As our project is academic in nature and is completely designed and constructed by us, we have not violated any intellectual property laws. A further legal consideration is that we are using a WLAN link that must conform to federal laws. As expected for consumer products, the Dell Axim is compliant with FCC regulations; its manual says “47 CFR Part 15, Subpart C (Section 15.247). This version is limited to channel 1 to channel 11 by specified firmware controlled in the U.S.A.”

Standards: As mentioned above, our project used several different standards. For each, we have done the necessary research to confirm that we are in fact complying with its specifications.

Throughout the SearchBot project, we consciously considered the IEEE Code of Ethics when making design and implementation decisions. The Code is as follows:

As with all engineering designs, we made sure that our creation will not hurt any individual who may use or come in contact with it. Since our robot is autonomous and thus is not always under direct human control, extra precautions were needed so that it can be immobilized quickly if it poses a danger to anyone or anything. To facilitate this, the vehicle can be stopped by running the Matlab script with input “0.” Moreover, a power switch is easily accessible on the top of the vehicle and will turn off every component in the vehicle if flipped.

In our

project, we referenced many different sources. To conform to point #7 in the

code of ethics, these sources were carefully cited and linked in our report so

they can be properly acknowledged for their contributions.

Inherently, this project is about enhancing knowledge in electrical engineering

and progressing research in sensor networks. Thus, the SearchBot relates

directly to points #5, 6 and 10 in the code of ethics. Throughout the project,

we have been dedicated to improving our creativity, learning how to troubleshoot

problems and becoming better engineers. Moreover, our project is directly

pertinent to research conducted here at Cornell University, which is relevant to

US military defense and has numerous civilian applications.

Code:

Schematic:

Parts:

| Part | Cost | Supplied by |
| --- | --- | --- |
| Acroname PPRK robot vehicle | $325.00 | ACSP group |
| Dell Axim x51 PDA | ~$500.00 | ACSP group |
| PDA RS232 Serial Connection | ~$50.00 | ACSP group |
| OV6620 optical camera | $43.65 | ACSP group |
| Batteries (9V and 4 AA) | $0.00 | ACSP group |
| Mega32 microcontroller | $8.00 | ECE476 lab |
| Custom PC board | $5.00 | ECE476 lab |
| Solder board | $2.50 | ECE476 lab |
| Max233CPP | $7.00 | ECE476 lab |
| RS232 Connection | $8.00 | ECE476 lab |
| Wire, capacitors and resistors | $0.00 | ECE476 lab |
| Aluminum | $0.00 | Scrap |
| Red Balls | $0.00 | Scrap |
| Green Poster Board | $0.00 | Scrap |

Work Distribution:

| John: |

|

| Diego: |

|

References:

| Datasheets: |

| Code referenced: |

|

| Vendor Sites: |

|

| Background Sites: |