Introduction

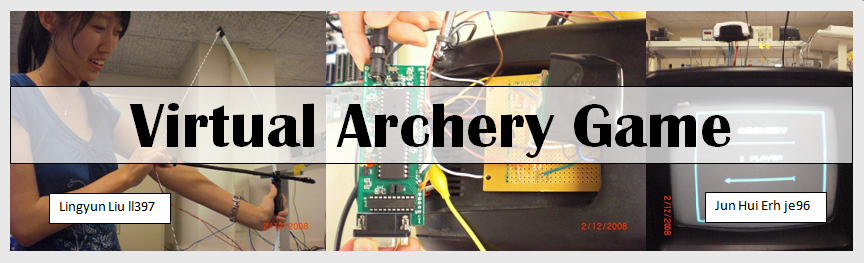

For our final project, we built a virtual archery game. The game simulates firing an arrow at a target without any arrow actually flying.

The purpose of this project was to build an interactive arcade-style game using an Atmega644 microcontroller. In our game, the user navigates the game only with the equipment provided (the bow and arrow). There are two modes of play to choose from: 1 player and 2 player mode. In 1 player mode, the user plays a round of archery consisting of 3 shots, after which the results are summarized. In 2 player mode, the game consists of 3 rounds in which each player has 3 shots per round. The results are summarized after each round, and the winner is announced after the final round.


High Level Design

Rationale

Archery is not a very common sport and it is not conveniently played at home. Parents of young kids are generally reluctant to purchase an archery set since it is potentially dangerous due to the flying arrows. We had an idea that a virtual archery video game would be perfect for youths who are aspiring archers or just for entertainment. The idea is influenced by games such as Wii tennis and virtual golf.

Background math

Because the pixels on our black-and-white TV screen (142 by 199 pixels) are not square, a circle in pixel coordinates would look stretched, so we have to draw an ellipse in order to make it look like a circle on the TV screen. The algorithm we use to draw the ellipse is from “A Fast Bresenham Type Algorithm for Drawing Ellipses” by John Kennedy.

Fast Ellipse Drawing

The main idea of the algorithm is to analyze and manipulate the ellipse equation so that only integer arithmetic is used in all the calculations.

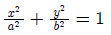

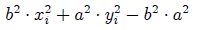

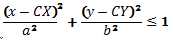

The equation $\frac{x^2}{a^2} + \frac{y^2}{b^2} = 1$ represents an ellipse centered at the origin, with horizontal semi-axis a and vertical semi-axis b, which is to be plotted on a grid of discrete pixels where each pixel has integer coordinates. There is no loss of generality here, since any ellipse whose center is not at the origin can be translated so that its center is at (0,0). We plot the ellipse by comparing the errors associated with the x and y coordinates of the points that we are plotting.


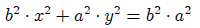

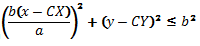

In order to avoid divisions, we rewrite the above equation in the form

$$b^2x^2 + a^2y^2 - a^2b^2 = 0.$$

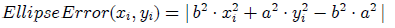

For a given point $P(x_i, y_i)$, the quantity $b^2x_i^2 + a^2y_i^2 - a^2b^2$ is a measure telling where P lies in relation to the true ellipse. If this quantity is negative, P lies inside the true ellipse; if it is positive, P lies outside the true ellipse; and when it is 0, P is exactly on the ellipse.


We define a function, which we call the EllipseError, as an error measure for each plotted point:

$$\text{EllipseError}(x_i, y_i) = \left|\,b^2x_i^2 + a^2y_i^2 - a^2b^2\,\right|$$

In the first quadrant the slope of the ellipse's tangent line is always negative. Starting on the x-axis and wrapping in a counterclockwise direction, the slope is large and negative, which means the y-coordinates change faster than the x-coordinates. But once the tangent line slope passes through the value -1, the x-coordinates start changing faster than the y-coordinates.

Thus we will calculate two sets of points in the first quadrant. The first set starts on the positive x-axis and wraps in a counterclockwise direction until the tangent line slope reaches -1. The second set will start on the positive y-axis and wrap in a clockwise direction until the tangent line slope again reaches the value -1.

For the first set of points we will always increment y and test when to decrement x. For the second set of points we will always increment x and decide when to decrement y.

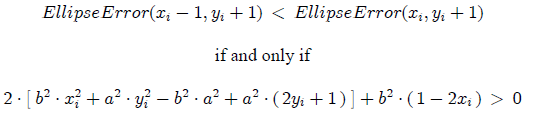

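Our test for when to decrement x in the first set of points compares the two values EllipseError$(x_i - 1, y_i + 1)$ and EllipseError$(x_i, y_i + 1)$: x should be decremented when the first is smaller. Because $b^2(x_i - 1)^2 = b^2x_i^2 + b^2(1 - 2x_i)$, the two signed errors differ by $b^2(1 - 2x_i)$, and the comparison reduces to the integer test

$$2\left(b^2x_i^2 + a^2(y_i+1)^2 - a^2b^2\right) + b^2(1 - 2x_i) > 0.$$

When this last inequality holds, we decrement x when we plot the next point.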

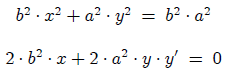

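We can analyze the tangent line slope by implicitly differentiating the equation:

$$2b^2x + 2a^2y\,\frac{dy}{dx} = 0 \quad\Rightarrow\quad \frac{dy}{dx} = -\frac{b^2x}{a^2y}.$$

For the first set of points we continue to plot points as long as $b^2x_i \ge a^2y_i$, i.e., while the tangent slope is at or below -1 (its magnitude is at least 1). Four points, one in each quadrant, are plotted at a time by symmetry.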

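The two point sets above can be sketched in C as follows. This is a minimal, host-runnable sketch that follows the structure of Kennedy's published procedure rather than our project code: `plot_ellipse`, `plot4`, `set_pixel`, the byte-per-pixel `screen` array and its size are hypothetical stand-ins for the Lab 3 frame buffer and pixel routine. It keeps the signed quantity $b^2x^2 + a^2y^2 - a^2b^2$ in `err` and updates it incrementally, so the loops use only additions and comparisons. `int32_t` is used because terms such as $2b^2a$ overflow the Atmega644's 16-bit `int` for radii of a few dozen pixels.

```c
#include <stdint.h>

#define SCREEN_W 144   /* hypothetical frame buffer size */
#define SCREEN_H 200

/* Hypothetical byte-per-pixel frame buffer standing in for the video buffer. */
static unsigned char screen[SCREEN_H][SCREEN_W];

/* Hypothetical pixel routine; ignores points that fall off the screen. */
static void set_pixel(int x, int y)
{
    if (x >= 0 && x < SCREEN_W && y >= 0 && y < SCREEN_H)
        screen[y][x] = 1;
}

/* Plot the four symmetric points, one per quadrant. */
static void plot4(int cx, int cy, int x, int y)
{
    set_pixel(cx + x, cy + y);
    set_pixel(cx - x, cy + y);
    set_pixel(cx - x, cy - y);
    set_pixel(cx + x, cy - y);
}

/* Ellipse b^2 x^2 + a^2 y^2 = a^2 b^2 centred at (cx, cy), integer math only. */
void plot_ellipse(int cx, int cy, int32_t a, int32_t b)
{
    int32_t two_a2 = 2 * a * a, two_b2 = 2 * b * b;
    int32_t x, y, x_change, y_change, err, stop_x, stop_y;

    /* Set 1: start at (a, 0), always step y, decide when to step x. */
    x = a; y = 0;
    x_change = b * b * (1 - 2 * a);   /* E(x-1, y) - E(x, y) */
    y_change = a * a;                 /* E(x, y+1) - E(x, y) */
    err = 0;                          /* signed E(x, y)      */
    stop_x = two_b2 * a;              /* 2 b^2 x */
    stop_y = 0;                       /* 2 a^2 y */
    while (stop_x >= stop_y) {        /* |slope| >= 1 */
        plot4(cx, cy, (int)x, (int)y);
        y++;
        stop_y += two_a2;
        err += y_change;
        y_change += two_a2;
        if (2 * err + x_change > 0) { /* (x-1, y) is the closer pixel */
            x--;
            stop_x -= two_b2;
            err += x_change;
            x_change += two_b2;
        }
    }

    /* Set 2: start at (0, b), always step x, decide when to step y. */
    x = 0; y = b;
    x_change = b * b;                 /* E(x+1, y) - E(x, y) */
    y_change = a * a * (1 - 2 * b);   /* E(x, y-1) - E(x, y) */
    err = 0;
    stop_x = 0;
    stop_y = two_a2 * b;
    while (stop_x <= stop_y) {        /* |slope| <= 1 */
        plot4(cx, cy, (int)x, (int)y);
        x++;
        stop_x += two_b2;
        err += x_change;
        x_change += two_b2;
        if (2 * err + y_change > 0) { /* (x, y-1) is the closer pixel */
            y--;
            stop_y -= two_a2;
            err += y_change;
            y_change += two_a2;
        }
    }
}
```

For example, `plot_ellipse(60, 100, 20, 32)` marks the four axis points (80, 100), (40, 100), (60, 132) and (60, 68) together with the curve between them.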

Logical structure

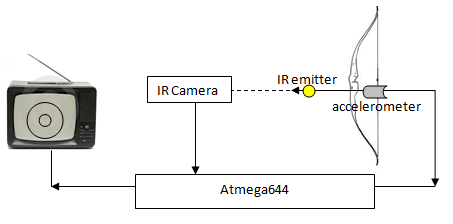

The video game has two modes: single player and two-player mode. In single player mode, a player fires three shots and gets his/her score after the third shot. The figure below shows the high level design for the game. The IR emitter is positioned on the tip of the arrow while the accelerometer is attached to the end of the arrow. An IR camera will be stationed on top of the TV. The IR emitter and the accelerometer on the arrow will be connected to a 3.3 V source (from a voltage regulator). The IR camera will obtain the position of the IR emitter every two frames. The analog data from the accelerometer is inputted to the Atmega644 via its ADC input.

Hardware/software tradeoff

The major constraint we had was our decision to run the MCU on a 16 MHz crystal. This limits the amount of code that can run during the vertical sync of the video signal, since only a limited number of CPU cycles fit within the vertical sync period. We originally thought about making the game in color, but that turned out to be impossible with just our Atmega644 running at 16 MHz. Also, additional hardware such as the Analog Devices AD724 RGB-to-NTSC encoder and the ELM304 NTSC video generator may have been too expensive for our budget.

Also, we had to stagger most of the calculations so that they are carried out over many consecutive vertical sync periods instead of just one. For instance, since we had to draw 6 ellipses on the screen, we staggered the work by drawing one ellipse per frame, which results in a pleasant animation effect. This makes the software design more tedious, since a counter is needed to keep track of which section of code to run in which vertical sync period.
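The one-ellipse-per-frame staggering can be sketched as a counter-driven step function. This is a hypothetical sketch, not our code: `draw_target_step`, the stub `draw_ellipse`, the ring radii and the innermost-first drawing order are all assumptions for illustration.

```c
#define NUM_RINGS 6

/* Hypothetical ring radii (horizontal a, vertical b), innermost first. */
static const int ring_a[NUM_RINGS] = {5, 10, 15, 20, 25, 30};
static const int ring_b[NUM_RINGS] = {8, 16, 24, 32, 40, 47};

static unsigned char count = 0;   /* next drawing step to perform */
static int rings_drawn = 0;       /* bookkeeping for this sketch only */

/* Stand-in for the real ellipse routine; one call is assumed to fit in
   a single vertical-sync window, but six calls are not. */
static void draw_ellipse(int cx, int cy, int a, int b)
{
    (void)cx; (void)cy; (void)a; (void)b;
    rings_drawn++;
}

/* Call once per frame during vertical sync. Draws at most one ring per
   call and returns 1 once the whole target is on the screen. */
int draw_target_step(int cx, int cy)
{
    if (count < NUM_RINGS) {
        draw_ellipse(cx, cy, ring_a[count], ring_b[count]);
        count++;
    }
    return count >= NUM_RINGS;
}
```

Resetting `count` to 0 on each state transition lets the same counter drive the steps of the next transition.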

Standards and trademarks

We used the NTSC standard for generating video signal. We generated a non-interlaced, black and white TV signal, which means our game can be played on any standard NTSC CRT TV. Our game didn't involve any IEEE standards.

Since our infrared devices are used for detection and not communication, we do not have to conform to standards published by the Infrared Data Association (IrDA).


Program/hardware design

Hardware design

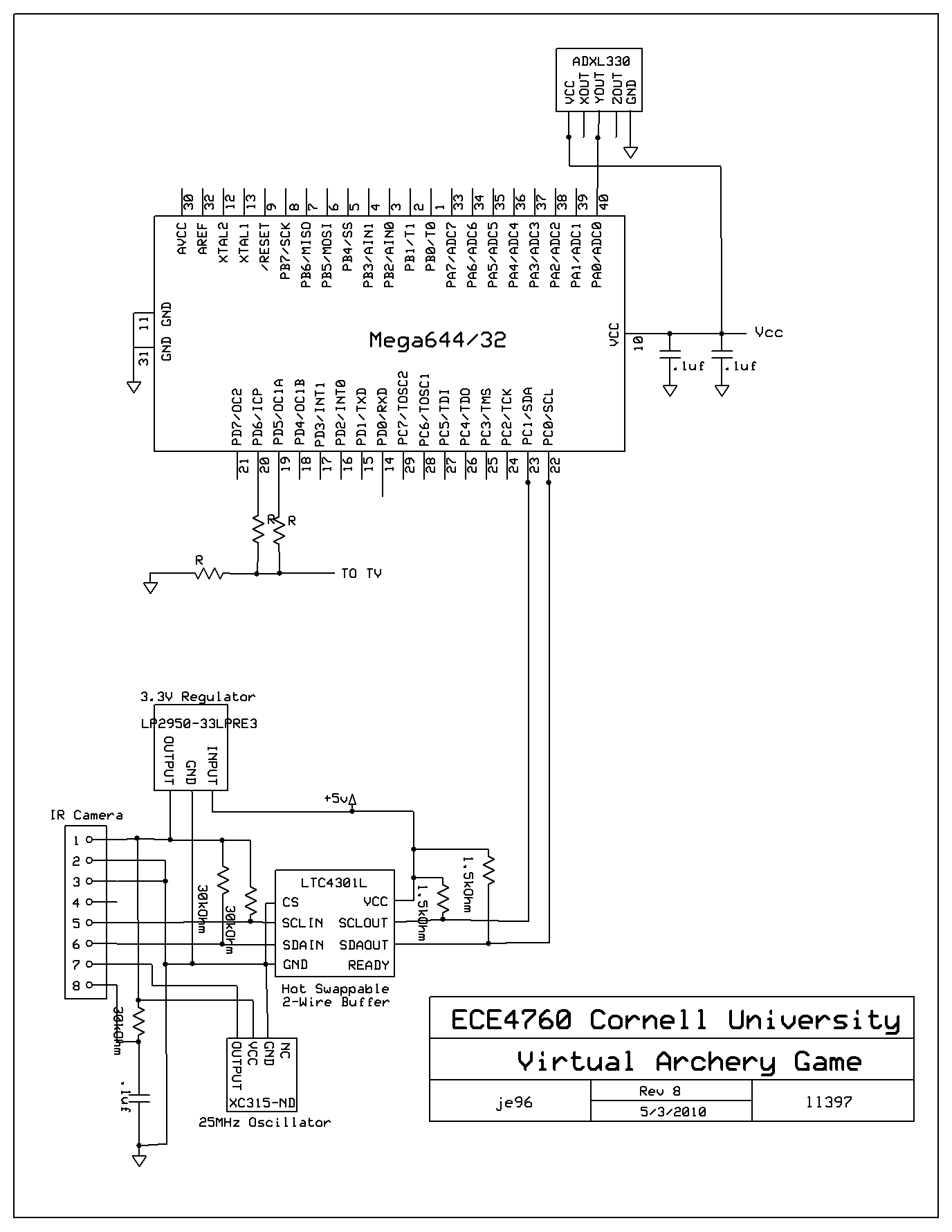

The hardware schematic can be found in the Appendix section.

Overview

We used the Atmega644 on its protoboard as our MCU. Building the protoboard was by far the most time-consuming aspect of the project due to the large number of components to be soldered. The other major components are the IR camera, the accelerometer and the video output circuit.

IR Camera

The IR camera we used was taken from a Wiimote. It was chosen for its wide viewing angle (33 degrees horizontally and 23 degrees vertically), its long range (about 20 feet) and its built-in image processing, which reports the positions of IR sources at a resolution of 1024x768.

The circuit used to connect the camera is based on kako’s schematic. The only change we made was that, instead of building the crystal oscillator circuit shown in the original schematic, we bought a crystal oscillator (XC315-ND) to clock the camera.

The camera communicates with the MCU via the I2C protocol. A hot-swappable 2-wire bus buffer (LTC4301L) isolates the camera's SCL and SDA lines from the MCU, because the camera runs from a 3.3 V supply while our MCU is powered at 5 V. The pull-up resistor values are taken from kako’s schematic. In addition, we also enable the internal pull-up resistors in the Atmega644.

Accelerometer

The accelerometer used was an ADXL330, salvaged from a broken Wiimote. It was chosen due to its low cost. The ADXL330 is a ±3 g, 3-axis accelerometer from Analog Devices. Each of its X, Y and Z outputs needs a capacitor to ground. Since our accelerometer came from the Wiimote with the required capacitors already soldered on, we were able to read the outputs directly without additional components.

In our game, we used the Y-axis of the accelerometer as it is the direction of shooting of the arrow. It is connected to pin A0 (ADC pin) of the Atmega644.

Video output

The video output circuit is reused from Lab 3, since the TV is driven in exactly the same way as in Lab 3.

Software design

All software code can be found in the Appendix section.

Overview

Our code uses the MCU for the following tasks:

- Initialize and read data from Wiimote IR Camera via I2C interface

- Detect shooting motion using an accelerometer connected to an ADC pin

- Generate video for the game

- Transition between different states of the game

IR Camera

We use the IR camera to read the position of the IR LEDs mounted on the arrow. Before using it, we have to initialize it and adjust its settings. This is done via the I2C protocol using the TWI interface in the Atmega644. The library used for communication via the I2C protocol was taken from last year’s 3D scanner project (i2cmaster.h and twimaster.c).

We followed the instructions on WiiBrew for the camera’s device address and initialization process. In writing the code that initializes the camera and sets its sensitivity, we used Johnny Lee’s code written for the PIC18F4550 and kako’s Wii IR camera example code written for the Atmega168. We used the maximum sensitivity setting suggested by inio on WiiBrew; we found by trial and error that this setting detected our IR LED most reliably.

The IR camera returns the X and Y coordinates of the IR LED. Each coordinate is encoded in 10 bits, where the X coordinate has a range of 0 to 1023 and the Y coordinate has a range of 0 to 767. Our screen has a 144x199 resolution. We convert the camera coordinates to screen coordinates using the equations below, where Ix1 and Iy1 are the coordinates obtained from the camera.

x = (Ix1 >> 3) + 5;

y = (Iy1 >> 2) + 5;

This translates into a range of 5 – 132 and 5 – 196 for the X and Y coordinates on the screen. This gives us a sufficient resolution that covers almost our entire screen.

When the camera is unable to detect an IR source, it outputs 0xFFFF for both the X and Y coordinates. Due to overflow, this translates into the coordinates (132, 5) on the screen. Since we draw the point the user is pointing at, when the LED is out of the camera's range it appears as a point in the upper right corner of the screen.
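A sketch of decoding one camera object record and mapping it to the screen follows. It assumes the camera is in the extended report mode, in which WiiBrew documents each tracked object as three bytes (low 8 bits of X, low 8 bits of Y, then the two high bits of Y and X plus a size nibble), and that an empty slot reads back as 0xFF bytes. The function names are hypothetical. Unlike the behaviour described above, where the no-source value is drawn at (132, 5), this sketch reports the empty slot explicitly so the caller can choose not to draw it.

```c
#include <stdint.h>

/* Decode one 3-byte object record (extended mode, layout per WiiBrew):
     byte 0: X bits 7..0
     byte 1: Y bits 7..0
     byte 2: Y bits 9..8 in bits 7..6, X bits 9..8 in bits 5..4, size in 3..0
   Returns 0 if the slot is empty (all 0xFF), 1 otherwise. */
int decode_ir_object(const uint8_t rec[3], uint16_t *ix, uint16_t *iy)
{
    if (rec[0] == 0xFF && rec[1] == 0xFF && rec[2] == 0xFF)
        return 0;
    *ix = (uint16_t)(rec[0] | ((rec[2] & 0x30) << 4));
    *iy = (uint16_t)(rec[1] | ((rec[2] & 0xC0) << 2));
    return 1;
}

/* Map camera coordinates (0-1023, 0-767) to screen coordinates with
   the same shifts and offsets as the equations in the text. */
void camera_to_screen(uint16_t ix, uint16_t iy, uint8_t *x, uint8_t *y)
{
    *x = (uint8_t)((ix >> 3) + 5);   /* 5..132 */
    *y = (uint8_t)((iy >> 2) + 5);   /* 5..196 */
}
```

For the record {0x10, 0x20, 0x50}, the high bits add 256 to each coordinate, giving camera position (272, 288) and screen position (39, 77).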

Detect shooting

We detect shooting by reading the accelerometer value on the ADC pin (pin A0). While the arrow on the bow has not been released, the reading stays at a fairly steady value (between 80 and 85) that differs from try to try. We obtain this value during initialization and store it in the variable thres. When a shot is fired, the reading drops from thres to a value close to 0 and then returns to thres. Thus, we detect that a shot has been fired when the ADC value is below thres/4, and set shotFlag to 1. We set shotFlag back to 0 only after all the processing needed by the game play has been done. This prevents false positives, since after a shot is fired the accelerometer reading may oscillate somewhat before returning to its resting value. Since the processing time is sufficiently long (about two TV screen refreshes), this prevents us from recognizing one shot as multiple shots.

The fraction 1/4 used to detect a shot was obtained by trial and error. A larger fraction causes any small movement to be detected as a “shot” (so unintended shots are recognized), while a smaller fraction requires the shot to be fired at a higher speed (which makes the game harder to use). The value we chose balances these two problems.
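The threshold-and-latch logic above can be sketched as follows, assuming an 8-bit ADC reading taken once per frame. `shot_init`, `shot_update` and `shot_done` are hypothetical names; `thres` and `shotFlag` follow the text. The latch is what keeps the post-shot oscillation from registering as extra shots.

```c
#include <stdint.h>

static uint8_t thres;          /* resting reading captured at start-up */
static uint8_t shotFlag = 0;   /* 1 while a shot is being processed */

/* Record the resting accelerometer reading (taken during initialization). */
void shot_init(uint8_t resting_adc)
{
    thres = resting_adc;
    shotFlag = 0;
}

/* Call once per frame with the latest 8-bit ADC reading.
   Returns 1 only on the frame where a new shot is recognized. */
int shot_update(uint8_t adc)
{
    if (!shotFlag && adc < thres / 4) {
        shotFlag = 1;   /* latch: ignore the rebound until shot_done() */
        return 1;
    }
    return 0;
}

/* Call from the game logic once the shot has been fully processed. */
void shot_done(void)
{
    shotFlag = 0;
}
```

The threshold is taken relative to `thres` rather than fixed because the resting reading differs from try to try.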

Video

The video generation code used is from Lab 3 and video_codeGCC644.c. The ISR that generates the horizontal and vertical sync and displays the pixels on the screen is unchanged.

We added three methods to draw and erase on the screen:

- video_erasechar(char x,char y,char c): erase character c at position x,y.

- video_erases(char x,char y,char *str): erase string str starting at position x,y.

- video_putsymbol(char x,char y,char c): draw an 8x8 symbol at index c in symbolbitmap at position x,y.

symbolbitmap is a table of 8x8 symbols stored in program memory. About half of the bitmaps used are taken from the Duckhunt video game project from Spring 2005.
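Drawing and erasing a symbol both come down to walking the 8 rows of its bitmap and setting or clearing only the pixels whose bits are 1. A hypothetical sketch follows, assuming a 1-bit-per-pixel frame buffer with the most significant bit as the leftmost pixel; the buffer layout, `put_symbol`, `write_pixel` and the two-entry `symbolbitmap` contents are illustrative, not the project's. On the Atmega644 the table would live in program memory and be read with `pgm_read_byte()`.

```c
#include <stdint.h>

#define SCREEN_W 144                     /* hypothetical width in pixels */
#define SCREEN_H 200
#define BYTES_PER_LINE (SCREEN_W / 8)

/* Hypothetical 1-bit-per-pixel frame buffer, MSB = leftmost pixel. */
static uint8_t screen[SCREEN_H * BYTES_PER_LINE];

/* Hypothetical symbol table, one byte per row, MSB = leftmost pixel. */
static const uint8_t symbolbitmap[][8] = {
    {0x81, 0x42, 0x24, 0x18, 0x18, 0x24, 0x42, 0x81},   /* 'X' marker */
    {0x3C, 0x42, 0x81, 0x81, 0x81, 0x81, 0x42, 0x3C},   /* ring */
};

/* Set (on = 1) or clear (on = 0) one pixel, ignoring off-screen points. */
static void write_pixel(int x, int y, int on)
{
    if (x < 0 || x >= SCREEN_W || y < 0 || y >= SCREEN_H) return;
    uint8_t mask = (uint8_t)(0x80 >> (x & 7));
    uint8_t *p = &screen[y * BYTES_PER_LINE + (x >> 3)];
    if (on) *p |= mask; else *p &= (uint8_t)~mask;
}

/* Draw (on = 1) or erase (on = 0) 8x8 symbol c with its top-left at (x, y).
   Only the symbol's set bits are touched, so erasing clears exactly the
   pixels the symbol drew. */
void put_symbol(int x, int y, int c, int on)
{
    for (int row = 0; row < 8; row++) {
        uint8_t bits = symbolbitmap[c][row];
        for (int col = 0; col < 8; col++)
            if (bits & (0x80 >> col))
                write_pixel(x + col, y + row, on);
    }
}
```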

In the video generation code used, we are only able to update the screen during the vertical sync (when linecount equals 231). We have 60 line times to do so, which translates to about 4 ms. This severely limits the amount of processing we can do in each frame.
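As a rough check of the 4 ms figure, assuming the nominal NTSC line period of about 63.5 µs and the 16 MHz clock:

$$60 \times 63.5\ \mu\text{s} \approx 3.8\ \text{ms}, \qquad 3.8\ \text{ms} \times 16\ \text{MHz} \approx 61{,}000\ \text{CPU cycles per frame}.$$

Everything done in a frame (ADC read, camera read or screen update) has to fit within that budget.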

In each frame, we need to do the following processing:

- Read value of accelerometer from ADC. This is executed at every frame.

- Obtain data from IR camera of the position of the LED. This is executed every alternate frame (when frame flag is 0).

- Draw and update screen. This is executed every alternate frame (when frame flag is 1).

Even when alternating between reading data from the camera and updating the screen, there is still not enough time to draw large objects or to transition between states (each state has a different screen, so a transition requires erasing everything from the previous state and drawing the new state). We handle this by breaking the erasing and drawing for each state transition into small steps and performing one step in each new frame. This is controlled using the count variable.
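The alternating schedule above amounts to a small per-frame dispatcher. In this sketch the three hook functions and their counters are hypothetical stand-ins for the real ADC, I2C and drawing code, `on_vertical_sync` is a hypothetical name, and `frame` plays the role of the frame flag.

```c
#include <stdint.h>

/* Hypothetical per-frame hooks; the counters exist only for this sketch. */
static int adc_reads, camera_reads, screen_updates;
static void read_accelerometer(void) { adc_reads++; }
static void read_ir_camera(void)     { camera_reads++; }
static void update_screen(void)      { screen_updates++; }

static uint8_t frame = 0;   /* frame flag: alternates 0, 1, 0, 1, ... */

/* Call once per frame, at the start of the vertical sync window. */
void on_vertical_sync(void)
{
    read_accelerometer();          /* every frame */
    if (frame == 0)
        read_ir_camera();          /* frame flag 0 */
    else
        update_screen();           /* frame flag 1 */
    frame ^= 1;
}
```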

Game play

The game play was the most time-consuming part of writing our code. Due to the number of states that had to be displayed, it was a lot more complex than what we had written for Lab 3. Because of the memory constraints we faced, we had to make the software as efficient as possible. Thus, we had to combine certain states to stay within the memory limit.

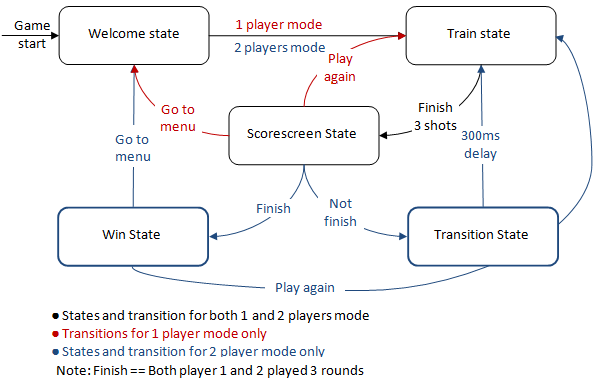

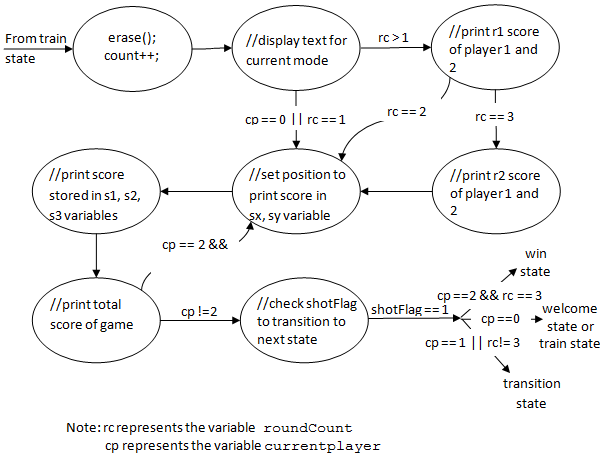

A simplified state machine of our game is shown below.

We start the game by initializing all variables and flags to their default values. The game starts in the Welcome state. At each state transition, we erase the display of the previous state using erase(). We then draw the new display incrementally, controlled by the count variable.

An overview of each state is described below.

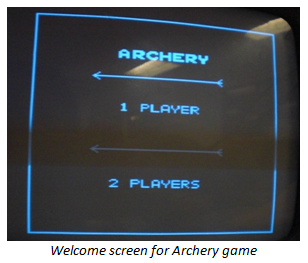

1. Welcome state: This is the state the game starts in. An example of it is shown in the figure to the right. In this state, the user can choose to play the game in either 1 player or 2 player mode. The user navigates the game by pointing the arrow at the appropriate section, and the current selection is marked by an ‘X’ beside the text (not shown in figure). The user makes a selection by “shooting” at it. Both selections transition to the train state. We set currentplayer to 0 in 1 player mode, and set currentplayer to 1 and roundCount to 1 in 2 player mode.


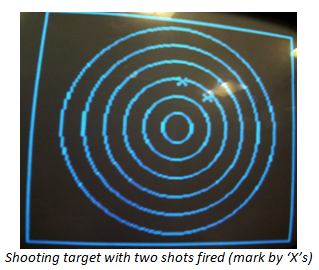

2. Train state: This is the state where the shooting target is drawn. The shooting target consists of 6 ellipses (due to the vertical skew of the screen, they appear as circles). They are drawn using Bresenham’s ellipse algorithm, which requires computing each point on the ellipse. The algorithm is explained in more detail in the following section. This is computationally intensive, so we draw only one ellipse per frame.


The user can fire 3 shots at the target. Each shot is marked by an ‘X’. After each shot, we calculate the score and store it in the variables s1, s2, s3 (or p2s1, p2s2, p2s3 for player 2 in 2 player mode).

After 3 shots are fired, there is a 500 ms delay before transitioning to the scorescreen state.

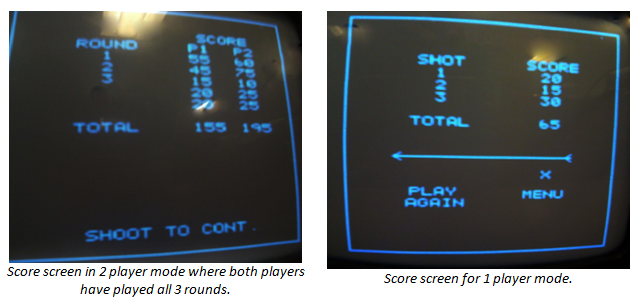

3. Scorescreen state: This was the most challenging state to write, because the score screen differs depending on the mode the game is played in (1 player or 2 players). This is further complicated in 2 player mode, where we have to print the scores for previous rounds as well as the score for the current round.

We determine the current mode the game is in by examining the variables currentplayer and roundCount.

- currentplayer: Set to 0 in 1 player mode. In 2 player mode its value is 1 or 2, indicating which player (1 or 2) has just played.

- roundCount: Not used in 1 player mode. In 2 player mode its value is 1, 2 or 3, indicating the current round of the game.

We made a state machine to select the different score screens depending on the currentplayer and roundCount variables. Each state is represented by a value of count.

In 2 player mode, since we want to print the scores of both players for the current round, we use a flag p2print, which is only used when currentplayer is 2 (indicating that player 2 played last). p2print indicates whether we have already printed player 2's score. When currentplayer equals 2, we first print player 1's score for this round and check whether p2print is set. If it isn’t, we set p2print, copy the values stored in p2s1, p2s2 and p2s3 into s1, s2 and s3 respectively, and transition to the state that prints the score.

A simplified state machine is shown below. In our implementation, some states are represented by multiple count values, and some states are combined into a single count value. The state machine drawn is the template we used while coding, and it is a fair representation of our method.

4. Transition state: This state is only used in 2 player mode. It is a screen that shows, for 300 ms, the current round of the game and whose turn it is.

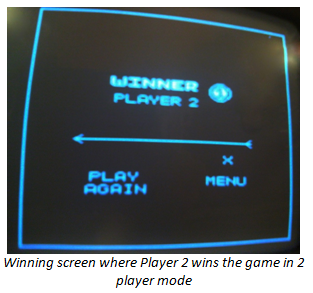

5. Win state: This state is only used in 2 player mode. It is a screen that shows the winner of the game, or announces a tie if the total scores of both players are equal. Similar to the score screen in 1 player mode, the user can choose to return to the welcome screen or play another game in 2 player mode.


Bresenham Algorithm for Drawing Ellipses

Because the screen is vertically skewed, we need to draw ellipses that appear as circles. We drew the ellipses using Bresenham’s Algorithm for Drawing Ellipses; our implementation is based on “A Fast Bresenham Type Algorithm for Drawing Ellipses” by John Kennedy. The math behind the algorithm is described in the High Level Design section, and the method we used to draw the ellipses follows the algorithm described there.

Score calculation

We drew 6 ellipses as our shooting target. A shot in the innermost ellipse has a maximum score of 30 points. The points decrease by 5 at each larger ellipse. Shots outside the ellipses have 0 points.

Thus, to calculate the score, we first have to determine which ellipse, if any, the shot lands in. We do this with a lookup table: for each X coordinate on the ellipse in the first quadrant, the table stores the corresponding Y coordinate. For a point (x,y), with the target centered at (CX,CY), the table index is |x-CX|. If the value obtained from the table is less than |y-CY|, the point lies outside the ellipse. In addition, points where |x-CX| > a (where a is the horizontal semi-axis of the ellipse) also lie outside the ellipse.
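A sketch of the lookup-table scoring follows. The ring radii are hypothetical values chosen so that $b^2/a^2 \approx 2.5$, and for brevity the table is computed at start-up from the inequality $b^2x^2 + a^2y^2 \le a^2b^2$ (32-bit integers, no division) rather than from the plotted first-quadrant points as in the text; on the Atmega644 a precomputed table could be kept in program memory instead. `build_score_table`, `shot_score` and `half_h` are hypothetical names.

```c
#include <stdint.h>
#include <stdlib.h>

#define NUM_RINGS 6
#define MAX_A 30

/* Hypothetical ring sizes (innermost first); b^2/a^2 is about 2.5. */
static const int32_t ring_a[NUM_RINGS] = {5, 10, 15, 20, 25, 30};
static const int32_t ring_b[NUM_RINGS] = {8, 16, 24, 32, 40, 47};

/* half_h[r][dx]: largest |y - CY| still inside ring r at |x - CX| = dx. */
static uint8_t half_h[NUM_RINGS][MAX_A + 1];

void build_score_table(void)
{
    for (int r = 0; r < NUM_RINGS; r++) {
        int32_t a = ring_a[r], b = ring_b[r];
        for (int32_t dx = 0; dx <= a; dx++) {
            int32_t y = b;
            /* shrink y until b^2 dx^2 + a^2 y^2 <= a^2 b^2 */
            while (y > 0 && b * b * dx * dx + a * a * y * y > a * a * b * b)
                y--;
            half_h[r][dx] = (uint8_t)y;
        }
    }
}

/* 30 points in the innermost ring, 5 fewer per ring outward, 0 outside. */
int shot_score(int x, int y, int cx, int cy)
{
    int dx = abs(x - cx), dy = abs(y - cy);
    for (int r = 0; r < NUM_RINGS; r++)
        if (dx <= ring_a[r] && dy <= half_h[r][dx])   /* dx checked first */
            return 30 - 5 * r;
    return 0;
}
```

With these radii, `shot_score(76, 100, 70, 100)` returns 25: the shot is one pixel beyond the innermost ring's horizontal semi-axis of 5 but inside the second ring.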

Reused code/design

For the software code, we reused or referenced the following sources:

- I2C protocol: i2cmaster.h and twimaster.c from last year’s 3D scanner project.

- IR camera initialization: Johnny Lee’s code written for PIC18F4550 and kako’s code written for Atmega168.

- Video generation code: From Lab 3.

- Bitmaps: From Duckhunt video game project in Spring 2005.

Failed attempts

Software

A major problem when we started programming was communicating with the camera via the I2C protocol. Despite following the instructions line by line, we were persistently unable to address the slave device (the IR camera). After discussing this problem with other groups who were using the I2C protocol, we found out that we should use the i2cmaster.h and twimaster.c libraries from last year’s 3D scanner project.

In designing our game play, we first planned to have a separate set of states for the 1 and 2 player modes. This worked at first, while not all the states had been written. However, as the code grew in complexity, we quickly ran into memory problems, so we had to scale down the complexity of the game's states. When we first combined the scorescreen states of the 1 and 2 player modes, we had a different task for each count value. This made the code very long, and we eventually ran into memory limits again. We noticed that many steps in the different modes are quite similar; they are just executed at different times and displayed at different positions. We therefore constructed a state machine that simplified our code, although it was logically more difficult to write.

Another challenge for us was writing the score calculation. Because we draw ellipses to get the appearance of circles, we could not simply compare the distance from the shot to the center of the target with the radius of each circle to determine the score. We first tried to determine whether a point is inside an ellipse using the inequality $\frac{x^2}{a^2} + \frac{y^2}{b^2} \le 1$ (with x and y measured from the center of the target), storing the values $1/a^2$ and $1/b^2$ in a table in 8:8 fixed-point format. This gave very poor accuracy, because 8:8 does not have enough fractional bits to represent such small values. We then tried a 16:16 fixed-point format, but ran into overflow problems with long, and without writing the arithmetic in assembly, the method would not run fast enough for our score calculation. After these problems with the small ratios $1/a^2$ and $1/b^2$, we switched to a form with larger coefficients, hoping it would give better accuracy: $\frac{b^2}{a^2}x^2 + y^2 \le b^2$. Using this, we ran into overflow problems with ints. We then observed that the ratio $b^2/a^2$ for all our ellipses is between 2.4 and 2.6, so we tried approximating it as 2.5. This gave much better accuracy in detecting the smaller ellipses; however, the error was still high for the outermost ellipse, especially in detecting points outside all the ellipses. We eventually used the lookup table method explained above.

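A quick calculation shows why the 8:8 table lost accuracy. Assuming values were truncated to the representable 8:8 value just below them (rounding changes the numbers but not the conclusion), and using illustrative radii rather than our actual ones:

$$\text{8:8 resolution} = 2^{-8} = \tfrac{1}{256} \approx 0.0039$$

$$a = 20:\quad \frac{1}{a^2} = \frac{1}{400} = 0.0025 < \frac{1}{256} \;\Rightarrow\; \text{stored as } 0$$

$$a = 10:\quad \frac{1}{a^2} = 0.01 = \frac{2.56}{256} \;\Rightarrow\; \text{stored as } \frac{2}{256} \approx 0.0078 \quad (\approx 22\%\ \text{low})$$

In general, any ellipse with $a > 16$ has $1/a^2$ below the 8:8 resolution, which is consistent with the poor accuracy described above.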

Hardware

A major source of problems for us was bad connections. Due to our inexperience in soldering, the circuits we built at the beginning were not soldered properly and had faulty connections. This caused our electronics to work one day and fail the next, which was a major source of stress early on as we wondered whether we had burned our electronics.

Another problem was the IR camera. We initially followed kako’s schematic in building our circuit for the camera. However, we were persistently unable to obtain a clock signal for the camera, so we eventually bought a 25 MHz clock oscillator to clock it.


Results

Execution

Timing constraints were a major issue in our project. When transitioning between states, it was not possible to erase the screen and draw the new screen within one frame. This required us to break the work into smaller intermediate steps that could each be completed in the available time. This complicated the logic of our state machine, but it allowed our game to run cleanly and gave an animation-like effect in some cases (drawing the ellipses in the train state). The game runs without jitter or flicker. There is also no noticeable lag between the user’s movement and the game’s response.

Accuracy

The game performed as accurately as we expected. We experienced no false positives (wrongly recognized shots). The position the user is pointing at also translates accurately to the screen. The only time the game marks a point inaccurately is when the user is pointing outside the range of the camera. However, since this is outside the bounds of our shooting target and is not used to determine the user’s selection when navigating the game, it does not affect game play or interaction with the game.

Safety

Archery in general is a rather dangerous sport, with arrows flying all over the place. Thus, we took the utmost care in our design to prevent harm from projected arrows. We tightly secured the arrow to the bow. We also built a small clamp that limits how far the bow can be pulled back. This prevents overexcited users from pulling the bow so hard that the arrow flies off the bow.

The infrared light used to interact with the game is of sufficiently low intensity that it will not cause burns or pose any fire hazard.

Interference

The only transmission used (aside from regular wires) is via infrared. To the best of our knowledge, this transmission method does not interfere with the work of any other groups.

Human Factors and Usability

We believe our virtual archery game is very usable. However, there are some human factors that we need to take into consideration. For instance, the user holding the bow may stand too far from or too near to the IR camera, resulting in loss of signal. Also, reflective surfaces may cause multipath of the IR signal on its way to the IR camera. To prevent this, we need clear instructions advising users on the appropriate distance to stand from the IR camera. We should also advise users to clear the playing space so that no obstructions, hot objects, or reflective surfaces interfere with the IR signal.


Conclusions

Meeting expectations

We met our objective of creating a virtual archery game that is fun and not dangerous to play. The user interface is friendly: the player can just point the arrow at a menu item and shoot at it to select an option. Furthermore, the game provides a two-player mode where friends can compete with each other. Our only regret is not being able to implement the game in color. We would also have liked to use a larger TV so that the text and circles appear larger and are easier to see and read. On the other hand, a small black-and-white TV means that the player does not need to stand far away from the TV to aim at the target.

Intellectual property consideration

We have benefited from websites that documented the various parts of the Wiimote and how to extract the IR camera from it. We acknowledge the use of the following sites.

- A Guide on extracting the IR camera

- I2C interface

- Kako's website on Wii IR camera

- WiiBrew

- Johnny Lee’s Blog

We reused the I2C library found in last year’s 3D scanner project. When initializing the IR camera, we consulted code posted by open source developers. In addition, we also used some bitmaps from the Duckhunt video game project (Spring 2005). The ellipse drawing algorithm we used is described in John Kennedy's paper “A Fast Bresenham Type Algorithm for Drawing Ellipses”.

The IR camera is developed by PixArt for exclusive use in the Nintendo Wii, but we did not find any clause in the Wii agreement that disallows the use of its parts. In addition, this project is not for commercial purposes, so we do not foresee any intellectual property violations.

Ethical Consideration

From the IEEE Code of Ethics,

1. To accept responsibility in making engineering decisions consistent with the safety, health and welfare of the public, and to disclose promptly factors that might endanger the public or the environment.

When designing our game, we tried to eliminate any flickering or video artifacts on the screen, since flickering can potentially cause seizures in some individuals. Playing video games for long periods of time is not very healthy; however, our game is not addictive and requires players to move to shoot, so we are confident that our game does not harm the players or the environment in any way.

2. To avoid real or perceived conflicts of interest whenever possible, and to disclose them to affected parties when they do exist.

We avoided any possible conflict of interest there might be between us and Nintendo by making our game in black and white and acknowledging the use of the Wiimote’s IR camera and accelerometer.

3. To improve the understanding of technology, its appropriate application, and potential consequences.

This project helped us understand how to use a microcontroller to generate video and take in signals from peripherals such as accelerometer and IR camera to create an interactive video game.

4. To seek, accept, and offer honest criticism of technical work, to acknowledge and correct errors, and to credit properly the contributions of others. We accepted help from our TAs and Professor Bruce Land and we would like to thank them for their advice. We have also credited any designs or patents that are relevant to our project.

5. To be honest and realistic in stating claims or estimates based on available data. All our claims and expectations for the video game are honest and realistic.

Legal Consideration

Since our project is solely for personal use and not for profit, there are no legal considerations for our project.

Safety Consideration

Strong infrared radiation can be a health hazard to the eyes and the vision. However, the level of infrared radiation required in our game is similar to those used in remote controls. Hence, we do not perceive any safety risk to the user.


Appendix

Software Code

circle.c – main code for video generation

ircamera.c – code for reading data from IR camera

twimaster.c – functions for I2C communications

multASM.s – assembly language multiplication for 8:8 fixed point representation (written by Bruce Land)

video_codeGCC644.c – video generation functions (written by Bruce Land)

ircamera.h

video_codeGCC644.h

Hardware schematic

Cost details

| Item | Part no. | Vendor | Quantity | Cost ($) |
|---|---|---|---|---|
| ECE4760 PCB | - | ECE4760 Lab | 1 | 4.00 |
| Atmega644 | - | ECE4760 Lab | 1 | 8.00 |
| Power Supply | - | ECE4760 Lab | 1 | 5.00 |
| B/W TV | - | ECE4760 Lab | 1 | 5.00 |
| Small solder board | - | ECE4760 Lab | 1 | 1.00 |
| Max233 CPP | - | ECE4760 Lab | 1 | 7.00 |
| 3.3V Regulator | LP2950-33LPRE3 | ECE4760 Lab | 1 | FREE |
| Wiimote (IR camera, accelerometer) | ADXL330 (accelerometer) | Ebay.com | 1 | 2.50 |
| Hot Swappable 2-Wire Buffer | LTC4301LCMS8#PBF-ND | Linear Technology | 1 | SAMPLED |
| 25MHz Crystal | XC315-ND | Digikey | 1 | 1.88 |
| Bow & Arrow | - | Amazon.com | 1 | 11.00 |
| Alligator clips and jumpers | - | ECE4760 Lab | 8 | 4.00 |
| SOIC16 | - | ECE4760 Lab | 1 | 1.00 |
| Total | | | | 57.38 |

Task Division

| Task | Jun Hui | Lingyun |
|---|---|---|
| Building Protoboard | X | |
| Soldering | X | |
| Building Bow and Arrow | X | X |
| Video Code | X | X |
| Fast Ellipse Drawing | X | |
| Communication via I2C | X | X |
| ADC for accelerometer | X | |

References

Data sheets

Code/schematic borrowed from others

- I2C code and library from 3D Scanner project (Spring 2009)

- Wiimote IR camera initialization from kako

- Wiimote IR camera schematic from kako

- ECE 4760 Lab 3 for NTSC video streaming code

- Bitmaps from Duckhunt video game (Spring 2005)

Vendor Sites

Background sites

- I2C protocol

- WiiBrew

- Procrastineering – Working with PixArt camera directly

- Analysis of infrared ray sensor part for pointing of Wiimote control

- A Fast Bresenham Type Algorithm For Drawing Ellipses


Special Thanks

We would like to thank the following people/organizations:

- Linear Technology for the LTC4301L Hot Swappable 2-Wire Buffer

- 3D Scanner project for their I2C library

- Duckhunt video game project for their bitmaps

- The TAs for always having lab open and patiently answering our questions

- Bruce Land for teaching us microcontrollers and never failing to answer our questions