Introduction

"A skin color detection game implemented in a DE1-SoC FPGA board"

Color detection algorithms have become increasingly popular as technology advances, and they are of central interest in many applications. In an era where autonomous operation is increasingly desired, object detection has become crucial. In this project we designed a system, implemented entirely in hardware on a DE1-SoC FPGA board, that tracks the motion of human skin. We do this by processing pixel information from the decoded signal of an NTSC camera. Based on this, we designed a game titled "Catch Bruce if you Can", in which the player matches the position of a cursor, which follows the coordinates of the user's hand, with a randomly generated image of Professor Bruce. Successfully matching the position of the cursor with Prof. Bruce's image increments the score by one point. The game also displays randomly generated images of the members of the group (Alberto, Shaan and Godly); hitting any of those images with the cursor resets the score to zero. Our system provides a fairly accurate and reliable skin-detection algorithm and a fun game to play, with several levels of difficulty.

High Level Design

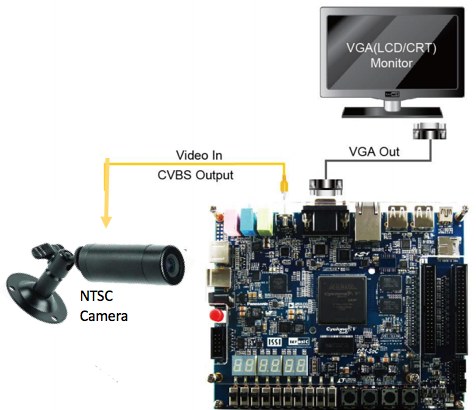

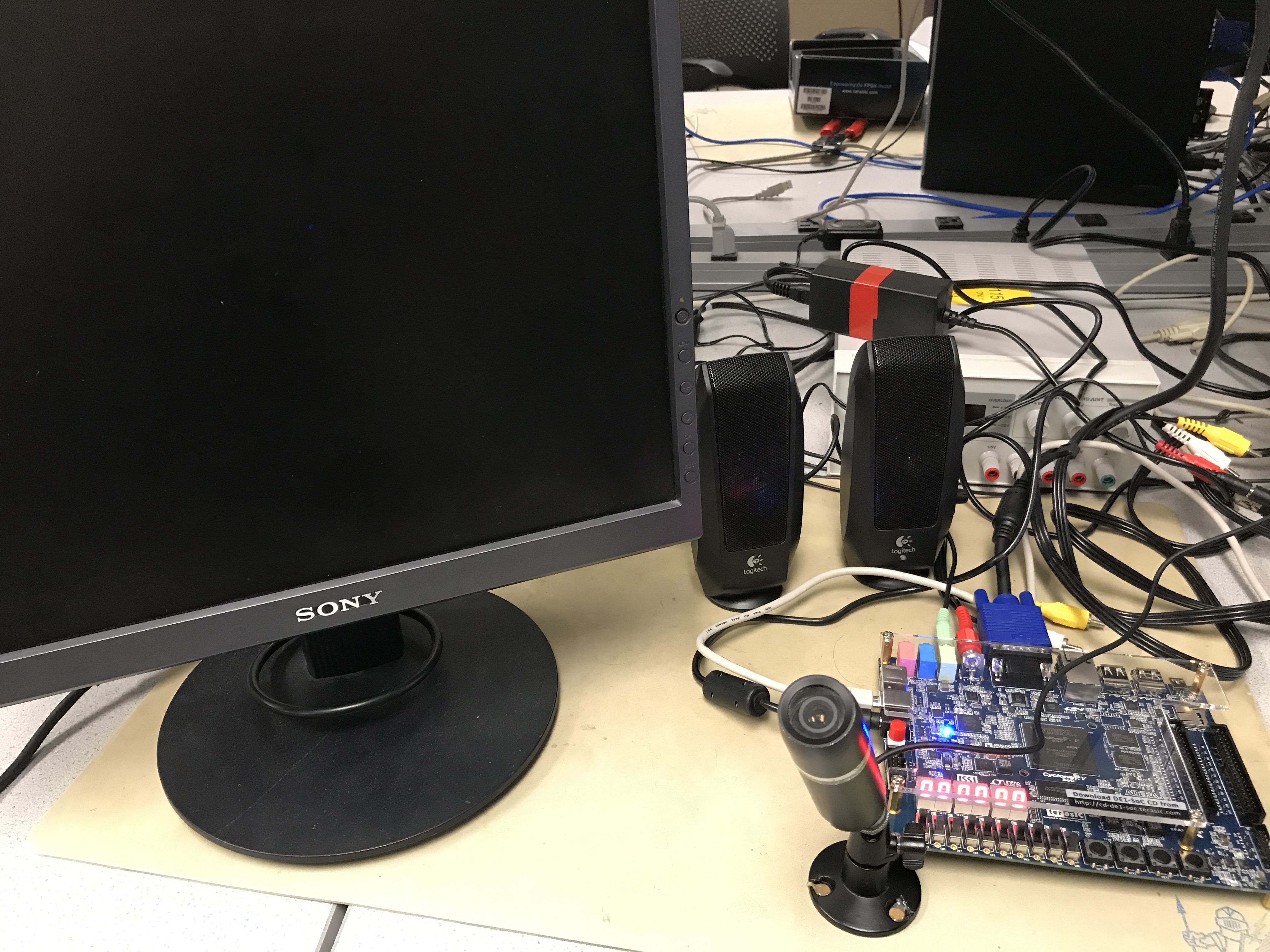

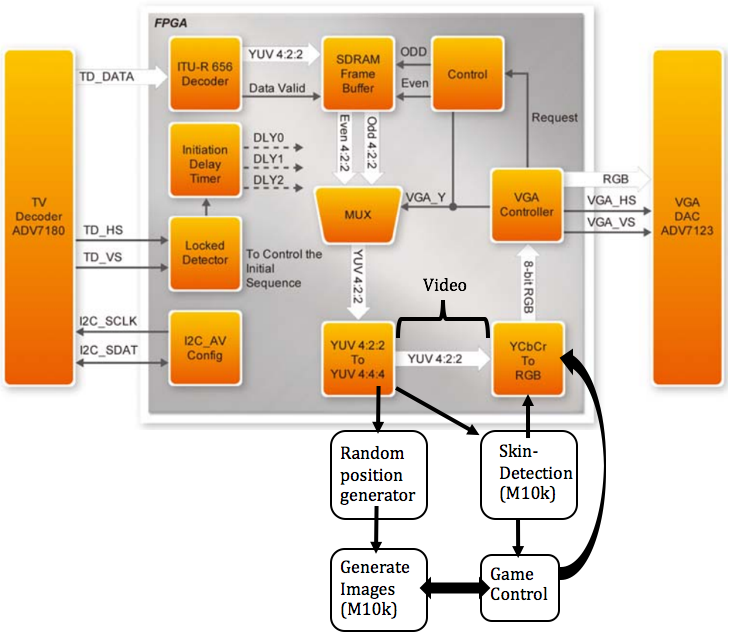

Our system is composed of a DE1-SoC FPGA board, an NTSC camera and a VGA display, as illustrated above. The NTSC camera records video, which is processed by the FPGA's internal video decoder. The resulting data is in YCrCb 4:2:2 (YUV 4:2:2) format and is sent to the SDRAM frame buffer. A VGA controller generates data requests and performs an odd/even signal selection on the SDRAM frame buffer. Further modules convert the data from YCrCb to RGB so that it can be displayed on the VGA screen. The basic hardware block diagram of the video system is shown as orange blocks in Figure 1 below. In addition to the video system, which is provided by Terasic in the DE1-SoC CD-ROM (rev.F Board), our design includes modules that implement the skin detection algorithm and the image and game generation. These modules take the output of the "YUV 4:2:2 To YUV 4:4:4" module as their input, as shown below.

Fig.1 Communication between the FPGA and TV decoder (orange blocks) and our added design (white blocks)

As can be seen in the figure above, the data obtained from the YUV 4:2:2 to 4:4:4 block is used in the implementation of our system. The output of this block provides the YCbCr color values needed for the pixel processing of the skin detection algorithm. We also generate images using M10k blocks and random generator modules, whose activity is controlled by the game control unit (a state machine). In addition, the game control unit takes information from the skin detection algorithm and sends commands/flags to other modules, such as the one in charge of printing the current score on the screen. All of these modules ultimately send data to the YCbCr to RGB module in order to display the necessary information on the VGA screen. Figure 1 above summarizes the whole system. Details on how these modules work are provided in the following sections.
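To make the role of the "YUV 4:2:2 To YUV 4:4:4" block more concrete, the following Python sketch models the conversion in software. It is only an illustration: it assumes the Cb, Y0, Cr, Y1 sample ordering of an ITU-R BT.656 stream and plain chroma replication, and the function name `yuv422_to_yuv444` is hypothetical rather than taken from the Terasic source.

```python
def yuv422_to_yuv444(stream):
    """Expand a flat 4:2:2 sample sequence (Cb, Y0, Cr, Y1, ...) into (Y, Cb, Cr) pixels.

    In 4:2:2 data, each pair of horizontally adjacent pixels shares one Cb and
    one Cr sample. This sketch repeats the shared chroma for both pixels
    (assumption: no interpolation between neighboring chroma samples).
    """
    if len(stream) % 4 != 0:
        raise ValueError("4:2:2 stream length must be a multiple of 4 samples")
    pixels = []
    for i in range(0, len(stream), 4):
        cb, y0, cr, y1 = stream[i:i + 4]
        pixels.append((y0, cb, cr))  # first pixel of the pair
        pixels.append((y1, cb, cr))  # second pixel reuses the same chroma
    return pixels
```

After this step every pixel carries its own Y, Cb and Cr values, which is the form the skin detection logic needs for per-pixel comparisons.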

Hardware

TV Decoder

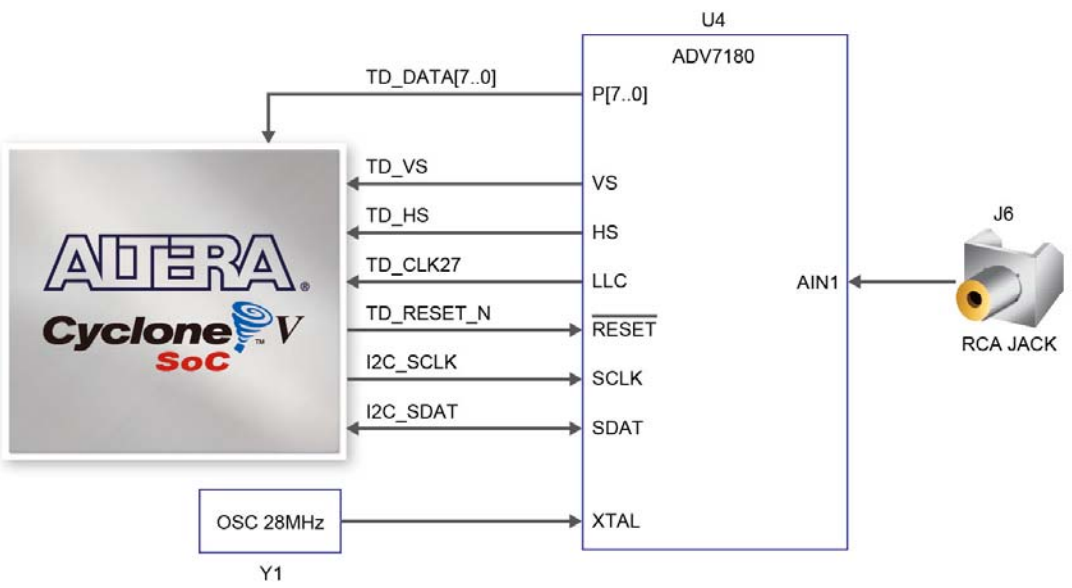

As mentioned earlier, the DE1-SoC board is equipped with a TV decoder chip (ADV7180 from Analog Devices). The ADV7180 automatically detects and converts a standard analog baseband television signal, which in our case is an NTSC (National Television System Committee) signal, into 4:2:2 component video data. The TV decoder is compatible with various types of video devices. On the DE1-SoC board, the registers in the TV decoder can be accessed by either the Cyclone V SoC FPGA or the Hard Processor System (HPS). This is done through the I2C communication protocol between the video decoder and the controlling device. For our project, the FPGA side of the board accesses the TV decoder and performs all the hardware processing. Figure 2 below illustrates the connections between the FPGA and the TV decoder. Please see the device datasheet here for more details.

Fig.2 Communication between the FPGA and TV decoder

VGA Controller

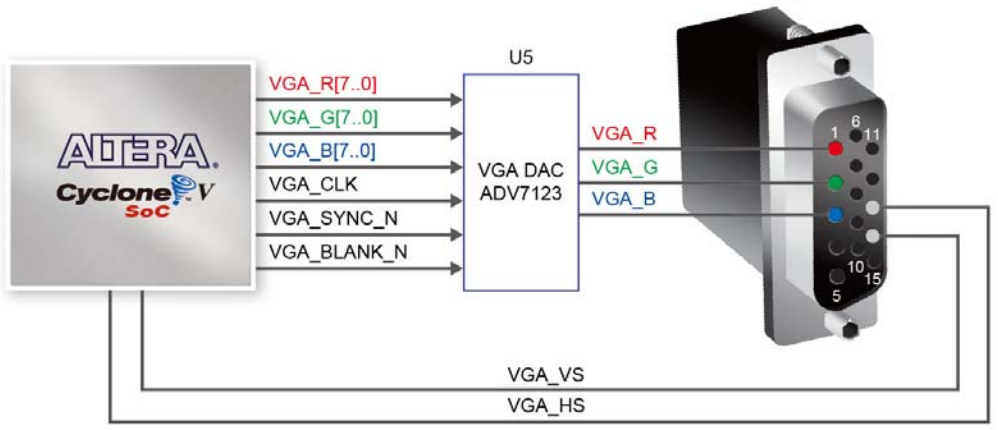

A VGA controller generates the synchronization signals and outputs data pixels serially to display the desired information on the VGA screen. A VGA port has five active signals: the horizontal and vertical synchronization signals, hsync and vsync, and three video signals for the red, green and blue beams, as shown below. It is physically connected to a 15-pin D-sub connector on the DE1-SoC board, which is populated for VGA output. The signals are generated directly by the Cyclone V SoC FPGA. A 10-bit digital-to-analog converter (ADV7123) converts the digital signals to analog to represent and display the fundamental colors (red, green and blue) on a VGA screen. Figure 3 illustrates the connections between the FPGA and the VGA module.

Fig.3 Signals/communication between the FPGA and VGA module

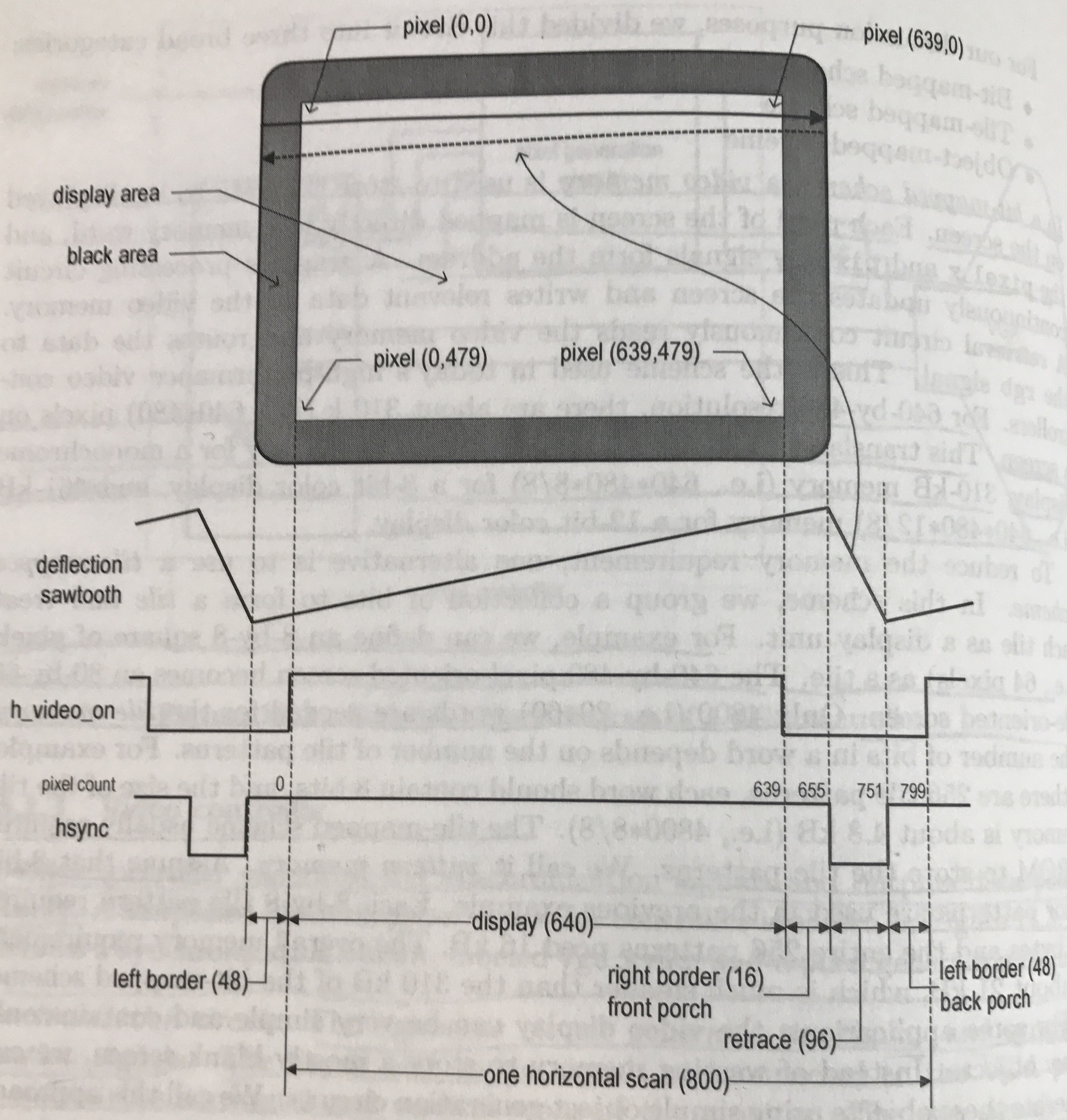

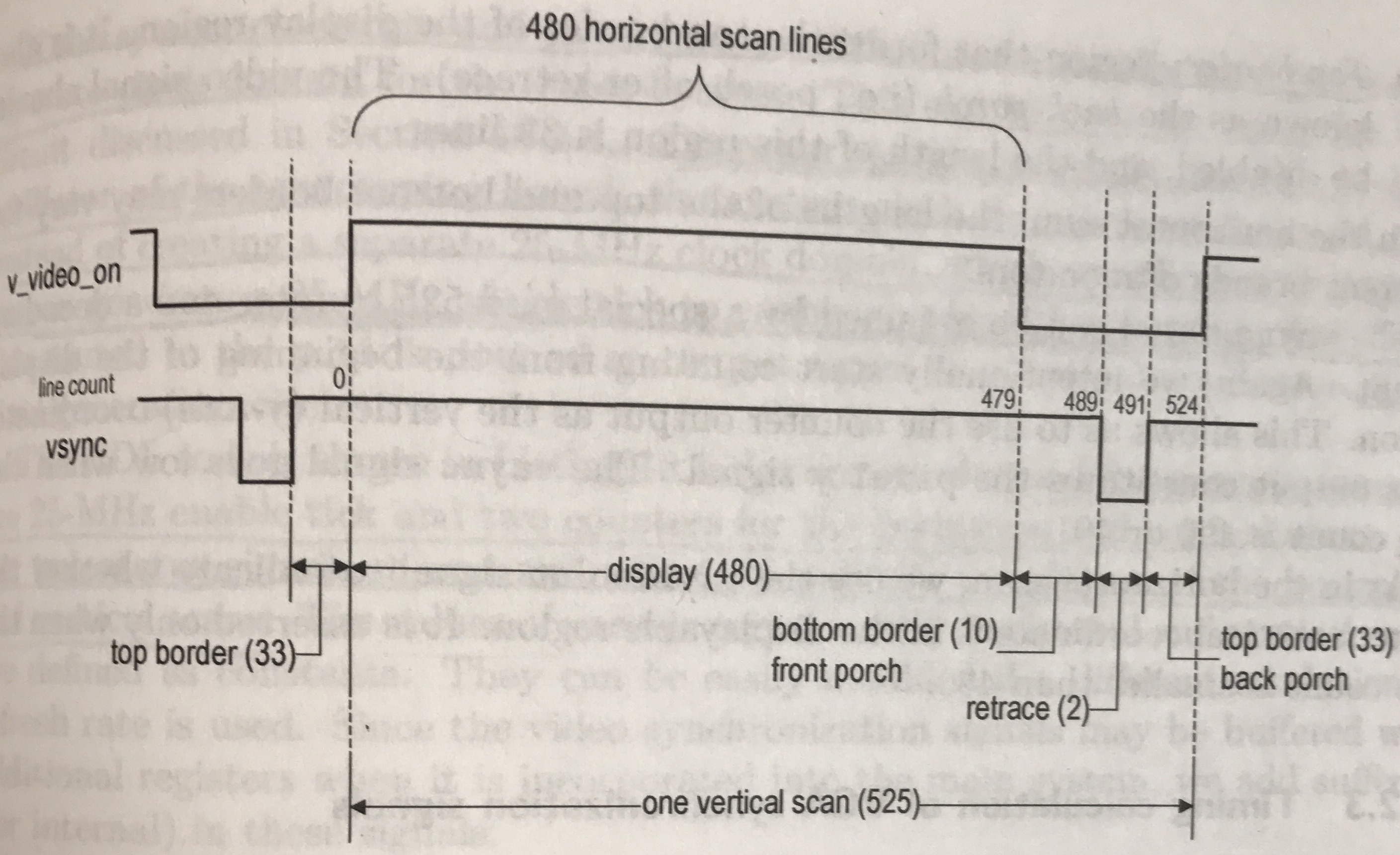

A vga_sync circuit generates the timing and synchronization signals. The vsync and hsync signals are connected to the VGA port to control the vertical and horizontal scans of the monitor. The two signals are decoded from internal counters, whose outputs are the relative positions of the scans and therefore specify the location of the current pixel. The hsync signal specifies the time required to traverse (scan) one row of the screen, as illustrated in Figure 4 below (left). With a 27-MHz clock (pixel rate), 27 million pixels are processed per second. During the vertical scan, the pixel cursor moves from top to bottom and then returns to the top; this corresponds to the time required to refresh the entire screen. The time unit of this movement is expressed in horizontal scan lines. A timing diagram for the horizontal and vertical scans is shown below.

Fig.4 Timing diagrams for horizontal (left) and vertical (right) scans

As can be seen from the figure above, the horizontal scan is counted in pixel clock cycles, whereas the vertical scan is counted in horizontal lines. Both have a front and a back porch, which represent the time when the hsync and vsync signals are ON but there is no video signal, that is, nothing is displayed on the screen. In addition, retrace only occurs when the scan signals are OFF; this is the time when the screen blanks to start a new line or a new frame. All of this is abstracted away in the TV system module provided by Terasic. However, a large portion of this project involved understanding how the TV and VGA controllers perform their particular tasks. This matters because we need to meet the timing requirements for each row displayed on the VGA monitor, as well as provide the correct data to be written to memory.
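The counter-based generation of hsync and vsync described above can be sketched in Python as follows. The porch and sync widths are the widely published 640x480 values and are an assumption here; the Terasic VGA controller driven by the 27-MHz clock may use different numbers, and the function names are hypothetical.

```python
# Assumed 640x480 timing, in pixel clocks (horizontal) and lines (vertical).
# These are NOT taken from the Terasic source.
H_VISIBLE, H_FRONT, H_SYNC, H_BACK = 640, 16, 96, 48
V_VISIBLE, V_FRONT, V_SYNC, V_BACK = 480, 10, 2, 33
H_TOTAL = H_VISIBLE + H_FRONT + H_SYNC + H_BACK  # clocks per line
V_TOTAL = V_VISIBLE + V_FRONT + V_SYNC + V_BACK  # lines per frame


def vga_signals(h_count, v_count):
    """Return (hsync, vsync, video_on) decoded from the two counters.

    The sync pulses are modeled as active low, so both signals stay high (ON)
    during the visible region and during the front and back porches.
    """
    hsync = not (H_VISIBLE + H_FRONT <= h_count < H_VISIBLE + H_FRONT + H_SYNC)
    vsync = not (V_VISIBLE + V_FRONT <= v_count < V_VISIBLE + V_FRONT + V_SYNC)
    video_on = h_count < H_VISIBLE and v_count < V_VISIBLE
    return hsync, vsync, video_on


def next_counts(h_count, v_count):
    """Advance by one pixel clock; the line counter ticks once per full line."""
    h_count += 1
    if h_count == H_TOTAL:
        h_count = 0
        v_count = (v_count + 1) % V_TOTAL
    return h_count, v_count


def refresh_rate_hz(pixel_clock_hz):
    """Frames per second implied by the assumed totals and a given pixel clock."""
    return pixel_clock_hz / (H_TOTAL * V_TOTAL)
```

The sketch also shows why the two scans use different time units: the horizontal counter advances on every pixel clock, while the vertical counter advances only when a whole line has been scanned.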

Memory

The DE1-SoC board features a 64MB SDRAM chip, which is connected to the FPGA through a 16-bit data bus together with control and address lines. As mentioned earlier, the SDRAM is used to buffer the digital video data, from which the VGA controller generates data requests and performs an odd/even signal selection. Figure 5 below illustrates the connections between the FPGA and the SDRAM module, as well as the necessary signals.

In addition, in order to increase the speed of color detection, M10k blocks were used, which run in parallel and are selected through a mux based on a select value. M10k blocks were also needed to hold the contents of the different memory initialization (mif) files for the game. The details are covered in the Design Implementation section.

Fig.5 Signals and connections between FPGA and SDRAM module

Design Implementation

We started off our design with the TV project provided by Terasic. The project can be found in the DE1-SoC CD-ROM (rev.F Board), which can be downloaded here. The file provides the baseline for video recording from an NTSC camera. The project uses the SDRAM to buffer video data and provides the VGA controller module necessary to display video on a VGA screen. As part of this project, it was important to learn how video is processed and later displayed on the screen. As mentioned earlier, our hardware setup consists of an NTSC camera connected to the video input on the FPGA board (yellow composite video jack). Our hardware design does video and pixel processing for color detection as well as for displaying video on the VGA screen. State machines were created to perform the algorithm for color detection and to keep track of the score and overall functionality of the game. The following subsections explain the hardware design and implementation of our system.

Skin Detection

Being able to detect skin color is a crucial part of our design. For this, we implemented a detection algorithm based on YCbCr values. It is important to note that YCbCr skin-color ranges vary from person to person; our design therefore works with one specified YCbCr range for skin color. Further adaptations of the design could extend it to work with any skin color.

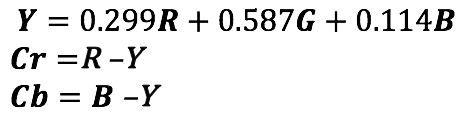

YCbCr is a family of color spaces used as a part of the color image pipeline in video and digital photography systems. Color is represented by luma (which is luminance or brightness, computed from nonlinear RGB, constructed as a weighted sum of the RGB values), and two color difference values Cr and Cb that are formed by subtracting luma from RGB red and blue components, respectively. The following sets of equations show the relationship between RGB and YCrCb color schemes:
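For reference, one widely used form of this relationship is the full-range 8-bit ITU-R BT.601 definition given here. It is stated as an assumption for illustration: video hardware usually works with a scaled studio-range variant (Y in 16 to 235, Cb and Cr in 16 to 240), and the exact coefficients used by the Terasic modules are not stated in this report.

$$
\begin{aligned}
Y' &= 0.299\,R' + 0.587\,G' + 0.114\,B' \\
C_b &= 128 + 0.564\,(B' - Y') \\
C_r &= 128 + 0.713\,(R' - Y')
\end{aligned}
$$

Here $R'$, $G'$ and $B'$ are the nonlinear (gamma-corrected) RGB components in the range 0 to 255. The two chroma terms are the scaled differences between the blue and red components and the luma, which is what makes the luma and chroma information separable.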

The explicit separation of luminance and chrominance components makes this color space attractive for skin color modelling, which is why we selected it for our design. By changing the luma limits in our algorithm, we found experimentally that the luma value does not play an important role in skin detection. The Cr and Cb components, however, were crucial for detecting skin color effectively. Through experiments we adjusted these values to the color spectrum we wanted to detect. The parameters were tuned for Shaan's skin, and the range was narrowed as much as possible to limit the spectrum of detected colors, which is why the system cannot detect other skin colors.

Starting from the code provided by Terasic for video capture on the DE1-SoC board, it was fairly straightforward to process YCbCr values. As shown in Figure 1 above, the ITU 656 module decodes the input video signal into YCbCr values, which are then passed to the SDRAM buffer and later to the "YUV 4:2:2 to 4:4:4" module. The outputs of this module are the individual Y, Cb and Cr values, which are used for color detection.

In order to increase computation speed and improve the real-time performance of our design, we parallelized the system by dividing the 640x480 screen into blocks of size 80 by 60; thus, the screen is divided into an 8 by 8 array of blocks. This scheme was borrowed and adapted from the Fruit Ninja project designed by Yuan Cui and his teammates. Each block of the 8x8 array is passed through a thresholding algorithm, which processes pixel values to determine the possible existence of skin color. The thresholding takes each pixel as an input and compares its Cb and Cr values with the predefined range for skin color. If the pixel value falls within the specified range, a flag is raised, indicating that skin color has been detected. This flag is then used to draw the position of the hand in the form of a cursor on the VGA screen.
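The per-pixel thresholding and the block partitioning described above can be sketched in Python as follows. The chroma limits below are placeholder values chosen for illustration, since the report does not list the tuned range, and the names `is_skin` and `block_index` are hypothetical.

```python
# Hypothetical chroma window; the tuned limits used in the design are not
# given in the report.
CB_MIN, CB_MAX = 77, 127
CR_MIN, CR_MAX = 133, 173

BLOCK_W, BLOCK_H = 80, 60  # a 640x480 screen gives an 8x8 array of blocks


def is_skin(cb, cr):
    """Raise the skin flag when both chroma values fall inside the window.

    Luma (Y) is deliberately not an input, reflecting the finding that it had
    little influence on detection.
    """
    return CB_MIN <= cb <= CB_MAX and CR_MIN <= cr <= CR_MAX


def block_index(x, y):
    """Map a pixel coordinate to its (column, row) in the 8x8 block array."""
    return x // BLOCK_W, y // BLOCK_H
```

In hardware the same test reduces to four comparators and an AND gate per pixel, which is why it can keep up with the pixel clock.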

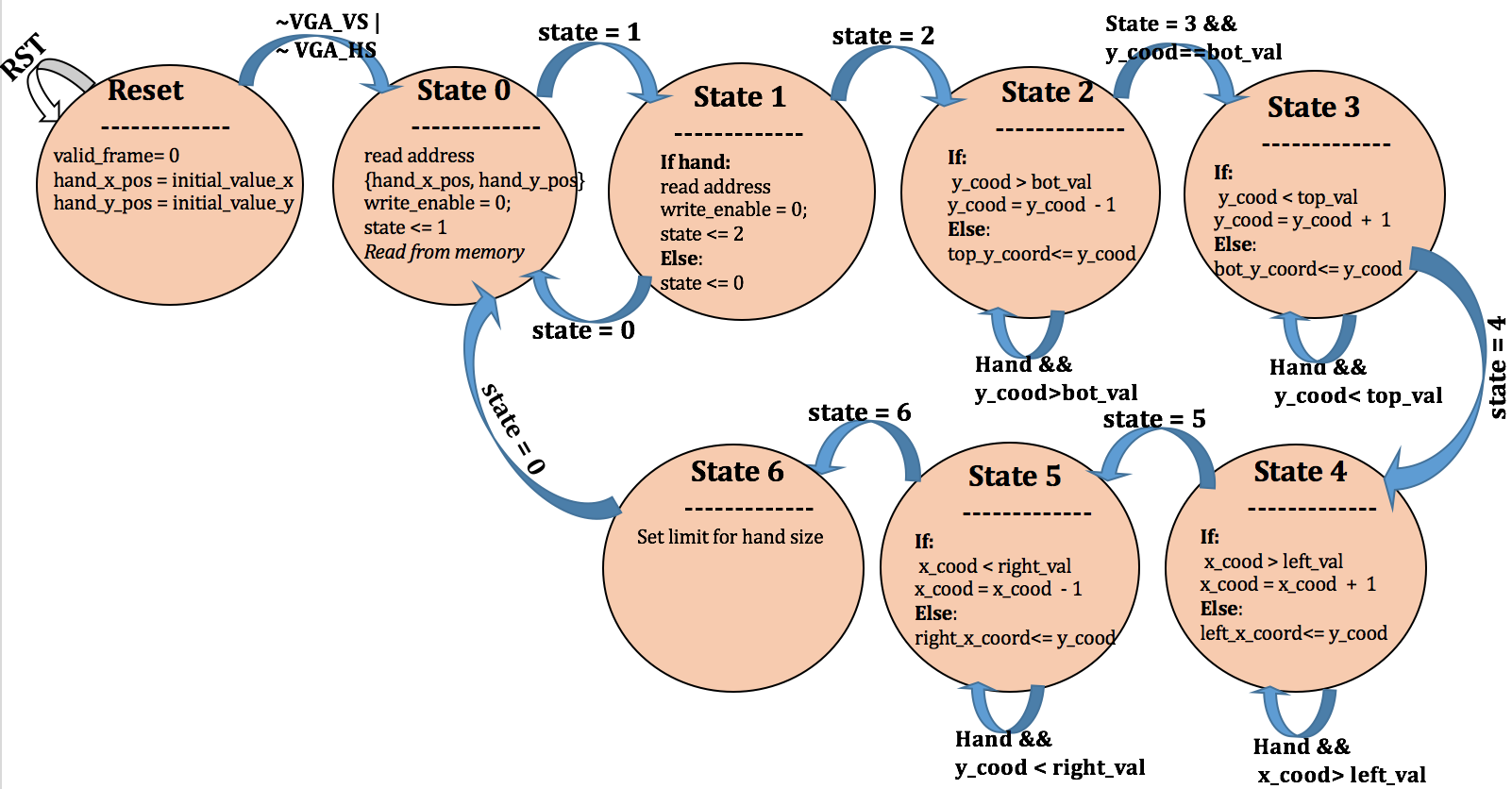

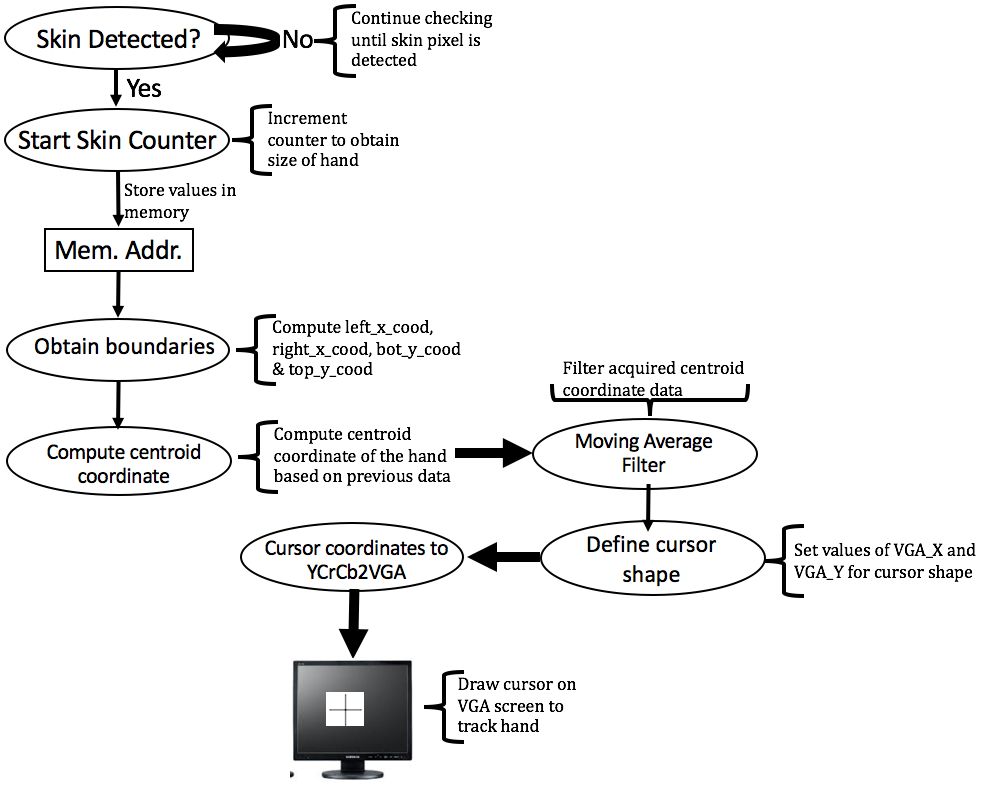

In order to mimic the position of the hand with respect to the camera on the VGA screen, a cursor representing the centroid of the hand is drawn in real time. First, the detection algorithm passes the thresholded value to a counter that starts incrementing as soon as a skin value is received. After a specified number of counts, each one corresponding to a skin color value, the x and y positions of the detected skin pixel block (essentially the extent of the hand) are written to memory (M10k blocks). This occurs after the completion of every frame, that is, when the last pixel of a frame has been reached. Then, a state machine reads the available data from memory to map the hand position to the cursor on the screen. Figure 6 below shows the state machine used for performing this operation.
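One possible reading of the counting step is sketched below in Python: skin flags are counted per block, and a block is recorded only once its count reaches a minimum. The threshold `min_count`, the data layout and the function name are assumptions made for illustration, not details from the design.

```python
def mark_skin_blocks(skin_mask, block_w=80, block_h=60, min_count=200):
    """Return the set of (column, row) blocks containing enough skin pixels.

    skin_mask is a list of rows, each a list of booleans (one per pixel).
    min_count is a hypothetical threshold that rejects isolated noisy pixels.
    """
    counts = {}
    for y, row in enumerate(skin_mask):
        for x, flag in enumerate(row):
            if flag:
                key = (x // block_w, y // block_h)
                counts[key] = counts.get(key, 0) + 1
    return {key for key, count in counts.items() if count >= min_count}
```

Requiring a minimum count before writing to memory filters out single false positives, at the cost of ignoring hands that cover only a small part of a block.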

Fig.6 Skin detection state machine

In the above state machine we constantly read from memory to extract the addresses of the blocks containing skin information. We first check the addresses in state 0 of the FSM, from which it transitions to state 1. In this state, we check whether a skin pixel is detected and store the x and y positions in a counter. In state 2, we look for the top boundary (smallest y value) of the hand block by decrementing the y value until skin is no longer detected or the y coordinate reaches the lowest possible value (y = 0). This value is stored in a variable (top_y_cood), which is used in state 6 to compute the y coordinate of the hand centroid. The state machine then transitions to state 3, where a counter variable increments for as long as there are skin pixels stored in memory at the current position. When a predefined bottom boundary has been reached, the FSM stores that value in the bot_y_cood variable. The same procedure takes place in states 4 and 5, this time to determine the left and right boundaries of the hand block; these are stored in the right_x_cood and left_x_coord variables for the right and left boundaries respectively. In state 6, the four boundary values are used to compute the centroid of the hand block, and the cursor is printed at that position.

In order to track the motion of the hand accurately with the cursor, a moving average filter of 10 samples was implemented; this smooths out short-term fluctuations in the input data from the hand and improves the overall performance of the game. The output of the filter provides filtered x and y coordinates of the centroid of the hand; these values are then passed to the YCbCr2RGB block, which prints the cursor on the VGA screen. The following block diagram shows the data flow of the skin detection and hand centroid generation.

Fig.7 Skin detection and hand centroid generation on VGA screen
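The boundary search and the 10-sample moving average can be illustrated with a short Python sketch. It is a simplification under stated assumptions: it takes the extremes over all recorded positions instead of walking outward from a starting position as the FSM does, it takes the centroid to be the midpoint of the four boundaries, and the names `hand_centroid` and `MovingAverage` are hypothetical.

```python
from collections import deque


def hand_centroid(skin_positions):
    """Return the midpoint of the box bounding all positions, or None if empty.

    The four extremes play the roles of left_x_coord, right_x_cood,
    top_y_cood and bot_y_cood in the state machine.
    """
    positions = list(skin_positions)
    if not positions:
        return None
    xs = [x for x, _ in positions]
    ys = [y for _, y in positions]
    left, right = min(xs), max(xs)
    top, bottom = min(ys), max(ys)
    return (left + right) // 2, (top + bottom) // 2


class MovingAverage:
    """Integer moving average over the most recent `size` samples."""

    def __init__(self, size=10):
        self.samples = deque(maxlen=size)  # the oldest sample drops out automatically

    def update(self, value):
        self.samples.append(value)
        return sum(self.samples) // len(self.samples)
```

One filter instance per coordinate would be used, for example `x_filter = MovingAverage()` and `y_filter = MovingAverage()`, each updated once per frame with the new centroid coordinate. A longer window gives a steadier cursor but makes it lag further behind fast hand movements.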

Memory: SDRAM and M10k Blocks

As mentioned earlier, the SDRAM on the DE1-SoC board was used for buffering the video input data received from the NTSC camera, from which the VGA controller generates data requests and performs an odd/even signal selection to eventually display the video signal on the VGA screen. Doing this allowed us to process data and perform pixel thresholding for the skin detection algorithm.

In addition, a separate memory type was used for storing color pixel information before displaying it on the screen. The Cyclone V FPGA on the DE1-SoC board contains dedicated memory resources called M10K blocks. Each M10K block contains memory bits that can be configured to implement memories of various sizes.

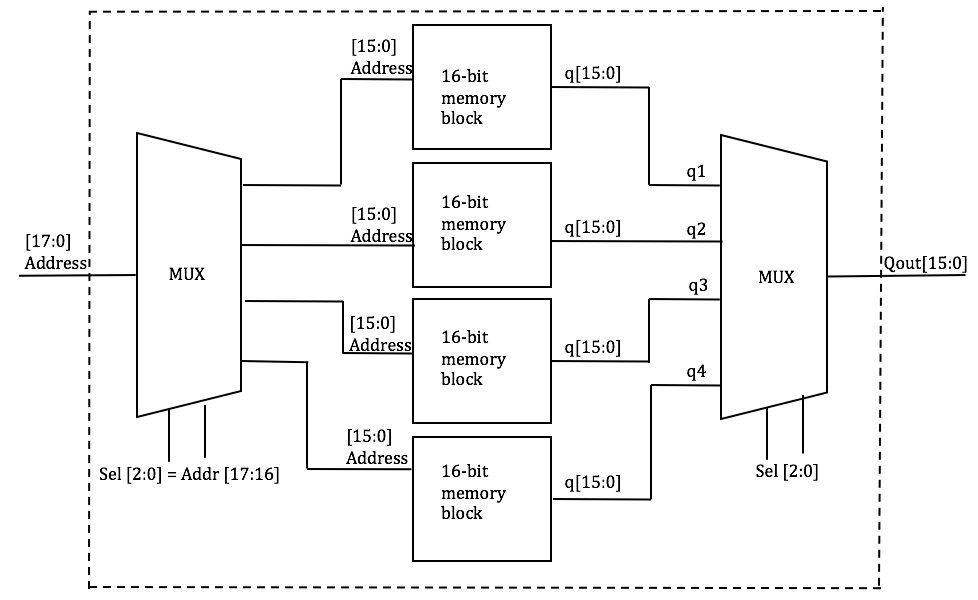

In particular, M10k memory was used for implementing the skin detection algorithm. A memory with an 18-bit address was used for storing the skin color information. To implement this 18-bit address space, we combined four memory blocks with 16-bit addresses, generated using the IP wizard, through a multiplexer; this allowed faster overall processing of pixel information. Figure 8 below illustrates how the memory was partitioned into four 16-bit-address blocks to parallelize our system.

Fig.8 M10k blocks design

As can be seen in the figure above, an 18-bit memory address is split across four 16-bit-address memory blocks, which are selected by multiplexers with 2-bit select lines. The select lines for the muxes are the 2 MSBs of the address; the remaining 16 bits form the address within the selected block. The output of the whole memory is driven by a second mux, which passes the output of one of the four blocks depending on the select lines.
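The address split described above can be modeled in a few lines of Python. This is a behavioral sketch only; the class name `BankedMemory` and the sparse dictionary storage are illustrative choices, not part of the hardware design.

```python
class BankedMemory:
    """Four banks with 16-bit addresses, selected by the 2 MSBs of an 18-bit address."""

    def __init__(self):
        # One sparse dictionary per bank stands in for a hardware memory block.
        self.banks = [dict() for _ in range(4)]

    @staticmethod
    def split(address):
        """Return (bank select, address within the bank) for an 18-bit address."""
        if not 0 <= address < (1 << 18):
            raise ValueError("address must fit in 18 bits")
        return address >> 16, address & 0xFFFF

    def write(self, address, value):
        bank, offset = self.split(address)
        self.banks[bank][offset] = value

    def read(self, address):
        # Models the output mux: only the selected bank drives the result.
        bank, offset = self.split(address)
        return self.banks[bank].get(offset, 0)
```

For example, address `0x2ABCD` selects bank 2 and location `0xABCD` within it.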

Game Generation

As mentioned earlier, the game consists of randomly generated images that appear from the bottom of the screen and follow a trajectory across it. A pseudorandom number generator was written in hardware to produce the random-like sequence. The generator was implemented by right shifting a seed at every cycle of a pre-defined clock, which is much slower than the system clock (27MHz). Each image has its own random generator function, which determines the position at which the image enters the screen. The lifetime of the generated images (the time they are visible on the screen) is controlled by a counter that increments at every clock cycle. Thus, we can change the difficulty level of the game simply by reducing the limit of this counter. While an image is displayed, a state machine continuously checks for any pixel overlap between the cursor and the image. Upon a match, a flag is triggered to generate the second/funny image at the same position where the match occurred. As can be seen in the Results section of the report, the image swap occurs instantaneously as soon as an image pixel overlaps a cursor pixel. A flag is also used to send a signal to the module in charge of displaying the score on the screen. We write the score on the screen by first setting the coordinates of the number to be printed and then writing directly to the YCbCr2RGB module with the specified color to be displayed.
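A common way to build a right-shifting pseudorandom generator in hardware is a linear feedback shift register (LFSR), sketched below in Python. The report only states that a seed is right shifted; the 16-bit width, the feedback taps and the mapping to a screen position are all assumptions made for illustration.

```python
def lfsr16_step(state):
    """Advance a 16-bit Fibonacci LFSR by one right shift.

    Assumed taps: 16, 14, 13, 11 (polynomial x^16 + x^14 + x^13 + x^11 + 1),
    which cycles through every nonzero 16-bit state before repeating.
    """
    feedback = (state ^ (state >> 2) ^ (state >> 3) ^ (state >> 5)) & 1
    return (state >> 1) | (feedback << 15)


def spawn_column(state, screen_width=640):
    """Map the register contents to a horizontal start position (hypothetical)."""
    return state % screen_width
```

The seed must be nonzero, because an all-zero register never changes. Giving each image its own seed yields a different spawn sequence per image while reusing the same logic.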

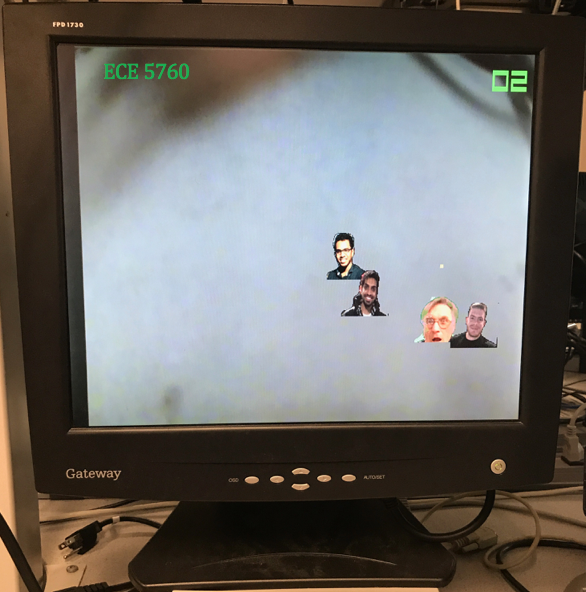

Memory Initialization File

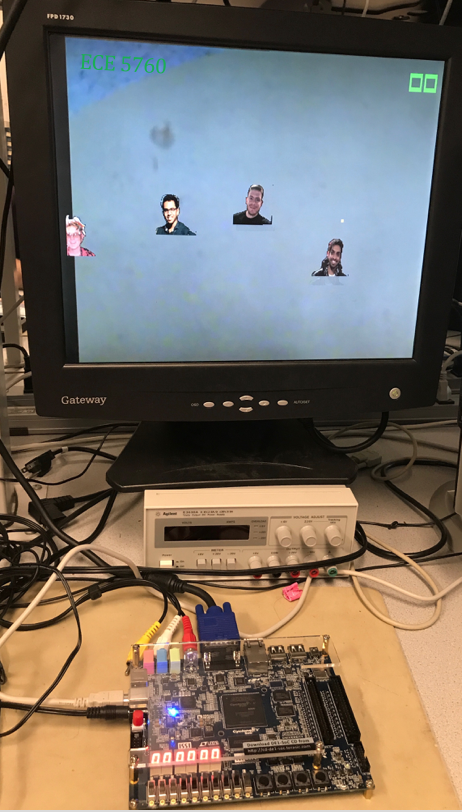

In order to display the game images on the VGA screen, we used Memory Initialization Files (MIF files), which were generated using a MATLAB script. The MATLAB script converted JPEG images into 16-bit color images. These MIF files were then loaded onto M10k blocks of 4096 16-bit words of memory. We generated eight M10k blocks for the eight images used. Figure 9 below illustrates the M10k block setup (top) and the generated images on the VGA screen (bottom). We made the background of the images white in MATLAB, and when drawing on the VGA screen we applied a condition: if a pure white pixel is detected, the camera input is sent to the VGA instead of the image pixel, which effectively makes the background transparent.

Fig.9 Generated image files displayed on the VGA screen
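The conversion to 16-bit words, the MIF text layout and the white-as-transparent rule can be sketched in Python as follows. The original script was written in MATLAB and is not reproduced here; the RGB565 packing and all function names below are assumptions made for illustration.

```python
TRANSPARENT = 0xFFFF  # pure white under the assumed RGB565 packing


def rgb888_to_rgb565(r, g, b):
    """Pack 8-bit R, G, B into one 16-bit word (assumed 5-6-5 bit layout)."""
    return ((r >> 3) << 11) | ((g >> 2) << 5) | (b >> 3)


def make_mif(words, width=16):
    """Render a list of integer words as the text of a memory initialization file."""
    digits = (width + 3) // 4  # hex digits needed per word
    lines = [
        f"WIDTH={width};",
        f"DEPTH={len(words)};",
        "ADDRESS_RADIX=HEX;",
        "DATA_RADIX=HEX;",
        "CONTENT BEGIN",
    ]
    for address, word in enumerate(words):
        lines.append(f"    {address:X} : {word:0{digits}X};")
    lines.append("END;")
    return "\n".join(lines) + "\n"


def select_pixel(image_word, camera_word):
    """Show the camera pixel wherever the stored image pixel is pure white."""
    return camera_word if image_word == TRANSPARENT else image_word
```

A memory of 4096 words would hold, for example, a 64 by 64 pixel image at one word per pixel; that image size is an inference from the memory depth, not a figure from the report.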

Score Increment

As mentioned earlier, the score increments by one upon a successful match of a cursor pixel and a pixel of Prof. Bruce's image. However, if a cursor pixel matches a pixel of any other image, the score is reset to zero and the user has to start over to regain the points. As mentioned above, we write the score on the screen by first setting the coordinates of the number to be printed and then writing directly to the YCbCr2RGB module with the specified color to be displayed. This was a little tedious, because for the number to be visible we need to pass a set of ranges for x and y so that the lines making up the digits are thick enough to be seen from a distance.

In addition, in order to make the game more interesting, two switches were programmed to control the level of difficulty of the game. The combination of SW6 and SW7 provides 4 levels of difficulty, the last combination (11) being the hardest level, or as we call it, the "God" level.
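The scoring rule and the switch-controlled difficulty can be summarized in a small Python sketch. The lifetime values, the choice of SW7 as the more significant bit and the function names are hypothetical; the report only states that the two switches select one of four levels and that level 11 is the hardest.

```python
# Hypothetical image lifetimes (in frames) for levels 00, 01, 10 and 11.
# Shorter lifetimes leave less time to reach the target.
LIFETIME_BY_LEVEL = (240, 180, 120, 60)


def update_score(score, hit):
    """Apply the scoring rule; hit is "bruce", "member" or None."""
    if hit == "bruce":
        return score + 1  # matching Prof. Bruce's image earns a point
    if hit == "member":
        return 0  # hitting a group member's image resets the score
    return score


def difficulty_level(sw7, sw6):
    """Combine the two switches into a level from 0 to 3 (assumes SW7 is the MSB)."""
    return (int(bool(sw7)) << 1) | int(bool(sw6))


def image_lifetime(sw7, sw6):
    """Look up how long an image stays on screen at the selected level."""
    return LIFETIME_BY_LEVEL[difficulty_level(sw7, sw6)]
```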

Also, because the cursor is purposely displayed rather small on the screen (a small square of very few pixels), it needs to travel further to hit the target and score a point. This makes the game more difficult, because the other randomly generated images get in the way. In this way we made the game more challenging while keeping it playable and enjoyable.

Results

Our final product is a system capable of processing video input from an NTSC camera, detecting skin color, and displaying a cursor on the screen that mimics the motion of the hand. The system operates effectively in real time, keeping up with the speed of the TV decoder, the pixel processing and the conversion to RGB. The cursor follows the motion of the hand quite accurately, despite the video noise from the background. The final results are shown in the following section.

Project Demonstration

Shown below is a demonstration of our project. As can be seen, our system detects skin color within a specified color spectrum, and the cursor follows the motion of the user's hand quite accurately.

Video: Final product demonstration

Issues Faced and Future Work

One of the main issues we faced during the development of our design was the response of the cursor to the motion of the hand. After implementing the skin detection algorithm, we noticed that the cursor responded very slowly to changes in hand motion. We mapped the output of the skin detection module to one of the LEDs on the FPGA board to see whether skin was being detected as designed. As expected, the LED became brighter when skin color was detected and got dimmer as the distance of the hand from the camera increased. With this we made certain that the detection part was not the problem. After some testing we came to the conclusion that the system did not have enough memory to drive such a large, real-time computation, which was the reason behind the very slow response of the cursor. Because of this, it was necessary to parallelize the system using M10k blocks, which is the technique employed in our final design.

Another issue we faced was background noise in the video input signal. In the first stages of the design, the system was detecting not only skin but also other rather dark colors. To overcome this issue we narrowed the color spectrum to detect only Shaan's skin, which is a brownish color. This definitely improved the detection and made the game easier to play, as the cursor tracked the motion of the hand better. However, it is worth mentioning that our design is susceptible to lighting. As we saw experimentally, isolating the camera from artificial lighting (fluorescent light, for example) improves the detection as well as the response of the cursor to changes in hand motion. A future step for this project could be to implement a filtering algorithm to remove unwanted signals, such as ambient or fluorescent light. Another improvement could be to integrate sound via the 24-bit audio codec available on the DE1-SoC board. Different sounds could be produced based on the stage of the game. For example, a background song could be played while the game runs at one level of difficulty, with different songs for the other levels. This would be a nice feature to add, as it uses another piece of hardware available on the board.

Overall, despite the issues we faced, we were able to significantly reduce the sources of error that might have impacted our design. In fact, as can be seen in the video above, the game is quite entertaining and user friendly. As far as skin detection is concerned, our system accurately detects user-specified skin colors by significantly narrowing the spectrum to the desired color. We are happy with the results and with the fact that we were able to remove most limitations.

Conclusions

The design and implementation of our system met the specifications we had set. We were able to develop a game based on color detection, implemented entirely on the hardware side of the DE1-SoC FPGA board. The system is not perfect, due to perturbations from the surroundings, but it is sufficient for demonstrating and proving the concept of object detection and tracking on an FPGA board. In fact, our project can serve as the starting point for a more robust system where object detection is necessary. Overall, we are happy with the results we obtained and with the topics we learned during the design and implementation of the project, specifically video and VGA control.

Intellectual Property Considerations

We appreciate the sample code on video processing provided by Terasic for the DE1-SoC boards (DE1-SoC CD-ROM (rev.F Board)). We read the sample code thoroughly and made sure we understood it before moving on to our actual project implementation. We also appreciate the examples set by the Fruit Ninja and Pseudo Touch Screen Game final projects, developed in 2014 and 2016 respectively. Although we implemented a different system and worked with a different FPGA board, their reports were among the first things we read while researching the topic, especially object detection on FPGAs.

This website was created by modifying the one created by Alberto, Shaan, and Boling for their ECE 4760 final project (two of the authors, Alberto and Shaan are members of this team).

We have acknowledged all use of code and have abided by all copyright statements on both code and hardware. We have also given credit to all hardware devices that were used in this project.

Appendices

A. Project Inclusion

The group approves this report for inclusion on the course website. The group also approves the video for inclusion on the course youtube channel.

B. Program Listing

Files for the project can be seen here.

C. Division of Labor

The work done for this project was evenly spread among team members. The contribution of all members was crucial for testing and optimizing the system. The development of this project is the result of the collaboration of all members of the group who spent many hours working on this fascinating project.

References

This section provides links to external reference documents, code, and websites used throughout the project.

References

Acknowledgements

We would like to give a special thanks to our professor Dr. Bruce Land for all the recommendations, support and guidance that he provided to us throughout our work on this project. Prof. Land was of valuable help in getting this project to its completion, as well as in understanding the material for this class. We would like to also thank him for building an amazingly rewarding class from which we learned tremendously. Also, we would like to thank the TAs, especially Mohammad, for the continuous support and guidance throughout the semester.