An audio controlled game where the player controls a spaceship that is avoiding oncoming meteors.

Introduction

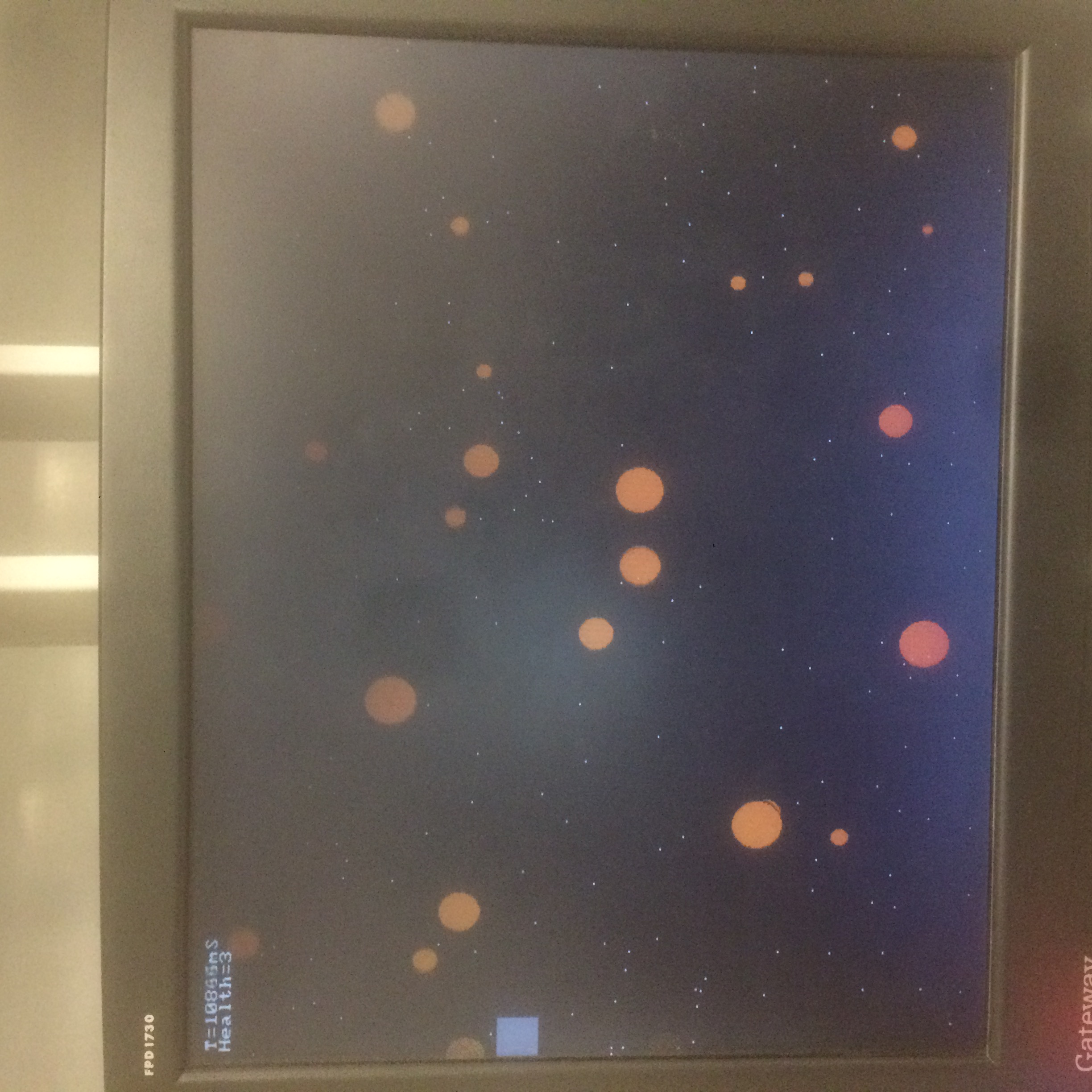

For our 5760 final project, we created a simple video game that uses a player’s voice as the control mechanism. The setting of the game is that you control a spaceship that is flying through space and there are meteors flying towards you that have to be avoided. The player moves the spaceship up and down depending on how loud the player speaks into the microphone and tries to avoid the meteors.

High Level Design top

Rationale

We chose this project because it seemed like one that could take advantage of the various features of the DE1-SoC board and also produce a fun and interactive end product. In particular, the audio processing component of the project could be efficiently done on the FPGA hardware, while the game logic would be well-suited to running on the ARM core. Additionally, communications between the HPS, FPGA, VGA monitor, and microphone could be easily managed through the Qsys interface.

Background Math

In order to differentiate between different words, we need to analyze the player’s voice and find key differences between words. A common approach to this task is to find the mel-frequency cepstrum, composed of mel-frequency cepstrum coefficients (MFCCs), of audio samples, and then compare them to determine what word was spoken. In order to do this, we first take the Fourier transform of the audio signal, then we map the powers on the frequency spectrum to powers on the mel spectrum, and finally we take the discrete cosine transform (DCT) of the mel powers to obtain the MFCCs. We speak about these steps in more detail below.

FFT

A fast Fourier transform (FFT) is an algorithm to compute the discrete Fourier transform (DFT) of a discrete signal in lower time complexity than directly computing the transform from the canonical formula for the for the DFT. The resulting values from the FFT represent the frequency information of the input signal in the form of the power of the signal at different frequencies. Since we are sampling audio at 48kHz, we chose to perform a 128-point FFT, but on averaged audio sample blocks, where we divide the incoming audio samples into blocks of 4, and then average each block. As a result, each FFT is performed on slightly over 10ms of audio samples, which should be enough to capture the fundamental frequency of the human voice, which is approximately 100 Hz.

MEL

The mel scale is a mapping between the actual frequency (in Hertz) of a sound and its value in mels. The motivation for the mel scale is that it more closely represents how the human ear perceives difference in pitches. In order to map the powers of an FFT to mel powers, triangular overlapping windows are used. The windows are found by first dividing the sampled frequency range into equally spaced segments on the mel scale. Then, a triangular window is created, centered at each frequency except the first and last, with the ends of the window at previous and following frequency. Since the frequencies are equally spaced on the mel scale, they are not equally spaced on the frequency scale, so the resulting triangular windows are not symmetric in shape. Each mel powers can then found by multiplying its corresponding window value with each FFT power. In practice, it is common to use approximately 12-13 mel powers in the range of human-generated sound frequencies (commonly cited as ending at 6 kHz or 8 kHz). Therefore, we chose to use 12 mel powers between 50 Hz and 8 kHz, resulting in the window frequencies (in Hz): {50, 205.61, 393.51, 620.4, 894.36, 1225.17, 1624.61, 2106.93, 2689.32, 3392.55, 4241.69, 5267.01, 6505.06, 8000}.

Logical Structure

Our overall design utilizes the FPGA to take in the audio from the player and run the FFT on the audio, and the HPS to perform the MEL calculations, classify the word, keep track of the game state, and write to the VGA.

The FPGA constantly samples audio from the microphone through the codec and once enough samples are captured it passes the data into the FFT module sequentially. Once the FFT module completes calculating the frequency spectrum, it writes the spectrum to an array on the FPGA and signals the HPS that there is new data to read. With the FPGA processing audio all the time, it allows the HPS to make the decisions as to whether a sample is valid/useful and what to do with it. This makes debugging significantly easier and reduced our design time.

Hardware top

On our FPGA, we had an FFT module using Altera IP, as well as a state machine to interact with the audio codec to receive and store audio samples, send the audio samples to the FFT module, and read and store the FFT output.

We decided on a fixed-point variable streaming FFT, with length 128, 18-bit input, and 26-bit output. The FFT was created using the Altera IP wizard and then controlled by the hardware state machine. As the FFT module expects its input in a burst, with one sample each 50 MHz clock cycle, but we only receive an audio sample every 48 kHz, it was necessary to create an on-board 18x128 bit buffer to store audio samples until we were reading to send them to the FFT module. Similarly, the FFT module also fires out its output at the rate of one value per cycle, so we also created an on-board buffer to store the FFT output, which would then be read from by the HPS. Rather than storing both the real and imaginary FFT output, we first calculated the magnitude of each output sample and stored that, thereby cutting in half the amount of space needed to store output values. To avoid more complicated hardware computations, we used the following approximation for complex magnitude: first, we take the absolute value of both the real and imaginary parts. Then, we take the smaller of the two values, multiply it by 3/8, and add it to the larger value. This is quite close to the complex magnitude when the magnitude of the real and imaginary parts are close, and is relatively close to the complex magnitude over the entire space.

Our state machine is responsible for interfacing with both the audio codec and the FFT module. In the first state, it checks for read data in the audio FIFO. If there is data, it then requests to read the left channel audio data, waits for an ack from the bus, and then reads the data. It then requests to right the right channel audio data, waits for an ack, and then reads that data as well. We chose to only store the left audio data, but it is necessary to read both channels from the FIFO even if they are not both used.

Once the data is read, we add it to the aud_avg register and also increment the aud_counter variable, which keeps track of how many samples are stored in aud_avg. Since aud_counter is a 2-bit register, it will overflow back to 0 every 4 samples. Therefore, when aud_counter equals 0, we know aud_avg contains the sum of 4 audio samples. We arithmetic right-shift the value of aud_avg by 2 to divide it by 4 and then store the result in the fft_in array, which holds averaged samples to be sent to the FFT. We also increment the aud_counter variable on each store so that we know when we have gathered 128 samples by looking at this counter.

Once we have 128 samples, we begin the data transfer to the FFT module by raising the sink_valid and sink_sop (start of packet) flags, and loading the first sample into sink_real input. We then load a new sample every cycle, which the FFT module will read. On the cycle we send the 128th sample, we also raise the sink_eop (end of packet) flag, to signal the data transfer is finished. After that, we wait until the FFT module raises the source_valid and source_sop flags, signaling it is ready to begin the data transfer for the FFT output. We store these values into the fft_out array continuously until the source_eop flag is raised, signalling the end of the data transfer.

In order to allow the HPS to read from the fft_out array, we created three parallel I/O ports in Qsys: fft_addr, fft_data, and fft_valid. fft_addr is an output from the HPS and is used to index into the fft_out array, and the corresponding data value is sent back to the HPS on the fft_data input port. To prevent the HPS from reading data while it is being written, we use the fft_valid flag. During FFT computations, fft_valid is low, and it is raised when the output of the FFT has been fully written to the fft_out array. The HPS only reads from fft_out when fft_valid is high.

Software top

The HPS in our design performed two main functions:

1. Runs calculations on the audio spectrum received from the FPGA and uses that data to move (or not move) the spaceship

2. Keeps track of game state and updates all VGA graphics

These parts are quite different so we will discuss them separately.

Audio Detection

IThe HPS is responsible for processing every FFT output and figuring out if the FFT is actually the result of a player speaking and if so, classify the sample as a loud sample or a soft sample. The length of each FFT sample we receive is only around 10 ms, which is shorter than most voice commands, so the HPS has to be able to capture the entire input command and not just the first one. The first step is differentiating between noise and actual input. To do this, the HPS calculates the maximum peak for every FFT and uses a threshold chosen by us. If the threshold is met we know that a command is being sent. Initially when the threshold is met after a period of it not being met (no command), the HPS starts a timer. If all the next FFT’s received in that time period do not meet the threshold we can safely assume that the first one was just noise and we go back to listening. However, if we see more FFT’s that meet the threshold, we start saving them into an array. We keep collecting data while the FFT’s meet the threshold until either there is another timeout or until we collect a certain maximum number of FFT. We have a maximum number of FFT’s to make sure there is a maximum latency from when the user says something to when movement occurs on the screen.

Once all the FFT’s are collected, we convert the FFT powers into log mel powers and then take the discrete cosine transform of these powers to obtain our final MFCCs. We heavily referenced the work of Jordan Crittenden and Parker Evans for these calculations. We then use the first two coefficients to classify the audio sample. We take the sum of the absolute values and use a threshold that determines a loud command from a soft command. If the command is loud it will move the player up, and if the command is soft it will move the player down.

Gameplay and Graphics

The HPS is also in charge of all the gameplay mechanics and graphics. The three main objects in our game are the main player that is represented as a spaceship (gray square), the meteors, which are represented by brown circles, and the background stars that are just white pixels. All these objects are moving and are updated every frame. Initially, when the game starts, we have just the spaceship visible and soon meteors start flying in from the right side of the screen. To do this, we use a large array of meteor structs that are initially invalidated so they are not drawn. Periodically, a new meteor is validated and given a random y position, x velocity, and y velocity to be added to the screen. Every frame, each valid meteor and all the stars’ positions are updated by their respective velocities and redrawn. Then, collisions between the meteors and player are detected using simple bounding boxes. To make this more efficient, we only check for collisions with meteors in the columns the spaceship is present because the spaceship does not move on the X axis at all.

These two components of the HPS needed to be combined in a way that did not cause glitches or flickers in the gameplay graphics, but also made sure we did not miss any audio inputs from the user. To do this we decided on a set frames per second (FPS) for the game of updating the screen every 16ms, which correlates to ~60FPS. This means the gameplay graphics and state only are updated then, so there is 16ms of downtime. We use these 16ms to constantly check if new data is available from the FPGA and performing the audio calculations on them to update the spaceship’s locations.

Other Attempts

Our original goal was to differentiate between spoken words such as “up” and “down” to control the position of our spaceship. Word classification is not particularly simple so we tried quite a few variations we tried in order to classify a signal. We first experimented with varying how much of the audio signal we used. A few options involved using just the first FFT that passed the threshold, just the last FFT, a FFT from the middle, or a combination of the three. For all of these options we did not find any strong correlation to different words in the coefficients. The second main thing was ordering the magnitude of the coefficients and then looking for correlations between the peaks and different words. We also experimented with different thresholding limits and timeouts, but again we did not find any variations changed out results. When we thought we had found a good combination of some of our parameters, we tried training a few neural networks in python to classify words based on the raw coefficient values or the order of peaks and unfortunately were still unable to find a classifier that was accurate enough. Given that our application is a realtime game, we thought that the control should be fast and accurate, so when our best classifier was giving us ~65% accuracy we decided that we will switch to controlling the position with volume instead of different words. This worked for us because the magnitude of the coefficients correlated well with the volume spoken.

Results top

Overall, our game was a success. A player can control the position of a spaceship using their voice and the game executes with little to no flicker or jitter. The response time between an audio command and movement on the screen is more than good enough for a video game and the game has two modes which allows for relaxed gameplay or more intense gameplay if the user wants it. Despite us not being able to consistently classify different words, we were able to design a game that used volume as the control mechanism instead and were satisfied with the outcome.

Usability between different players is a small issue because loud to some people is not loud to others. This means that the player needs to adjust to the set threshold we have, but again that can make the game a little more interesting. Also, there is an issue with the environment the game is being played in because the ambient noise level could be completely different causing misreadings of loud or soft commands. There is no saftey hazard for our project.

Conclusion top

Potential Changes

Given more time, we would have liked to increase the accuracy of our word detection to the point where it could be used without severely impacting the playability of the game. For example, our implementation of the FFT may not have been completely optimal: in particular, the block averaging scheme for the samples likely resulted in a loss of information and might have been improved on by using a rolling average or simply not averaging and taking a longer FFT instead. Also, since we sample 10ms for each FFT, we are only barely able to capture information at the fundamental frequency of the human voice, and may potentially miss some information. Therefore, it would probably be a good idea to try increasing this time window by performing a longer FFT, more averaging, or a combination of the two.

Additionally, we could have improved on our methodology for differentiating between the MFCCs of different samples. For example, we did not have enough time to acquire enough test audio samples from a variety of speakers to be able to more accurately determine what coefficients to best differentiate on. Another scheme would involve looking at how the MFCCs change over time, either by finding patterns or by computing deltas or delta-deltas between the MFCCs (i.e. the “velocity” or “acceleration” of each of the coefficients). Since the frequencies of speech change greatly over the course of a word, identifying patterns in time, not just frequency, is very important to successful word recognition.

Ethics/Legal Considerations

We used Altera’s FFT module in our design and also based our MEL calculations off of Jordan Crittenden and Parker Evans’ old 5760 project. There are no other legal or FCC considerations.

Appendices top

A. Permissions

The group approves this report for inclusion on the course website.

The group approves the video for inclusion on the course youtube channel.

B. Source Code

All code can be found here

C. Work Distribution

The work was distributed as following:

- Research and high-level design: Curran and Brian

- FFT control: Brian

- Hardware state machine: Curran and Brian

- Sound recognition: Curran and Brian

- Game design: Curran

- Report: Curran and Brian

References top

This section provides links to external reference documents, code, and websites used throughout the project.