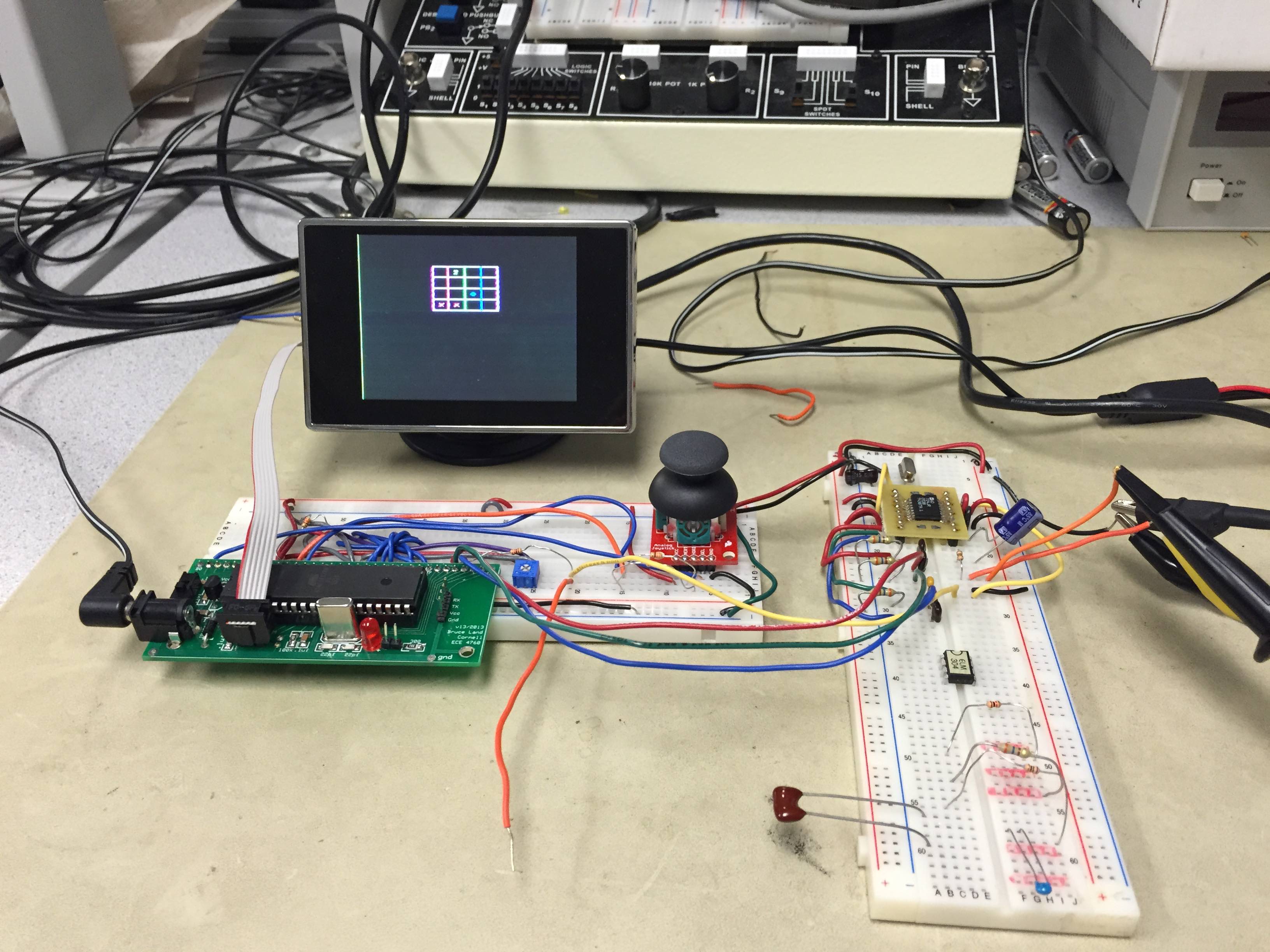

Figure 1: our project setup in lab

Chen Yang (cy255), Jeffrey Niu (jn286)

Our project is to make a color video game that runs primarily on the ATmega 1284P. To do this, we adhered to the NTSC standard for color video. The sync signals used for NTSC are generated on the ATmega 1284P itself, and all game logic is implemented on the microcontroller as well. To actually encode the color signal, we used an AD724 chip. This is necessary because the color video signal is created by modulating a 3.58MHz signal, and our MCU only runs at 16MHz.

We chose this project because color video is so prominent in our lives, and we were curious about how it works after doing black and white video in lab 3. For our project, we referenced the many previous color video projects, but we did not reuse the code from previous years. This was mainly because color video requires writing AVR assembly, and the code from previous years was written for the CodeVision compiler and was not compatible with GCC.

The design of this project can logically be broken down into three parts: the hardware design, the low level software that works with hardware, and the game processing. Both the hardware and software design will be covered in detail in the Program/Hardware Design section, but the overview is as follows:

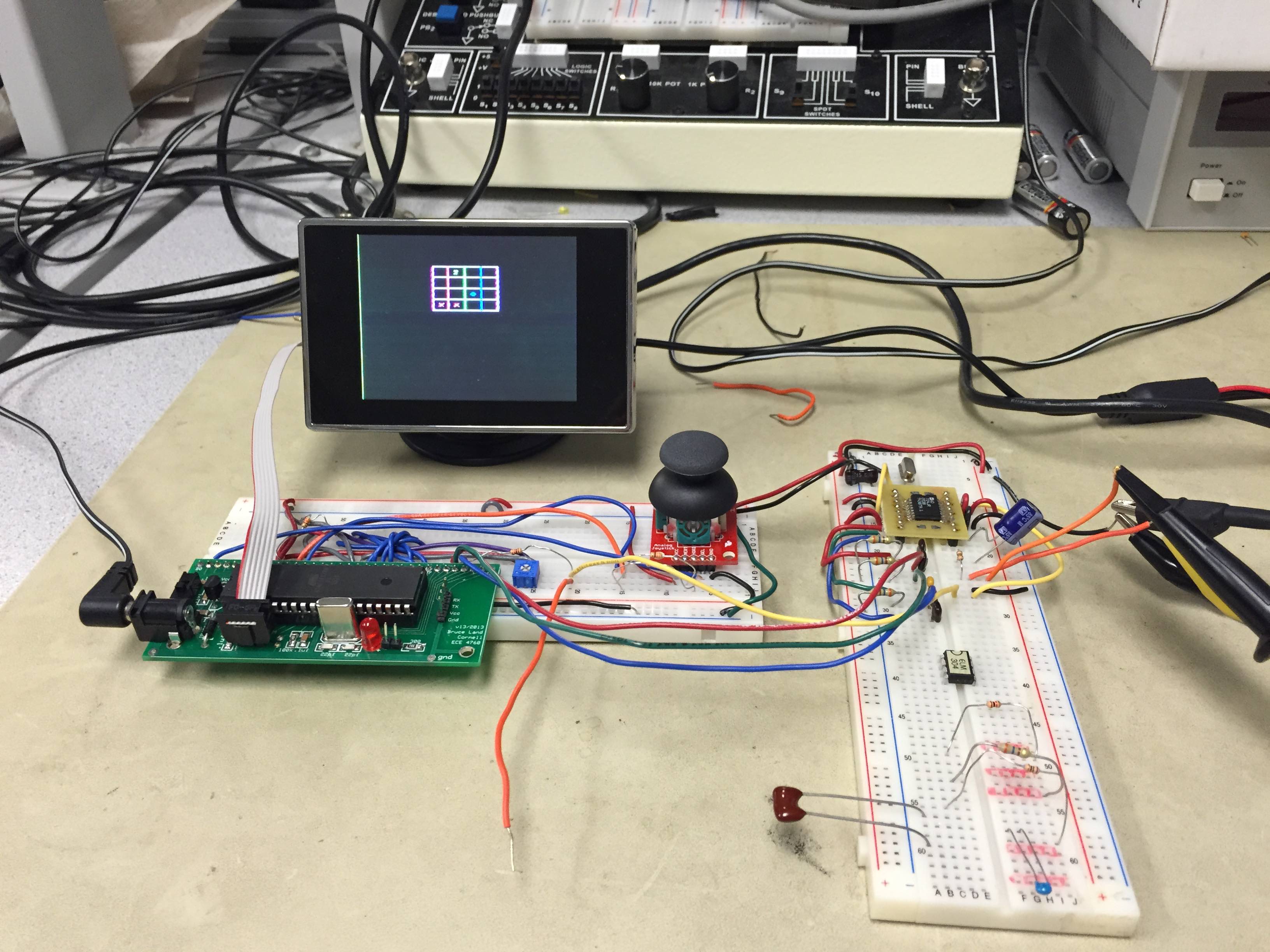

Figure 2: block diagram of key components

The hardware includes a joystick, the microcontroller and its logic board, the AD724 and its supporting peripherals such as a ~3.58MHz crystal, and an LCD TV with composite video input. The joystick takes user input: up/down, left/right, or a press of the built-in push button. The microcontroller receives this input and processes it accordingly. It then outputs RGB signals and a sync signal to the AD724, which encodes them into a composite signal that is sent to the TV.

The low level software interfaces with the hardware. This involves using the ADC to take input from the joystick, using the MCU timers to accurately generate the sync signal, and using assembly code to quickly output data to the GPIO pins for the video signal.

The game logic is processed entirely on the microcontroller. After each frame is displayed, there is time during the vertical blanking period for doing calculations. All game logic is implemented here and will be detailed in the Software Design section.

Using additional hardware increases costs and adds complexity to the physical design and circuit. However, additional hardware can significantly simplify software design and enable the microcontroller to perform tasks it cannot perform on its own. For our project, our approach was to use hardware as needed but only if needed. We used the AD724 to encode the color signal because our microcontroller is too slow to effectively perform that task. However, we did not use the ELM 304 sync generator used by most previous color video projects. By writing the video output code in assembly and by having relatively fast and simple game logic code, we were able to do sync generation on the ATmega 1284P without additional external hardware.

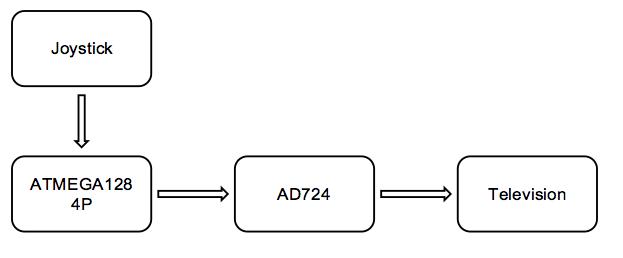

The centerpiece of our project, the color video generation, is based on the NTSC color video standard. At a high level, the signal is sent to the TV by outputting bits/bytes at the right time. There is no direct correlation between bits and pixels; the picture on the TV is drawn depending on what analog signal the TV is receiving at a specific point in time. Each frame is drawn line by line. The signal that represents a line is shown in figure 3 below:

Figure 3: theoretical signal of 1 horizontal line according to NTSC standard

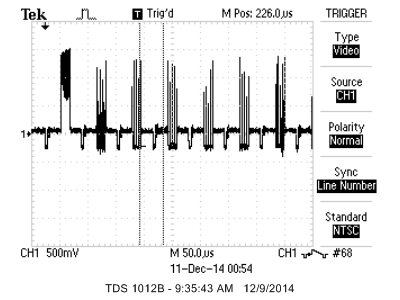

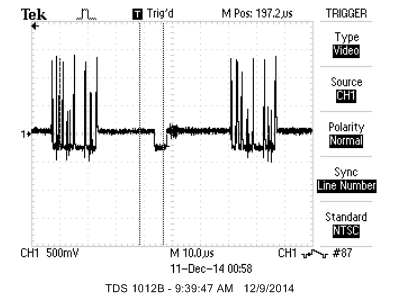

Figure 3 shows what a line looks like in theory. However, we did not fully adhere to the NTSC standard. Instead of a 4.7us sync tip, we used a 5us sync tip. Instead of a 63.5us line time, we used a 63.625us line time. These changes were made for ease of use: 60 frames per second is easier to work with than the official 59.94 frames per second. Also, while the NTSC standard uses interlaced video with 525 scan lines, we use 262 lines without interlacing. This means that every frame we draw the same 262 lines, instead of alternating between the odd and even lines of a 525-line picture. An oscilloscope screen capture of one of our lines is shown in figure 4. In this screenshot, a horizontal sync pulse, a color burst, and the video signals for 5 white points are shown. There are several more screen captures showing lines with different content in the appendix.
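The frame-rate arithmetic behind these numbers can be checked with a short host-side C sketch. The function name `frame_rate_hz` is ours for illustration and is not taken from the project code.

```c
/* Frame rate in Hz for a non-interlaced frame made of `lines` scan lines,
 * each lasting `line_us` microseconds. Illustrative helper only. */
double frame_rate_hz(unsigned lines, double line_us)
{
    double frame_us = (double)lines * line_us; /* duration of one frame */
    return 1.0e6 / frame_us;                   /* frames per second */
}
```

With 262 lines of 63.625us each, one frame lasts 16669.75us, or about 59.99 frames per second. The same 262 lines at 63.5us each would come out at roughly 60.11 frames per second, so the slightly longer line lands closer to 60.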

Figure 4: scope screenshot of a horizontal line signal containing 5 white points

We could not find any information on the copyright of the JMan game. It was created for use in the CS 1130 course here at Cornell. Since we are using it for academic purposes, there should be no violations of copyright.

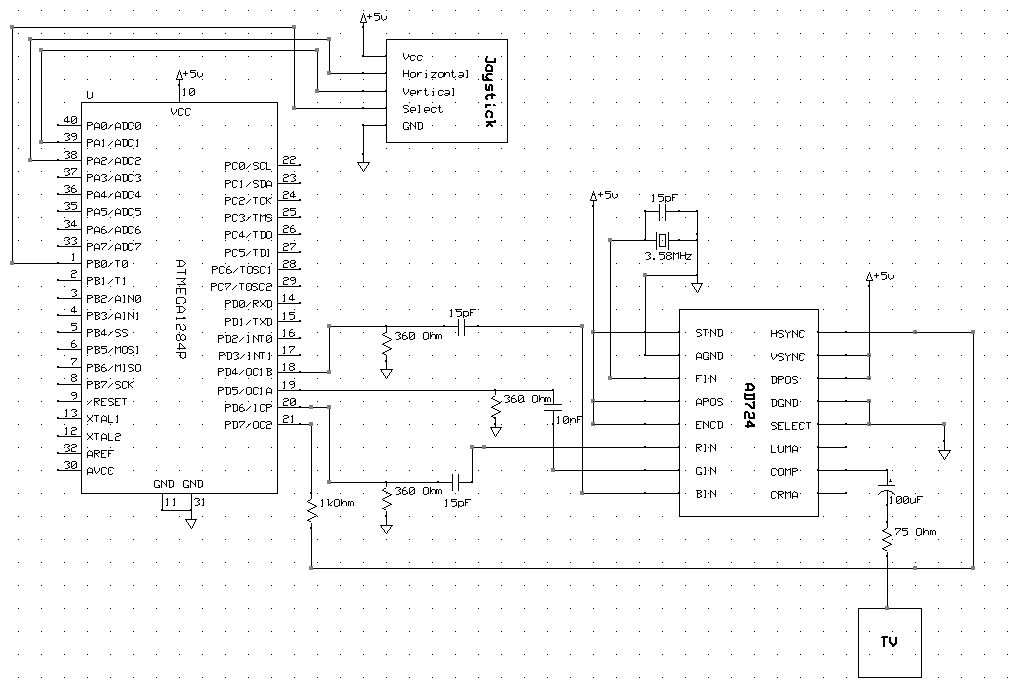

The key hardware used in this project consists of the ATmega 1284P, the custom board for the microcontroller that we have been using throughout this class, a joystick with a push button function, an AD724 color encoder chip, a 3.579545MHz crystal, an LCD TV with a composite video input, 2 breadboards, wires, and a number of resistors and capacitors whose values can be seen in the schematic.

The joystick with push button is composed of two potentiometers and a switch. Depending on how far up/down or left/right you push the joystick, the potentiometer outputs vary between Vcc (5V) and ground. These signals were connected to ADC pins A1 and A2. The ADC then converts each signal to a value between 0 and 255. We only accepted 255 as a confirmed push to prevent false positives. Left/right and up/down on the joystick corresponded to left/right and up/down movements of the player-controlled character. The pushbutton is simply a switch. When the button is pushed, the pushbutton pin is grounded. Otherwise, the pin is floating. This signal was connected to pin B0 on the microcontroller, which was set as an input with a pull-up resistor.
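As a rough illustration of this thresholding, the sketch below turns two 8-bit ADC readings into a direction. Only the "255 means a confirmed push" rule comes from the text. The opposite-direction threshold of 0, the mapping of each axis end to a direction, the priority of the horizontal axis, and all names are assumptions made for the example.

```c
#include <stdint.h>

typedef enum { DIR_NONE, DIR_UP, DIR_DOWN, DIR_LEFT, DIR_RIGHT } dir_t;

#define ADC_FULL_PUSH     255U /* from the text: only 255 counts as a push */
#define ADC_OPPOSITE_PUSH 0U   /* assumption: the other end of the axis reads 0 */

/* Decode one joystick sample. adc_x and adc_y are the 8-bit ADC results for
 * the left/right and up/down potentiometers. Which end of each axis maps to
 * which direction is an assumption of this sketch. */
dir_t decode_joystick(uint8_t adc_x, uint8_t adc_y)
{
    if (adc_x == ADC_FULL_PUSH)     return DIR_RIGHT;
    if (adc_x == ADC_OPPOSITE_PUSH) return DIR_LEFT;
    if (adc_y == ADC_FULL_PUSH)     return DIR_UP;
    if (adc_y == ADC_OPPOSITE_PUSH) return DIR_DOWN;
    return DIR_NONE; /* anything short of a full push is ignored */
}
```

Requiring the extreme value trades sensitivity for robustness: a reading of 254 is treated as no input at all.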

The AD724 is an RGB encoder. It was used to encode red, green, and blue signals into an NTSC compatible composite video signal. We set the chip to FSC (frequency of subcarrier) mode by pulling the SELECT pin low, and we connected a 3.579545MHz crystal in parallel with a 15pF capacitor to the FIN pin. The crystal we used had a load capacitance of 18pF, but the closest capacitor value we had was 15pF. Additionally, the internal capacitance of our board is nonzero and contributes to the load capacitance, making 15pF a better choice than 22pF. For the sync signal, we connected pin D6 of our microcontroller to the HSYNC pin of the AD724. Our microcontroller generated its own syncs, so the ELM 304 was not used at all. Additionally, the HSYNC pin of the AD724 can accept a composite sync, so the VSYNC pin was not needed.

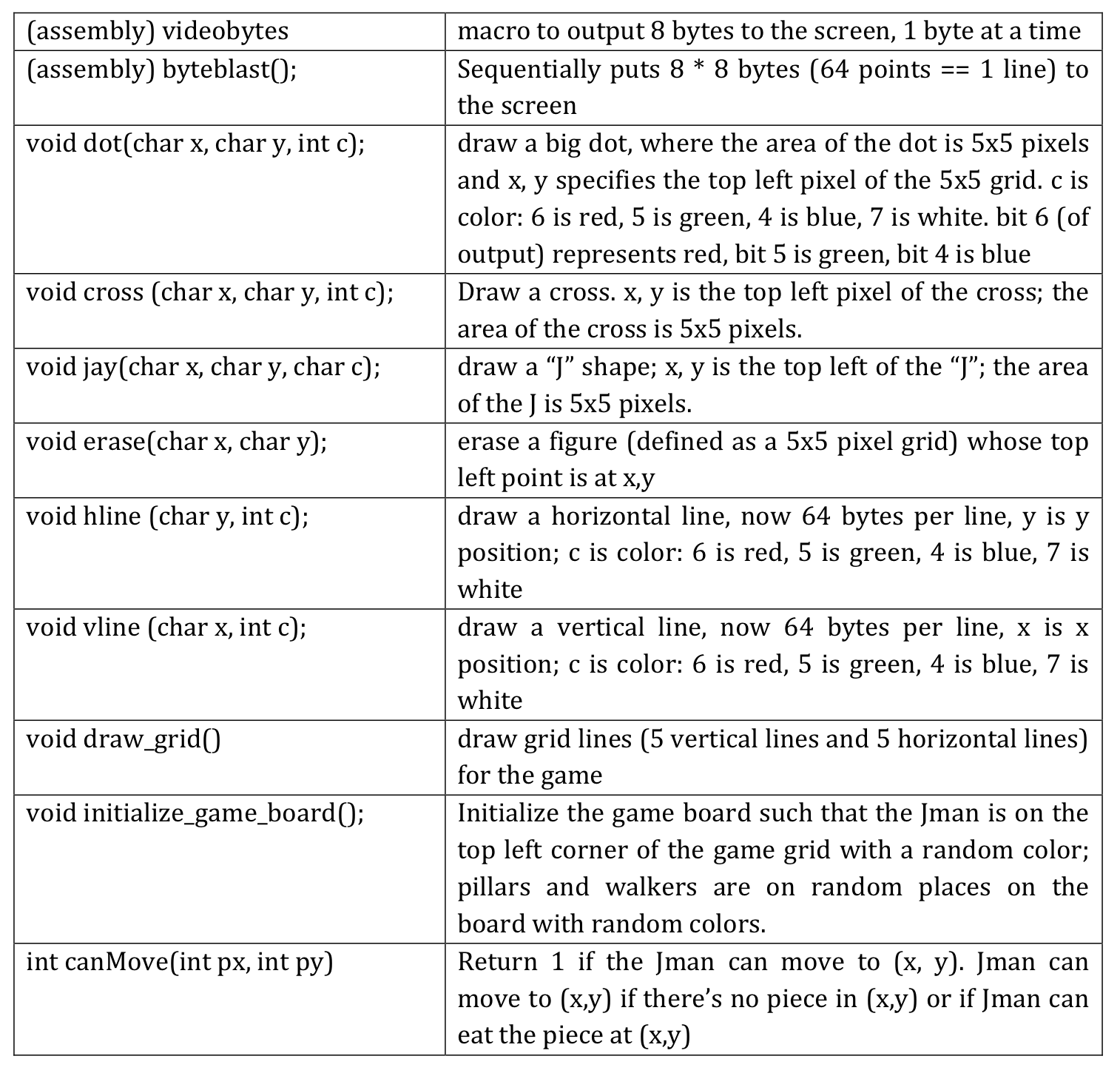

The software can be broken up into two major parts: low level code for interacting with hardware and game logic code for managing our game. The key functions are listed below in Figure 5.

Figure 5: listing of key functions

While doing research for our project, we found several previous ECE 4760 projects that did color video. Most of these projects used very similar code for handling the sync and display, so we originally planned to use the same code as well. However, we later discovered that their code was written for the CodeVision compiler, and we now use GCC. Because most of the display code was written in assembler, we were unable to use the old code. We did, however, heavily reference the 3D orthographic projection of a tetrahedron code from the course page on video generation, which was written for use with GCC.

One of the most challenging parts of the code was the generation of the sync signal. We modeled this off the example mentioned above. Timer 1 was set to count at full speed, and upon counting to line time, which was set to 1018 (1018 cycles at 16MHz is 63.625us) to equal the time of one horizontal line, we entered an interrupt service routine. In this interrupt, we output the sync pulse (horizontal or vertical depending on the line number) and then output 64 bytes of data to draw a horizontal line of color on the screen.
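The per-line bookkeeping can be modeled on a host machine as below. This is a sketch of the idea, not the project's interrupt routine: the real code runs in a Timer 1 ISR and drives pins. The line numbers that carry the vertical sync pulse are not given in the text, so `VSYNC_FIRST_LINE` and `VSYNC_LAST_LINE` are hypothetical placeholders, as are all other names here.

```c
#include <stdint.h>

#define CPU_HZ           16000000UL /* MCU clock from the text */
#define LINE_CYCLES      1018U      /* timer period for one line, from the text */
#define LINES_PER_FRAME  262U       /* non-interlaced frame, from the text */
#define VSYNC_FIRST_LINE 248U       /* hypothetical: not stated in the text */
#define VSYNC_LAST_LINE  250U       /* hypothetical: not stated in the text */

typedef enum { SYNC_HORIZONTAL, SYNC_VERTICAL } sync_kind_t;
typedef struct { uint16_t line; } video_state_t;

/* Stand-in for the once-per-line interrupt: report which sync pulse the
 * current line needs, then advance the line counter with wraparound. */
sync_kind_t next_line(video_state_t *s)
{
    sync_kind_t kind =
        (s->line >= VSYNC_FIRST_LINE && s->line <= VSYNC_LAST_LINE)
            ? SYNC_VERTICAL : SYNC_HORIZONTAL;
    s->line = (uint16_t)((s->line + 1U) % LINES_PER_FRAME);
    return kind;
}

/* Convert a cycle count at CPU_HZ into nanoseconds. */
uint32_t cycles_to_ns(uint32_t cycles)
{
    return (uint32_t)((uint64_t)cycles * 1000000000ULL / CPU_HZ);
}
```

Here `cycles_to_ns(LINE_CYCLES)` gives 63625, matching the 63.625us line time, and a 5us sync tip corresponds to 80 cycles at 16MHz.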

Outputting the data fast enough to be drawn in an NTSC line was a challenge. As seen in figure 3, the duration of the video content of a line is at most 52.6us. In order to output sufficient data in this time, we wrote the video output code in assembly. At a high level, we have an array of data (called screen) to represent our screen. We use a pointer into this array to correspond to where in the screen we want to draw. In order to draw, we load a byte from the array into a register and output that register on port D. The functions used for this, along with other key functions, are detailed in figure 5.
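In plain C, the idea of walking a pointer through the screen array and writing each byte to a port looks roughly like the sketch below. It is a host-side model only: the project does this step in AVR assembly for speed, `port_model` stands in for port D, and the `seen` buffer exists purely so the output can be inspected. The array name `screen` comes from the text; every other name is illustrative.

```c
#include <stdint.h>

#define SCREEN_W 64
#define SCREEN_H 64

uint8_t screen[SCREEN_W * SCREEN_H]; /* one byte per display point */
volatile uint8_t port_model;         /* stand-in for the output port */

/* Index of the first byte of a row. With a width of 64 this is a shift. */
uint16_t row_start(uint8_t row)
{
    return (uint16_t)((uint16_t)row << 6);
}

/* Send one row to the (modeled) port, recording what was written. */
void output_line(uint8_t row, uint8_t *seen)
{
    const uint8_t *p = &screen[row_start(row)];
    for (uint8_t i = 0; i < SCREEN_W; i++) {
        port_model = *p++;    /* load a byte and write it to the port */
        seen[i] = port_model; /* test hook, not part of real video output */
    }
}
```

A compiled C loop like this gives no control over the exact cycle count per byte, which is one reason to write the real output loop in assembly.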

In our implementation, we output 1 byte of data at a time, but only 3 bits of this byte are significant. Each bit represents one color, red, green, or blue, and is fed into the AD724. This gives us a maximum of 2^3 = 8 colors. However, if we needed more colors, we could have outputted 8 bits and fed multiple bits, through different resistors, into each color input of the AD724 and achieved 2^8 = 256 colors. Alternatively, we could have packed 2 data points per byte to use memory more efficiently. This is important because the ATmega 1284P only has 16kB of SRAM. Originally, we tried using a 128x128 pixel game board, but we did not have enough memory. This could have been solved by packing 2 data points per byte instead of only using 3 out of 8 bits of each byte. Instead, we decided to use a 64x64 pixel game board. We could have created something with slightly higher resolution even with 1 byte per data point, but using a power of two made it much simpler when referencing bytes in our screen array.
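The packing alternative mentioned above can be sketched as follows. This is not code from the project, which kept one byte per point. It shows one possible layout, with the even-x point in the low nibble and the odd-x point in the high nibble. The names and the nibble order are assumptions.

```c
#include <stdint.h>

#define PACKED_W 128
#define PACKED_H 128

/* Two 3-bit RGB points per byte: 128 * 128 / 2 = 8192 bytes,
 * instead of 16384 bytes at one point per byte. */
uint8_t packed[PACKED_W * PACKED_H / 2];

void set_pixel(uint8_t x, uint8_t y, uint8_t rgb)
{
    uint16_t idx = (uint16_t)(((uint16_t)y * PACKED_W + x) >> 1);
    if (x & 1U) /* odd x: high nibble */
        packed[idx] = (uint8_t)((packed[idx] & 0x0FU) | ((rgb & 0x07U) << 4));
    else        /* even x: low nibble */
        packed[idx] = (uint8_t)((packed[idx] & 0xF0U) | (rgb & 0x07U));
}

uint8_t get_pixel(uint8_t x, uint8_t y)
{
    uint16_t idx = (uint16_t)(((uint16_t)y * PACKED_W + x) >> 1);
    uint8_t nibble = (x & 1U) ? (uint8_t)(packed[idx] >> 4) : packed[idx];
    return (uint8_t)(nibble & 0x07U);
}
```

At one byte per point, a 128x128 board needs 16384 bytes, which is the entire 16kB of SRAM and leaves nothing for the stack or game state. The packed layout halves that to 8192 bytes, at the cost of extra shifting and masking when drawing.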

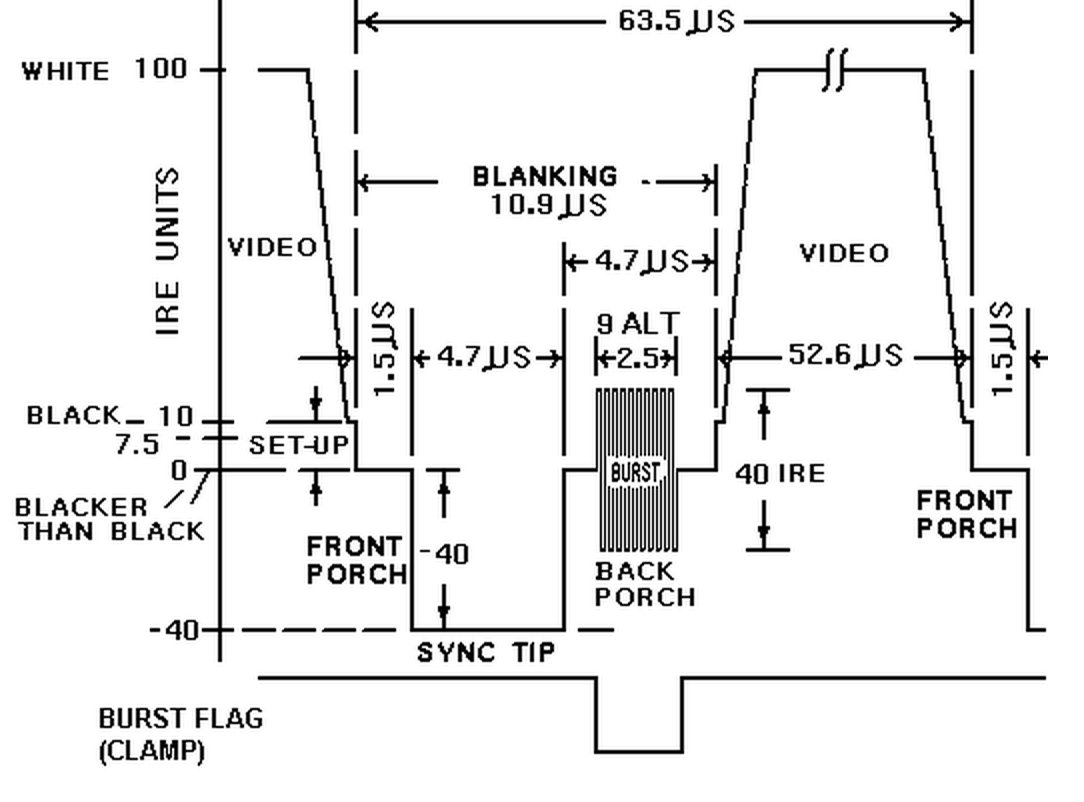

How to play?

The user-controlled piece is the J shaped character, who is called Jman. There are also circular pieces called walkers and X shaped pieces called pillars. A pillar never changes its position, but it might change color; a walker never changes its color, but it might change position. Jman moves according to the user input, and the goal is to navigate Jman to consume the other pieces. Jman can consume a green piece only if he is currently blue; he can consume a blue piece if he is currently red; he can consume a red piece if he is currently green. Each time Jman consumes an object, he obtains the color of that object. Each time a direction key is pressed, every pillar on the screen may change its color at random, and every walker will move a step in a random direction as long as it is not blocked by another piece.
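The color rule forms a three-way cycle, which a small C sketch makes explicit. The type and function names are illustrative and not taken from the project source.

```c
typedef enum { COLOR_RED, COLOR_GREEN, COLOR_BLUE } color_t;

/* Returns 1 if a Jman of color `jman` may consume a piece of color `piece`. */
int can_consume(color_t jman, color_t piece)
{
    switch (jman) {
    case COLOR_BLUE:  return piece == COLOR_GREEN; /* blue eats green */
    case COLOR_RED:   return piece == COLOR_BLUE;  /* red eats blue   */
    case COLOR_GREEN: return piece == COLOR_RED;   /* green eats red  */
    }
    return 0;
}

/* Jman's color after attempting to consume a piece: he takes the piece's
 * color on success and keeps his own otherwise. */
color_t color_after(color_t jman, color_t piece)
{
    return can_consume(jman, piece) ? piece : jman;
}
```

For example, a blue Jman that consumes a green walker becomes green and can then consume only red pieces.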

Initialization

The game board is initialized such that the Jman is on the top left corner of the game grid (0, 0) with a random color (red, blue or green); pillars and walkers are on random places on the board with random colors as well. Each position on the board can hold only one piece.

How to take user input?

A user plays the game by controlling Jman's movement with a joystick. The joystick can move in four directions: up, down, left, and right, and Jman moves in the direction the user pushes. There are two potentiometers on the joystick; up and down movement controls one of them while left and right movement controls the other. The program constantly polls the inputs from the two potentiometers and determines Jman's next direction. The user can also push the select button on the joystick to reset the game.

How to win?

The user wins if all the pillars and walkers are consumed. The player loses if there are still walkers left that Jman cannot consume.

Variable Structure:

num_walkers: 2

num_pillars: 2

grid_size: the grid is initialized to 4 by 4, so grid_size is initialized to 4.

x[num_walkers+num_pillars+1]: x coordinate of all pieces, -1 if that piece is eaten

y[num_walkers+num_pillars+1]: y coordinate of all pieces, -1 if that piece is eaten

color[num_walkers+num_pillars+1]: colors of all pieces

board[grid_size][grid_size]: a 4 by 4 array that records which piece occupies each position on the board; -1 means the position is empty. Otherwise, the value at that position is the ID of the piece there. Each piece has a unique ID, which is the same as the index of the piece in the coordinate arrays. (index 0: Jman, index 1 to num_walkers: walkers, index num_walkers+1 to num_walkers+num_pillars: pillars)
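Put together, these variables might be declared and initialized along the lines of the sketch below. It follows the description above, including Jman starting at (0, 0) and one piece per position, but it is not the project's code. The `board[row][column]` indexing order, the use of `rand()`, the color encoding 0 to 2, and the helper names `init_game`, `eat_piece`, and `has_won` are assumptions for illustration.

```c
#include <stdint.h>
#include <stdlib.h>

#define NUM_WALKERS 2
#define NUM_PILLARS 2
#define NUM_PIECES  (NUM_WALKERS + NUM_PILLARS + 1) /* +1 for Jman (ID 0) */
#define GRID_SIZE   4
#define EMPTY       (-1)

int8_t  x[NUM_PIECES], y[NUM_PIECES]; /* -1 once a piece is eaten */
uint8_t color[NUM_PIECES];            /* assumed encoding: 0..2 */
int8_t  board[GRID_SIZE][GRID_SIZE];  /* piece ID or EMPTY; [row][column] */

void init_game(void)
{
    for (int r = 0; r < GRID_SIZE; r++)
        for (int c = 0; c < GRID_SIZE; c++)
            board[r][c] = EMPTY;

    x[0] = 0; y[0] = 0;               /* Jman starts in the top left */
    color[0] = (uint8_t)(rand() % 3);
    board[0][0] = 0;

    for (int id = 1; id < NUM_PIECES; id++) {
        int px, py;
        do {                          /* retry until a free cell is found */
            px = rand() % GRID_SIZE;
            py = rand() % GRID_SIZE;
        } while (board[py][px] != EMPTY);
        x[id] = (int8_t)px; y[id] = (int8_t)py;
        color[id] = (uint8_t)(rand() % 3);
        board[py][px] = (int8_t)id;
    }
}

/* Remove a piece from play. Moving Jman into the freed cell is left out. */
void eat_piece(int id)
{
    board[y[id]][x[id]] = EMPTY;
    x[id] = -1; y[id] = -1;
}

/* The user wins once every walker and pillar has been eaten. */
int has_won(void)
{
    for (int id = 1; id < NUM_PIECES; id++)
        if (x[id] != -1) return 0;
    return 1;
}
```

Because a piece's ID doubles as its index into `x`, `y`, and `color`, looking up what occupies a cell and then finding that piece's color takes two array reads and no searching.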

The end result of our project was a very playable color video game. The colors were vivid and clearly defined. Our game only used red, green, blue, and white, but our project was tested to support 8 colors (including white and black). Additionally, our setup could easily be expanded to support 256 colors. The game logic was also fully implemented without glitches, and our joystick controller was very responsive. The learning curve to play our game is very low, and there are no particular requirements to play our game other than good eyesight, since our resolution is fairly low.

The one issue we were unable to fix was the slight flickering on the screen. The output was a smooth 60 frames per second without tears or hangs, but some of the lines would flicker slightly. This was most visible in the outside grid lines of our game. Additionally, there were very faint green lines flickering in the background. Originally, we suspected that the flickering was a result of choosing the wrong capacitor somewhere in our circuit, so we re-consulted the AD724 datasheets and tried many different capacitors. We found that using a larger coupling capacitor for the green color input pin on the AD724 reduced the green background flicker, but the flicker did not completely disappear.

Our project design was very safe. There were no projectiles involved, and we did not use any parts that could cause harm to anyone. We also did not use anything wireless, so there are no concerns of interference or violations of FCC restrictions.

Our project met most of our original expectations. The core game features were all implemented. We ended up choosing not to have non-interactive blocks on the game board as they were not significant and we had limited resolution, but we also added a reset button that we did not originally plan for. The reset button not only restarted the game, but also randomized where all the pieces started.

While color video generation was significantly more complex than we had anticipated, the end result was very rewarding. One aspect we found disappointing was how low the output resolution was due to lack of memory. If we were to do the project again, we would either pack 2 display points into each byte or add external RAM. Another possible expansion of our game is to add sound to the gameplay.

We did reuse some code. It was from the ECE4760 Video Generation page linked in the references. The specific source code we drew from is here

The only applicable standard used in our project was NTSC. We loosely followed this standard, but we did have some minor deviations. Our implementation was close enough to the standard such that LCD TVs would accept our signal and display what we wanted to display, but CRT TVs would distort our desired image.

Our actions during this project were consistent with the IEEE Code of Ethics. Our project presents no risks to public health or welfare, does not discriminate, and has no malicious action or intent. We were not offered and did not take any bribes during the creation of our project. We had no conflicts of interests with other parties, and we were honest and realistic about our goals and cost estimates in our project proposal.

We were also careful regarding copyright and patent issues. The idea of the JMan game is from the CS 1130 course here at Cornell. We could not find any copyright information regarding the game after browsing through all available course material. Seeing as our project is purely educational, we think it is fair to use the JMan game idea in our project. Additionally, we did make minor modifications and are willing to make further changes if requested by the CS department. The second issue regarding copyrights is using CodeVision software. When we discovered that code from previous years could only be compiled with CodeVision software, we were tempted to acquire a copy of the software. However, our lab computers no longer have the software, and the trial version was too limited to use for our project. Instead of acquiring an unlicensed copy of CodeVision, we did not use any of the previous code and wrote our own code for GCC.

Because of our ethical actions listed above, there are no legal concerns regarding our project. We did not use anything wireless, so there are no concerns regarding FCC restrictions.

Figure 6: full circuit schematic

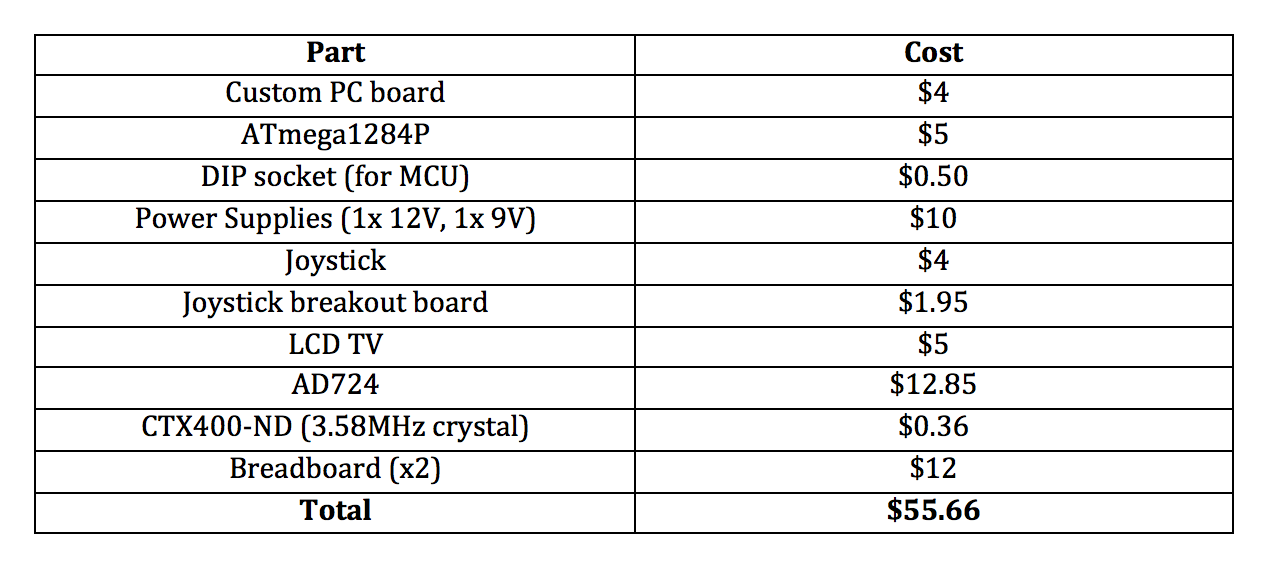

Figure 7: table of costs

Jeff: color and video generation code

Chen: game logic code, integration of game logic and video generation code

Both: acquisition and integration of hardware, troubleshooting and debugging, website and write up

ECE4760 page on video generation

ntsc-tv.com

Wikipedia page on NTSC

Previous ECE 4760 projects:

Color Video

High-Resolution Color TV

Apple II

Data sheets of components we used

AD724

ATmega 1284P

People who helped us:

Bruce Land

Eileen Liu

...thanks!

Figure 8: signal of many horizontal lines; the first line in the scope picture is an all white horizontal line

Figure 9: a line with some color components in it