Introduction

Our ECE 5760 project was aimed at combining Conway's Game of Life with music. The idea was to display the evolving states of the Game of Life and meaningfully map these states to an audio signal in real time. The motivation originally came from tone-matrix synthesizers, which allow users to dynamically affect the synthesis of an audio signal by clicking individual "cells" on the screen. We thought that combining this idea with a cellular automaton, which automatically evolves from one time step to the next, could make for an interesting music generator. Moreover, we believed that implementing this system on an FPGA presented a unique opportunity to tightly couple direct digital audio synthesis with the video signals controlling the automaton.

Luke and Weiqing with the Game of Life Music Synthesizer

Our final implementation consisted of a VGA monitor for displaying the cellular automaton, a keyboard for user controls, a pair of speakers for outputting the audio signal and an Altera DE2 Development Board (which contained a Cyclone II FPGA). The interface allowed the user to modify the automaton directly, either by drawing individual cells, dropping in pre-defined objects (e.g. a glider) or changing the rate of evolution. Through the keyboard, the user was also able to control the generation of audio by enabling "scan bars" and/or picking preset tone mappings (described in detail later).

An example of Conway's Game of Life implemented in Flash (borrowed from www.kenomaerz.de) is provided below for the reader's reference.

Example of Conway's Game of Life in Flash - borrowed from www.kenomaerz.de

High Level Design

The following subsections detail the rationale behind our project, our logical structure of the design, hardware/software tradeoffs and other useful background information.

Rationale & Sources for Idea

As mentioned in the introduction, our initial motivation for the project came from the tone-matrix musical generation tools that have become very popular on the internet. After we talked with Bruce about the idea, he suggested that the project might be more interesting and better exploit the FPGA hardware if we were to replace the tone-matrix with a cellular automaton which evolved automatically in time. We liked this idea and thought that it would also offer an opportunity to combine concepts from Lab 1 and Lab 3.

After doing some more research, we found that this idea had actually been explored in a variety of past projects. The ones that most influenced our ideas and final product were GlitchDS, WolframTones and Grant Muller's Game of Life MIDI Sequencer. Each of these implementations used an automaton (either Conway's Game of Life or a Wolfram 1D automaton) to produce different sounds. We were particularly inspired by the GlitchDS which produced very "futuristic" and unique audio.

Starting out, therefore, we intended to instantiate the Game of Life and synthesize our own tones (ideally unlike the common instruments) based on the cellular arrangement at any given point in time. We decided from the outset that it would be difficult to predict how different audio mappings would sound without testing them first. For example, it wasn't immediately apparent whether some sort of direct mapping (e.g. based on the number or arrangement of cells) would produce music that was more interesting than, say, using the current state as some sort of probabilistic measure. We therefore strove to make an interface that would make it easy to re-map the tones.

Background Math

Game of Life

Conway's Game of Life is a two-dimensional cellular automaton which evolves over time based on its past states (as can be seen in the Flash version above). There are only four main rules:

- Any live cell with fewer than two live neighbours dies

- Any live cell with two or three live neighbours lives on to the next generation

- Any live cell with more than three live neighbours dies

- Any dead cell with exactly three live neighbours becomes a live cell

With these four simple rules (and extremely basic mathematics), many interesting visual structures can be created starting from a variety of initial conditions (e.g. gliders, oscillators, etc). A few examples are presented below for reference (taken from Wikipedia.org):

From Left to Right: Simple Oscillator, "Toad" Oscillator, "Glider". (Source: http://en.wikipedia.org/wiki/Conway's_Game_of_Life)
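The four rules can be captured in a few lines of software. The following is a minimal Python sketch for illustration only, not our hardware implementation; it assumes that cells beyond the edge of the grid are always dead, and the function names are our own for this example.

```python
def count_neighbours(grid, r, c):
    """Count live cells among the eight neighbours of (r, c).

    Assumption: cells outside the grid are treated as dead.
    """
    rows, cols = len(grid), len(grid[0])
    total = 0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if (dr, dc) == (0, 0):
                continue  # skip the cell itself
            rr, cc = r + dr, c + dc
            if 0 <= rr < rows and 0 <= cc < cols:
                total += grid[rr][cc]
    return total


def step(grid):
    """Return the next generation of a grid of 0/1 cells."""
    new_grid = []
    for r in range(len(grid)):
        new_row = []
        for c in range(len(grid[0])):
            n = count_neighbours(grid, r, c)
            if grid[r][c]:
                # A live cell survives only with two or three live neighbours.
                new_row.append(1 if n in (2, 3) else 0)
            else:
                # A dead cell comes alive with exactly three live neighbours.
                new_row.append(1 if n == 3 else 0)
        new_grid.append(new_row)
    return new_grid


# The simple oscillator from the figure: a row of three cells flips
# between horizontal and vertical on every generation.
blinker = [[0, 0, 0],
           [1, 1, 1],
           [0, 0, 0]]
```

Applying `step` to `blinker` once gives the vertical arrangement, and applying it again returns the original pattern.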

Direct Digital Synthesis

A Direct Digital Synthesizer (DDS) is a type of frequency synthesizer used for creating arbitrary waveforms from a single, fixed-frequency reference clock. In our project, we used this kind of synthesizer to generate the tones we wanted to play in real time. We experimented with a few different direct digital synthesis techniques, but they all relied on the same basic principles. The following is a basic diagram of a standard DDS:

The Frequency Control Register and the Numerically Controlled Oscillator (NCO) together output a Pulse-Code Modulation (PCM), Pulse-Width Modulation (PWM) or Pulse-Density Modulation (PDM) digital signal. For this project, we implemented these modules using Finite State Machines (FSMs) on the FPGA (described in detail later). The Reference Oscillator provides the clock that synchronizes the whole synthesizer. We used the onboard 27 MHz and 50 MHz crystals and a Phase-Locked Loop (PLL) in the FPGA to derive the desired clocks. Since the output at this point is a digital signal, a Digital-to-Analog Converter (DAC) converts it into the analog domain. The Wolfson WM8731 audio codec on the DE2 board, with its built-in Low-Pass Filter (LPF), does the job of the last two blocks.
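The principle behind the Frequency Control Register and NCO can be sketched in software as a phase accumulator that indexes a sine table. The Python model below is only illustrative: the accumulator width, table size and sample rate are assumed values chosen for the example, not the parameters of our design.

```python
import math

ACC_BITS = 32         # phase accumulator width (assumed for this sketch)
TABLE_BITS = 8        # sine table holds 2**8 = 256 entries (assumed)
SAMPLE_RATE = 48_000  # output sample rate in Hz (assumed)

# One full period of a sine wave, scaled to signed 16-bit PCM values.
SINE_TABLE = [round(32767 * math.sin(2 * math.pi * i / 2**TABLE_BITS))
              for i in range(2**TABLE_BITS)]


def tuning_word(freq_hz):
    """Phase increment per sample that produces freq_hz at SAMPLE_RATE."""
    return round(freq_hz * 2**ACC_BITS / SAMPLE_RATE)


def dds_samples(freq_hz, n):
    """Generate n PCM samples of a sine tone at freq_hz."""
    phase = 0
    increment = tuning_word(freq_hz)
    samples = []
    for _ in range(n):
        # The top TABLE_BITS of the accumulator select the table entry.
        samples.append(SINE_TABLE[phase >> (ACC_BITS - TABLE_BITS)])
        # The accumulator wraps around, just like a fixed-width register.
        phase = (phase + increment) & (2**ACC_BITS - 1)
    return samples
```

With these assumed values, a 12 kHz tone advances the phase by exactly a quarter period per sample, so `dds_samples(12000, 4)` steps through the zero crossing, positive peak, zero crossing and negative peak of the table.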

Logical Structure

A high-level view of our system is presented below:

Our goal from the outset was to design a system that allowed a user to interact with Conway's Game of Life in much the same way as the Flash version included above. Additionally, we wanted the user to be able to easily manipulate both the automaton and its mapping to audio in order to produce an interesting musical signal which could be played with basic speakers. As can be seen above, all of the control logic (both hardware and software) was implemented on the Altera DE2 Development Board. An external VGA monitor allowed the user to see the automaton as it evolved and manipulate it with ease via a simple keyboard interface. Finally, a pair of basic speakers was attached so that the music could be played in real time as the automaton evolved.

The hardware synthesized on the FPGA itself was broken into three logical pieces: automaton generation, audio synthesis and the user interface. The automaton generation hardware simply calculated the next state of the Game of Life given its current state, and updated all cell values in memory. Additionally, this hardware supported adding objects or even individual cells to the screen when the game was "paused". The audio synthesis hardware, as mentioned earlier, created multiple tones based on state information from the cellular automaton and routed them to the audio codec for playback on external speakers. Finally, the user interface consisted of many small hardware modules, the most significant of which was the NIOS processor. All of the key presses on the keyboard were routed to the NIOS, which then drove the appropriate control lines for each of the affected hardware modules. Each of these pieces of our design is described in more detail later on in the report. An image of our setup is provided below:

Hardware & Software Tradeoffs

One of the main hardware tradeoffs that we made in designing our final system involved how to display user interface features without significantly reducing bandwidth to memory. More specifically, we originally considered storing both the automaton state information and user interface features (e.g. cursor, scan bars, etc) in memory, but we were concerned about dividing read/write access in time between the VGA controller, automaton state machine and the user interface control blocks. To avoid this problem, we made a major tradeoff by choosing not to store any of the user interface features in memory, but rather to multiplex the signal to the VGA controller in order to determine whether a pixel should come from memory (i.e. the automaton) or from one of the user interface generation blocks.

A second major tradeoff that we made involved choosing to use the NIOS for interpreting the signals from the keyboard as opposed to a hardware state machine. Initially, we included the NIOS in our design because we saw it as a simple way to centralize control of all the other hardware blocks. Moreover, we thought that it might be interesting for the NIOS to manage an independent user interface (e.g. a sidebar) stored in the SRAM. The goal was to divide the screen into portions loaded from SRAM and portions loaded from M4K blocks. However, later on in the design process we decided it would be best for the full screen to be used in displaying the automaton, meaning that the NIOS's role in our design became limited to solely interpreting the keyboard signals. This simple task could have been implemented in hardware, but keeping it in software meant that changes to controls or basic timing parameters (e.g. the evolution rate of the automaton) involved only modifying a small base of C code (which had a much shorter compile time than the FPGA design). Moreover, keeping the NIOS software in the design offered an additional avenue for debugging problems (e.g. we could leverage the built-in serial communication).

The final major hardware tradeoff we made was choosing to use direct digital synthesis methods instead of another kind of instrumental modeling to produce our musical tones. We thought from the outset that with all of the overhead required for generating the automaton and user interface, we would have very little physical space available on the FPGA for a more complex synthesis method (e.g. nodal drum synthesis as in Lab 3). We also believed that a simple, non-instrumental tone synthesis would allow for a greater variety of tones and a more unique type of music. In retrospect, the concern about space was probably unjustified, as our final design used under 30% of the total board resources. Even so, the simpler approach allowed the user to switch between tone generation modes to find the one they found most interesting.

Standards, Copyrights & Patents

Our project makes use of the common VGA Protocol and PS2 hardware interface. We also used code generated by Altera's software and from a previous 5760 project. See Intellectual Property Considerations in our Conclusion section for more specific details.

Hardware Design

A high-level illustration of our hardware design and organization is presented below:

Starting on the left-hand side of the diagram above, we see that the GOLStateMachine is responsible for updating the M4K Memory with the new states of the cellular automaton, using control signals from the NIOS. These cells are then read out to the VGAController, but the signal is first piped through a series of mostly combinational blocks. The first of these is the MakeCursor block, which adds a cursor to the video stream based on position data from the NIOS. After the cursor is added, the signal is run through the Draw Interface block, which adds borders and any other interface features. Finally, the signal enters a series of 3 DrawScanBar modules, which place a scan bar at a location partially controlled by input from the NIOS. After all of these objects have been added to the video stream, the VGA controller outputs the corresponding pixels to the VGA interface. It's important to note that all of the visual elements used in our project, excluding the automaton itself, are combinationally added to the video. Our main reasoning for this is that we wanted to avoid the complexity associated with mixing the automaton state data with other unrelated objects (like the scan bars). While this may have contributed to intermittent jitter problems (discussed later), in the end there were no observable video artifacts.

In addition to this video chain, our final design also consisted of a KeyboardController (pictured at the top) which was responsible for interpreting signals sent from a keyboard for user control. The NIOS processor accepted these signals and was responsible for updating the relevant hardware blocks (e.g. changing the speed in the GOLStateMachine). Finally, the four blocks shown at the bottom of the diagram are the hardware responsible for creating the audio signal. PlayRowSound took each of the scan bar positions as input, and signaled the DDS module to play a tone corresponding to the number of cells in that row (based on a user-selectable mapping). Each of the 3 corresponding DDS modules then took this information and synthesized the sound in real time using a fixed sine table (instantiated as a ROM). The audio from each of these DDS modules was then summed together and sent to the audio codec, which passed it out via the 3.5mm jack on the DE2 board to a pair of speakers.

The following sub-sections will describe each of these hardware elements in more detail.

GOL State Machine

The diagram below depicts the state-machine used for updating the automaton:

Beginning on the left-hand side, the state machine sits in the reset state while KEY0 on the DE2 board is pressed. In this state all registers (e.g. counters) are reinitialized and the screen is populated with a few demonstration objects to make the start-up more interesting. From here the state machine moves directly into the init state, which similarly initializes a few other values that allow the state machine to read from memory on the next cycle. In the read state an entire line (or row) of the automaton is read out of memory one cell at a time. Once the End Of Line (EOL) is reached, the state machine moves into the update state, where each cell in the "current line" is updated one at a time based on its total number of live neighbors. Finally, once the line has been completely updated, the state machine moves into the write state, which writes back the oldest line to memory. The process is then repeated beginning with the reading of a new line out of memory. Once the End Of Screen (EOS) is reached, the hardware moves into the done state, where it waits for a pre-defined amount of time (set by the user) using a simple counter. Once the counter runs down, the calculation of the next state of the screen is initiated by returning to the read state.

The read/update/write process is difficult to visualize, so the diagram below is provided to illustrate this sequence:

In the diagram above, the red cell is the current cell being updated. Each of the green cells is one of its neighbors, meaning that the new state (alive or dead) can be calculated as follows:

New Cell Update Equation

In other words, if the cell is currently alive and has more than 3 or fewer than 2 live neighbors it dies (by overcrowding or loneliness respectively); if it was previously dead and has exactly 3 live neighbors it comes alive (as if by reproduction); otherwise it keeps its current state. This new value for cell(i,j) is placed into the holding register array shown at the bottom of the diagram. Once the entire line has been indexed through, "new line 0" is written back to memory, line 1 becomes line 0 and line 2 becomes line 1. The next line is read out of memory (in its entirety) and stored into the line 2 register array. The process is then repeated until the last line (N) is reached.

It's important to note that the done state shown in the state machine diagram above actually serves two purposes: to add a waiting period between consecutive states of the automaton and to allow the user to add cells (or clusters of cells) to the screen if the game is paused. Therefore, in the actual implementation, the done state incorporates another drawing state machine. This state machine is shown below:

The state machine begins in the done state, where it waits for a new user-selected object (which is sent from the keyboard via the NIOS processor). Once a new object is received, the state machine transitions into draw, from which it moves into a unique state corresponding to the object requested by the user. For example, if the user wants to draw a "glider", the state machine moves into a glider state where it draws the pixels that make up the glider one at a time. Once the number of pixels drawn equals the number of pixels assigned (i.e. the total number of pixels that make up the glider), the drawing is complete and the state machine returns to the done state.
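The reason for buffering lines is that the automaton is updated in place: a row's old values are still needed to compute the row beneath it, even after its new values exist. The Python sketch below models this idea in software under simplifying assumptions (cells beyond the edges are dead, and each new line is written back as soon as it is complete rather than one line later as in our hardware); the names mirror the diagram but the code is illustrative only.

```python
def step_in_place(mem):
    """Advance mem (a list of rows of 0/1 cells) by one generation in place.

    Only three rows of old state are buffered at any time, mirroring the
    line 0 / line 1 / line 2 register arrays. Assumption: cells beyond the
    edges of the grid are dead.
    """
    rows, cols = len(mem), len(mem[0])
    dead = [0] * cols
    line0 = dead              # old values of the row above the current row
    line1 = list(mem[0])      # old values of the row being updated
    for r in range(rows):
        # Read the next row out of memory before anything overwrites it.
        line2 = list(mem[r + 1]) if r + 1 < rows else dead
        new_line = [0] * cols  # holding register for the updated row
        for c in range(cols):
            n = -line1[c]      # do not count the cell itself
            for line in (line0, line1, line2):
                for cc in (c - 1, c, c + 1):
                    if 0 <= cc < cols:
                        n += line[cc]
            if line1[c]:
                new_line[c] = 1 if n in (2, 3) else 0
            else:
                new_line[c] = 1 if n == 3 else 0
        mem[r] = new_line      # write back; old values live on in line1
        line0, line1 = line1, line2  # shift the line buffers down one row
    return mem
```

Because the old copy of each row stays in a buffer until the row below it has been computed, overwriting memory as the sweep progresses does not corrupt the neighbor counts.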

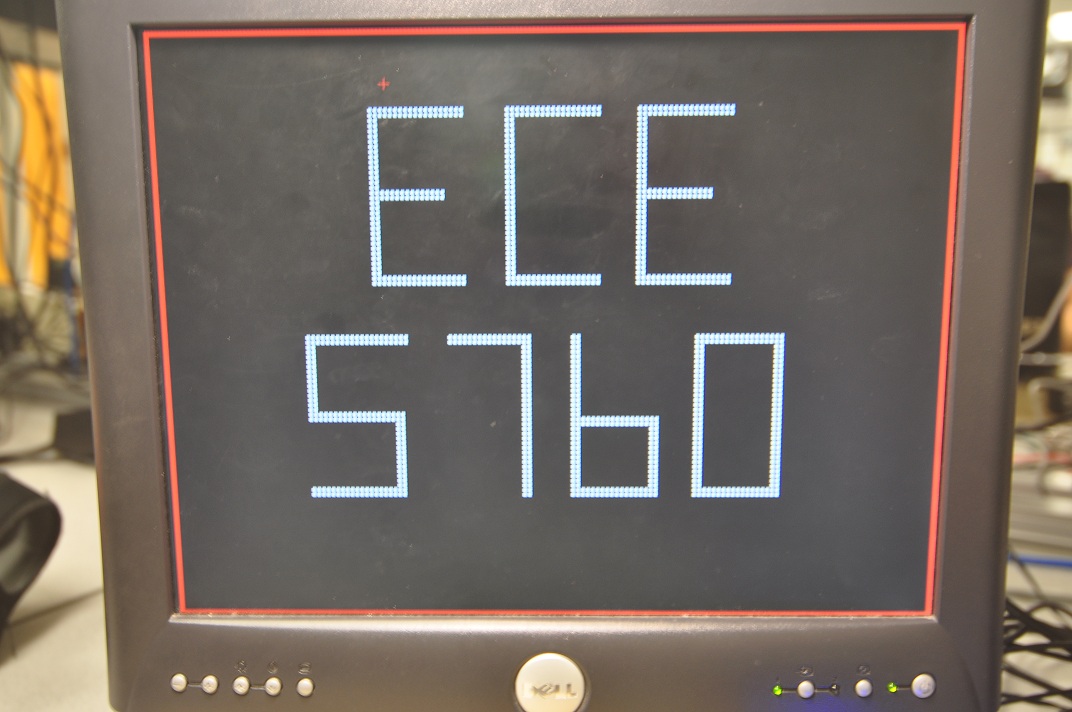

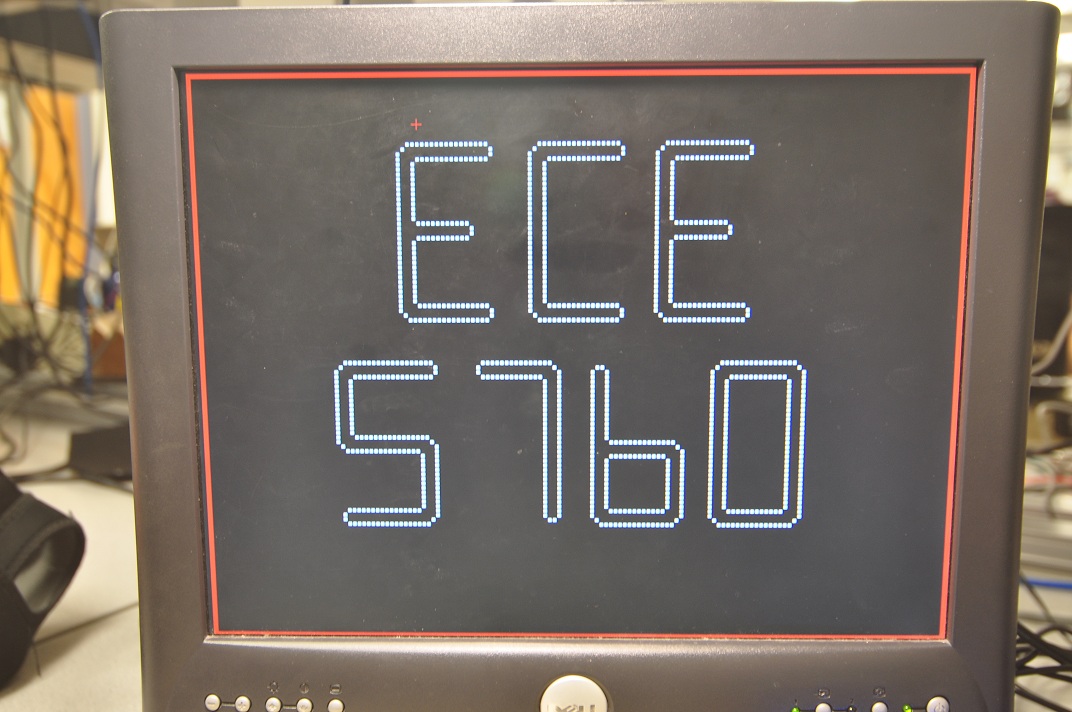

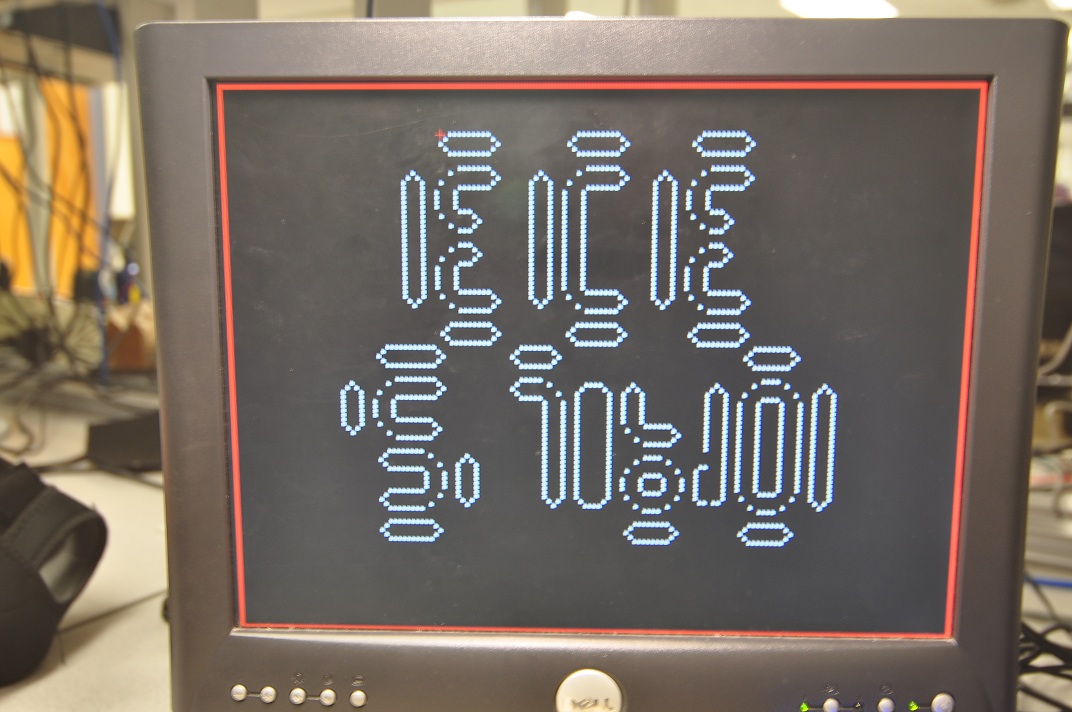

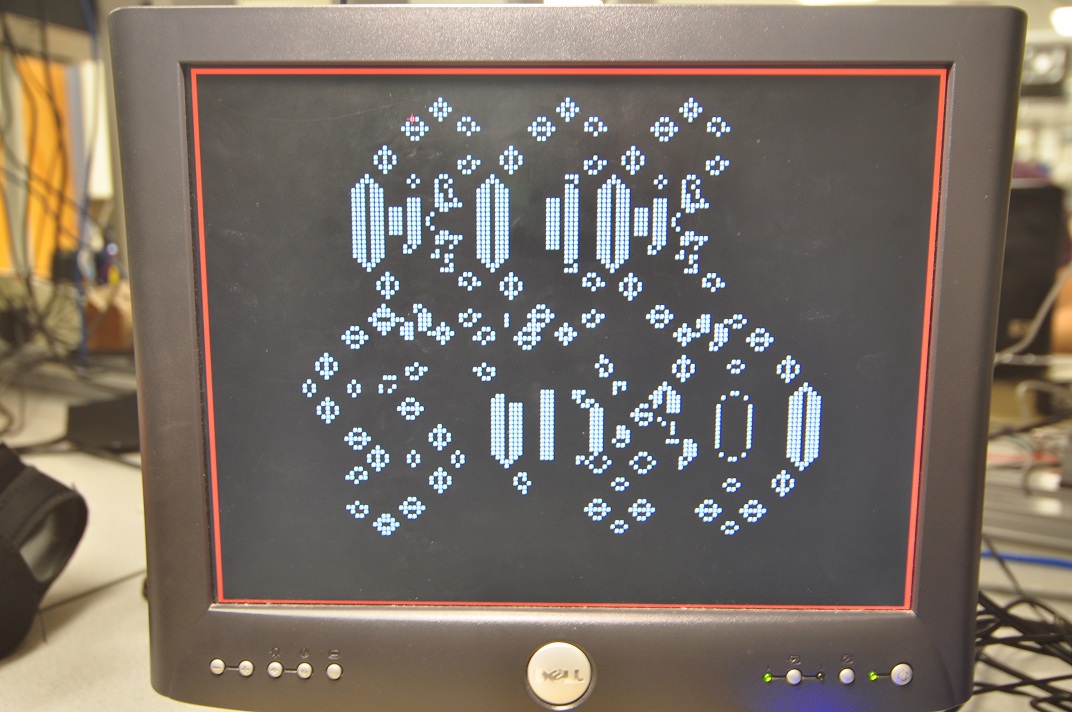

In order to draw "ECE 5760" and other objects that consisted of a large number of pixels, we used a simple MATLAB script (included with our code listing within the folder named "MATLAB Generated") that would output Verilog code which, when placed inside the Draw State Machine, would draw the object. We began by drawing the object of interest in a matrix with Microsoft Excel, and then imported the matrix into MATLAB. The script would then index through all of the cells in the matrix and write a file with the necessary lines of code to draw that object. While a very simple solution, it was important, as writing this code by hand would have been extremely tedious and time consuming (the code for drawing "ECE 5760" on the screen alone is 1096 lines).
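The general shape of such a generator can be sketched as follows. This is a Python illustration rather than our MATLAB script, and the emitted line format (including the `draw_x` and `draw_y` names) is hypothetical; it is meant only to show the idea of walking a 0/1 matrix and emitting one line of code per filled cell.

```python
def matrix_to_verilog(matrix):
    """Emit one hypothetical case-branch line per filled cell of matrix.

    Each line places a pixel at an offset from the cursor position. The
    signal names draw_x and draw_y are invented for this illustration.
    """
    lines = []
    index = 0  # running count of pixels emitted so far
    for dy, row in enumerate(matrix):
        for dx, value in enumerate(row):
            if value:
                lines.append(
                    f"{index}: begin draw_x <= cur_x + {dx}; "
                    f"draw_y <= cur_y + {dy}; end"
                )
                index += 1
    return lines


# A glider drawn in a 3x3 matrix, as one might lay it out in a spreadsheet.
glider = [[0, 1, 0],
          [0, 0, 1],
          [1, 1, 1]]
```

For the glider this yields five lines, one per live cell; an object the size of "ECE 5760" produces many more, which is why generating the code was worthwhile.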

Make Cursor, Draw Interface & Draw Scan Bars

As described earlier, the logic for drawing everything except for the automaton was placed in line with the automaton output so as to isolate the data. The structures of the MakeCursor, DrawInterface and DrawScanBars modules were all very similar, and each was usually composed of a few combinational lines that triggered off of the VGA controller position lines. An example of the general structure implemented is illustrated below:

The equation above shows the basic combinational logic used to determine any given pixel value. By chaining this logic together, we can effectively modify the screen to show anything that we want without having to store the pixel value in memory. For example, in the case of the cursor, the MakeCursor module accepts a position and uses that as the condition shown in the equation above. If the VGA controller is currently requesting a pixel for a location where the user's cursor is supposed to be placed, the module will drive the VGA color lines with the appropriate values for the cursor; otherwise it will pass through the automaton data from memory.
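The chaining can be modeled in software as a series of functions that each either override the incoming pixel or pass it through unchanged. In the Python sketch below, the colors, the 4x4-pixel cell size used for the cursor and the assumption that a scan bar covers one full row of cells are all choices made for illustration; they are not taken from our Verilog.

```python
BLACK, WHITE, RED, GREEN = "black", "white", "red", "green"  # assumed colors


def make_cursor(pixel_in, x, y, cur_x, cur_y):
    """Override the pixel with the cursor color inside the cursor's cell."""
    return RED if (x // 4, y // 4) == (cur_x, cur_y) else pixel_in


def draw_scan_bar(pixel_in, y, bar_row, enabled):
    """Override the pixel with the bar color on the scan bar's row."""
    return GREEN if enabled and y // 4 == bar_row else pixel_in


def pixel_out(cell_alive, x, y, cur_x, cur_y, bar_row, bar_enabled):
    """Chain the stages: automaton data first, overlays afterwards."""
    pixel = WHITE if cell_alive else BLACK            # value from memory
    pixel = make_cursor(pixel, x, y, cur_x, cur_y)    # stage 1: cursor
    pixel = draw_scan_bar(pixel, y, bar_row, bar_enabled)  # stage 2: bar
    return pixel
```

Because each stage only decides between its own color and its input, stages can be added or removed without touching the automaton memory, and later stages take priority wherever they overlap earlier ones.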

Keyboard Controller

We chose to use an external PS2 keyboard for controlling the Game of Life and associated audio. To do this we borrowed a portion of Skyler Schneider's source code from last year's 5760 class. His code sets a signal high when a new keyboard "event" (i.e. a key press) is ready for reading. At this point we wrote an interface that would clock the event into a register for the NIOS to read. Once the NIOS had properly read the event it would set another signal high signifying that it was finished with the event and it could be cleared from the registers. This process is illustrated in the timing diagram below:

Looking at the timing diagram above, we see that eventReady is set high by the keyboard controller at time t=a. At this time a new value is available on the eventType bus that comes out of the keyboard controller. Our instantiated hardware then stores this value into the newEvent register (which serves as a buffer in case the NIOS isn't able to read the event by the time that the keyboard controller de-asserts the eventType line). Then at t=b, our hardware sets newEventReady high, signaling to the NIOS that a keyboard event is available for processing. Finally, at t=c the NIOS processor sets the eventHasBeenRead line high, signaling to the hardware that it has processed the keystroke and the buffer can be cleared in preparation for the next keystroke.
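The handshake can be summarized with a small software model. The Python class below is a sketch of the behavior described above, not our hardware; in particular, what happens when a second event arrives before the first has been read is an assumption (here the newer event is dropped).

```python
class EventBuffer:
    """Software model of the register between the keyboard and the NIOS."""

    def __init__(self):
        self.new_event = None          # buffered event type
        self.new_event_ready = False   # high while an event awaits the NIOS

    def on_event_ready(self, event_type):
        """Keyboard side (t=a, t=b): latch the event and flag it as ready.

        Assumption: an event arriving while another is pending is dropped.
        """
        if not self.new_event_ready:
            self.new_event = event_type
            self.new_event_ready = True

    def read_and_ack(self):
        """NIOS side (t=c): read the event, then clear the buffer."""
        if not self.new_event_ready:
            return None
        event = self.new_event
        self.new_event = None
        self.new_event_ready = False   # models the event-has-been-read pulse
        return event
```

A typical exchange is one call to `on_event_ready` from the keyboard side followed by one call to `read_and_ack` from the processor side, after which the buffer is free for the next keystroke.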

NIOS Processor

When we began the project, we anticipated using the NIOS processor to control different parts of the user interface and essentially coordinate communication between hardware modules. We also saw the NIOS as offering an easy terminal interface for debugging. However, as our design evolved, we decided to use the entire screen for the automaton and simply place all the user control options on the keyboard. As a result, the NIOS became solely responsible for taking the new keyboard events (as discussed in the previous section) from the keyboard controller and driving the appropriate control signals. One such example is the cursor position on the screen. When the user presses one of the arrow keys, the event is transferred to the NIOS, where the corresponding x,y position variables are updated. These variables are tied to PIO ports which in turn drive the MakeCursor module to update the position of the cursor on the screen. A list of keyboard keys and their corresponding actions is provided in the table below:

| Key Pressed | Action |

| Space | Pause the Game of Life |

| Backspace | Clear all cells (must be paused) |

| Page Up | Speed up the evolution rate |

| Page Down | Slow down the evolution rate |

| Arrow Keys | Move cursor |

| A, W, S, Z | Move cursor but in larger steps |

| F1 | Enable scan bar 0. Pressing it twice more incrementally decreases scan speed (third press disables it) |

| F2 | Same as F1 for scan bar 1 |

| 1 | Toggle scan bar 0 between 2 octaves |

| 2 | Same as pressing '1' but for scan bar 1 |

| 3 | Same as pressing '1' but for scan bar 2 |

| F3 | Same as F1 for scan bar 2 |

| g | Draw a glider (if paused) |

| o | Draw small oscillator (if paused) |

| l | Draw large oscillator (if paused) |

| e | Draw exploder (if paused) |

| p | Draw glider gun (if paused) |

| d | Draw a single cell (if paused) |

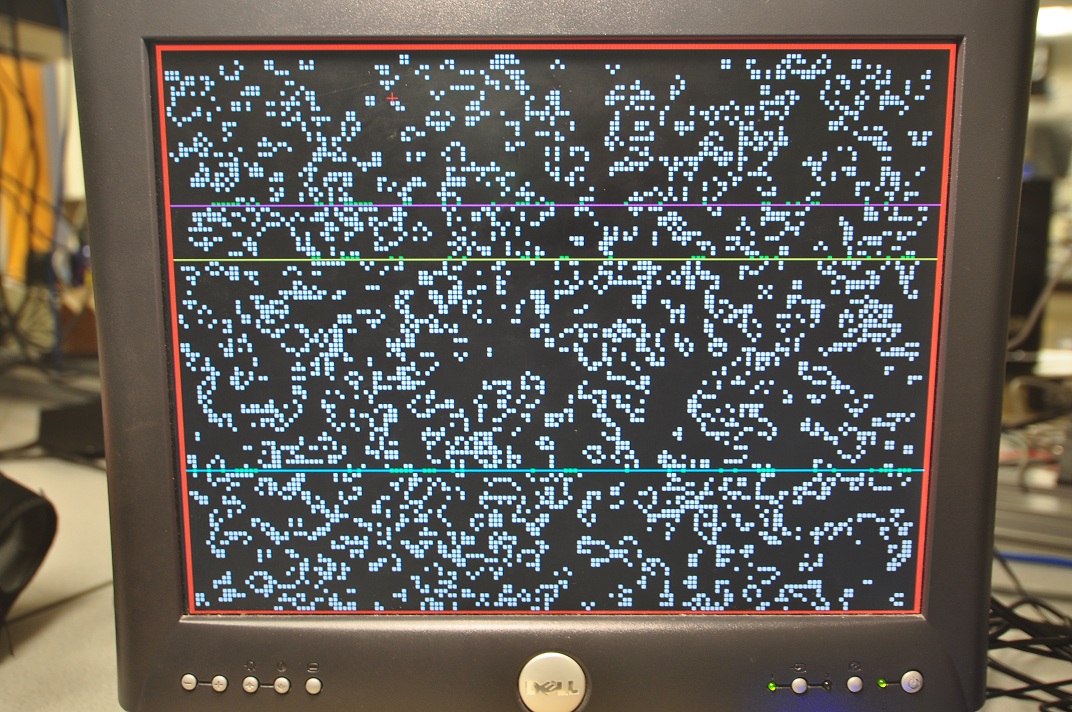

| r | Fill screen with randomly generated cells (if paused) |

| c | Draw chaotic structure (if paused) |

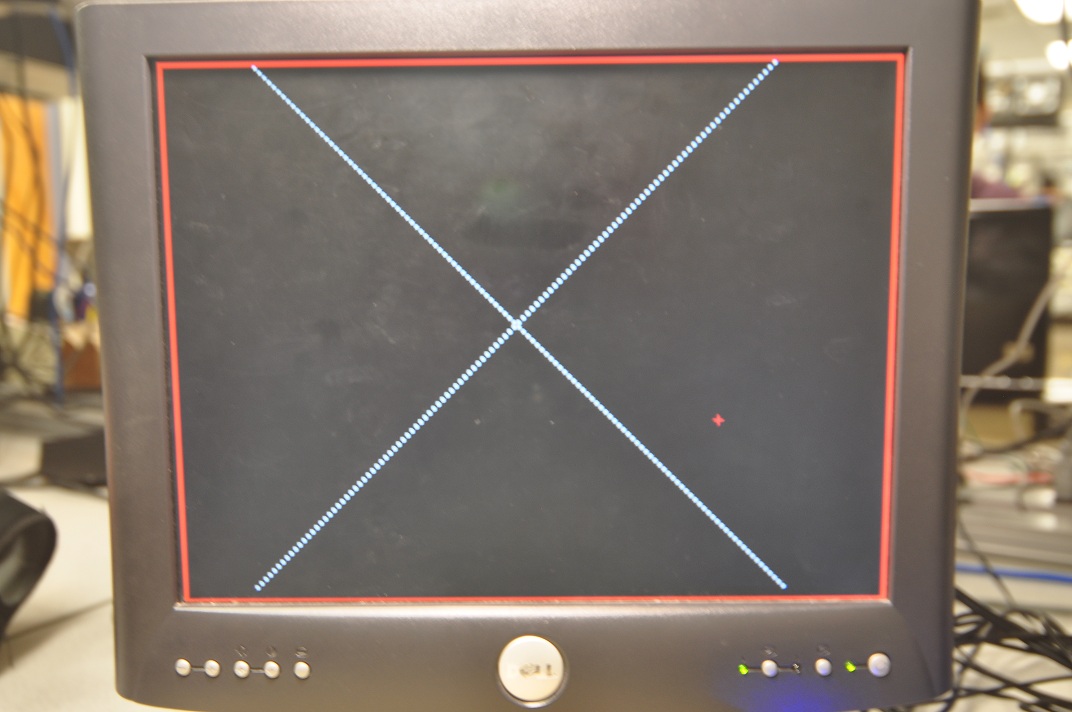

| x | Draw x on the screen (if paused) |

| 0 (numpad) | Number of cells alive in row determines tone (higher tone = more cells) |

| 1 (numpad) | Low-order bits of number of cells determines tone |

| 2 (numpad) | Number of cells alive in row determines probability a tone is played corresponding to the low-order bits |

| 3 (numpad) | Freezes all scan bars in place and plays tones with same mapping as pressing key 2 |

| 4 (numpad) | Freezes all scan bars in place and plays tones with same mapping as pressing key 1 |

| Home | Resets all scan bars and evolution speed of the automaton |

| `/~ | Draws "ECE 5760" on the screen with cells (if paused) |

M4K Memory and Video Mapping

For our project, we chose to use a 32KB single-bit addressable M4K memory module. In order to present the automaton in such a way that the user could discriminate individual cells, we needed to develop a scheme that would efficiently map our current state (stored in M4K blocks) to the VGA address space. The image below shows our general approach:

Our general approach was to simply coarsen the pixel mapping. In other words, a single cell mapped to a 4x4 block of pixels on the screen (meaning we had a maximum resolution of 160x120 cells). However, it's important to note that when the VGA controller actually read this data out, it only mapped the cell to pixels whose lower-order bits were less than '11' (3). This last set of pixels was left black in order to provide visible spacing between the cells' edges. We found that this simple strategy was more than sufficient to allow the user to make out individual cells on the screen. The assignment statement below demonstrates how we did this in hardware:

It should be noted that in our actual implementation, we triggered off of VGA_X[1:0] == 2'b01 for drawing the vertical portion of the cell borders. We found that because of the pipelined nature of the M4K memory and the negative-edge clocking that we used for the VGA controller, this was necessary in order to obtain the structure described here.
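The idealized mapping (ignoring the pipeline offset just mentioned) can be sketched in a few lines of Python. The row-major memory layout is an assumption made for this example, as are the function names.

```python
CELLS_X, CELLS_Y = 160, 120  # 640x480 pixels divided into 4x4-pixel cells


def cell_address(vga_x, vga_y):
    """Memory address of the cell under a pixel (row-major layout assumed)."""
    return (vga_y >> 2) * CELLS_X + (vga_x >> 2)


def pixel_on(mem, vga_x, vga_y):
    """True if the pixel should be lit.

    Pixels whose two low-order x or y bits equal 3 are left black, which
    produces the one-pixel gap between neighboring cells.
    """
    in_gap = (vga_x & 3) == 3 or (vga_y & 3) == 3
    return bool(mem[cell_address(vga_x, vga_y)]) and not in_gap
```

Dropping the two low-order bits of each coordinate is what makes sixteen pixels share one memory bit, so a live cell appears as a 3x3 lit square with a dark border on its right and bottom edges.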

DDS Module

The first basic sound synthesis technique that we implemented was a simple single tone sine wave generation with a ramping function to make the sound less harsh.

It's important to note that without basic ramping, playing the tone will cause unpleasant "clicking" noises on the speakers, as you're effectively applying an impulse signal to which the hardware responds. This method is easy to implement, so we used it for testing early on in our design. The state machine we used to implement this technique is shown below:

In the 'calc' state, t and ramp(t) are updated with a fixed increment and sin(wp*t) is looked up from a sine table. The state machine then moves to the 'pause' state, where it waits for the audio_CLK and stores the output in the output buffer for the I2C interface to read. Once a new audio clock cycle has begun, the state machine returns to the 'calc' state. When a note finishes playing, the state machine goes to the 'done' state to wait for the next note to play (as signaled from the PlayRowSound module). The theoretical waveform produced by using this technique is shown below:

However, as we discovered, a pure tone does not sound very interesting and a simple ramping function is inadequate. Our second idea was to use a square wave, sawtooth wave or pulse wave, all of which are rich in harmonics, and pass it through a digital low-pass filter to do some wave shaping before being enveloped. The following block diagram illustrates the implementation:

Again, after trying this technique we found that the tones were still a bit too harsh to be considered "musical". Our third and final implementation used a sine wave modulated with another sine wave:

We chose this synthesis method since it can be easily implemented on top of the basic DDS module we already had. In addition, it produces a richer set of lower-order harmonics. Moreover, it is easy to change the harmonic components by changing the modulation frequency. The state machine used for synthesizing these tones is presented below:

This state machine differs only slightly from the previous one. Because this synthesizer needs to look up the sine table twice, it has one additional state, 'calc1', which performs the second look-up. The plots below show the theoretical results of using this technique:

We can see that the FM wave is rich in lower order harmonics. The harmonic component can be easily changed by tweaking the modulation term.
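A sine wave modulated by another sine wave can be written as y(t) = sin(2π·fc·t + I·sin(2π·fm·t)), where fc is the carrier frequency, fm the modulation frequency and I the modulation index. The Python sketch below evaluates this expression directly; the symbol names, default sample rate and use of floating point are assumptions for illustration, whereas the hardware works from a fixed sine table.

```python
import math


def fm_sample(t, carrier_hz, mod_hz, mod_index):
    """One sample of a sine carrier phase-modulated by a second sine."""
    # First look-up: the modulating sine.
    inner = math.sin(2 * math.pi * mod_hz * t)
    # Second look-up: the carrier, with its phase offset by the modulator.
    return math.sin(2 * math.pi * carrier_hz * t + mod_index * inner)


def fm_tone(carrier_hz, mod_hz, mod_index, n, sample_rate=48_000):
    """Generate n samples of the FM tone at the given sample rate."""
    return [fm_sample(i / sample_rate, carrier_hz, mod_hz, mod_index)
            for i in range(n)]
```

The two calls to `math.sin` correspond to the two table look-ups in the state machine. Setting `mod_index` to zero reduces the output to a pure sine tone, and increasing it moves more energy into the sidebands around the carrier.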

Envelope Function

The basic envelope function that we originally used looks like the following graph:

It is just a simple ramp up and ramp down. This removes the harshness of just playing a pure tone but still sounds a little bit dull. The actual envelope function we decided to use is much more complicated. It has an attack stage which ramps up very quickly. Then, it falls back a little bit in the dip stage. After that, it holds the strength for a period of time and decays away. By modifying the parameters of each stage, we can get tones that vary from an organ-like sound to a very techno sound:
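A four-stage envelope of this kind can be sketched as a piecewise-linear function of time. In the Python example below, every duration and level is an assumed placeholder value rather than a parameter from our design; the point is only the shape of the attack, dip, hold and decay stages.

```python
def envelope(t, attack=0.01, dip=0.02, hold=0.2, decay=0.1, hold_level=0.7):
    """Amplitude (0.0 to 1.0) at time t seconds after the note starts.

    All default durations (seconds) and the hold level are assumptions
    chosen for illustration.
    """
    if t < 0:
        return 0.0
    if t < attack:                 # attack: fast ramp from 0 up to 1
        return t / attack
    t -= attack
    if t < dip:                    # dip: fall back from 1 to the hold level
        return 1.0 - (1.0 - hold_level) * t / dip
    t -= dip
    if t < hold:                   # hold: keep the level constant
        return hold_level
    t -= hold
    if t < decay:                  # decay: ramp from the hold level to 0
        return hold_level * (1.0 - t / decay)
    return 0.0                     # note has finished
```

Multiplying each synthesized sample by `envelope(t)` shapes the note; a long hold with a slow decay leans towards an organ-like sound, while short stages give a more percussive result.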

Tone Choice

For the tones to be interesting, we needed to find a set of tones that would sound harmonious when played together. We did not need a lot of tones, but we wanted the tones to cover a relatively wide frequency range. So we did not use a traditional major or minor tone set; instead, we chose a pentatonic scale spanning two octaves. A pentatonic scale is a musical scale with five notes per octave, in contrast to a heptatonic (seven-note) scale such as the major scale and minor scale. Thus, we have ten tones in all. We also prepared two sets of tones, namely pentatonic major and pentatonic minor (which can be toggled between using designated keys).
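For reference, the ten frequencies of a two-octave pentatonic set can be computed as shown in the Python sketch below. It assumes equal temperament and takes the root frequency as a parameter; the 220 Hz root in the example is an arbitrary choice, not the root used in our design.

```python
# Semitone offsets from the root for the two standard pentatonic scales.
MAJOR_PENTATONIC = (0, 2, 4, 7, 9)
MINOR_PENTATONIC = (0, 3, 5, 7, 10)


def pentatonic_tones(root_hz, intervals, octaves=2):
    """Frequencies of a pentatonic scale over several octaves.

    Assumes equal temperament: each semitone is a factor of 2**(1/12).
    """
    return [root_hz * 2 ** (octave + semitone / 12)
            for octave in range(octaves)
            for semitone in intervals]


# Example: ten tones of the major pentatonic scale on an assumed 220 Hz root.
major_tones = pentatonic_tones(220.0, MAJOR_PENTATONIC)
```

Each frequency could then be converted to a phase increment for the DDS module, so that a tone number from the mapping logic selects one of the ten entries.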

Tone Mapping

Now that we had a DDS module that could produce a set of pentatonic tones, we needed to somehow map the Game of Life pattern to music. The first interesting scheme that we came up with (with Bruce's help) was to treat the Game of Life as a big state transition matrix. Each tone represents a state in a state vector, and a tone is played according to the state probability, just as in a Markov chain:

In the end, we did not use this mapping because it is not very direct and thus not that interesting for the user to watch. Our final implementation of tone mapping used a scan bar that sweeps through the screen at one of 3 different speeds. When the scan bar hits a new line, a note is played. The tone and length of the note are determined by the cell count and the speed of the scan bar. Each of the schemes we tried is described below.

First Try (Mode 0)

The first try was to directly map the number of live cells in a row to the tone that is played. For example, if we have 15 cells in a row and 4 tones, then tone1 is played when 0-3 cells are alive, tone2 is played when 4-7 cells are alive, and so on. This is an easy and straightforward mapping, but it has its own problems; for example, when the screen is densely populated, the tone diversity is low.

Mode 1

Due to the nature of game of life, we usually do not have many cells in a row, so the higher order tones will seldom be played. To address this problem, we remapped the tones using the lower order bits. So if we have the same situation of the 15 cells a row and 4 tones, the mapping will look like the following:

| Cell Count | 0 | 1 | 2 | 3 | 4 | 5 | 6 |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Tone No. | 1 | 2 | 3 | 4 | 1 | 2 | 3 |

This mapping ensures more evenly distributed mapping of the tones so all tones will be played with relatively equal chance.

Mode 2

This mode uses the same tone mapping as Mode 1, but we added some randomness to tone playing (i.e. the tone isn't always played when a scan bar hits a new line of cells). The probability that a tone is played is the live cell count divided by the total cell count. This mode sounds most interesting when there are more live cells.

Mode 3

In this mode, the scan bar is fixed at one position. The length of the tone and the speed of playing are still determined by the speed the scan bar would have if it were moving. The tone mapping is the same as in Mode 1. As in Mode 2, whether a note is played is randomized using the same scheme.

Mode 4

Mode 4 is the same as Mode 3 but does not have the randomness, so a note will always be played. Interestingly, we found that just fixing the bar and letting the evolution of the Game of Life determine the tone sounded the most interesting.
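The three underlying mappings (Modes 0, 1 and 2; Modes 3 and 4 reuse them with a stationary scan bar) can be sketched in Python as follows. Tone numbers are 1-based to match the table above. Using a modulo operation for the "low-order bits" mapping is an assumption that reproduces the example table; the hardware may select the bits differently.

```python
import random


def mode0_tone(count, cells_per_row, num_tones):
    """Mode 0: split the possible counts into equal ranges, one per tone."""
    index = count * num_tones // (cells_per_row + 1)
    return min(index, num_tones - 1) + 1


def mode1_tone(count, num_tones):
    """Mode 1: cycle through the tones as the count increases."""
    return count % num_tones + 1


def mode2_tone(count, cells_per_row, num_tones, rng=random):
    """Mode 2: Mode 1 mapping, played with probability count / cells_per_row.

    Returns None when no tone is played for this line.
    """
    if rng.random() < count / cells_per_row:
        return mode1_tone(count, num_tones)
    return None
```

With 15 cells per row and 4 tones, `mode0_tone` returns tone 1 for counts 0-3 and tone 2 for counts 4-7, while `mode1_tone` gives the sequence 1, 2, 3, 4, 1, 2, 3 for counts 0 through 6, matching the table in the Mode 1 description.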

Software Design

As mentioned earlier, initially we had intended to use the NIOS to control a separate user interface on the screen (possibly run out of SRAM), however as our design progressed we decided that the UI wasn't necessary or particularly useful. That being the case, we decided to keep the NIOS but just to use it for processing keyboard input and sending appropriate control signals to other hardware modules. Using the NIOS for this task gave us added flexibility in designing and testing, as we could quickly modify different timing parameters and key mappings (without needing to re-compile the entire hardware design). An image showing all of the NIOS PIO used to interface with other custom hardware modules is shown below:

The main two signals that the NIOS takes in are the newEvent and new_event_ready which are used to process the keyboard signals (as described in the previous section). Once a new key press is read, the value is passed into a switch statement which decodes the event type and sets the corresponding signals. For example, if the user pressed one of the arrow keys, the software would update either the cur_x or cur_y signal which would then be used by the hardware to move the cursor position on the screen. The purpose for each of the PIO outputs from the NIOS is summarized in the table below:

| Signal | Description |
| --- | --- |
| [9:0] cur_x, cur_y | Determine the cursor position on the screen |
| play | Plays/pauses the evolution of the automaton |
| [23:0] period_gol | Sets the speed of evolution of the automaton |
| [3:0] obj | Encodes which new object is to be drawn (e.g. glider) |
| en0,en1,en2 | Enables for up to 3 scan bars |
| s0,s1,s2 | Speed control for up to 3 scan bars |
| event_has_been_read | Notifies keyboard controller that key press was read |
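The decode step described above can be illustrated with a minimal sketch in C. This is not our actual NIOS source. The key codes, the `controls` struct (standing in for the PIO output registers), the object code and the halving/doubling of `period_gol` are all assumptions made for illustration. Only the signal names and bit widths come from the table.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical event codes; the real values come from the keyboard controller. */
enum key_event {
    KEY_UP, KEY_DOWN, KEY_LEFT, KEY_RIGHT,
    KEY_PLAY, KEY_FASTER, KEY_SLOWER,
    KEY_BAR0, KEY_BAR1, KEY_BAR2,   /* must stay consecutive */
    KEY_GLIDER
};

#define COORD_MAX  0x3FFu     /* cur_x and cur_y are 10 bits wide */
#define PERIOD_MAX 0xFFFFFFu  /* period_gol is 24 bits wide */
#define OBJ_GLIDER 1u         /* hypothetical 4-bit object code */

/* Stand-in for the PIO outputs; real code would write PIO registers instead. */
struct controls {
    uint16_t cur_x, cur_y;
    uint8_t  play;
    uint32_t period_gol;
    uint8_t  obj;
    uint8_t  en[3];
};

/* Decode one key event and update the corresponding control signals. */
void decode_event(struct controls *c, enum key_event ev)
{
    assert(c != NULL);
    switch (ev) {
    case KEY_LEFT:  if (c->cur_x > 0) c->cur_x--;          break;
    case KEY_RIGHT: if (c->cur_x < COORD_MAX) c->cur_x++;  break;
    case KEY_UP:    if (c->cur_y > 0) c->cur_y--;          break;
    case KEY_DOWN:  if (c->cur_y < COORD_MAX) c->cur_y++;  break;
    case KEY_PLAY:  c->play = !c->play;                    break;
    case KEY_FASTER:                 /* shorter period = faster evolution */
        c->period_gol >>= 1;
        if (c->period_gol == 0) c->period_gol = 1;
        break;
    case KEY_SLOWER:                 /* clamp to the 24-bit signal width */
        c->period_gol <<= 1;
        if (c->period_gol > PERIOD_MAX) c->period_gol = PERIOD_MAX;
        break;
    case KEY_BAR0: case KEY_BAR1: case KEY_BAR2:
        c->en[ev - KEY_BAR0] ^= 1u;  /* toggle one scan-bar enable */
        break;
    case KEY_GLIDER: c->obj = OBJ_GLIDER;                  break;
    default: break;                  /* ignore unmapped keys */
    }
}
```

Keeping the mapping in software in this way is what allowed key bindings and timing parameters to be changed without re-compiling the hardware design.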

Testing & Debug

VGA Image Jitter

One of the main problems that we encountered during debugging was an intermittent jittering and tearing of the image. The problem appeared to increase in severity (i.e. magnitude of the jitter) when more "live" cells were present on the screen, although having more cells on the screen could simply have made the problem more visible. The problem came and went even after changing lines of code that were unrelated to creating the image. For this reason, we believed that the problem was rooted in the place and route performed by the compiler. More specifically, we thought that the VGA controller was sometimes placed and routed in such a way that it didn't meet the critical timing deadlines of the VGA monitor. About halfway through the project, we discovered that changing the clocking on the M4K memory (which was read by the VGA controller) from negative edge triggered to positive edge triggered seemed to resolve the problem. However, after another week or so of testing and development the problem re-appeared. We then switched the clocking back to the negative edge and the problem disappeared again. From this point forward, we didn't see any evidence of the jitter problem even as we continually re-compiled our design. In the future, this problem could probably be fixed permanently by using the Chip Planner tool built into Quartus to fix the VGA controller in a position that minimizes propagation delays along its routes.

NIOS

Earlier in our testing, we found that sometimes after we re-compiled the entire design, the NIOS processor wouldn't respond to keyboard input and in fact would occasionally send (what appeared to be) random characters to the JTAG terminal. With Bruce and Darbin's help, we discovered that Quartus was missing the timing constraints file (a critical warning which we had naively been ignoring). After we generated this file and re-compiled, the problem disappeared.

Downloading the Design with the Altera Monitor

At points in our project, the Altera Monitor would successfully download the design onto the FPGA, but when we tried to load code onto the processor it would report an error saying that no processors were detected. The only way we found to resolve this issue was to close the program, reopen it, load the "time_limited_sof" onto the board and then load the full version again. After this precise sequence the Altera Monitor would successfully find the processor and load our code.

Monitor Dependent Timing

Another problem we noticed throughout the project was that some VGA monitors displayed our UI and automaton better than others. More specifically, we noticed shifting of the image (not to be confused with jitter or tearing) on some monitors but not on others. Using the auto-set feature or repositioning the image manually sometimes resolved the problem, but often it did not. For example, on some monitors the vertical borders were displayed so far apart that both could not fit on the screen at the same time. This problem could be resolved by tweaking the addressing to tune the system for a particular monitor, but we never found a solution that worked equally well on all monitors.

Results

Our final design successfully displayed Conway's Game of Life on a VGA screen. It allowed the user to add, draw and remove objects on the paused screen, to add up to 3 scan bars which produced musical tones as they hit each line of cells, and to choose among 5 tone mappings to produce different types of music.

Speed of Execution & Accuracy

The automaton was displayed without glitching and the evolution rate could be increased past what was perceptible to the eye. Other than the intermittent glitching problems, which were not present in the final version of our design, we didn't discover any accuracy problems.

Safety

To our knowledge, our project doesn't pose any safety concerns. The DE2 boards and accessory hardware were always handled with care and kept on the ESD mats.

Usability

Our keyboard interface made interacting with and controlling both the automaton and the music generation simple and intuitive.

Interference

Our design did not make use of any external transmitters or other devices that produced substantial radiation. We are not aware of any interference issues created by our design.

Sample Output

The videos below demonstrate some of our project's features:

Our design was capable of filling the screen with random cells when the user pressed the "r" key (while paused):

Once populated with cells, up to 3 scan bars could be added to the screen, each producing independent notes:

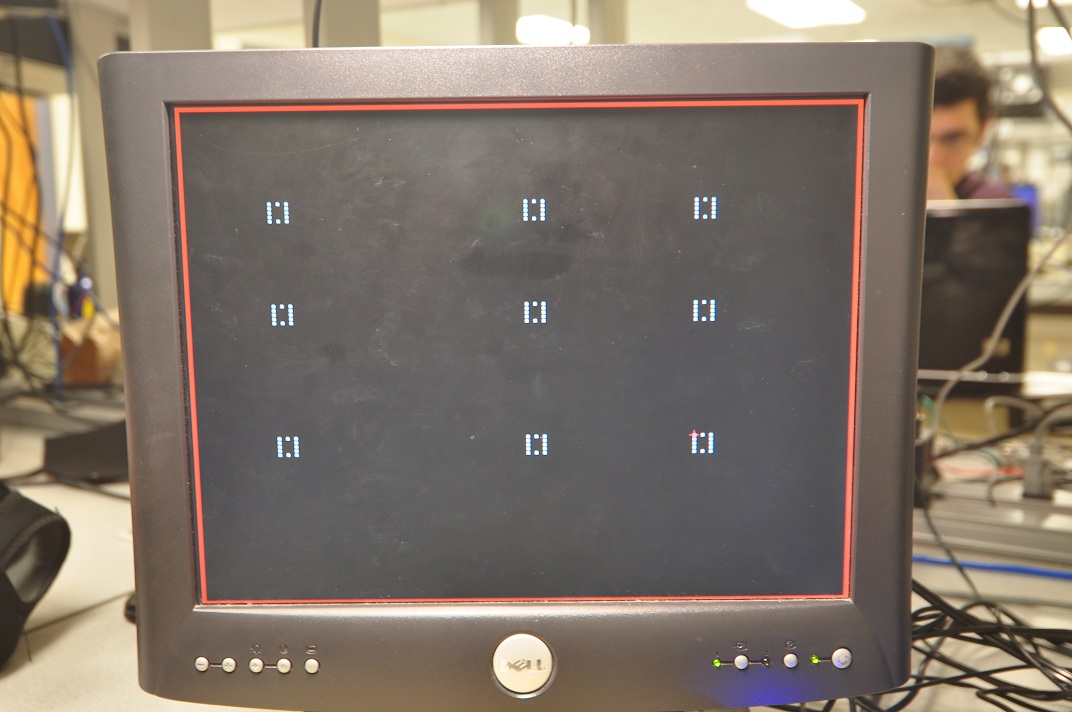

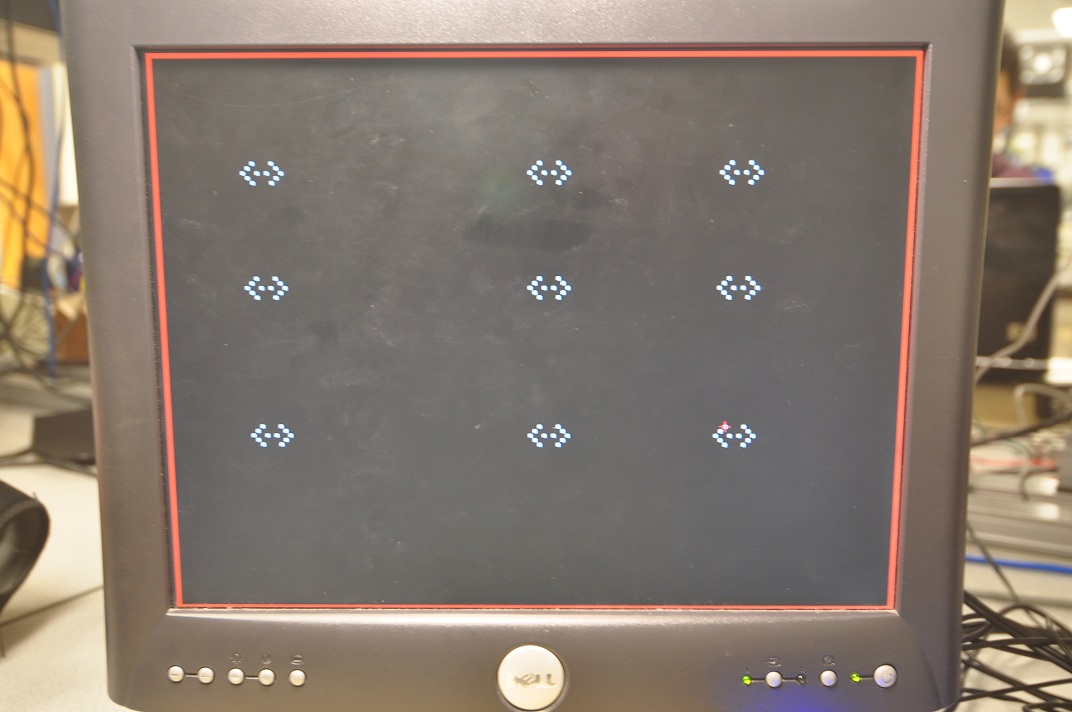

The user is able to add a variety of objects to the screen (while paused). An example of 2 glider guns is shown below:

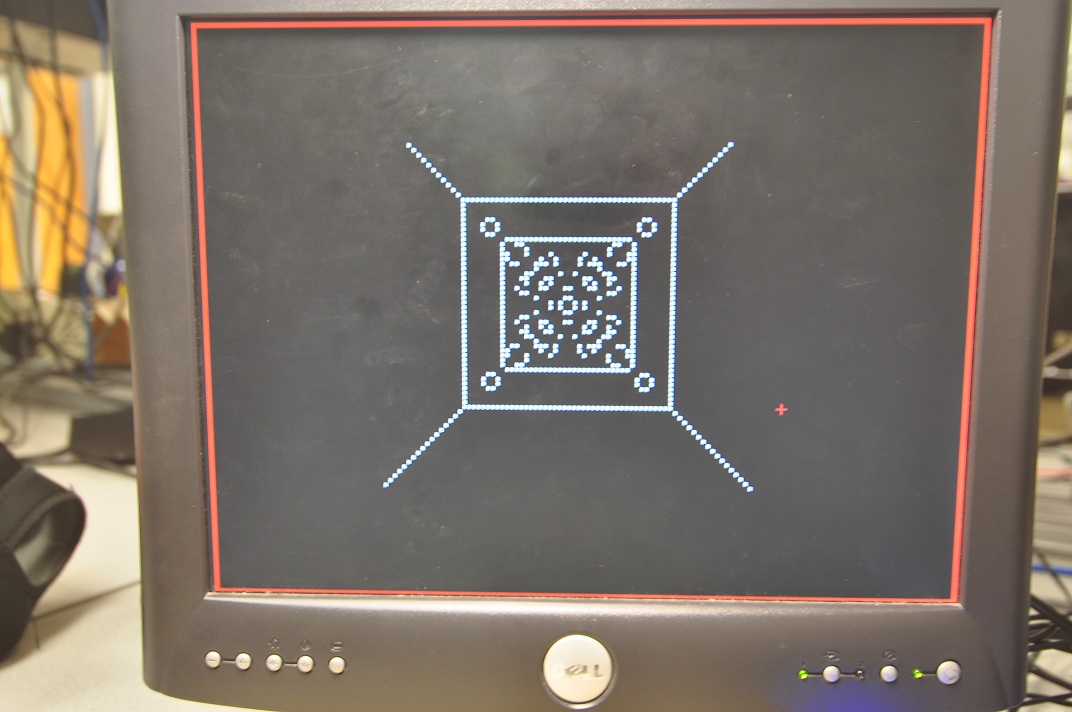

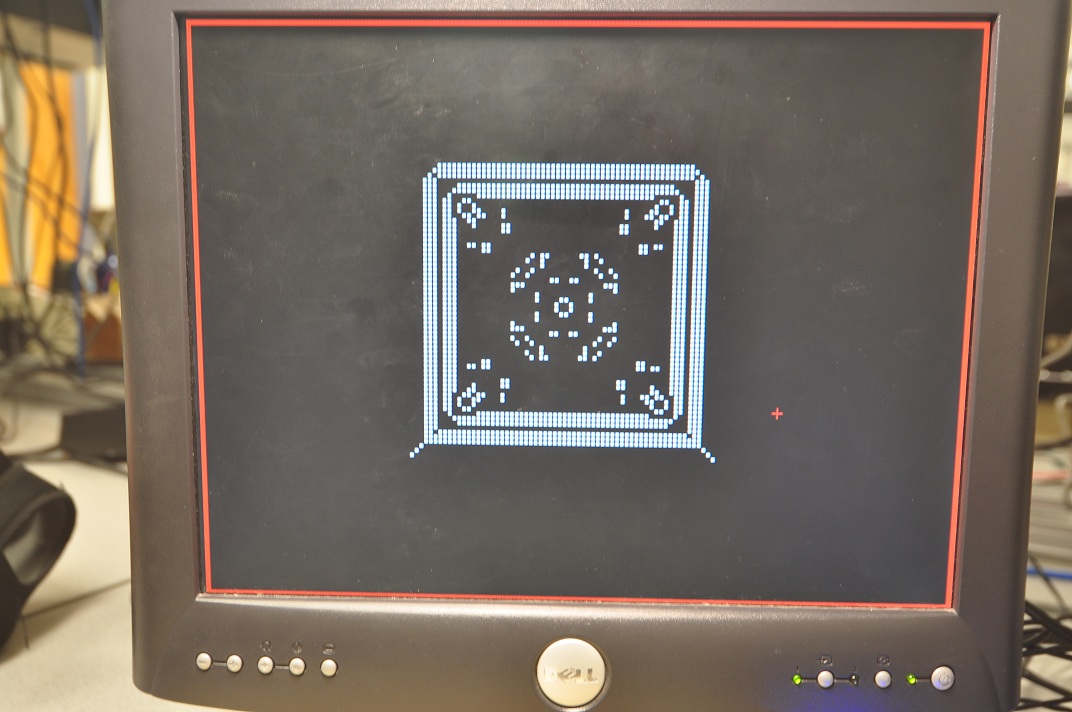

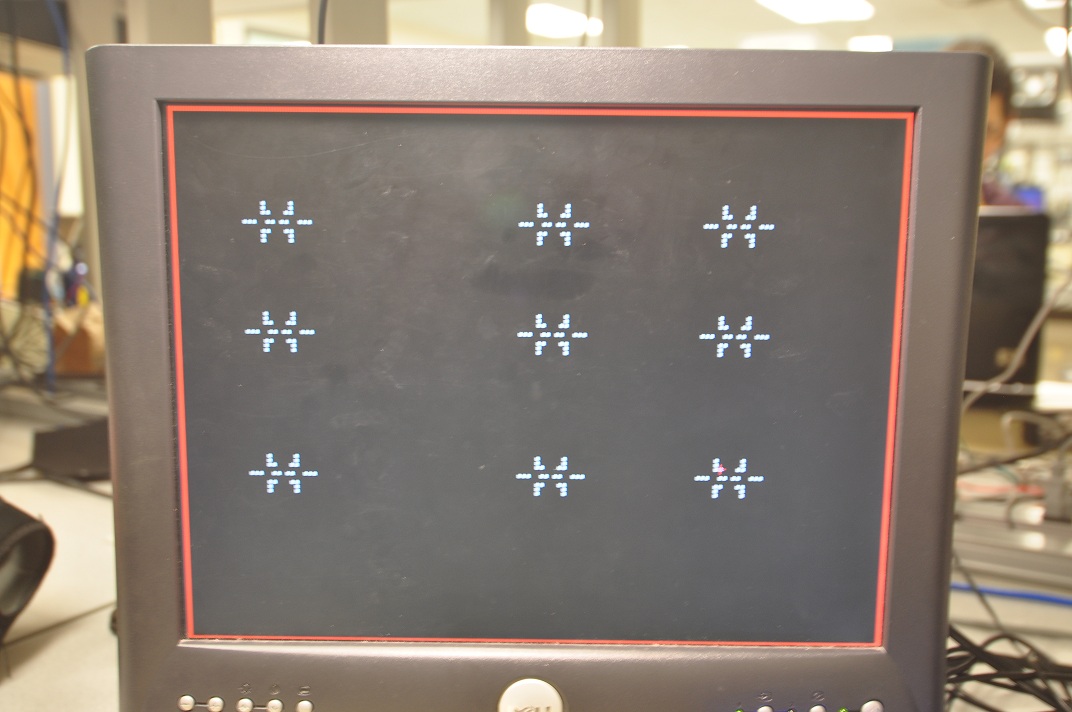

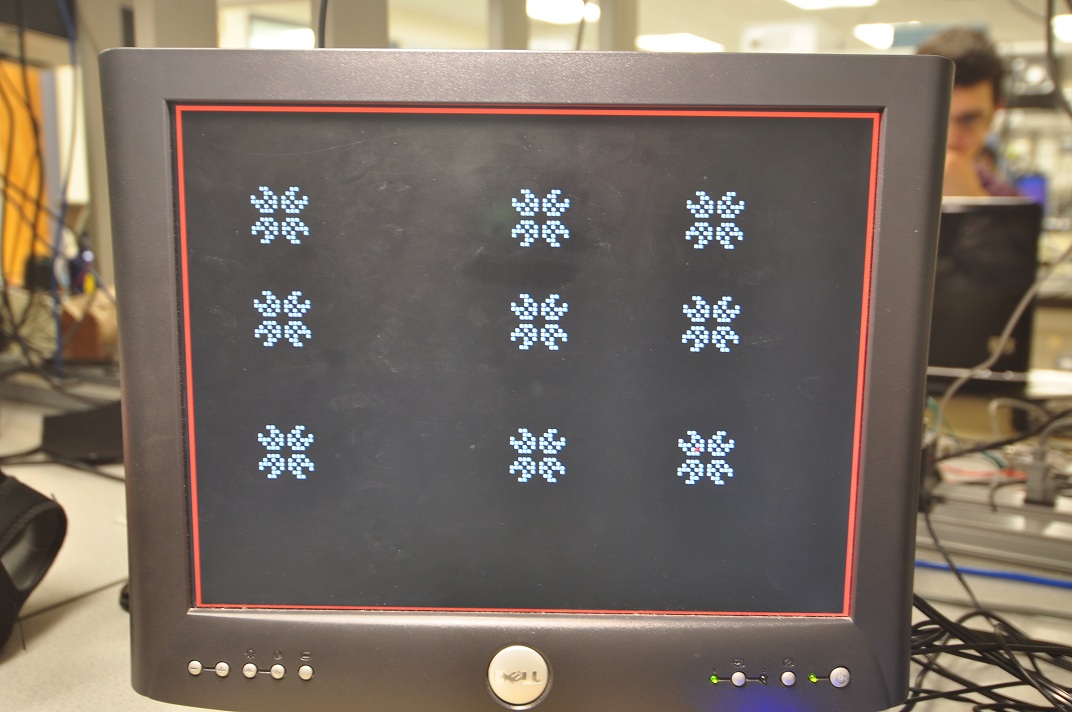

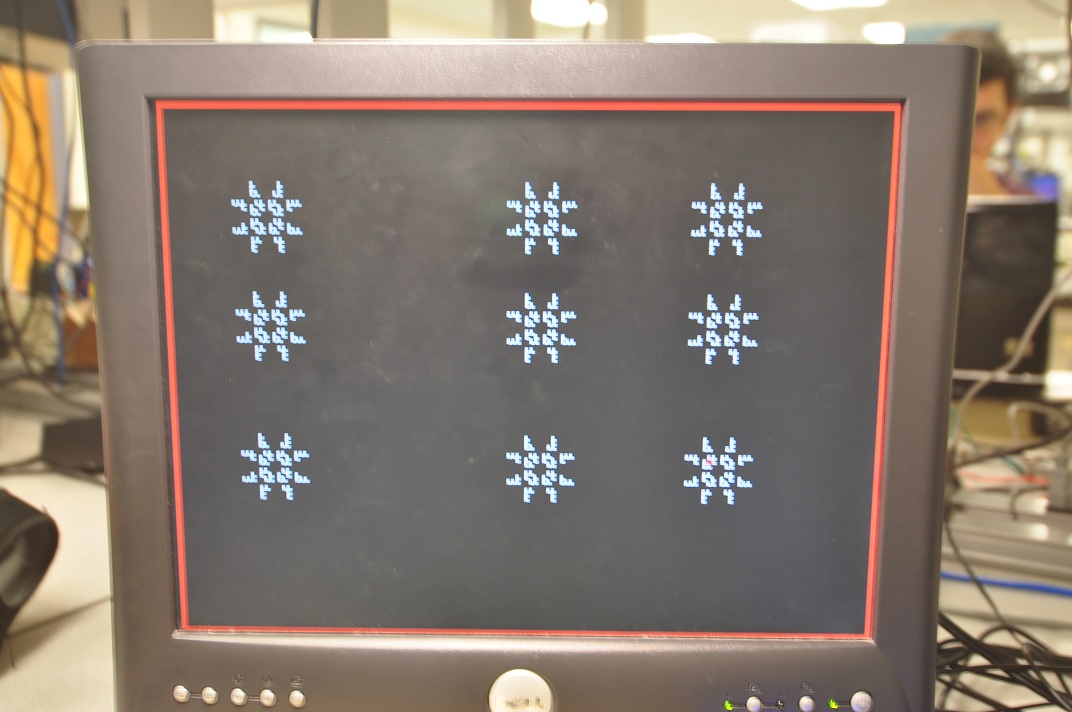

One particularly interesting pattern was formed by dropping an "x" onto the screen (by hitting the "x" key). A few steps of its evolution are shown below:

The ECE 5760 pattern and its interesting evolution:

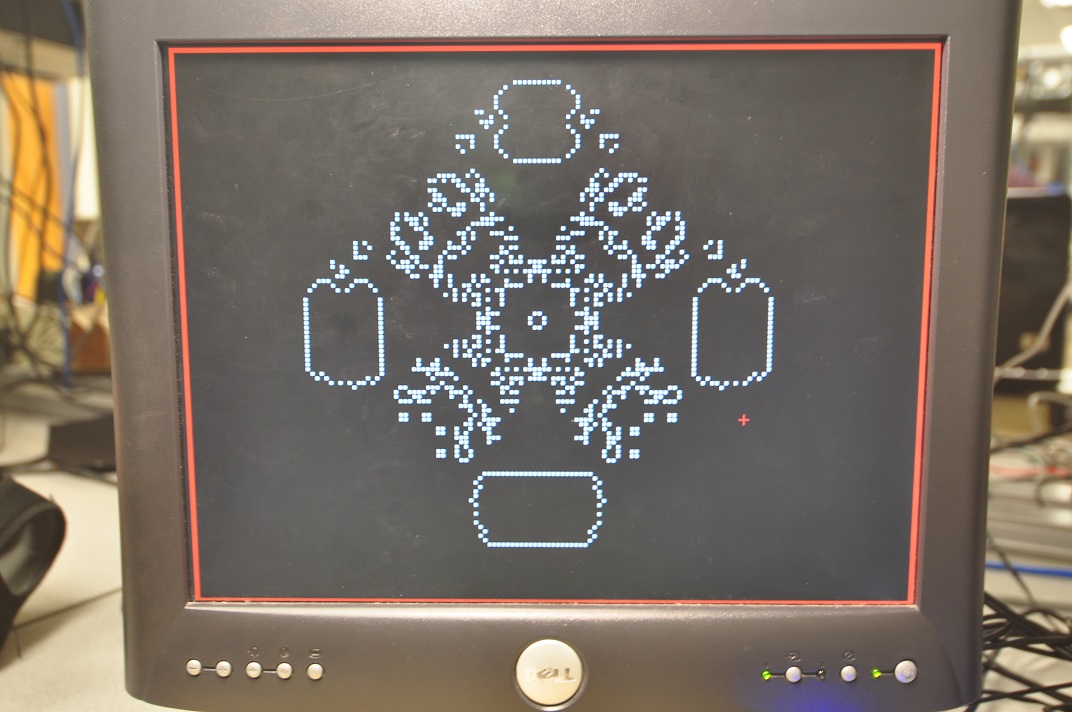

A set of "exploders" and their evolution:

A sample FM tone produced by our DDS module and its FFT are shown below:

Conclusions

Our final design met most of our initial expectations for the project. We implemented Conway's Game of Life and gave the user a few options for producing "music" from it. In addition, we had enough time to add features that make the Game of Life itself more fun to play with (e.g. the ability to drop in predefined objects or to speed up the evolution to see the steady state of any given pattern). The design didn't have any major bugs that we were aware of, was generally easy to control, and produced a wide range of "music" depending on the mapping and initial conditions.

Given more time, we likely would have investigated more mapping schemes and/or tone generation methods. More specifically, we think it would have been very interesting to produce

Intellectual Property Considerations

In our final design we used a NIOS microprocessor, which was generated using Altera's SOPC Builder system along with the Altera Monitor. Additionally, we made use of the MegaWizard functionality built into Altera's Quartus software package for instantiating our main video memory and PLLs (used for generating clock signals to the audio codec and VGA controller). Finally, we used the VGA controller and keyboard controller modules developed by Skyler Schneider, a previous 5760 student.

Acknowledgements

We'd like to thank Bruce for helping us to develop this idea and turn it into an interesting 5760 project, and both Bruce and Darbin for assisting us with debugging (in particular with our video jitter problems). In addition, we'd like to thank Altera for providing the DE2 boards.

Appendix

Division of Work:

| Task | Person Responsible |
| --- | --- |
| Game of Life State Machine | Luke |
| NIOS/Software | Luke |
| User Interface Modules | Luke |
| Tone Mapping Module | Luke & Weiqing |
| DDS Module | Weiqing |
| Website Design | Luke |

Code Listing:

Our code is available as a zip file here.

References