Motivation

For the final project, we wanted to combine the concepts learned in the previous labs and also try something new. We chose to use a camera so that we could understand how video signals can be processed on an FPGA. We also reused the drum synthesis module that we designed in the previous lab and generated piano sound synthesis in a similar way. The challenges of integrating so many modules and of detecting skin precisely interested us and motivated us to take on this project.

Abstract

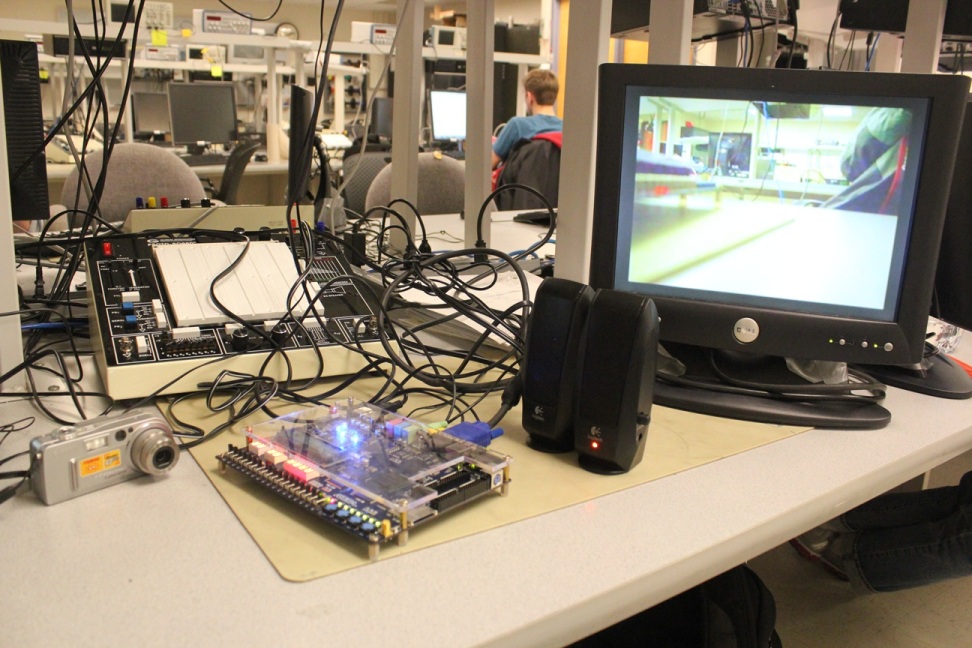

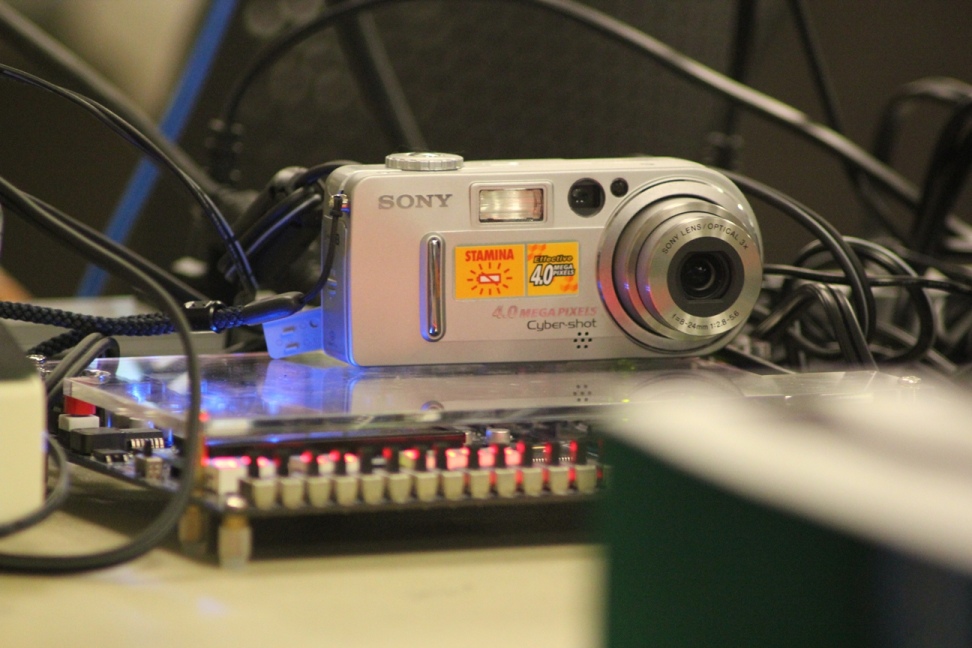

In this project, we built a system that uses a camera to detect the position of fingers on a sheet of paper and plays a sound depending on that position. The camera was used to detect skin and thereby track the movement of the fingers on the paper. The output of the camera could be seen on the VGA screen, along with the keyboard for the piano and the drum. Depending on where the finger hit, the appropriate sound was played using sound synthesis for the piano or the drum. Integrating all of these gave us an "Air Piano and Drum".

Design Overview

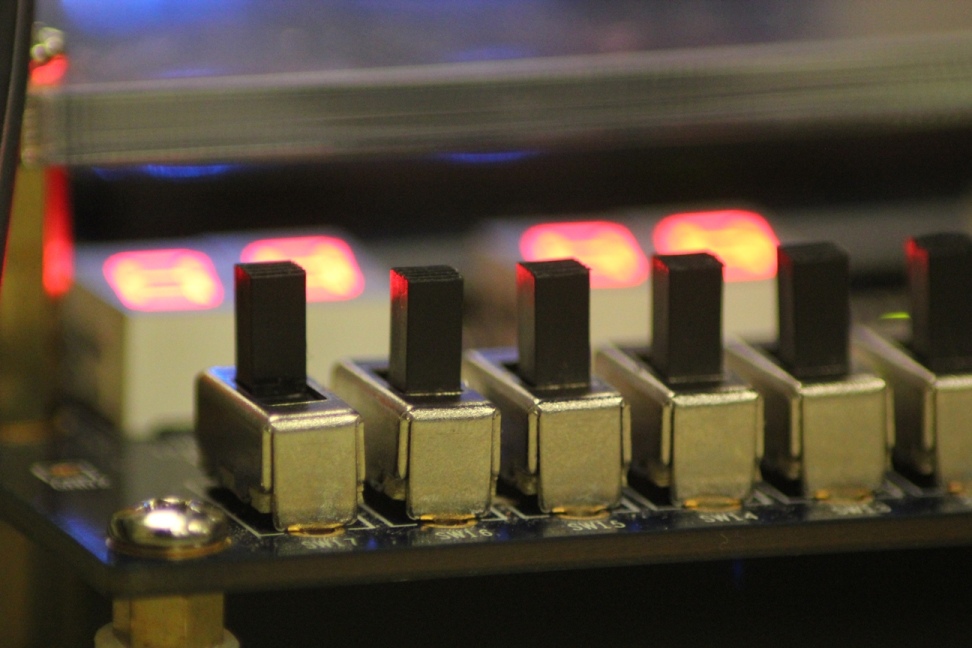

Our design consisted mainly of three modules. The first is the main module, which reads from the camera and detects the hand. We used colour averaging in order to detect the skin accurately. This module also draws the keys on the VGA screen so that we can see which keys are pressed. The second module generates piano sound using the Karplus-Strong algorithm. The third module generates drum sound using the Leap-Frog algorithm and wave equations. Since we wanted both instruments to be played at the same time, we used two DE2 boards, each running the code for one of the two instruments. We therefore used two cameras to detect the hand movements of two different people and play the sound accordingly, one for the piano and the other for the drum.
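To give a feel for the piano module, here is a minimal software sketch of the basic Karplus-Strong idea in Python. It is not the hardware implementation used on the DE2 board. The function name `karplus_strong`, the 48 kHz sample rate, the damping factor of 0.996 and the noise seed are all assumptions chosen for illustration. A delay line one pitch period long is filled with noise (the "pluck"), and each output sample is fed back through a two-point average, which acts as a low-pass filter and makes the tone decay like a struck or plucked string.

```python
import random


def karplus_strong(frequency_hz, sample_rate=48000, duration_s=0.5,
                   damping=0.996, seed=0):
    """Return a list of samples in [-1, 1] for one decaying string tone.

    All parameter defaults are illustrative assumptions, not values
    taken from the project.
    """
    rng = random.Random(seed)
    # Delay-line length sets the pitch: one period of the target frequency.
    period = max(2, int(round(sample_rate / frequency_hz)))
    # Fill the delay line with white noise to model the initial excitation.
    delay = [rng.uniform(-1.0, 1.0) for _ in range(period)]

    n_samples = int(sample_rate * duration_s)
    out = []
    idx = 0
    for _ in range(n_samples):
        current = delay[idx]
        following = delay[(idx + 1) % period]
        out.append(current)
        # Feedback: damped average of two adjacent samples (low-pass filter).
        delay[idx] = damping * 0.5 * (current + following)
        idx = (idx + 1) % period
    return out


if __name__ == "__main__":
    samples = karplus_strong(440.0)  # roughly the A above middle C
    print(len(samples), max(abs(s) for s in samples))
```

A lower `damping` value makes the note die away faster, and a longer delay line (a lower `frequency_hz`) gives a lower pitch, so in this sketch each key would simply map to a different delay-line length.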

For more details on the design, please click on the 'Design' tab above.

| Project by Sandeep Gangundi, Xiyu Wang and Marc Vaz | Webpage designed by Sandeep Gangundi |