"GPS Navigation based on sound localization."

Project Sound Bite

Sound Navigation is a GPS navigation system that uses the ear's inherent ability to localize sound to guide a user to their destination. It combines the user's current GPS coordinates, the destination's GPS coordinates, and the user's head orientation to produce sound through two-channel stereo headphones, and that sound is perceived as coming from the direction of the destination. The device is a step toward a hands-free, walking-friendly navigation solution that lets users keep their attention on their surroundings. Because sound localization is intuitive, the user spends less time and attention processing instructions. And because no spoken directions are involved, the system has no language barrier.

High Level Design

Rationale and Inspiration

This semester, ECE 4760 revamped its course hardware by upgrading from Atmel's 8-bit ATmega1284 microcontroller to Microchip's 32-bit PIC32 microcontroller. As a group, we were drawn to the idea of implementing our own 'upgraded' version of a previous ECE 4760 project that we found interesting. Our project therefore draws inspiration from, and builds upon, a past project: the Auditory Navigator.

Background

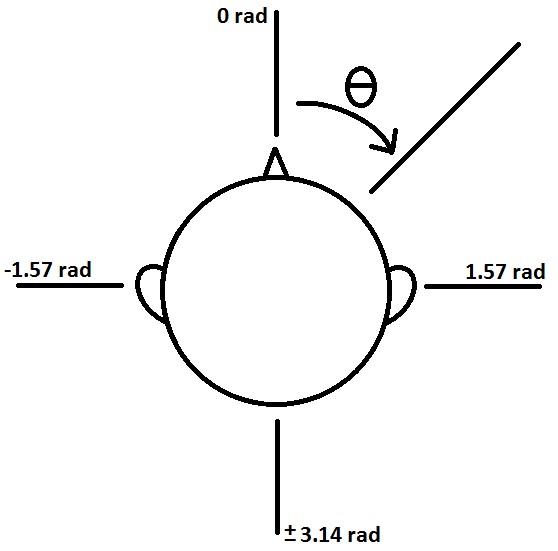

To generate sound that the ears can localize, we built interaural time differences and interaural level differences (ITD and ILD) into the two digitally synthesized waveforms. These are, respectively, a phase delay and an amplitude attenuation related to the interaural distance. In essence, to synthesize a sound coming from the user's side, we attenuate and delay the channel on the opposite side relative to the nearer channel. We very roughly model the user's head as a perfect sphere with the ears as two diametrically opposite holes. Under this model, we used the angle θ between two vectors to calculate the appropriate amount of each interaural difference: (1) from the user's position (idealized as the center of their body) to the destination's coordinates, and (2) from the center of the user toward their front. The ITD was modeled by the equation

with C = 300 m/s used as an approximation of the speed of sound (about 343 m/s in air at 20 °C). The ILD is modeled by the transfer function

where

and

The intensity at the farther ear would be the magnitude of the transfer function:

However, our final implementation of the intensity difference between the two ears is a simpler angle-based attenuation. The farther ear is scaled by $\cos\theta$, where θ is the angle between the two vectors defined above. In addition, we added a frequency shift between sound coming from in front and sound coming from behind. Our simple model does not account for the structure of the outer ear, so without this shift it could not distinguish between the two directions.

Hardware/Software Rationale

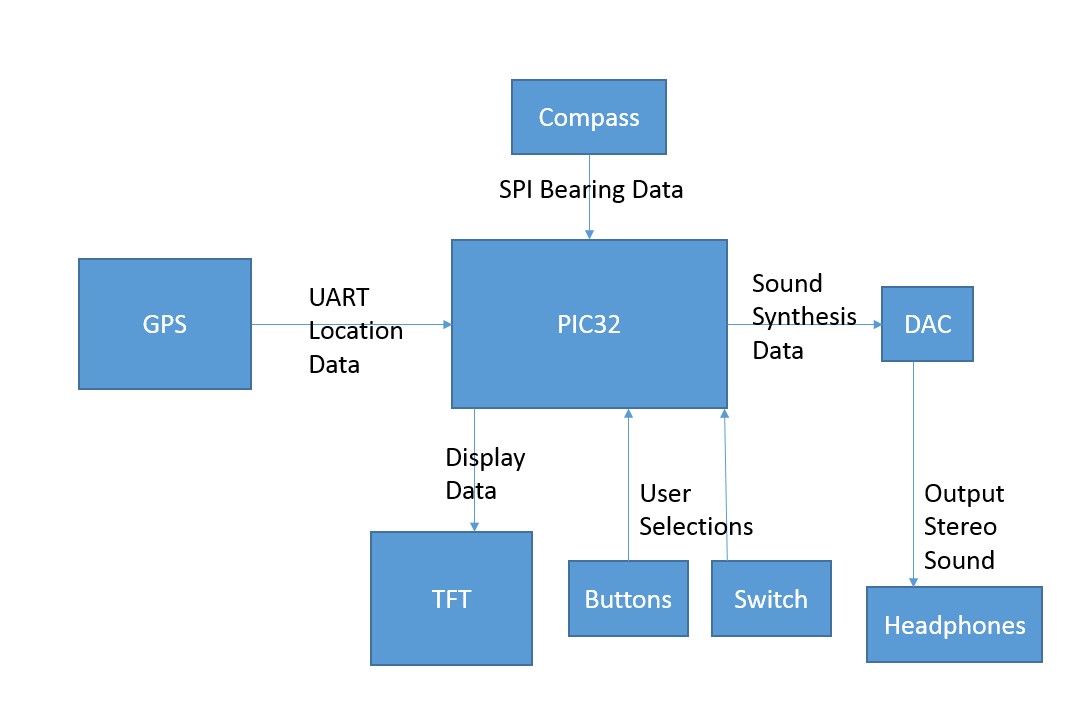

The final device has five main components:

- a TFT LCD display for selecting among pre-programmed destinations;
- a digital compass for determining the user's head orientation;
- a GPS receiver for obtaining the user's location;
- a two-channel DAC for producing stereo sound through the user's choice of headphones;
- a PIC32 microcontroller running a modified version of Adam Dunkels' Protothreads to tie everything together.

To use the device, one simply powers it on, waits for a GPS fix, and selects a destination. The user then puts on the device after making sure each headphone channel is in the correct ear.

The user's bearing could have been calculated in software from the user's past locations. However, we added a hardware digital compass for a more accurate bearing. This also made the device more user friendly: a simple turn of the head changes the direction of the sound. Without the compass, the user would have to walk back and forth until a bearing could be calculated. We also used an off-the-shelf GPS receiver so that we could focus on sound-localization navigation rather than building our own GPS. Building a GPS receiver would have been enough work for a final project on its own.

Logical Structure

Headphones are first plugged into the headphone jack. The user then uses the buttons to select a destination from the list on the TFT LCD display and turns on the switch to start navigation. The GPS, PIC32, compass, and DAC are packaged together and run in the background.

Standards

The only standard considered in our design was NMEA 0183, the format the GPS receiver uses to send its data over UART. Our final implementation uses only the latitude and longitude from the GGA sentence.

Existing Patents and Papers

A number of psychoacoustic related patents and papers exist on more advanced implementations of localized sound production through two-channel audio. A simple search will turn up many.

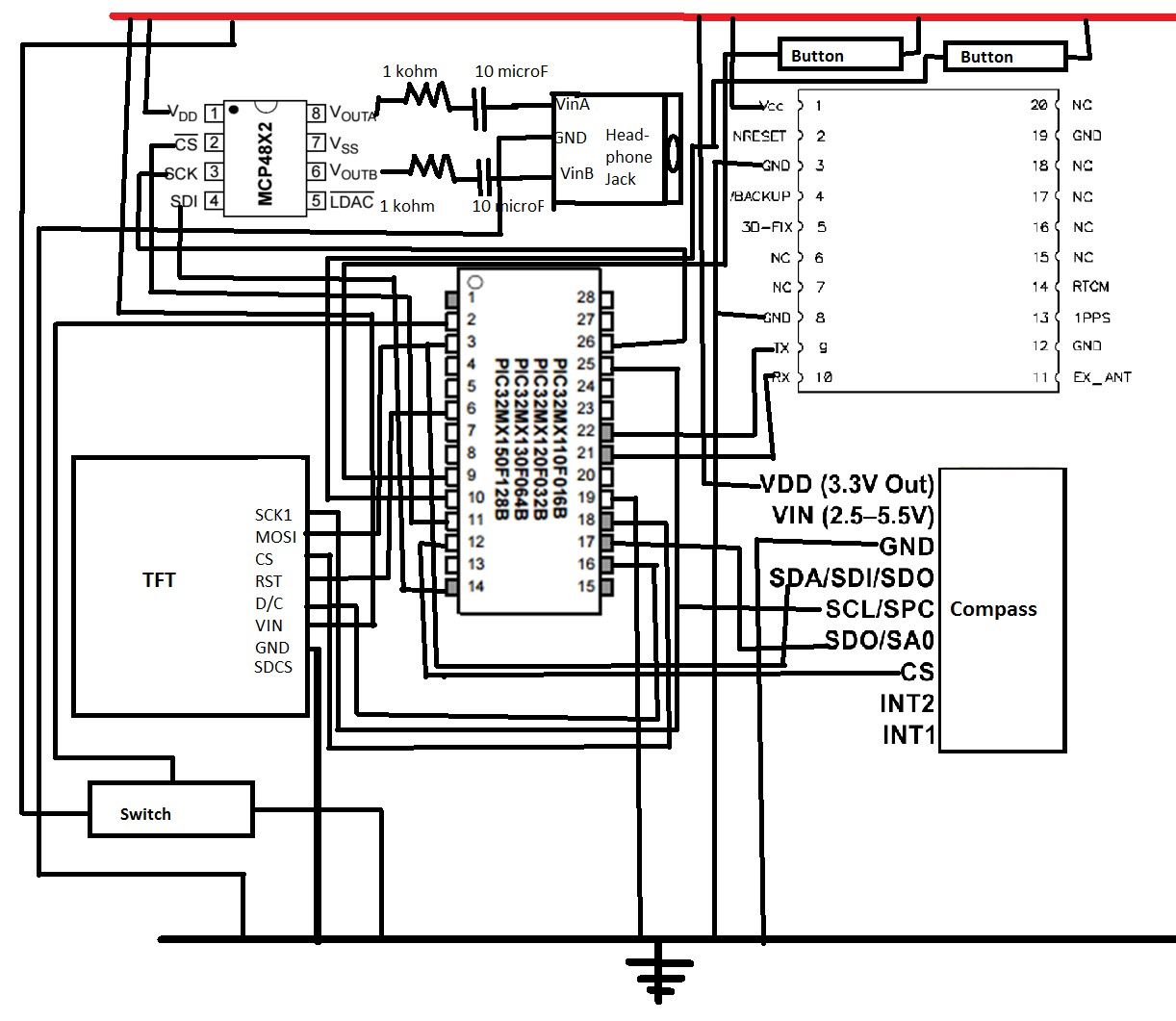

Program/Hardware Design

The project hardware consisted of an Adafruit Ultimate GPS breakout board, a digital compass, a PIC32, a DAC, an Adafruit TFT LCD display, buttons, and a switch. We resolved pin conflicts between these peripherals and configured the resulting pin assignments on the PIC32.

GPS

The program uses several concurrent threads to coordinate the inputs and outputs of the hardware. The serial thread receives the UART data from the GPS module. When the GPS is powered on and has a signal, it continually sends data over UART as sentences in specific formats. After reading the UART, the serial thread parses the data. It copies the entire received string (presumably containing several GPS sentences) and looks for a $ character followed five characters later by an A. This is taken to denote the $GPGGA format, which contains the latitude and longitude of the GPS device. Three variables track this step:

- start marks where the $GPGGA sentence begins in the UART buffer;
- the string a is a copy of that buffer;
- flag indicates that a $GPGGA sentence has been found.

Early on, we encountered a UART problem that prevented the PIC32 from receiving more than one sentence from the GPS. It turned out that the UART registered a framing error after every message from the GPS receiver, and this error prevented it from receiving further sentences. Our solution was to clear all UART errors before each receive.

The parsing continues in the lcd protothread, which also outputs all displayed data to the TFT. If flag has been set to 1, the thread goes to position start in a and takes two 9-character substrings at offsets 18 and 30 from start. In the $GPGGA format, these are where the latitude and longitude are, respectively. Next, the TFT thread displays the array name, which holds the list of locations. The variable selected indicates which item on the list is selected, and that item is highlighted on the TFT.
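The fixed-offset extraction described above can be sketched in C as follows, including the comma check described under Challenges. This is a hypothetical reconstruction, not the project's code. It rests on these assumptions:

- The receiver reports time as hhmmss.sss, so latitude begins 18 characters and longitude 30 characters after the $ sign.
- It matches the full $GPGGA tag, because checking only for an A five characters after the $ would also accept $GPGSA sentences.
- A 9-character window captures dddmm.mmm for longitude, dropping the last digit of the minutes.

```c
#include <stdlib.h>
#include <string.h>

#define LAT_OFFSET 18 /* characters from '$' to the latitude field */
#define LON_OFFSET 30 /* characters from '$' to the longitude field */
#define FIELD_LEN 9   /* characters copied per field */

/* Index of the first "$GPGGA" sentence in buf, or -1 if none. */
int find_gga(const char *buf) {
    const char *p = strstr(buf, "$GPGGA");
    return p ? (int)(p - buf) : -1;
}

/* Copies FIELD_LEN characters starting offset characters after start and
   converts them with atof(). Returns 0 (leaving *out unchanged) if the
   buffer is too short or the copy contains a comma, i.e. there is no fix. */
int extract_fixed(const char *buf, int start, int offset, double *out) {
    char field[FIELD_LEN + 1];
    if (strlen(buf + start) < (size_t)(offset + FIELD_LEN))
        return 0;
    memcpy(field, buf + start + offset, FIELD_LEN);
    field[FIELD_LEN] = '\0';
    if (strchr(field, ',') != NULL)
        return 0;
    *out = atof(field);
    return 1;
}
```

Leaving the previous value untouched on failure mirrors the behavior described under Challenges, where the position keeps its last value while the GPS has no signal.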

After converting the latitude and longitude strings from the GPS to doubles, the GPS directions thread calculates the variable difference. This is the direction one would have to walk to get from the current position to the selected location. The thread subtracts it from the user's bearing to set the variable direction. That value is both the angle the user must turn to head toward the selected location and the angle the sound should appear to come from. Depending on this angle, the TFT displays “left”, “right”, “forward”, or “backwards”.

Sound Synthesis

The first step in sound synthesis is generating a sine wave. The sine wave generation is taken from Lab 2, the DTMF generator: an interrupt service routine (ISR) steps through a sine table at a set rate. When the user is facing away from the destination, the rate is changed, producing the higher pitch that signals "behind". The interaural delay is implemented by starting one sine wave later than the other. Since both sine waves start from zero, the phase relationship is preserved, and a counter keeps track of the time delay. Separate cases handle sound coming from the right and from the left: a negative angle means the sound should come from the left side, and a positive angle from the right side.

An angle-based attenuation is also implemented for each ear. The farther ear is attenuated by cos(θ), while the closer ear is kept at full level. The attenuation is applied by multiplying the DAC data by the attenuation factor. The DAC data must lie within the 12-bit range of 0 to 4095, so the sine wave is centered at 2047.

The sound is then sent to channels A and B of the DAC: channel A carries the left-ear data and channel B the right-ear data. CS must be low before data can be written to the DAC, and raising CS ends the DAC transaction. Therefore, CS must be raised and then lowered again (set and cleared) between transmitting the channel A data and the channel B data.

To drive headphones from the DAC, a 10 µF capacitor and a 1 kΩ resistor are connected in series between the DAC output and the headphone jack; this prevents the headphones from clipping. The digital and analog signals had to be routed physically far apart to keep digital noise out of the audio. The left and right inputs of the headphone jack must not be shorted together; otherwise, both ears would receive the same signal.
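The series capacitor blocks the DC offset of the DAC output, since the waveform is centered at code 2047 rather than at 0 V. Together with the series resistance, it forms a high-pass filter. As a rough worked check, assume a typical earbud impedance of R_hp = 32 Ω (an assumption, not a measured value), with R = 1 kΩ and C_s = 10 µF:

$$f_c = \frac{1}{2\pi\,(R + R_{hp})\,C_s} = \frac{1}{2\pi\,(1000\,\Omega + 32\,\Omega)(10\,\mu\mathrm{F})} \approx 15.4\ \mathrm{Hz}$$

$$\frac{V_{hp}}{V_{DAC}} \approx \frac{R_{hp}}{R + R_{hp}} = \frac{32\,\Omega}{1032\,\Omega} \approx 0.031$$

Under these assumptions, audible tones sit well above the high-pass corner. Meanwhile, the 1 kΩ resistor divides the signal down to roughly 3% of the DAC swing at the earbuds, which limits how hard they are driven.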

The buttons protothread contains the user controls, two buttons and a switch. Both buttons are debounced and pressing them increments and decrements the selected variable, moving the highlighted location up and down the list. The switch is connected to a PIC input that stores its status as a variable and turns the sound on and off based on that variable’s value.
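Button debouncing on a polled input is commonly done with a small state machine that requires two consecutive matching readings before accepting a change. The C sketch below shows that approach, plus a hypothetical helper that moves selected within the list. The document does not say whether the selection clamps or wraps at the ends of the list, so clamping here is an assumption. All names are hypothetical.

```c
#include <stdbool.h>

typedef enum { NO_PUSH, MAYBE_PUSH, PUSHED, MAYBE_NO_PUSH } debounce_state;

typedef struct {
    debounce_state state;
} debouncer;

/* Call once per thread period with the raw pin level (true = pressed).
   Returns true exactly once per debounced press. */
bool debounce_update(debouncer *d, bool raw_pressed) {
    switch (d->state) {
    case NO_PUSH:
        if (raw_pressed)
            d->state = MAYBE_PUSH;
        return false;
    case MAYBE_PUSH:
        if (raw_pressed) {
            d->state = PUSHED; /* second consecutive reading: accept press */
            return true;
        }
        d->state = NO_PUSH; /* single-sample glitch: ignore */
        return false;
    case PUSHED:
        if (!raw_pressed)
            d->state = MAYBE_NO_PUSH;
        return false;
    case MAYBE_NO_PUSH:
        d->state = raw_pressed ? PUSHED : NO_PUSH;
        return false;
    }
    return false;
}

/* Moves the highlighted entry by delta, clamped to the list bounds. */
int move_selection(int selected, int delta, int num_locations) {
    int next = selected + delta;
    if (next < 0)
        next = 0;
    if (next >= num_locations)
        next = num_locations - 1;
    return next;
}
```

In a buttons thread, each returned press would call move_selection with +1 or −1. The switch, by contrast, is simply read as a level that turns the sound on or off.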

Challenges

Much of the coding difficulty lay in parsing the GPS data. At first, we used largely the same strategy we had used in previous labs for reading a UART serial transmission. We had to continually read new data whenever it was available, and we also had to parse the stream by identifying which “sentence” the GPS was sending. In addition, we had to detect when the GPS had no signal. In that case, the sentence begins the same way but contains only the separating commas, with no data between them.

To extract floating-point values from a string containing both numbers and letters, we first took the characters at the positions where the latitude and longitude would be if the GPS had a signal. If those characters contained a comma, the GPS had no signal, so the position was not recalculated and kept its previous value. Otherwise, we saved substrings of the two numbers and converted them to floating-point values with the atof function; these became the new position data.

Initially, we calculated the bearing by keeping a running list of the last six GPS positions and taking the difference between the current position and the position five readings earlier. This gave the direction the user was walking. However, this wasn't an optimal solution. The device didn't work accurately if the user wasn't moving, and it didn't account for the user facing a different direction than he was walking. The device also took a long time to update the sound, since it had to wait for five new position readings. As a result, the sound remained inaccurate for a noticeable amount of time.

Compass

To get an accurate bearing (heading) for the user's head, we integrated a digital compass that communicates over SPI. Getting it to communicate over SPI proved to be a headache. Remember to always read from the SPI receive buffer after writing to the transmit buffer, even when there is nothing to read.

We also had to consider how data is read from the compass. Devices like ours split each reading into a low byte and a high byte stored in two separate registers, so we had to be careful about how we concatenated the data read from those registers. Once we were correctly reading the X and Y components, getting the heading was fairly straightforward. The accuracy was satisfactory for our purposes, so we did not implement tilt compensation or additional calibration. Both are quite doable if necessary, and many guides on them are available. The compass shares the SPI bus with the TFT LCD display.

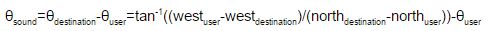

Math

Calculating the direction of the sound requires three pieces of information: the user's latitude and longitude from the GPS, the destination's latitude and longitude from the lookup array, and the user's bearing from the compass. The direction of the sound is the difference between the user's bearing and the angle at which the user has to travel to reach the destination.

In calculating that angle, the west longitude coordinates are flipped. In the western hemisphere, longitude increases toward the west, which is to the left when facing north. This mirrors the coordinate system about the north axis.

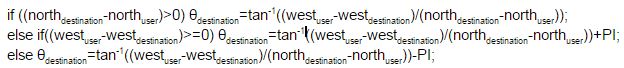

The arctangent used in this calculation must be a full-circle arctangent: one that takes the signs of both the numerator and the denominator into account to determine which quadrant the resulting angle lies in. We constructed this function from a regular half-circle arctangent plus a set of if-statements that correct the quadrant.

One common error during coding involved the domains of the angles. At some points, we had to convert arctangents ranging from −π/2 to π/2 into a full-circle range, using the additional information of whether the first element was positive. At other points, we had to convert from a 0 to 2π range to a −π to π range.

The biggest error was in the sound synthesis, which expects angles between −π and π and does not work properly outside that range. The variable direction was the difference between bearing and difference, two numbers that each range from −π to π. Direction could therefore range from −2π to 2π, outside the range the sound thread expects. Once we noticed this mistake, the fix was simple: adding or subtracting 2π in the sound protothread keeps the input within −π to π. With this wrap-around in place, the sound worked properly.
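The 2π correction in the sound protothread amounts to a few lines of C. This sketch (the function name is hypothetical) assumes, as the text states, that bearing and difference each lie in [−π, π]. Under that assumption, a single addition or subtraction of 2π is enough.

```c
#include <math.h>

#define PI 3.14159265358979323846

/* direction = bearing - difference lies in [-2pi, 2pi] when both inputs
   are in [-pi, pi]; one 2pi correction brings it back into [-pi, pi]. */
double wrap_direction(double bearing, double difference) {
    double direction = bearing - difference;
    if (direction > PI)
        direction -= 2.0 * PI;
    else if (direction < -PI)
        direction += 2.0 * PI;
    return direction;
}
```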

Results

We tested the resolution of our device by measuring how far a subject had to turn their head before there was an audible change in where the sound was coming from. The test subject put on the device, and the person conducting the experiment held a ruler under the subject's nose. The subject turned his head to each side until he could hear a difference in the tone, and that point on the ruler was recorded. The results for three subjects, each starting at the 5-inch mark, are shown below:

| Left measurement (in) | Right measurement (in) | Nose-to-neck measurement (in) | Angle (rad) | Angle (°) |
|---|---|---|---|---|
| 2.5 | 7.5 | 6 | 0.3948 | 22.62 |
| 3.5 | 7.25 | 6 | 0.3588 | 20.556 |
| 3.75 | 6.25 | 6 | 0.2054 | 11.768 |

Table 1: Resolution Results
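The angles in Table 1 are consistent with treating two quantities as the legs of a right triangle: the ruler displacement d from the 5-inch starting mark, and the nose-to-neck distance h = 6 in. For the first subject, d = 7.5 − 5 = 2.5 in:

$$\theta = \arctan\frac{d}{h} = \arctan\frac{2.5\ \mathrm{in}}{6\ \mathrm{in}} \approx 0.3948\ \mathrm{rad} \approx 22.62^\circ$$

For the second subject, the tabulated 0.3588 rad matches the right-side displacement, d = 7.25 − 5 = 2.25 in, since arctan(2.25/6) = arctan(0.375) ≈ 0.3588 rad.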

The device demonstrates a rough resolution of about 12 to 23 degrees, depending on the user.

More precise resolution measurements could be obtained with software that shifts the sound a few degrees to the left or right and checks whether the user can tell the difference. The sound would be moved further to the side one degree at a time until the user can tell whether it is coming from the left or the right.
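The proposed finer-grained test is an ascending method-of-limits procedure. Below is a hypothetical C sketch, with the playback and the user's response abstracted behind a callback. The simulated listener is included only to exercise the procedure and does not model real hearing. A real test would also repeat each presentation and randomize the order of left and right.

```c
#include <stdbool.h>

/* Hypothetical callback: plays the tone offset by `degrees` from straight
   ahead (negative = left) and returns true if the listener answers "right". */
typedef bool (*listener_fn)(int degrees, void *ctx);

/* Ascending method of limits: widen the offset one degree at a time until
   the listener labels both the left and the right presentation correctly.
   Returns that offset in degrees, or -1 if none up to max_degrees. */
int find_resolution(listener_fn ask, void *ctx, int max_degrees) {
    for (int offset = 1; offset <= max_degrees; offset++) {
        bool right_correct = ask(offset, ctx);
        bool left_correct = !ask(-offset, ctx);
        if (right_correct && left_correct)
            return offset;
    }
    return -1;
}

/* Simulated listener: it can only identify the left side once the offset
   reaches *(int *)ctx degrees, and otherwise always answers "right". */
bool simulated_listener(int degrees, void *ctx) {
    int threshold = *(int *)ctx;
    return degrees > -threshold;
}
```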

The end product was tested on a few users who were not part of the design team; they were classmates and passersby. It successfully navigated two users to Barton Hall, one to Day Hall, and one to the Law Library. Each user put on the headphones or earbuds, and we selected a destination for them. They then put on the hat and were given only two instructions: follow the direction the sound is coming from, and know that a higher pitch means you are facing the wrong way. They were not told the destination. However, since the project does not implement a proximity check, we told them when they had reached it. All four set out from Phillips Hall and successfully navigated to the selected destination.

The user who walked to the Law School had to go through Hollister Hall, but the navigation system still worked. Once the GPS acquires a fix, it can keep the fix for some distance into a building. The time spent in Hollister Hall was short enough that the GPS did not lose its fix, and the user successfully navigated to the Law School.

The GPS is specified to report the current location to within 5 meters. During testing, when we checked the acquired coordinates on Google Maps, the accuracy appeared to be well within that bound. For example, during initial testing we stood right outside Duffield Hall and wrote down the coordinates the GPS reported. Plotted on Google Maps, they fell right in front of the doors we were standing next to.

Since the device navigates in a straight line from the user's current location to the destination, it cannot be used with eyes closed or by the visually impaired. Further functions, such as navigation along paths or obstacle detection, would need to be implemented before the product could be used without sight. Also, because the headphones or earbuds block surrounding sounds, the user must rely on sight to cross streets safely. Future iterations could be developed to remove these limitations.

Conclusions

Analysis of Design

Our device successfully directed users to the buildings we selected for them on the TFT screen. The test subjects weren't told where they were being navigated, yet they arrived at the selected destination based on the auditory cues alone. One subject noted how quickly the device responded as he turned his head back and forth, with the sound staying roughly fixed at the destination the whole time.

One way we could improve this project is the physical layout, which currently isn't optimal for the user. The buttons and switch sit below the screen and wiring and are difficult to access, while the sensitive wiring is on top of the hat and fully exposed. Since the device is meant exclusively for outdoor use, this lack of protection for the circuitry is a real problem; it certainly couldn't function on a rainy day. The electronics could be cleaned up and placed inside the hat to protect them from the elements.

Another area that needs further work is the navigation process. Currently, the device points you straight toward your destination and gives no indication of when you have arrived. It would be an improvement if the program told you that you had arrived once you were within a certain distance of your destination. That distance would likely depend on the destination, for example on the size of the building. A proximity check that compares the user's distance from the destination against such a threshold could implement this.

Intellectual Property Considerations

Certain parts of this project, specifically the TFT, the PIC, the GPS, and the compass, are patented products: the PIC from Microchip, the TFT and the GPS from Adafruit, and the compass from Pololu. The TFT and the PIC were borrowed from the Cornell lab, while we bought the compass and GPS ourselves from the vendors' online stores.

Much of this project was inspired by a previous project in this course that involved a sound-based direction system titled Auditory Navigator. Like ours, it used sound delay between two ears to guide a user towards a selected location, and received location and bearing information from a GPS device and a compass.

Some of the code was reused from previous labs. Our sound synthesis code drew heavily on the starter code from a lab in which we created a DTMF dialer. Our GPS UART receiver is derived from the UART receiver starter code from a lab in which we created a motor controller and tachometer. The entire code structure uses Protothreads, a framework that was provided to us and that we used for all our microcontroller labs.

Ethical Considerations

The chief ethical concern, as listed in the IEEE code of ethics, was user safety. The device directs users through sound, which requires headphones in the user's ears. It also directs them in a straight line toward buildings, regardless of what lies along the way. We foresaw that this could be an issue wherever it would be unsafe to walk in a straight line from point A to point B. Our testing area around the Cornell campus contains several busy streets, and users may need to cross streets at several locations. Before testing, we warned all subjects about these dangers and told them not to follow the sound in a potentially unsafe direction.

Another ethical concern is informing the user of the conditions under which the device functions. Because it relies on a GPS receiver, it doesn't work indoors. It is also not waterproof and won't respond well to wet weather, which may damage the device to the point of making it unusable. Users should also be aware that the device is harder to balance on the head than a normal hat. Failing to keep it upright may damage the exposed parts as well.

Legal Considerations

This device uses GPS signals and is subject to laws related to GPS usage. Under current US law, GPS signal data is available to all civil users worldwide free of charge.

Appendices

Appendix I. Source Code

The full code for our project can be found in SoundNavigation.zip. The two main files used in the final project are SoundNavigationFinal_main_commented.c and lsm303d.c.

Appendix II. Schematics

Appendix III. Cost Considerations

| Component | Quantity | Cost/Component | Total Cost | Source |
|---|---|---|---|---|
| Microstick | 1 | $10.00 | $10.00 | Lab rental |
| Solder board | 1 | $2.50 | $2.50 | Lab rental |
| Power Supply | 1 | $5.00 | $5.00 | Lab rental |
| PIC32 | 1 | $5.00 | $5.00 | Lab rental |
| TFT | 1 | $10.00 | $10.00 | Lab rental |
| Jumper Cables | 2 | $0.20 | $0.40 | Lab rental |
| GPS Breakout | 1 | $40.00 | $40.00 | Adafruit |
| Header Pins | 156 | $0.05 | $7.80 | Lab rental |
| Compass | 1 | $10.00 | $10.00 | Pololu |
| Headphones | 1 | $0.00 | $0.00 | Donated |
| Miscellaneous | | $0.00 | $0.00 | Lab |
| Final Project Cost | | | $90.70 | |

Table 2: Cost Considerations

Appendix IV. Team Member Tasks

Abdu - Compass, GPS, code integration

Sophia - Sound synthesis, perfboard/wiring, code integration

Vance - GPS, Buttons, LCD display

References

DataSheets

- TFT Data Sheet: https://www.adafruit.com/datasheets/ILI9340.pdf

- PIC32 Data Sheet: http://people.ece.cornell.edu/land/courses/ece4760/PIC32/Microchip_stuff/2xx_datasheet.pdf

- Compass Data Sheet: https://www.pololu.com/file/0J703/LSM303D.pdf

- GPS Data Sheet: https://www.adafruit.com/datasheets/GlobalTop-FGPMMOPA6H-Datasheet-V0A.pdf

Vendors

Example Code

- http://people.ece.cornell.edu/land/courses/ece4760/PIC32/index_Protothreads.html

- http://people.ece.cornell.edu/land/courses/ece4760/labs/f2015/PIC_to_DAC_MCP4822.c

- http://people.ece.cornell.edu/land/courses/ece4760/PIC32/index_UART.html

Reference Projects

Acknowledgements

We would like to thank Professor Bruce Land and all the Lab TAs for providing help and guidance.