
During the development of a perceptual system (such as the visual system), networks of neurons must learn to recognize features without any indication of the desired outcome. A feedforward network can learn to pull patterns out of a perceptual field by adjusting the weights of its synapses. One theory holds that this occurs through a Hebbian learning algorithm [1]. In such an algorithm, neurons that fire together develop stronger synapses, while those that fire separately weaken their synapses. Repeated presentation of a particular pattern (such as an oriented line) causes select groups of neurons to fire again and again, strengthening their synapses on particular post-synaptic neurons. These post-synaptic neurons thus become adept at recognizing that pattern.

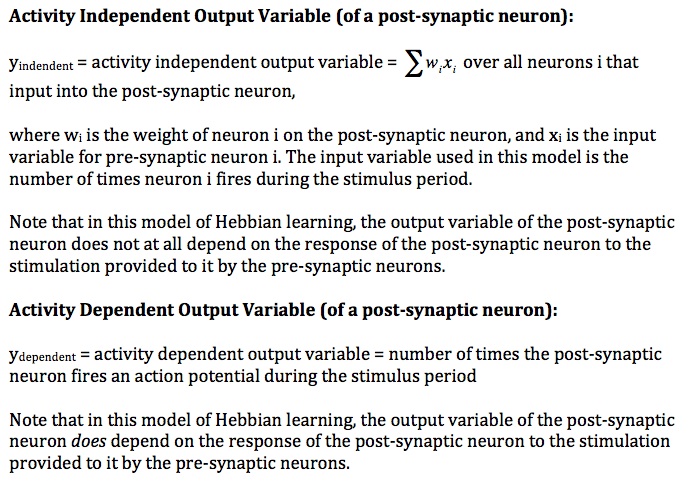

Hebbian synapses work on the principle that “neurons that fire together tend to wire together”. More specifically, if two neurons are connected such that neuron i synapses on neuron j, the synapse from neuron i onto neuron j will be strengthened if neuron i fires slightly before neuron j becomes depolarized. Two foreseeable consequences of this Hebbian rule for synaptic plasticity are specificity and associativity (see the image below [4]). Specificity implies that only pre-synaptic neurons that are active during the depolarization of the post-synaptic neuron tend to have their synapses on that neuron strengthened.

[7] Purves figure 24.8 page 587

Associativity implies that two pre-synaptic neurons that fire at the same time, together depolarizing the same post-synaptic cell, will tend to have their synapses on the post-synaptic cell strengthened more than if either cell had fired alone. The greater depolarization produced by the cells firing together causes the two pre-synaptic neurons to associate on the post-synaptic neuron. Further, a pre-synaptic cell with a strong synapse on a post-synaptic cell can pull more weakly connected cells towards that post-synaptic cell, provided the weaker cells fire at the same time as the strongly connected cell.

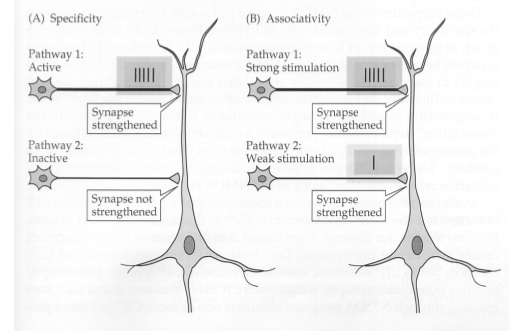

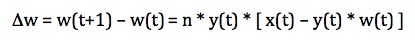

The Hebbian rule for synaptic plasticity is easily converted into a numerical algorithm for updating the weights of synapses. In a system with discrete time steps, the weights of the synapses are adjusted by the equation

$$\Delta w_i = n \, x_i \, y$$

after each discrete time step. Here, the change in the weight (w) of the ith synapse on the post-synaptic neuron is equal to the product of the learning rate, n, the input of neuron i on the post-synaptic cell, x, and the post-synaptic cell response, y (also known as the output variable). The post-synaptic response (output variable) can be defined in several ways (see the end of this page).
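As a rough illustration of the update just described, the following Python sketch applies the plain Hebbian rule to a single post-synaptic cell. The function names, the starting weights, the input pattern, and the choice of a weighted sum as the output variable are all assumptions made for this example; they are not taken from the project's Matlab code.

```python
def linear_output(weights, inputs):
    """Assumed output variable: the weighted sum of the inputs."""
    return sum(w * x for w, x in zip(weights, inputs))


def hebbian_update(weights, inputs, output, learning_rate):
    """One Hebbian step: each weight w_i grows by n * x_i * y."""
    return [w + learning_rate * x * output for w, x in zip(weights, inputs)]


# Hypothetical setup: inputs 0 and 1 fire together, input 2 stays silent.
weights = [0.1, 0.1, 0.1]
pattern = [1.0, 1.0, 0.0]
n = 0.1  # learning rate

for step in range(50):
    y = linear_output(weights, pattern)
    weights = hebbian_update(weights, pattern, y, n)

print(weights)
```

In this sketch the two co-active synapses grow without bound as the pattern is repeated, while the silent synapse never changes, which is the divergence problem that motivates a normalizing rule.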

Oja's rule is a slight modification of the Hebbian rule that prevents the weights of the synapses from diverging to infinity. It adds a decay term that acts to keep the weights normalized as they are updated. Oja's rule for updating the weight of a synapse is given by the equation

$$\Delta w_i(t) = n \, y(t) \left[ x_i(t) - y(t) \, w_i(t) \right]$$

after a discrete time step (t). Here, the change in the weight (w) of the synapse from a given input neuron is a function of the input, x, the post-synaptic cell response (output variable), y, the weight of the synapse before the update, and the learning rate, n.
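The normalizing effect can be checked numerically. The sketch below uses the standard form of Oja's rule, Δw_i = n · y · (x_i − y · w_i), with a weighted-sum output; the input pattern, starting weights, and function name are assumptions chosen only for illustration.

```python
import math


def oja_update(weights, inputs, learning_rate):
    """One step of Oja's rule with y taken as the weighted sum of inputs."""
    y = sum(w * x for w, x in zip(weights, inputs))
    return [w + learning_rate * y * (x - y * w)
            for w, x in zip(weights, inputs)]


# Hypothetical setup: inputs 0 and 1 fire together, input 2 stays silent.
weights = [0.1, 0.1, 0.1]
pattern = [1.0, 1.0, 0.0]
n = 0.1  # learning rate

for step in range(500):
    weights = oja_update(weights, pattern, n)

# Euclidean length of the weight vector after training.
norm = math.sqrt(sum(w * w for w in weights))
print(weights, norm)
```

With the same repeated pattern that makes the plain Hebbian weights diverge, the length of the weight vector here settles near 1, and the weight of the silent input decays towards zero.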

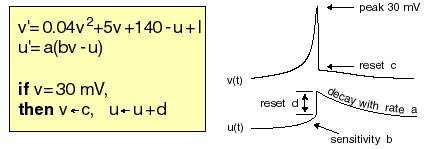

I ran all simulations with the Izhikevich model in Matlab. The Izhikevich model is a two-dimensional system of ordinary differential equations. While it is not biophysically accurate in the sense of Hodgkin-Huxley-type models, it captures the major aspects of neural network behavior that were necessary to complete this project. A possible future improvement would be to use a more biophysically accurate neuron model.

Image courtesy of www.izhikevich.com. Electronic version of the figure above and reproduction permissions are freely available at www.izhikevich.com.

The details of the parameters and a good explanation of the model can be found on this page. All neurons used in my model are tonic spiking neurons with the following parameters:

a = 0.02

b = 0.2

c = -65

d = 6

I = 14
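For readers without Matlab, a single neuron with these parameters can be sketched in Python. The equations are the published Izhikevich model, v' = 0.04v² + 5v + 140 − u + I and u' = a(bv − u), with the reset v ← c, u ← u + d once v reaches 30 mV. The forward-Euler integration, the 0.25 ms step, the 200 ms duration, and the initial conditions are assumptions of this sketch, not settings taken from the project.

```python
def simulate_izhikevich(a=0.02, b=0.2, c=-65.0, d=6.0, I=14.0,
                        t_max=200.0, dt=0.25):
    """Euler-integrate one Izhikevich neuron; return spike times in ms."""
    v = c        # membrane potential in mV (assumed initial condition)
    u = b * v    # recovery variable (assumed initial condition)
    spike_times = []
    for k in range(int(t_max / dt)):
        if v >= 30.0:
            # Spike detected: record it, then reset v and bump u.
            spike_times.append(k * dt)
            v = c
            u += d
        # Forward-Euler step of the two coupled equations.
        v += dt * (0.04 * v * v + 5.0 * v + 140.0 - u + I)
        u += dt * a * (b * v - u)
    return spike_times


spikes = simulate_izhikevich()
intervals = [t2 - t1 for t1, t2 in zip(spikes, spikes[1:])]
print(len(spikes), intervals)
```

With a constant input current the neuron should fire repeatedly for as long as the current is applied, which is the tonic spiking behavior referred to above.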

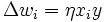

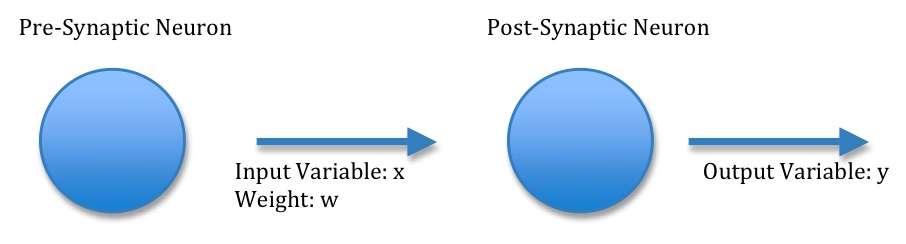

The purpose of this project was to test the efficacy of using an activity-independent output variable versus an activity-dependent output variable in the updating of synapse strengths. In an actual biological system, the updating of synapses is hypothesized to occur through long-term potentiation, mediated by AMPA and NMDA receptors [4]. The molecular mechanism of Hebbian learning is not the concern of this project. Rather, I was interested in whether the updating of synapses would be more effective if strengthening required post-synaptic cell activity in the form of action potentials, rather than simply depolarization of the post-synaptic neuron. In other words, I wanted to test whether Hebbian learning is more or less effective when the synapses connecting pre-synaptic cells to post-synaptic cells are strengthened only when the post-synaptic cells fire immediately after the pre-synaptic cells fire. Current evidence indicates that depolarization of the post-synaptic cell, coupled to the firing of pre-synaptic cells, is all that is necessary to strengthen those synapses. Thus, in the currently accepted model, post-synaptic cells may never fire in the presence of pre-synaptic cell activity, and synapse strengths would still be adjusted by the Hebbian algorithm.

In this project I refer to an activity-independent output variable and an activity-dependent output variable. An activity-independent output variable is one where synaptic plasticity of pre-synaptic cells on the post-synaptic cell requires no firing of the post-synaptic cell for Hebbian learning. An activity-dependent output variable is one where the post-synaptic cell must fire before the synapse is actually strengthened. It is not enough that pre-synaptic cells fire together and create depolarization; they must together cause the post-synaptic cell to fire, or their synapses will not be strengthened.
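The distinction can be made concrete with a small Python sketch. This is only one possible reading of the two definitions: the depolarization is taken to be the weighted sum of the inputs, and the firing threshold of 1.0, the function names, and the numbers are all hypothetical. The definitions actually used in the project may differ.

```python
def activity_independent_output(depolarization):
    """y follows the post-synaptic depolarization; no spike is required."""
    return depolarization


def activity_dependent_output(depolarization, post_fired):
    """y is nonzero only when the post-synaptic cell actually fired."""
    return depolarization if post_fired else 0.0


def hebbian_step(weights, inputs, y, n):
    """Plain Hebbian update: each weight w_i grows by n * x_i * y."""
    return [w + n * x * y for w, x in zip(weights, inputs)]


weights = [0.2, 0.2]
inputs = [1.0, 1.0]   # both pre-synaptic cells fire together
n = 0.1               # learning rate
threshold = 1.0       # hypothetical firing threshold

depolarization = sum(w * x for w, x in zip(weights, inputs))
post_fired = depolarization >= threshold  # sub-threshold here, so False

w_independent = hebbian_step(
    weights, inputs, activity_independent_output(depolarization), n)
w_dependent = hebbian_step(
    weights, inputs, activity_dependent_output(depolarization, post_fired), n)

print(w_independent, w_dependent)
```

In this sub-threshold case the activity-independent variable still strengthens both synapses, while the activity-dependent variable leaves them unchanged because the post-synaptic cell never fired.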

The two types of output variables used in this project are summarized below: