Results

Here is a video of the functional system.

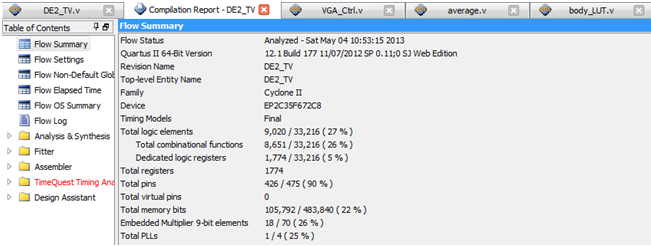

The entire system used 27% of the logic elements and 22% of the memory on the DE2 board. The compilation report of the final design is shown below:

Here are a few pictures of the system at work:

Future Improvements

Since different body parts are recognized based on the location of the skin pixels in the camera frame, the system cannot distinguish two body parts that fall in the same frame region. One potential improvement for this project would be to convolve an eigenface with each frame to identify the location and size of the head and its distance from the camera. One could also color-code the user's arms with some sort of identifier to distinguish the left arm from the right arm and track each arm individually. Another interesting extension would be to track the arms using only skin pixels, but with three separate downsampled frames that store the head, left arm, and right arm individually. Because human movements are slow compared to the VGA frame refresh rate, one could allow only small frame-to-frame transitions for each body part. In that case, even with skin color detection alone, one would be able to tell the two arms apart when they are in the same frame region.
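The small-transition idea can be illustrated with a short software model. This is only a sketch in Python, not the Verilog used in the project: the function name `update_centroid`, the `max_step` window, and the pixel coordinates are all hypothetical, and a hardware version would need fixed-point accumulators instead of floating-point averages. Each body part keeps its previous centroid and only averages the skin pixels that lie within a small window around it, so two arms in the same frame region can still be updated separately.

```python
from typing import Iterable, Tuple

Point = Tuple[float, float]


def update_centroid(prev: Point,
                    skin_pixels: Iterable[Tuple[int, int]],
                    max_step: float) -> Point:
    """Average the skin pixels within max_step (per axis) of prev.

    Assumption for illustration: a body part cannot move more than
    max_step pixels along either axis between consecutive frames.
    If no skin pixel falls inside the window, the old centroid is kept.
    """
    px, py = prev
    sum_x = sum_y = count = 0
    for x, y in skin_pixels:
        if abs(x - px) <= max_step and abs(y - py) <= max_step:
            sum_x += x
            sum_y += y
            count += 1
    if count == 0:
        return prev
    return (sum_x / count, sum_y / count)


if __name__ == "__main__":
    # Hypothetical skin pixels from one downsampled frame: both arms
    # appear in the same region, a few pixels apart.
    frame = [(11, 20), (12, 21), (29, 20), (31, 22)]
    left = update_centroid((10.0, 20.0), frame, max_step=4)
    right = update_centroid((30.0, 20.0), frame, max_step=4)
    print("left arm:", left)
    print("right arm:", right)
```

Choosing `max_step` is the main trade-off in this sketch: too small and a fast arm movement is lost, too large and the two windows overlap so the arms merge again.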

Conclusion

In this project, I successfully created a real-time upper body movement tracker using only skin detection and frame partitioning. The user can interact with the system through a set of switches. The system was able to track movements of the user's head and arms quite accurately using only skin color detection and several averaging modules. The entire system was written in Verilog and used only 27% of the logic elements and 22% of the memory on Altera's DE2 board. If I had more time, I would love to try isolating left and right arm tracking using only skin detection, combined with motion tracking and averaging.