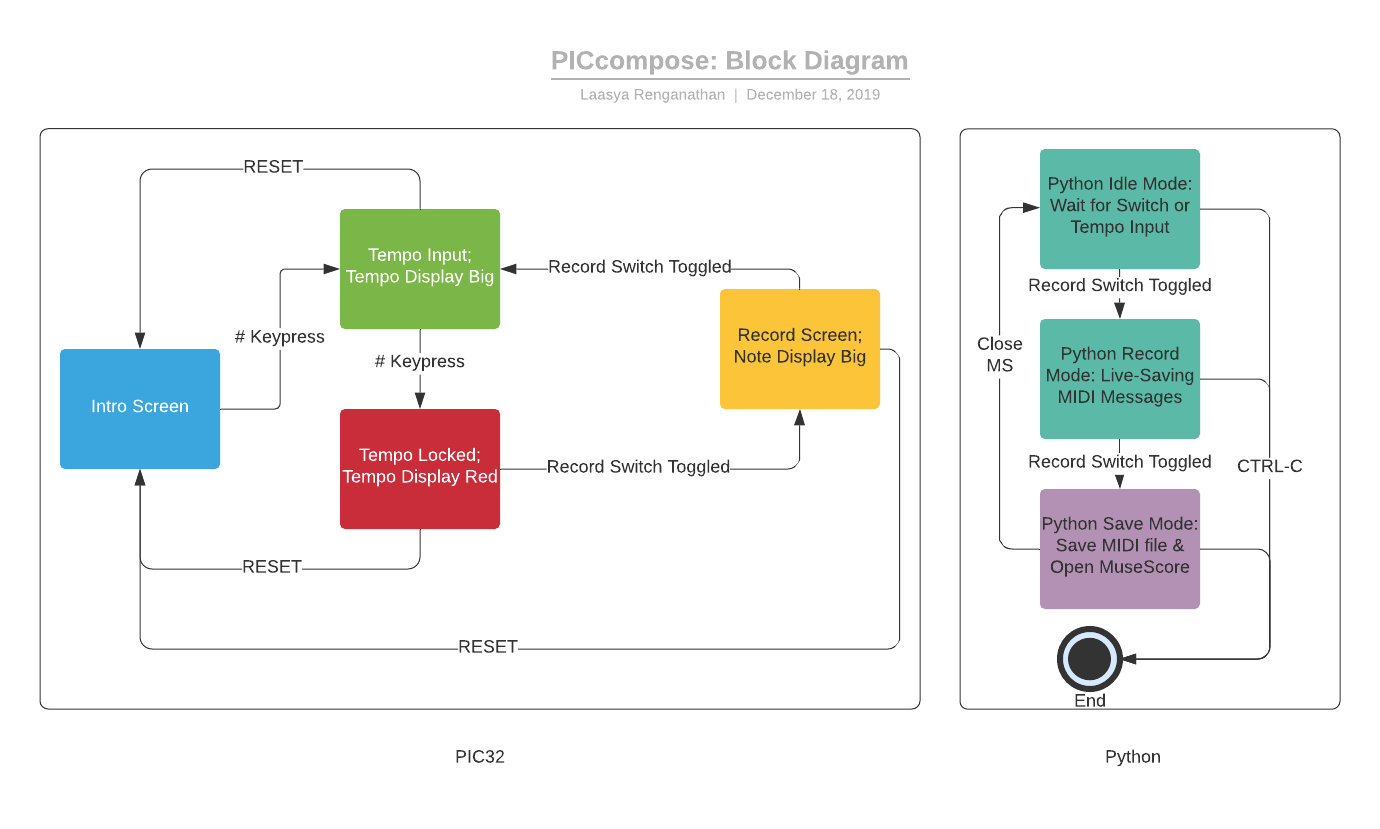

Software: Overview

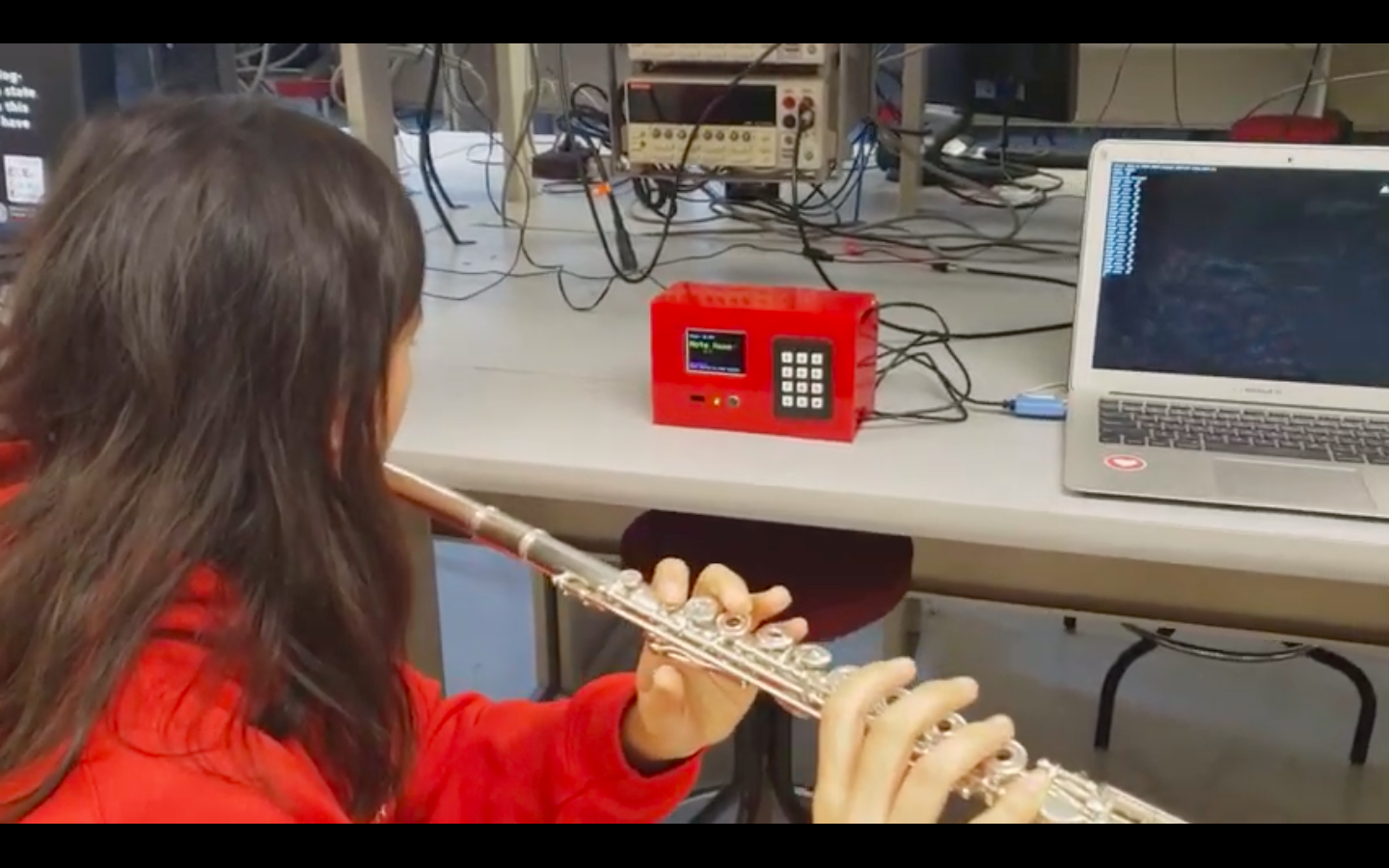

Our software consisted of a ProtoThreads C implementation running on the PIC32 and a Python script running on an external computer.

In the C code, we had an FFT function implementation, which an FFT thread would call.

That thread found the peak frequency from the FFT and calculated the corresponding MIDI number and octave.

A metronome thread toggled an LED at a user-input tempo, thanks to a variable yield time.

A timer thread running every 1ms redrew the TFT screen when transitioning in and out of “record” mode, displayed the note currently being played on the TFT screen, and sent serial note information to the external computer.

Additionally, we included a keypad thread, which read input from the keypad and handled tempo locking along with other user inputs.

The main C function set up all of these threads and displayed an intro screen welcoming the user to PICcompose.

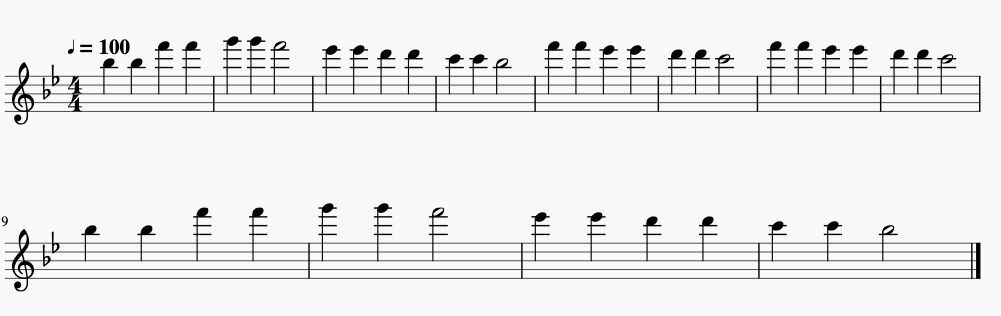

On the Python side, the serial data sent over from the PIC is converted to MIDI messages and saved to a file.

Finally, a subprocess call is made to open up MuseScore where the recorded MIDI file can be displayed!

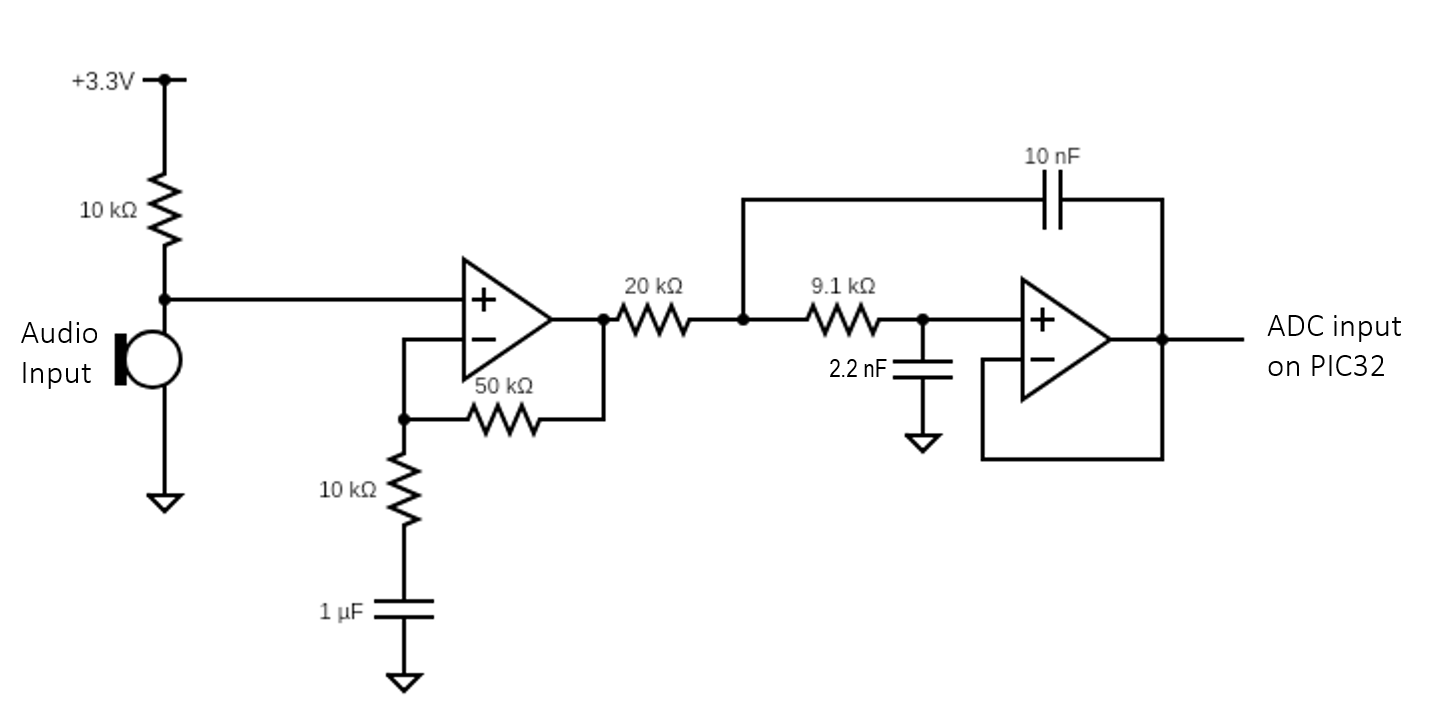

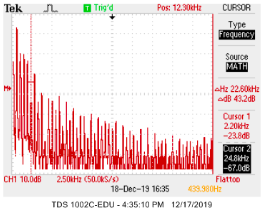

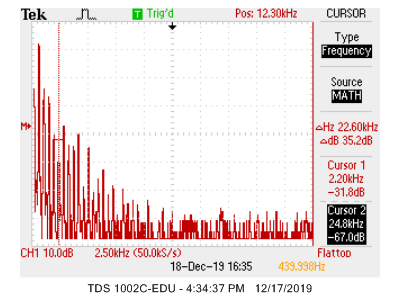

Audio Processing: FFT and Note Detection

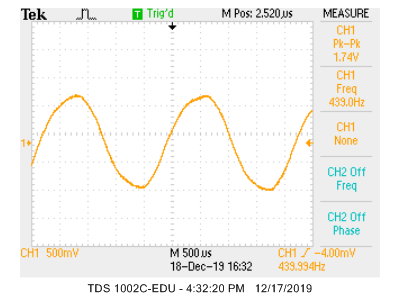

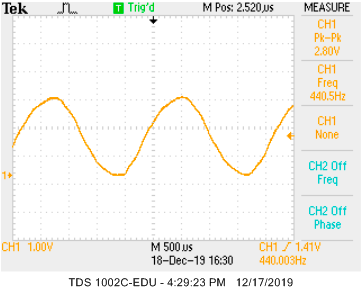

In order to interpret the notes in our audio data, we ran an FFT on the data coming into the ADC from the microphone circuit.

Our FFT code was adapted from sample code on the ECE 4760 website.

We used the same function and FFT thread, but changed the resolution of the FFT from a 512-point to a 1024-point FFT in order to more accurately distinguish between notes in our frequency range of interest.

Above middle C (C4), adjacent notes are roughly 16Hz or more apart (C4 to C#4 is about 15.6Hz), and in the main range of a flute the spacing between notes is larger still.

With an 8kHz sampling rate, a 1024-point FFT gives us a bin width of 8000/1024, or about 7.8Hz, which allows us to tell apart the notes we care about.
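The resolution argument above can be checked with a few lines of arithmetic. This is only an illustrative sketch: the function names are made up for this example, and the note spacing assumes standard equal temperament with A4 = 440Hz.

```python
SAMPLE_RATE_HZ = 8000  # sampling rate stated in the text


def bin_width_hz(n_points, sample_rate_hz=SAMPLE_RATE_HZ):
    """Frequency spacing between adjacent FFT bins."""
    return sample_rate_hz / n_points


def semitone_gap_hz(midi_note):
    """Gap in Hz between a note and the semitone above it (A4 = 440 Hz)."""
    freq = 440.0 * 2 ** ((midi_note - 69) / 12)
    return freq * (2 ** (1 / 12) - 1)


print(bin_width_hz(512))    # 15.625
print(bin_width_hz(1024))   # 7.8125
print(semitone_gap_hz(60))  # gap between C4 and C#4, in Hz
```

With 512 points the bin width is about the same size as the C4 to C#4 gap, so neighboring notes could land in the same or adjacent bins; doubling the FFT length halves the bin width.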

To reduce the high-frequency artifacts (spectral leakage) caused by the abrupt start and stop of the FFT sampling, a ramp function was used.

Essentially, this ramp tapered the beginning and end of each block of samples before the FFT was computed.
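One way to picture such a ramp is as a trapezoidal window: the gain rises linearly from zero at the start of the block, stays at one in the middle, and falls back to zero at the end. The sketch below is an assumption about the window's shape, and the ramp length of 32 samples is a hypothetical value, not taken from our code.

```python
def trapezoid_window(n_samples, ramp_len):
    """Gain per sample: linear ramp up, flat top, linear ramp down."""
    window = []
    for i in range(n_samples):
        if i < ramp_len:
            window.append(i / ramp_len)                    # ramp up from 0
        elif i >= n_samples - ramp_len:
            window.append((n_samples - 1 - i) / ramp_len)  # ramp down to 0
        else:
            window.append(1.0)                             # untouched middle
    return window


def apply_window(samples, window):
    """Scale each sample by its window gain before running the FFT."""
    return [s * w for s, w in zip(samples, window)]


window = trapezoid_window(1024, 32)  # 32 is a hypothetical ramp length
```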

In the FFT thread, we added calculations to determine the highest-amplitude frequency and convert it into a note MIDI number.

To do this we simply added to a pre-existing loop that iterates through the FFT bins, comparing the amplitudes and saving both amplitude and frequency of any bin with a higher amplitude than the previously saved highest amplitude.

The frequency is calculated by multiplying the bin width (in our case, about 7.8Hz) by the bin number.

Due to the presence of some large low-frequency components, we ignore the first 15 bins, giving us a frequency detection threshold of 117Hz.
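The peak search described above can be sketched as follows. This is a Python illustration rather than the C code running on the PIC32; the names `peak_frequency` and `FIRST_BIN` are invented for the example, and the input is assumed to be a list of bin magnitudes.

```python
SAMPLE_RATE_HZ = 8000
N_POINTS = 1024
BIN_WIDTH_HZ = SAMPLE_RATE_HZ / N_POINTS  # 7.8125 Hz per bin
FIRST_BIN = 15                            # bins 0..14 are ignored


def peak_frequency(magnitudes):
    """Return (frequency_hz, amplitude) of the strongest bin at or above FIRST_BIN."""
    max_amp = 0.0
    max_bin = 0
    for k in range(FIRST_BIN, len(magnitudes)):
        if magnitudes[k] > max_amp:  # keep the largest amplitude seen so far
            max_amp = magnitudes[k]
            max_bin = k
    return max_bin * BIN_WIDTH_HZ, max_amp


# A large low-frequency component in bin 3 is skipped; bin 56 wins.
mags = [0.0] * 512
mags[3] = 100.0
mags[56] = 10.0
print(peak_frequency(mags))  # (437.5, 10.0)
```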

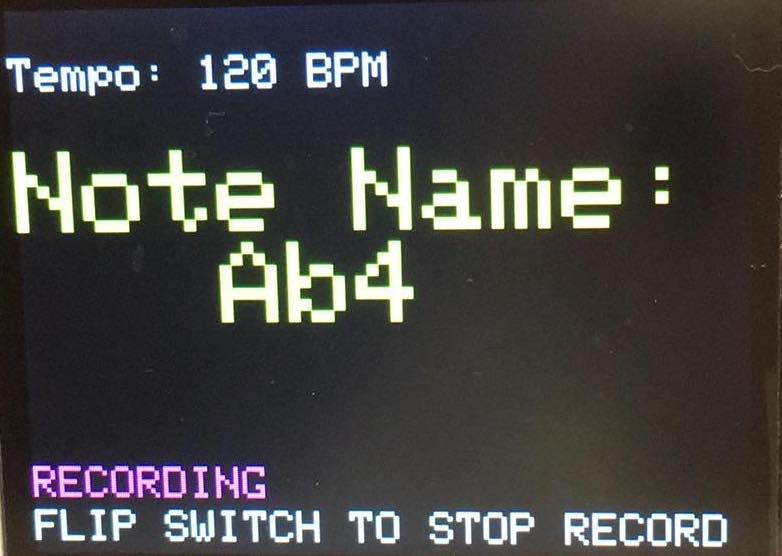

Once we know the strongest frequency present in the FFT, we convert the frequency to its MIDI number using the following equation, where m is the MIDI number and fm is the note frequency in Hz:

m = 69 + 12 * log2(fm / 440)

This MIDI number is saved as the current MIDI note (the note from the most recent run of the FFT), and used later in the serial communication.

We also calculate which octave the note is in from its MIDI number, which is used when we display the note name to the TFT screen.
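As a rough illustration of the conversion and octave calculation, the sketch below uses the standard equal-temperament relation (A4 = 440Hz = MIDI 69) and the common convention that MIDI 60 is C4. The function names and the sharp-only note spelling are assumptions made for this example.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def freq_to_midi(freq_hz):
    """Nearest MIDI note number for a frequency in Hz."""
    return round(69 + 12 * math.log2(freq_hz / 440.0))


def midi_to_name(midi):
    """Note name with octave, using the convention that MIDI 60 is C4."""
    octave = midi // 12 - 1
    return f"{NOTE_NAMES[midi % 12]}{octave}"


midi = freq_to_midi(437.5)       # bin 56 of the 1024-point FFT
print(midi, midi_to_name(midi))  # 69 A4
```

Because the FFT only reports multiples of the bin width, rounding to the nearest MIDI number is what absorbs the small error between the bin center and the true pitch.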

Audio Processing: Metronome and Timing Threads

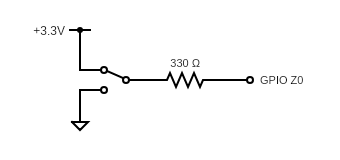

An entire thread was dedicated to the metronome aspect of record mode.

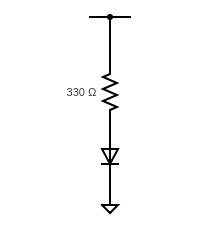

The metronome thread was responsible for blinking the LED at the user-set tempo.

To implement this, the frequency of the metronome was changed every time the tempo was set.

Each time this thread ran, it toggled the LED.

By using the BPM to determine the thread’s yield time, we tied the rate at which the thread runs, and therefore the rate at which the LED toggles, to the tempo.
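The relationship between tempo and yield time is simple arithmetic, sketched below. The number of LED toggles per beat is an assumption here (two toggles, one on and one off, would give one flash per beat); the function name is hypothetical.

```python
MS_PER_MINUTE = 60000


def toggle_interval_ms(bpm, toggles_per_beat=2):
    """Yield time in ms between LED toggles for a given tempo.

    toggles_per_beat=2 (an assumption) means the LED turns on and off
    once per beat.
    """
    return MS_PER_MINUTE // (bpm * toggles_per_beat)


print(toggle_interval_ms(120))    # 250
print(toggle_interval_ms(60, 1))  # 1000
```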

The timing thread was primarily responsible for sending the MIDI numbers to the external computer via serial communication.

This thread runs every millisecond, and its behavior depends on whether the system is in record mode.

While in record mode, whenever a note starts or stops, this thread sends a serial message over UART to the listening Python script containing the note’s MIDI number and the millisecond time stamp.

It does so by saving the previous note’s MIDI number as well as the current one and comparing these to each other as well as to the “zero” frequency, which is the frequency registered by the FFT when no note is being played.
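The comparison logic can be sketched as below. This is a Python illustration of the idea, not the C thread itself: `ZERO_NOTE` is a hypothetical sentinel standing in for the “zero” frequency, and the exact message layout (keyword, MIDI number, time in milliseconds, separated by spaces) is assumed from the description of the Python side.

```python
ZERO_NOTE = 0  # hypothetical sentinel meaning "no note is being played"


def note_events(prev_midi, curr_midi, time_ms):
    """Serial messages to send when the detected note changes."""
    messages = []
    if curr_midi != prev_midi:
        if prev_midi != ZERO_NOTE:   # a real note just ended
            messages.append(f"STOP {prev_midi} {time_ms}")
        if curr_midi != ZERO_NOTE:   # a real note just began
            messages.append(f"START {curr_midi} {time_ms}")
    return messages


print(note_events(0, 69, 100))   # ['START 69 100']
print(note_events(69, 71, 900))  # ['STOP 69 900', 'START 71 900']
```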

The UART messages are sent by spawning the thread PT_DMA_PutSerialBuffer.

The thread also increments the recording time each time it executes in record mode.

Finally, it prints the name of the note being played to the TFT display, both in and out of record mode, and redraws the whole TFT display when the system transitions into or out of record mode.

Serial: Python and MIDI

We used a Python script in order to generate the actual MIDI files by listening for serial inputs, primarily using a library called mido for MIDI parsing and the pySerial library to read in the serial stream.

The first thing the script does is initialize a MidiFile object, fill it with the default data (common to all MIDI files), and establish a serial connection at 38400 baud.

On the computer end, that connection involved downloading the Prolific drivers for the UART cable, which can be found here for macOS.

A few default global values are also set, such as a serial timeout length, whether or not the system is in record mode, and some tempo-related data.

From here, an infinite while loop was used to process the serial stream.

When a command is sent over by the PIC, the script splits the message using spaces as delimiters and saves it in a message array.

The loop then goes through a series of cases depending on what is contained in the message.

The five valid serial messages begin with “BPM”, “BEGIN”, “START”, “STOP”, and “END”.

“BPM” was used to set the tempo, “BEGIN” and “END” were used to define the recording interval, and “START” and “STOP” were used for individual note length and value determination.

If the message contains “BPM”, the next value in the array is assumed to be a tempo in beats per minute that the user input from the keypad.

That value was saved as a global variable, which all note length calculations used, and also written to the MidiFile object.

If the script received a message with “BEGIN” in it, the system was put into record mode by setting the recording global variable to True.

The “START” and “STOP” messages performed similar functions by writing MIDI bytes to the MidiFile object corresponding to when a note started and stopped.

In the split array, following the “START” or “STOP” keywords, two pieces of information were sent over by the PIC: MIDI note and global time in milliseconds.
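The dispatch over the five keywords might look roughly like the sketch below. It leaves out the serial port and the MidiFile object so that it runs on its own: the `state` dictionary, the `handle_line` name, and the choice to match on the first token are all assumptions made for this illustration.

```python
def handle_line(line, state):
    """Update state from one serial message; return the keyword handled."""
    msg = line.split()  # split on whitespace into the message array
    if not msg:
        return None
    keyword = msg[0]
    if keyword == "BPM":
        state["bpm"] = int(msg[1])  # tempo entered on the keypad
    elif keyword == "BEGIN":
        state["recording"] = True
    elif keyword in ("START", "STOP"):
        if state["recording"]:
            # (keyword, MIDI note, global time in ms)
            state["events"].append((keyword, int(msg[1]), int(msg[2])))
    elif keyword == "END":
        state["recording"] = False
    else:
        return None  # unrecognized message, ignored
    return keyword


state = {"bpm": 120, "recording": False, "events": []}
for line in ["BPM 90", "BEGIN", "START 69 1000", "STOP 69 1667", "END"]:
    handle_line(line, state)
print(state["bpm"], state["events"])
```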

When writing out the command in MIDI bytes, four numbers are required (delta time, MIDI message type, note number, velocity).

We could use the note number directly, along with a default value for velocity and the same MIDI message type (note_on) for all notes.

However, we needed to perform some additional processing to get the delta time value required.

MIDI files measure time in “ticks” rather than milliseconds, and the PPQ (ticks per quarter note) defaults to 480 for MIDI files created with mido.

Both “START” and “STOP” messages required a delta time (that’s how rests are encoded), so we could calculate them similarly using the following formula:

tick_diff = (start ms - last ms) * (1min / 60000ms) * (ticks/qn * qn/min)

Essentially, we found the difference in milliseconds between the current note and the last note, converted that time to minutes, and then used the BPM (qn/min) and PPQ (ticks/qn) to get the note length in ticks, and wrote that number to the MidiFile object.
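The formula above translates directly into code. The sketch below is illustrative only; the function name is made up, and the default PPQ of 480 is the value mentioned in the text.

```python
PPQ = 480  # ticks per quarter note


def ms_to_ticks(start_ms, last_ms, bpm, ppq=PPQ):
    """Convert a millisecond gap into MIDI ticks at the given tempo."""
    minutes = (start_ms - last_ms) / 60000  # ms -> minutes
    return minutes * bpm * ppq              # minutes * qn/min * ticks/qn


# At 120 BPM a quarter note lasts 500 ms, which is 480 ticks.
print(ms_to_ticks(1500, 1000, 120))  # 480.0
```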

Unfortunately, this system turned out to be too fine-grained, and resulted in random rests and note blips of sixteenth-note value or less.

To mitigate this, we reduced our “resolution” to quarter note lengths, i.e. the shortest note length possible was a quarter note.

Messages sent with calculated delta time values of less than half a quarter note were ignored, and everything else was “snapped” to a quarter note length using integer division and rounding.
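A minimal sketch of this snapping step is shown below. It assumes that longer durations snap to the nearest whole number of quarter notes, which is one reading of the description; the function name and the use of `None` for ignored messages are choices made for this example.

```python
def snap_to_quarters(ticks, ppq=480):
    """Snap a delta time to quarter-note multiples, or None if too short."""
    if ticks < ppq / 2:
        return None  # shorter than half a quarter note: ignore
    # Add half a quarter note, then integer-divide, to round to nearest.
    return ((int(ticks) + ppq // 2) // ppq) * ppq


print(snap_to_quarters(100))  # None
print(snap_to_quarters(500))  # 480
print(snap_to_quarters(720))  # 960
```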

Apart from note length determination, some additional filtering (such as removing consecutive notes with an octave or more of a difference) was done in the loop.
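One possible form of the octave filter is sketched below. The details are an assumption: here each incoming note is compared with the last note that was kept, and dropped if it is 12 or more semitones away. The function name is hypothetical.

```python
OCTAVE = 12  # semitones


def drop_octave_jumps(notes):
    """Remove notes an octave or more away from the previously kept note."""
    kept = []
    for note in notes:
        if kept and abs(note - kept[-1]) >= OCTAVE:
            continue  # likely a harmonic or a misdetection, skip it
        kept.append(note)
    return kept


print(drop_octave_jumps([69, 71, 84, 72]))  # [69, 71, 72]
```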

Finally, when the script received an “END” message, the recording global variable was set to False, and the MidiFile object was saved to a .mid file with the name “recording”+the current date and time in ISO format.
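The file name can be built with the standard library, as in the sketch below. The function name and the example date are hypothetical; the resulting string would then be passed to the MidiFile object's `save()` method.

```python
from datetime import datetime


def recording_filename(now=None):
    """Build 'recording' + ISO-format date and time + '.mid'."""
    now = now or datetime.now()
    return "recording" + now.isoformat() + ".mid"


# Example with a fixed, made-up timestamp:
print(recording_filename(datetime(2019, 12, 10, 14, 30, 0)))
# recording2019-12-10T14:30:00.mid
```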

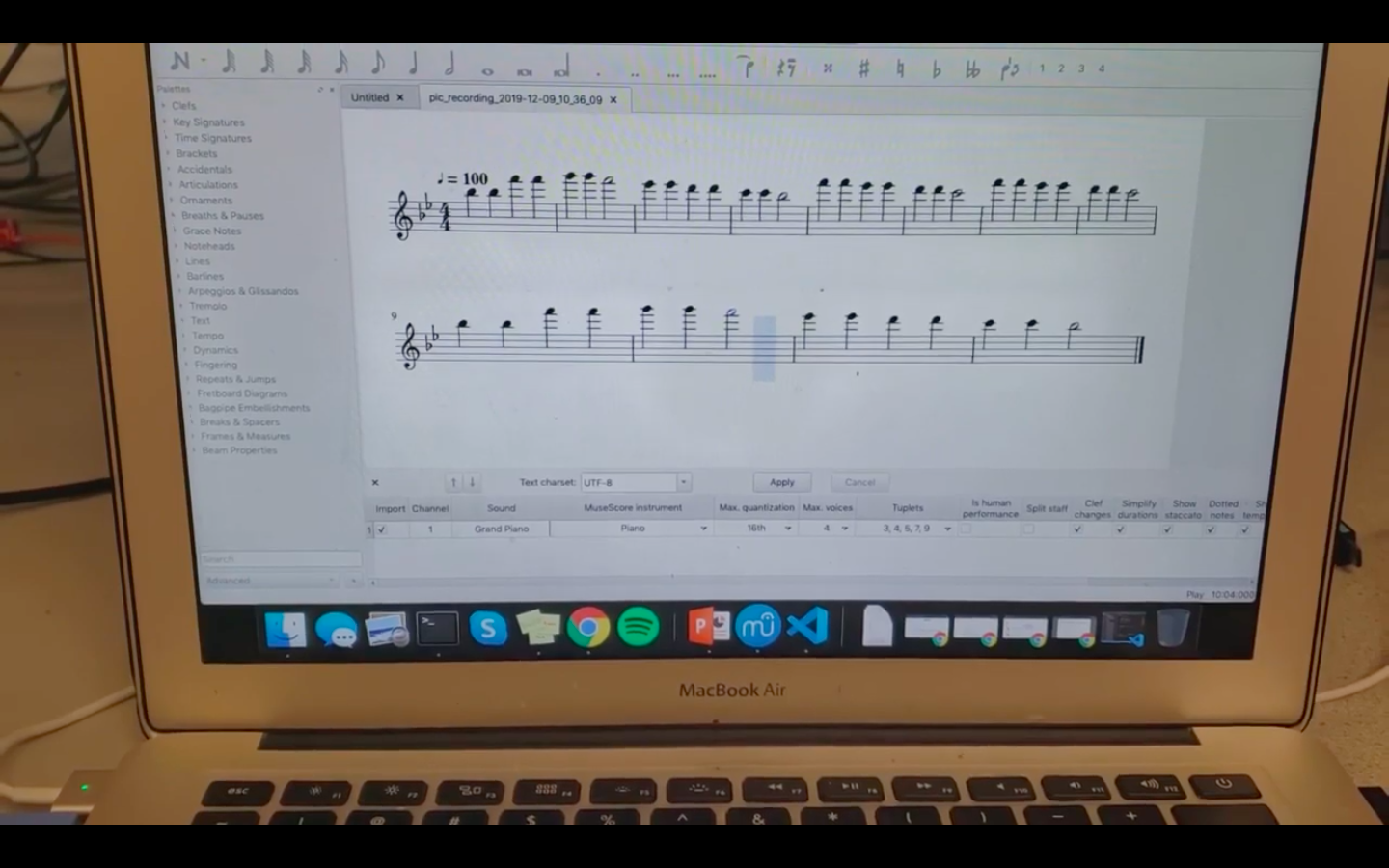

The Python script then triggered a subprocess call that would open up the MuseScore application on the computer, where a user could open up the created MIDI file.

Once the MuseScore application was closed, the loop could be restarted with the defaults reset, in order to make a new recording.

Controls: User Interface

The user interface involved a series of panes drawn using the functions from the TFT library.

The first pane is an intro screen only displayed once, when the system is first powered on.

The text is displayed using the printline() functions and the eighth note was drawn using the tft_drawLine() and tft_fillCircle() functions.

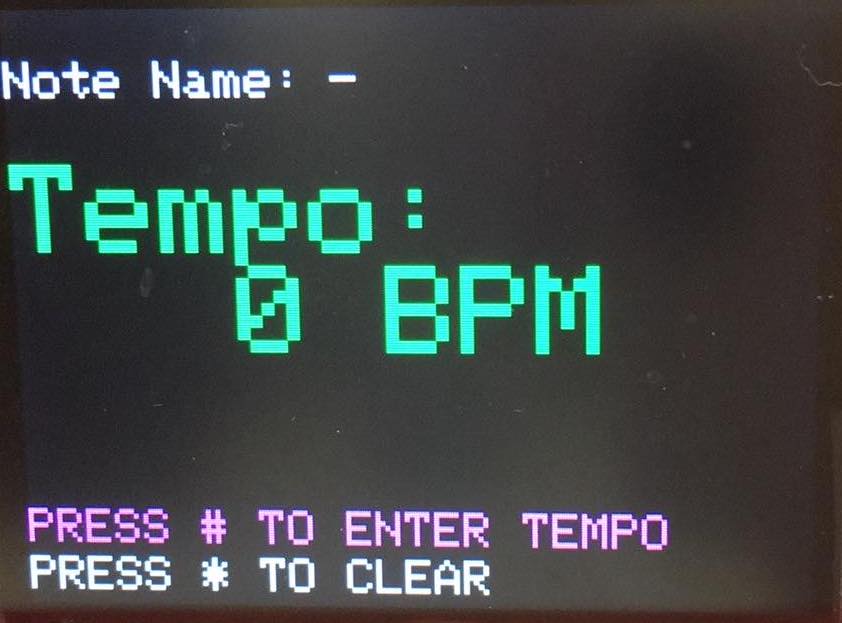

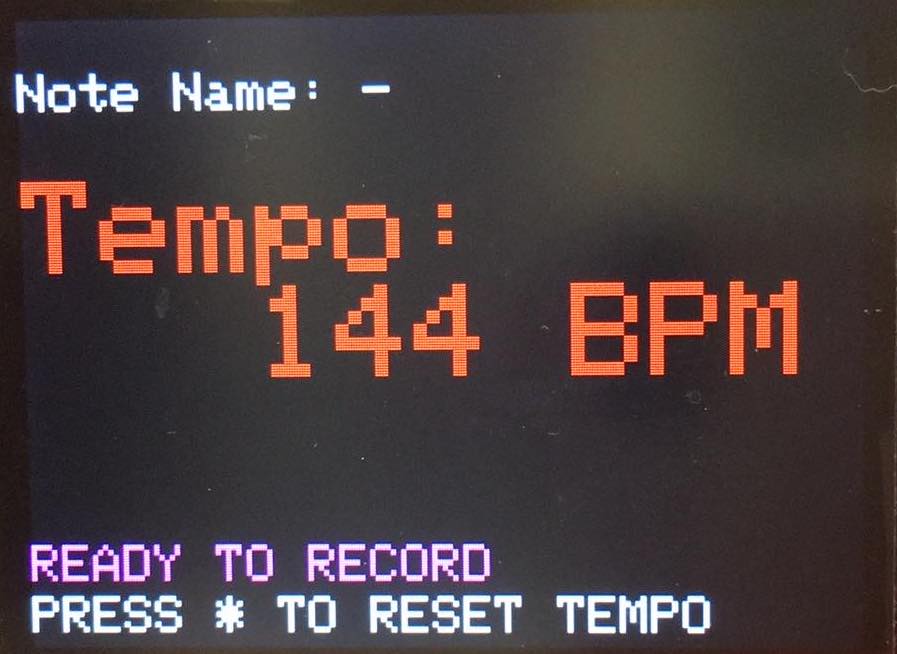

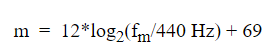

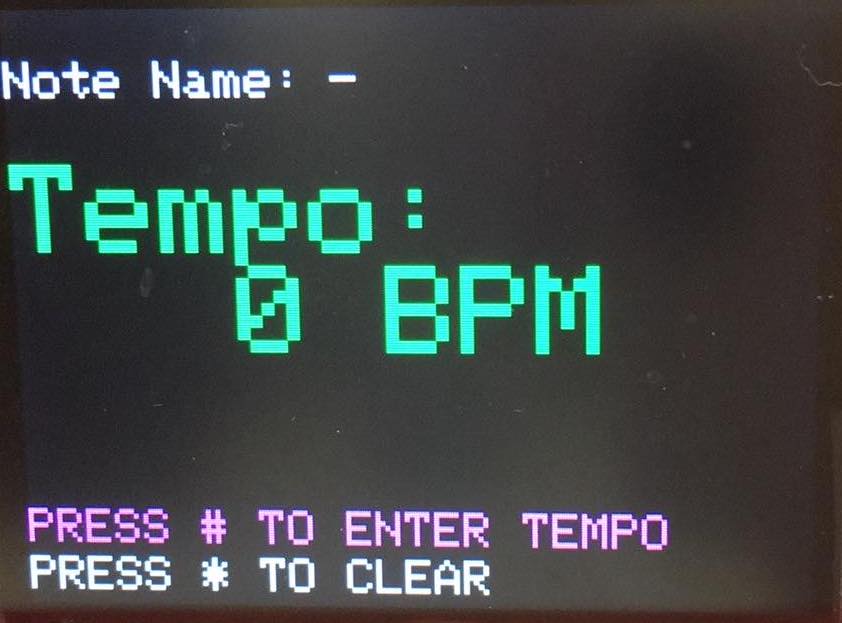

The following two panes are displayed to the user during the tempo input mode, and change based on button presses.

The first of the two is displayed as a user is inputting tempo, and the latter is displayed when the # key is pressed to "lock" the tempo input. The user prompts at the bottom change as well.

The tft_fillRoundRect() function is used to update parts of the display, such as the note currently being played.

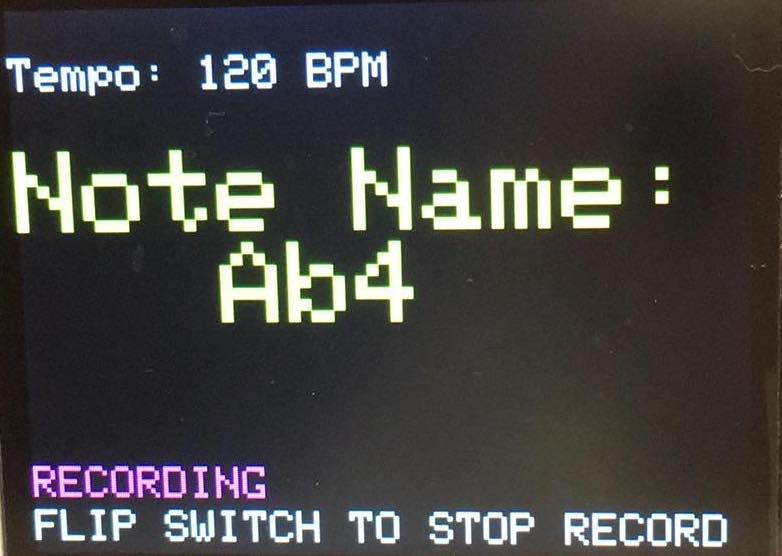

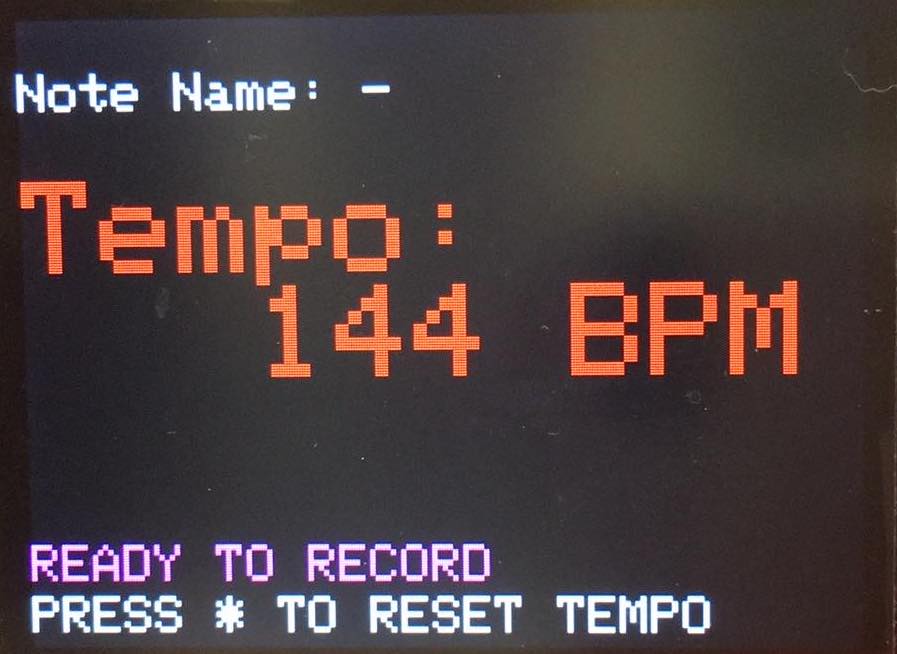

The final pane is shown when the record switch on the front of the box is flipped; flipping it back returns the user to the tempo input screen.

The main differences from the previous two panes, besides the color, are the user prompts at the bottom and the size of the tempo/note being displayed.

Both modes allow you to see both pieces of information, but one is more important than the other depending on the current mode.

All four panes are shown below:

Intro Screen

Tempo Set Screen

Tempo Locked Screen

Record Screen

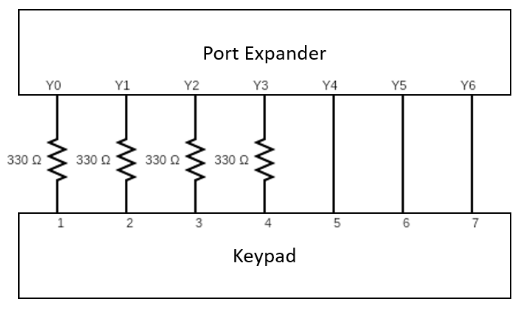

Controls: Keypad and Switches

The purpose of the keypad is to take user input to leave the start-up screen, and to take user input for setting the tempo.

A keypad thread was written to implement this functionality, and starts with a finite state machine that debounces key presses.

Keys are debounced to prevent a single key press from getting interpreted as multiple key presses.
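A debouncing state machine of this kind is often built from four states, as in the sketch below. The state names and the rule of requiring two consecutive matching reads are assumptions for illustration, not a transcription of our C thread.

```python
NO_PUSH, MAYBE_PUSH, PUSHED, MAYBE_NO_PUSH = range(4)


class Debouncer:
    """Report a key once, only after two consecutive matching reads."""

    def __init__(self):
        self.state = NO_PUSH
        self.candidate = None

    def update(self, key):
        """key is the raw keypad read (None if nothing is pressed)."""
        if self.state == NO_PUSH:
            if key is not None:
                self.candidate = key
                self.state = MAYBE_PUSH
        elif self.state == MAYBE_PUSH:
            if key == self.candidate:
                self.state = PUSHED
                return key            # confirmed press, reported once
            self.state = NO_PUSH      # it was a glitch
        elif self.state == PUSHED:
            if key != self.candidate:
                self.state = MAYBE_NO_PUSH
        elif self.state == MAYBE_NO_PUSH:
            if key == self.candidate:
                self.state = PUSHED   # release was a glitch
            else:
                self.state = NO_PUSH  # confirmed release
        return None


d = Debouncer()
reads = [None, "5", "5", "5", None, None]
print([d.update(k) for k in reads])  # [None, None, '5', None, None, None]
```

Holding the key produces only one reported press, because the machine stays in the pushed state until the release is confirmed.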

After the keys are debounced, this thread interprets which key was pressed and what action to take accordingly.

If the user is in tempo input mode and the tempo has not yet been locked in (via the press of the # button), this thread displays the key pressed on the TFT display in green.

This thread blocks users from entering tempos that are over three digits long.

If a user attempts to continue entering digits beyond three, these digits do not appear on the TFT display.

Once a user has entered their desired tempo, they press the # button.

The tempo is then locked, and further key presses are ignored unless the * button is pressed.

The * button allows users to clear and reset the tempo.
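The tempo entry rules above (at most three digits, # to lock, * to clear) can be captured in a small class. This is a sketch with hypothetical names; the 120 BPM fallback is the default the system uses when no tempo is entered.

```python
class TempoEntry:
    """Tracks digits typed on the keypad and the lock state."""

    MAX_DIGITS = 3

    def __init__(self):
        self.digits = ""
        self.locked = False

    def press(self, key):
        if key == "*":            # clear and unlock
            self.digits = ""
            self.locked = False
        elif self.locked:         # all other keys ignored while locked
            pass
        elif key == "#":          # lock the current tempo
            self.locked = True
        elif key.isdigit() and len(self.digits) < self.MAX_DIGITS:
            self.digits += key    # fourth and later digits are dropped

    def tempo(self, default=120):
        return int(self.digits) if self.digits else default


entry = TempoEntry()
for key in "1234#5":
    entry.press(key)
print(entry.tempo())  # 123
```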

The purpose of the switch was to switch between tempo input mode and record mode.

When the switch is flipped from tempo input mode to record mode, the LED begins blinking and the Python script begins listening for messages.

If a tempo has been set by the user, the recording tempo is that user-set tempo.

If the user did not enter a tempo, the recording tempo defaults to 120 BPM.

When the switch is flipped from record mode to tempo input mode, the previous tempo is cleared.

The user can then set a new tempo for the next recording.

Software: Closing Thoughts

As a whole, we ran into little trouble implementing our full software structure and thread organization to execute the tasks we desired.

Although the FFT is computationally expensive, the complexity and computation time of our other threads were relatively low, which allowed everything to function smoothly from the user interface perspective with no noticeable delays in the timer and metronome threads.

We also did not run into any problems with running out of memory on the PIC32, despite the fact that increasing the FFT resolution used a lot more memory.

In addition, the Python script interacted well with the UART serial interface.

The most tedious part of the software was designing the user interface, as it required us to meticulously plan out where everything should be drawn on the screen.